PCI Express 2.0: Scalable Interconnect Technology, TNG

by Kris Boughton on January 5, 2008 2:00 AM EST - Posted in CPUs

PCI Express Link Speeds and Bandwidth Capabilities

PCI Express uses a highly scalable architecture that delivers high bandwidth with a relatively low pin count, simplifying board design while allowing for smaller interface footprints. It does this with low-voltage differential signaling (LVDS) pairs: a simple two-wire connection that transfers 1 bit per clock (therefore 1GT/s is equal to 1Gbps). One pair per direction makes simultaneous bi-directional signaling possible, which effectively doubles the aggregate throughput to 2 bits per cycle. Together these four pins comprise a single "lane." No additional pins are needed for data transfer because the clock is embedded in the data stream on these same pins through an encoding scheme known as 8b/10b. Without getting into too much detail, this means that every 8 bits of data are transferred as a 10-bit symbol. This eliminates the need to route separate traces for clock signals, but it comes at a cost: the 2 extra bits add 25% overhead to each 8 bits of data, which is the same as saying that the maximum usable throughput is 20% lower than the raw signaling rate. Because the signaling rate is so high (2.5GHz), a single PCI Express lane can transfer up to 500MB/s of bi-directional data (250MB/s in each direction) with just four pins, compared to the legacy PCI bus, which transfers 133MB/s of data using 32 pins. Higher data transfer rates require either numerous parallel traces or increased clocking rates - in this case the tradeoff has clearly been made in favor of a low pin count.
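The arithmetic above can be checked with a few lines of Python. This is only a sketch of the per-lane calculation; the function name `lane_bandwidth_mb_s` and its parameters are made up for illustration and are not part of any PCI Express tooling.

```python
def lane_bandwidth_mb_s(transfer_rate_gt_s, payload_bits=8, symbol_bits=10):
    """Usable bandwidth of one lane in one direction, in MB/s.

    Assumes 1 transfer carries 1 bit and that 8b/10b encoding is in use
    (8 payload bits carried in every 10-bit symbol).
    """
    raw_bits_per_s = transfer_rate_gt_s * 1e9
    usable_bits_per_s = raw_bits_per_s * payload_bits / symbol_bits
    return usable_bits_per_s / 8 / 1e6  # bits -> bytes -> megabytes

# The two ways of stating the 8b/10b cost are the same 2 extra bits,
# measured against different baselines.
overhead_vs_payload = (10 - 8) / 8   # 0.25: extra bits relative to the data
loss_vs_raw_rate = (10 - 8) / 10     # 0.2: share of the raw rate that is lost

per_direction = lane_bandwidth_mb_s(2.5)  # 250.0 MB/s each way
both_directions = 2 * per_direction       # 500.0 MB/s aggregate

if __name__ == "__main__":
    print(f"overhead relative to payload: {overhead_vs_payload:.0%}")
    print(f"loss relative to raw rate:    {loss_vs_raw_rate:.0%}")
    print(f"x1 lane, one direction:       {per_direction:.0f} MB/s")
    print(f"x1 lane, both directions:     {both_directions:.0f} MB/s")
```

Note that the 500MB/s figure counts both directions together; each direction on its own tops out at 250MB/s.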

Multiple lanes can be grouped through a method known as training, wherein the downstream device and the host negotiate how many lanes will be assigned. Although plugging a PCI Express card into a slot smaller than itself is not physically possible, plugging into a larger slot is. During training, the host simply queries the device for its maximum link speed and assigns resources as needed. (Keep in mind that link speed is a bit of a misnomer - the PCI Express physical link layer always operates at the same frequency; it's the number of assigned lanes that changes.) This becomes a little more complicated when the mechanical size of the slot does not match the host's maximum offered link speed. For example, many of today's X38-based motherboards include a third x16 slot that is only capable of providing x4 bandwidth (PCI Express 1.x) - more on why later. In this case, the installed device must be willing to operate at a reduced link speed. The PCI Express interface supports interconnect widths of x1, x2, x4, x8, x16, and x32. As an aside, PCI-E x32 slots are rarely seen because of their exceptional length, but thanks to PCI Express 2.0 we can now get the same bandwidth in a PCI-E x16 form factor.
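The outcome of that negotiation can be modeled very roughly as "the widest link both sides support." The sketch below is a deliberate simplification and not the actual training procedure; `negotiate_width` and its three parameters are hypothetical names used only to illustrate the mechanical-versus-electrical distinction described above.

```python
# Link widths listed in the article.
SUPPORTED_WIDTHS = (1, 2, 4, 8, 16, 32)

def negotiate_width(card_lanes, slot_mechanical_lanes, slot_electrical_lanes):
    """Return the link width a card ends up with in a given slot.

    card_lanes            -- lanes on the card's edge connector
    slot_mechanical_lanes -- physical length of the slot
    slot_electrical_lanes -- lanes the host actually wires to the slot
    """
    for lanes in (card_lanes, slot_mechanical_lanes, slot_electrical_lanes):
        if lanes not in SUPPORTED_WIDTHS:
            raise ValueError(f"x{lanes} is not a supported link width")
    if card_lanes > slot_mechanical_lanes:
        raise ValueError("card does not physically fit in this slot")
    # The link can be no wider than the narrower of the two ends.
    limit = min(card_lanes, slot_electrical_lanes)
    return max(w for w in SUPPORTED_WIDTHS if w <= limit)

if __name__ == "__main__":
    # x16 graphics card in the third slot of an X38 board:
    # x16 mechanical, but only x4 electrical.
    print(negotiate_width(16, 16, 4))
    # x4 card in a full x16 slot still only uses its own four lanes.
    print(negotiate_width(4, 16, 16))
```

The first call models the X38 example: the card fits, but it has to accept an x4 link.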

The part of the installed card's edge connector to the left of the key notch is always the same, no matter the card. Power, ground, reference voltages, and pads for control, training, and link maintenance are located here and are the minimum required for operation. Data transfers to and from the device use repeating blocks of pads, each block carrying the signals that comprise a single lane. The longer the slot extends to the right of the keyway, the higher the speed it offers (assuming that it does in fact offer a maximum link speed congruent with its mechanical size). This makes determining a device's default link speed quite easy - a quick look at the length of the edge connector tells you everything you need to know.

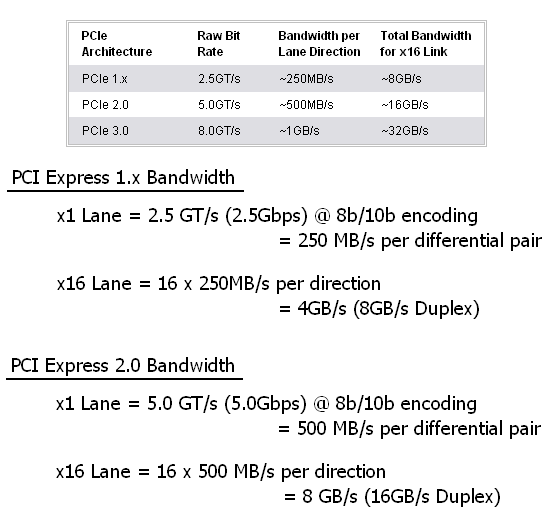

The table below compares PCI Express 1.x with 2.0 as well as 3.0 (which is now in the development stage - expect to see products based on this revision sometime in 2010). PCI Express 2.0 builds upon PCI Express 1.x primarily by doubling the clock rate to 5.0GHz (up from 2.5GHz). This brings to light an important point: earlier we noted that the PCI Express physical link layer always operates at the same speed. This is true except when a PCI Express 1.x device is installed in a PCI Express 2.0 compliant slot: although the host is capable of the higher signaling frequency, the device is not, so the link falls back to the slower (2.5GT/s) rate. Note how the 8b/10b encoding overhead is factored into the actual usable bandwidth calculation.
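As a rough illustration of how those figures come about, the sketch below derives per-direction bandwidth from the signaling rate and lane count, and models the 1.x fallback as taking the slower of the two rates. The names `link_rate_mt_s` and `link_bandwidth_mb_s` are hypothetical, and only the 1.x and 2.0 rates quoted in the text are used.

```python
# Signaling rates from the article, in megatransfers per second.
PCIE_1X_RATE_MT_S = 2500
PCIE_2_0_RATE_MT_S = 5000

def link_rate_mt_s(host_rate_mt_s, device_rate_mt_s):
    """Both ends must support the rate, so the slower side wins."""
    return min(host_rate_mt_s, device_rate_mt_s)

def link_bandwidth_mb_s(lanes, rate_mt_s):
    """Usable bandwidth in one direction, in MB/s, assuming 8b/10b encoding."""
    usable_mbit_s = lanes * rate_mt_s * 8 // 10  # strip the 2 overhead bits per symbol
    return usable_mbit_s // 8                    # bits -> bytes

if __name__ == "__main__":
    for name, rate in (("1.x", PCIE_1X_RATE_MT_S), ("2.0", PCIE_2_0_RATE_MT_S)):
        for lanes in (1, 4, 8, 16):
            print(f"PCIe {name} x{lanes}: {link_bandwidth_mb_s(lanes, rate)} MB/s per direction")

    # A 1.x card in a 2.0 slot runs at the 1.x rate.
    fallback = link_rate_mt_s(PCIE_2_0_RATE_MT_S, PCIE_1X_RATE_MT_S)
    print(f"1.x card in 2.0 slot: {fallback} MT/s")
```

Under these assumptions a 2.0 x16 link and a 1.x x32 link come out to the same per-direction figure, which is why the shorter slot can now stand in for the longer one.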

Using PCI Express 2.0, motherboard designers can now either offer double the bandwidth in an equivalent size slot or create smaller layouts without sacrificing performance. In any case, this additional design flexibility paves the way for significant improvements in future products. PCI Express 3.0 will likely double the bandwidth provided by the previous generation again. You may have noticed, though, that the change must come from something more than just a decrease in the cycle time. It will be due to a change in the encoding scheme - PCI-E 3.0 will stop using 8b/10b encoding, and whether or not the new scheme is truly more efficient than that used today remains to be seen. Expect the same level of backward compatibility, though, as PCI-SIG is already assuring us that our then-ancient PCI Express 1.x cards will run without difficulty in a PCI Express 3.0 slot. Let's take a closer look at exactly why they can make such a bold claim.

21 Comments


rogerdpack - Monday, July 23, 2012 - link

So shouldn't the article list PCIe 2.0 throughput as 400 MB/s because of the 8b/10b encoding overhead?