Overclocking Intel's New 45nm QX9650: The Rules Have Changed

by Kris Boughton on December 19, 2007 2:00 AM EST- Posted in

- CPUs

Testing System Stability with Prime95

For over 10 years a site operated by a group called the Great Internet Mersenne Prime Search (GIMPS) has sponsored one of the oldest and longest-running distributed computer projects. A Mersenne prime is a prime of the form 2P-1 where "P" is a prime number (an integer greater than one is called a prime number if its only divisors are one and itself). At this time there are only 44 known Mersenne primes. The simple client program, called Prime95, originally released and made available for public download in early January 1996, allows users interested in participating in the search for other Mersenne primes the opportunity to donate spare CPU cycles to the cause. Although few overclockers participate in GIMPS, many use Prime95 for testing overall system stability. While there are other programs available for download designed specifically for this reason, few can match the ease of use and clean interface provided by Prime95.

The load placed on the processor is quite intense (nominally 100% on each core, as reported by Windows) and if there are any weaknesses in the system Prime95 will quickly find them and alert the user. Additionally, newer versions of the program automatically detect the system's processor core count and run the appropriate number of threads (one per core), ensuring maximum system load with the need for little to no user input. It is important to note that high system loads can stress the power supply unit (PSU), motherboard power circuit components, and other auxiliary power delivery systems. Ultimately, the user is accountable for observing responsible testing practices at all times.

Failures can range from simple rounding errors and system locks to the more serious complete system shutdown/reset. As with most testing the key to success comes in understanding what each different failure type means and how to adjust the incorrect setting(s) properly. Because Prime95 tests both the memory subsystem and processor simultaneously, it's not always clear which component is causing the error without first developing a proper testing methodology. Although you may be tempted to immediately begin hunting for your CPU's maximum stable frequency, it's better to save this for later. First efforts should focus on learning the limits of your particular motherboard's memory subsystem. Ignoring this recommendation can lead to situations in which a system's instability is attributed to errors in the wrong component (i.e., the CPU instead of the MCH or RAM).

To begin, we first start by identifying personal limits regarding measurable system parameters. By bounding the range of acceptable values, we protect ourselves from needless component damage - or even worse, complete failure. We have listed below the parameters we consider critical when overclocking any system. In most cases, monitoring and limiting these values will help to ensure trouble-free testing.

Overall System Power Consumption: This is the system's total power draw as measured from the wall. As such, this is the power usage sum of all components as well as power used by the PSU in converting household AC supply current to the DC rails used by the system. P3 International makes a wonderful and inexpensive product called the Kill-A-Watt that can monitor your system's instantaneous power draw (Watts), volts-amps (VA) input, PSU input voltage (V), PSU input current (A), and kW-hr power usage.

A conservative efficiency factor of about 80% works for most of today's high-quality PSUs - meaning that 20% of the total system power consumption goes to power conversion losses in the PSU alone. (Although absolute PSU efficiency is a function of load, we estimate this value here as a single rating for the sake of simplicity.) Knowing this we can estimate how much power the system is really using and how much is nothing more that heat dissipated by the power supply. For example, if your system draws 300W under load then 240W (0.8 x 300W) is the load on the output of the PSU and the remaining 60W (300W - 240W) leaves the PSU as heat. It is important to note that manufacturers rate PSUs based on their power delivery capabilities (output) and not their maximum input power.

Using what we have learned so far, we can calculate the maximum allowable wall power draw for any PSU. Consider the case of a high-quality 600W unit with a conservative efficiency rating of 80%. First find 90% of the maximum output rating (0.9 x 600W = 540W) - this allows us to limit ourselves to at least a small margin below our PSU's maximum load. Now divided that by 0.8: 540W / 0.8 = 675W. For a good 600W PSU we feel comfortable in limiting ourselves to a maximum sustained wall power draw of about 675W as read by our Kill-A-Watt. (Should you decide to use a lower quality power supply, you will get lower efficiency and you won't want to load the PSU as much. So, 70% efficiency and a maximum load of 75% of the rated 600W would yield 643W… only your components are getting far less actual power and the PSU needs to expel a lot more heat. That's why most overclockers value a good PSU.)

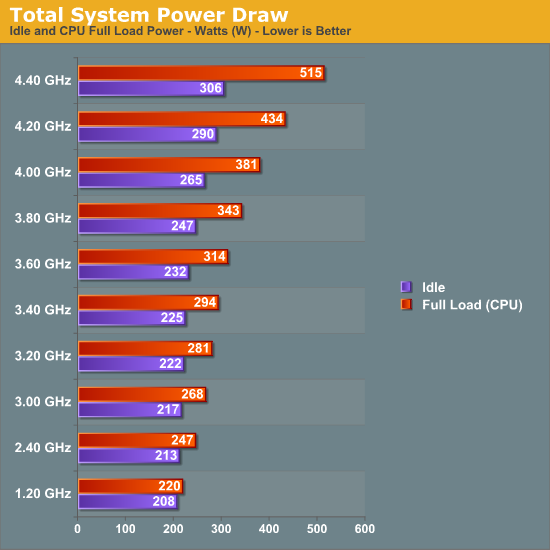

Our PSU's total power draw as a function of CPU speed (full load)

Keep in mind that the power consumption values based on CPU testing alone will not be representative of total system load when running graphics intensive loads, like 3D gaming. The GPU(s) also contribute significantly to this value. Be sure to account for this when establishing your upper power consumption limit. Alternatively, buy a more powerful PSU as overstressing one is a great way to cause a failure.

Processor Voltage (Vcore) and Core Temperatures: As process technology node sizes decrease, so do maximum recommended Vcore values. Better cooling can sometimes allow for higher values but only to the extent that temperatures remain manageable. Even with high-end water-cooling, CPU voltages in excess of ~1.42V with 45nm quad-cores result in extremely elevated full-load core temperatures, especially when pushing above 4.2GHz or higher. Those using traditional air-cooling will more than likely find their limits somewhere around 1.36V or even lower.

Intel's Core 2 family of processors is incredibly resilient in the face of abuse when it comes to Vcore values greater than the maximum specification - damaging your CPU from excessive core voltage will be difficult. In some cases, heat will be the limiting factor. We'll go into more detail later in the article when we discuss the effect of frequency and voltage scaling on maximum sustained core temperatures.

Memory Voltage (VDimm): Unlike CPUs, current memory modules are extremely sensitive to overvoltage conditions and may begin to exhibit early signs of premature failure after relatively short periods of abuse. Most high-performance memory manufactures go to great lengths testing their products to maximum warranted voltages. Our recommendation, which never changes, is that you observe these specifications at all times. For those dealing with conservatively rated memory the following are goods rules of thumb when it comes to memory voltage: 2.4V maximum for DDR2 and 2.1V maximum for DDR3. Exceeding these voltages will more than likely accelerate degradation. Subjecting memory to voltages well in excess of these values has caused almost immediate failure. Remember, just because your motherboard BIOS offers ridiculously high memory voltages doesn't mean you need to test them out.

Northbridge Voltage (Vmch): The Memory Controller Hub (MCH), sometimes referred to as the Northbridge, is responsible for routing all I/O signals and data external to the CPU. Interfaced systems include the memory via the Front Side Bus (FSB), graphics card(s) over PCI Express, and the Southbridge using a relatively low-bandwidth DMI interface. Portions of the MCH run 1:1, 2:1 and even 4:1 with the Front Side Bus (FSB) meaning that just like CPU overclocking, raising the FSB places an increased demand on the MCH silicon.

Sustained MCH voltages in excess of about 1.7V (for X38) will surely cause early motherboard failures. Because Intel uses 90nm process technology for X38, we find that voltages higher than those applied to 65/45nm CPUs are generally fine. During the course of our X38 testing we found the chipset able to drive two DIMM banks (2x1GB) at 400MHz FSB at default voltage (1.25V) while four banks (4x1GB) required a rather substantial increase to 1.45V. Besides FSB and DIMM bank population levels, a couple of other settings which significantly influence minimum required MCH voltages are Static Read Control Delay (tRD) - often called Performance Level - Command Rate selection (1N versus 2N), and the use of non-integer FSB:DIMM clocking ratios. Our recommendation is to keep this value below about 1.6V when finding your maximum overclock.

56 Comments

View All Comments

Lifted - Wednesday, December 19, 2007 - link

Very impressive. Seems more like a thesis paper than a typical tech site article. While the content on AT is of a higher quality than the rest of the sites out there, I think the other authors, founder included, could learn a thing or two from an article like this. Less commentary/controversy and more quality is the way to go.AssBall - Wednesday, December 19, 2007 - link

Shouldn't page 3's title be "Exlporing the limits of 45nm Halfnium"? :Dhttp://www.webelements.com/webelements/elements/te...">http://www.webelements.com/webelements/elements/te...

lifeguard1999 - Wednesday, December 19, 2007 - link

"Do they worry more about the $5000-$10000 per month (or more) spent on the employee using a workstation, or the $10-$30 spent on the power for the workstation? The greater concern is often whether or not a given location has the capacity to power the workstations, not how much the power will cost."For High Performance Computers (HPC a.k.a. supercomputers) every little bit helps. We are not only concerned about the power from the CPU, but also the power from the little 5 Watt Ethernet port that goes unused, but consumes power. When you are talking about HPC systems, they now scale into the tens-of-thousands of CPUs. That 5 Watt Ethernet port is now a 50 KWatt problem just from the additional power required. That Problem now has to be cooled as well. More cooling requires more power. Now can your infrastructure handle the power and cooling load, or does it need to be upgraded?

This is somewhat of a straw-man argument since most (but not all) HPC vendors know about the problem. Most HPC vendors do not include items on their systems that are not used. They know that if they want to stay in the race with their competitors that they have to meet or exceed performance benchmarks. Those performance benchmarks not only include how fast it can execute software, but also how much power and cooling and (can you guess it?) noise.

In 2005, we started looking at what it would take to house our 2009 HPC system. In 2007, we started upgrades to be able to handle the power and cooling needed. The local power company loves us, even though they have to increase their power substation.

Thought for the day:

How many car batteries does it take to make a UPS for a HPC system with tens-of-thousands of CPUs?

CobraT1 - Wednesday, December 19, 2007 - link

"Thought for the day:How many car batteries does it take to make a UPS for a HPC system with tens-of-thousands of CPUs?"

0.

Car batteries are not used in neither static nor rotary UPS's.

tronicson - Wednesday, December 19, 2007 - link

this is a great article - very technical, will have to read it step by step to get it all ;-)but i have one question that remains for me.. how is it about electromigration with the very filigran 45nm structures? we have here new materials like the hafnium based high-k dielectricum, guess this may improove the resistance agains em... but how far may we really push this cpu until we risk very short life and destruction? intel gives a headroom until max 1.3625V .. well what can i risk to give with a good waterchill? how far can i go?

i mean feeding a 45nm core p.ex. 1,5V is the same as giving a 65nm 1,6375! would you do that to your Q6600?

eilersr - Wednesday, December 19, 2007 - link

Electromigration is an effect usually seen in the interconnect, not in the gate stack. It occurs when a wire (or material) has a high enough current density that the atoms actually move, leading to an open circuit, or in some cases, a short.To address your questions:

1. The high-k dielectric in the gate stack has no effect on the resistance of the interconnect

2. The finer features of wires on a 45nm process do have a lower threshold to electromigration effects, ie smaller wires have a lower current density they can tolerate before breaking.

3. The effects of electromigration are fairly well understood at this point, there are all kinds of automated checks built in to the design tools before tapeout as well as very robust reliability tests performed on the chips prior to volume production to catch these types of reliability issues.

4. The voltage a chip can tolerate is limited by a number of factors. Ignoring breakdown voltages and other effects limited by the physics of transistor operation, heat is where most OC'ers are concerned. As power dissipation is most crudely though of in terms of CVf^2 (capacitance times voltage times frequency-squared), the reduced capacitance in the gate due to the high-k dielectric does dramatically lower power power dissipation, and is well cited. The other main component in modern CPU's is the leakage, which again is helped by the high-k dielectric. So you should expect to be able to hit a bit higher voltage before hitting a thermal envelope limitation. However, the actual voltage it can tolerate is going to depend on the CPU and what corner of the process it came from. In all, there's no general guideline for what is "safe". Of course, anything over the recommended isn't "safe", but the only way you'll find out, unfortunately, is trial and error.

eilersr - Wednesday, December 19, 2007 - link

Doh! Just noticed my own mistake:high-k dielectric does not reduce capacitance! Quite the contrary, a high-k dielectric will have higher capacitance if the thickness is kept constant. Don't know what I was thinking.

Regardless, the capacitance of the gate stack is a factor, as the article mentioned. I don't know how the cap of Intel's 45nm gate compares with that of their 65nm gate, but I would venture it is lower:

1. The area of the FET's is smaller, so less W*L parallel plate cap.

2. The thickness of the dielectric was increased. Usually this decreases cap, but the addition of high-k counter acts that. Hard to say what balance was actually achieved.

This is just a guess, only the process engineers no for sure :)

kjboughton - Wednesday, December 19, 2007 - link

Asking how much voltage can be safetly applied to a (45nm) CPU is a lot like asking which story of a building can you jump from without the risk of breaking both legs on the landing. There's inherent risk in exceeding the manufacturer's specification at all and if you asked Intel what they thought I know exactly what they would say -- 1.3625V (or whatever the maximum rated VID value is). The fact of the matter is that choices like these can only be made by you. Personally, I feel exceeding about 1.4V with a quad 45nm CPU is a lot like beating your head against a wall, especially if your main concern is stability. My recommendation is that you stay below this value, assuming you have adequate cooling and can keep your core temperatures in check.renard01 - Wednesday, December 19, 2007 - link

I just wanted to tell you that I am impressed by your article! Deep and practical at the same time.Go on like this.

This is an impressive CPU!!

regards,

Alexander

defter - Wednesday, December 19, 2007 - link

People stop posting silly comments like: "Intel's TDP is below real power consumption, it isn't comparable to AMD's TDP".Here we have a 130W TDP CPU consuming 54W under load.