NVIDIA's 3-way SLI: Can we finally play Crysis?

by Anand Lal Shimpi on December 17, 2007 3:00 PM EST - Posted in GPUs

NVIDIA is in a difficult position. On one front it faces AMD's chipset division, which is fighting hard to make its own chipsets the platform of choice for AMD processors. On the other front it has Intel, the enemy of its enemy, and a very dangerous partner itself. Intel hasn't been quiet about its plans to dominate the GPU market, but those plans are still a couple of years off; until then, Intel will gladly allow NVIDIA to make chipsets for its processors.

As a company, NVIDIA needs to stay relevant in the market. In the worst-case scenario, AMD and Intel would each make their own chipsets and graphics cards, leaving NVIDIA with nothing to do. The reality is that NVIDIA still has the best graphics cards on the market, and neither AMD nor Intel is anywhere close to taking the crown yet. The chipset business is in more imminent danger, but NVIDIA does have one trick up its sleeve: SLI.

NVIDIA has some very desirable graphics cards, and it has a tremendous brand in those three letters. Of course, SLI only works on NVIDIA chipsets, so it's no surprise to see NVIDIA trying to add even more value to the SLI proposition.

Over the past two years, GPU manufacturers have started to run into the same sort of thermal walls that Intel hit during the Pentium 4 days. Future GPU designs will be more focused on power consumption and performance per watt, and while that won't kill the very high end graphics market, it will undoubtedly change it. Notice that the first two G92-based products NVIDIA launched were both targeted at the mid-range and lower high-end segments; there were no 8800 GTX/Ultra replacements in the cards.

NVIDIA may end up pushing the envelope not by introducing single, very powerful GPUs, but by SLI-ing lesser GPUs together. That brings us to today's topic: 3-way SLI.

As the name implies, 3-way SLI is like conventional 2-card SLI, but with three cards.

The requirements for 3-way SLI are simple: you need an NVIDIA 680i or 780i motherboard, and you need three 8800 GTX or 8800 Ultra cards. SLI support continues to be the biggest reason to purchase an NVIDIA-based motherboard, so it's no surprise that 3-way SLI doesn't work on any competitor's chipsets. With three cards you need two SLI connectors per card, which means none of the recently released G92-based cards will cut it.

The graphics card limitations are quite possibly the most shocking, because the 8800 GTX and Ultra are still based on the old G80 GPU, which runs significantly hotter than the new 65nm G92 used in the 8800 GT and GTS 512. Unfortunately, NVIDIA outfitted those new G92 cards with only a single SLI connector, so 3-way SLI was out of the question from the start. Plus, NVIDIA needed some reason to keep selling the 8800 GTX and Ultra.

Power supply and cooling requirements are also pretty stringent; NVIDIA lists the minimum power supply requirements as:

And a list of 3-way SLI certified power supplies follows:

We actually used an OCZ 1000W unit without any problems (including two 4-pin Molex to 6-pin PCIe power adapters to feed the third card), but we'd recommend sticking to NVIDIA's list if you want to be safe.

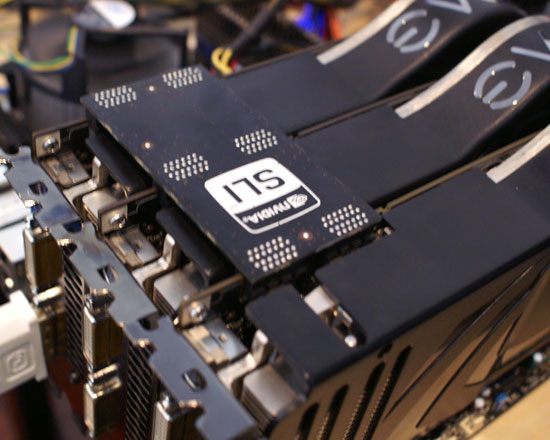

The three cards are connected using a new SLI bridge card that ships with all 780i motherboards:

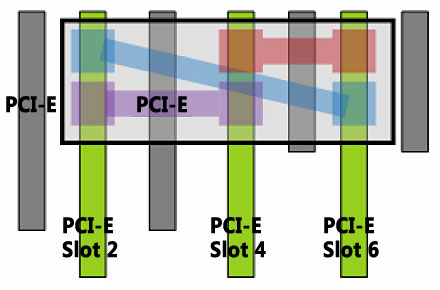

If you don't have this card, you can jerry-rig a bridge using regular SLI flex cables in the following configuration:

48 Comments


chizow - Tuesday, December 18, 2007

Derek Wilson in 8800GT Review:

Completely valid point about using 32-bit vs. 64-bit, and somewhat of a hot topic over in the video forums. Honestly, you have $5000+ worth of hardware in front of you, yet getting a 64-bit version of Vista running benchmarks at the resolutions/settings where 64-bit and 2GB+ would help the most is too difficult? C'mon guys, seriously, this is the 2nd sub-par review in a row (the 512 GTS review was poor too).

Also, could you clarify the bit about 680i boards being able to accomplish the same thing? Exactly what spurred this change in Tri-SLI support? Driver support? It seems Anand used 169.08, but from the patch notes I thought 169.25 was the first driver to officially support Tri-SLI. Or has it always been supported, and the 780i is just hyping up a selling point that has been around for months? Also, the 780i article hinted there would be OC'ing tests with the chipset, and I don't see any here. Are they going to come in a different article? Thanks.

blppt - Tuesday, December 18, 2007

Yeah, seriously. Especially since the 64-bit Crysis executable does away with the texture streaming engine entirely... how can you make a serious "super high end ultimate system" benchmark without using the most optimized, publicly available version of the game? Is it that the 64-bit Vista drivers don't support 3-way SLI yet? Otherwise, putting together a monster rig with three $500 video cards and then testing it with 32-bit Vista seems rather silly...

Ryan Smith - Tuesday, December 18, 2007

Address space consumption isn't 1:1 with video memory; it's only correlated, and even less so in SLI configurations, where some data is replicated between the cards. I'm not sure what exact value Anand had, but I'm confident he had more than 2GB of free address space.

JarredWalton - Tuesday, December 18, 2007

Testing at high resolutions with ultra-insane graphics settings serves one purpose: it makes hardware like Quad-SLI and Tri-SLI appear to be much better than it really is. NVIDIA recommended 8xAA for Quad-SLI back in the day just to make sure the difference was large. It did make QSLI look a lot better, but when you stopped to examine the sometimes sub-20 FPS results, it was far less compelling.

Run at 8xAA on a 30" LCD at native resolution, and it's more than a little difficult to see the image quality difference, while you sometimes get half the frame rate of 4xAA. A far better solution than maxing out every possible setting is to increase quality where it's useful. Even 4xAA is debatable at 2560x1600 (certainly not required), and it's the first thing I turn off when my system is too slow for a game. Before bothering with 8xAA, try transparency supersampling AA. It usually addresses the same issue with much less impact on performance.

At the end of the day, it comes down to performance. If you can't enable 8xAA while keeping frame rates above ~40 FPS (and minimums above 30 FPS), I wouldn't touch it. I play many games with 0xAA and rarely notice aliasing on a 30" LCD. Individual pixels are smaller than on 24", 20", 19", etc. LCDs, so it doesn't matter as much, and the high resolution compensates in other areas. Crysis at 2560x1600 with Very High settings? The game is already a slide show, so why bother?

0roo0roo - Tuesday, December 18, 2007

Faster is faster; the best is expensive and sometimes frivolous. At that price point you aren't thinking like a budget buyer anymore. Like exotic cars, you can't be that rational about it. It's simply power... power NOW.

crimson117 - Tuesday, December 18, 2007

If it's true that it's all about power, then just find the most expensive cards you can buy, install them, and don't bother playing anything. Also, tip your salesman a few hundred bucks to make the purchase that much more expensive.

0roo0roo - Tuesday, December 18, 2007

Look, it's not like you don't get any advantage from it. It's not across the board at this point, but it's still a nice boost for any 30" gamer.

Seriously, there are handbags that cost more than this SLI stuff.

JarredWalton - Tuesday, December 18, 2007

Next up from AnandTech: Overclocked Handbags! Stay tuned; we're still working on the details...