Unreal Tournament 3 CPU & High End GPU Analysis: Next-Gen Gaming Explored

by Anand Lal Shimpi & Derek Wilson on October 17, 2007 3:35 AM EST - Posted in GPUs

UT3 Teaches us about CPU Architecture

For our first real look at Epic's Unreal Engine 3 on the PC, we've got a number of questions to answer. First and foremost we want to know what sort of CPU requirements Epic's most impressive engine to date commands.

Obviously the GPU side will be more important, but it's rare that we get a brand new engine with which to really evaluate CPU architecture, so we took this opportunity to do just that. While we've had other UE3-based games in the past (e.g. Rainbow Six: Vegas, Bioshock), this is the first Epic-created title at our disposal.

The limited benchmarking support of the UT3 Demo beta unfortunately doesn't make it the best CPU test. The built-in flybys don't involve much real-world physics: the CPU spends its time calculating spinning weapons and the position of the camera flying around, with no explosions or damage to take into account. The final game may have a different impact on CPU usage, but we'd expect things to get more CPU-intensive, not less, in real-world scenarios. We'll do the best we can with what we have, so let's get to it.

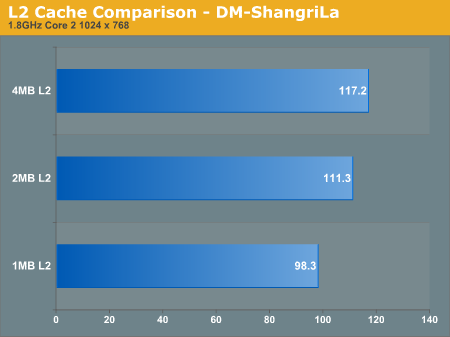

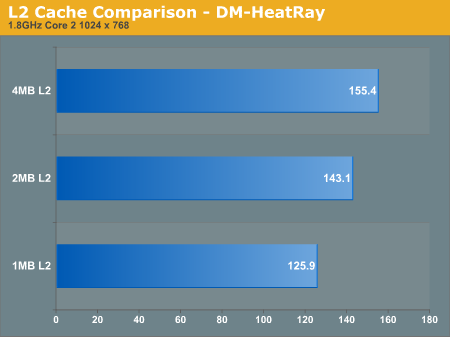

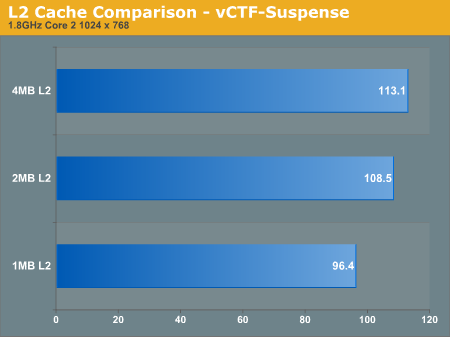

Cache Scaling: 1MB, 2MB, 4MB

One thing we noticed about the latest version of Valve's Source engine is that it is very sensitive to cache sizes and memory speed in general, which is important to realize given that there are large differences in cache size between Intel's three processor tiers (E6000, E4000 and E2000).

The Pentium Dual-Core chips are quite attractive these days, especially thanks to how overclockable they are. If you look back at our Midrange CPU Roundup you'll see that we fondly recommend them, especially when mild overclocking gives you the performance of a $160 chip out of a $70 one. The problem is that if newer titles are more dependent on larger caches then these smaller L2 CPUs become less attractive; you can always overclock them, but you can't add more cache.

To see how dependent Unreal Engine 3 and the UT3 demo are on low-latency memory accesses, we ran 4MB, 2MB and 1MB L2 Core 2 processors at 1.8GHz and compared how performance scales.

From 1MB to 2MB there's a pretty hefty 12-13% increase in performance at 1.8GHz, while the step from 2MB to 4MB is more muted at 4-8.5%. An overall increase of roughly 20% simply due to L2 cache size on Intel CPUs at 1.8GHz is impressive. We note the clock speed because the gap will only widen at higher clock speeds; faster CPUs are more data hungry and thus need larger caches to keep their execution units adequately fed.
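To make the "overall" figure concrete, here is a minimal sketch of how the two steps combine. It assumes the per-step gains multiply, and it pairs the low ends and the high ends of the quoted ranges together, which may not correspond to any single test; the function name `compound_gain` is ours, purely for illustration.

```python
def compound_gain(*step_gains_pct):
    """Combine successive percentage gains multiplicatively."""
    factor = 1.0
    for gain in step_gains_pct:
        factor *= 1.0 + gain / 100.0
    return (factor - 1.0) * 100.0


# Percentages quoted in the text: 1MB -> 2MB, then 2MB -> 4MB.
# Pairing low with low and high with high is an assumption.
low = compound_gain(12.0, 4.0)
high = compound_gain(13.0, 8.5)

print(f"1MB -> 4MB: {low:.1f}% to {high:.1f}%")
```

Under those assumptions the combined 1MB to 4MB gain lands between about 16.5% and 22.6%, which brackets the roughly 20% figure quoted above.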

In order to close the performance deficit, you'd have to run a Pentium Dual-Core at almost a 20% higher frequency than a Core 2 Duo E4000, and around a 35% higher frequency than a Core 2 Duo E6000 series processor.
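As a rough illustration of what those percentages mean in clock speed, the sketch below applies them to the 1.8GHz test frequency. Using 1.8GHz as the baseline is our assumption, and since the text says "almost" 20% and "around" 35%, the results are approximate; `required_clock` is a hypothetical helper, not something from the benchmark.

```python
BASE_CLOCK_GHZ = 1.8  # assumption: the 1.8GHz clock used in the cache test


def required_clock(base_ghz, extra_pct):
    """Clock speed that is extra_pct percent higher than base_ghz."""
    return base_ghz * (1.0 + extra_pct / 100.0)


# Percentages as quoted in the text (approximate).
vs_e4000 = required_clock(BASE_CLOCK_GHZ, 20.0)
vs_e6000 = required_clock(BASE_CLOCK_GHZ, 35.0)

print(f"To match a 1.8GHz E4000: about {vs_e4000:.2f}GHz")
print(f"To match a 1.8GHz E6000: about {vs_e6000:.2f}GHz")
```

With that baseline, a Pentium Dual-Core would need to run at roughly 2.16GHz and 2.43GHz respectively.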

72 Comments

retrospooty - Wednesday, October 17, 2007 - link

The demo is not full detail, it's a playable test with lower image settings than the final game. Wait and see the final before we see what it's "supposed" to look like.

shabby - Wednesday, October 17, 2007 - link

If it's really going to look like these pics then this performance review is useless, fps will drop by half. I'm hoping you're right, but I'm betting Epic simply polished up those pics to wow everyone.

imaheadcase - Wednesday, October 17, 2007 - link

Yah, I downloaded the demo. I was really disappointed. It gets boring really fast, like Quake Wars does. Nothing really innovative in terms of graphics, or fun factor, or "repeatability" over the long term.

I think TF2 ruined all these games. lol

Pjotr - Wednesday, October 17, 2007 - link

This text does not match the graphs posted, where the max gain was 4.4% for ShangriLa, the other two tests showed close to 0% difference. Analysis mistake, graph error, typo or what?

Frallan - Wednesday, October 17, 2007 - link

Well, since many of us today (yes, even I bought one) have started to use lappys, and there are ones out now on which it is possible to play, a view on how an 8600 GT w 512 DDR2 and 256 DDR3 does would be nice, from 1680*1050 downwards. There are tons of these in lappys now, so... pretty please :)

Lifted - Wednesday, October 17, 2007 - link

Seconded.

Blacklash - Wednesday, October 17, 2007 - link

Just grab ATiTool from Techpowerup! and reduce the clocks of the XT.

Interesting article, and as someone said you have a chart with text referring to AMD above it and the actual CPU numbers labelled as Intel. The last four charts on the clock for clock page say AMD yet list Intel CPUs.

dvinnen - Wednesday, October 17, 2007 - link

Last time I checked, ATiTool doesn't support the HD 2900xt. Only one that I know of is a buggy piece of software from AMD to change clock speeds.

NullSubroutine - Wednesday, October 17, 2007 - link

Ati Tools works with 2900, I use it for my overclocking/fan control.

Ati Tray Tools does not work with the 2900. They are two different programs, Ati Tools is mainly for the things I use them for, while Tray Tools is a replacement for Catalyst Control Center.

Bjoern77 - Wednesday, October 17, 2007 - link

why bench the 2900 pro? is there a 2900pro which can't be clocked back to xt level? :)

I'm glad i got a 2900pro for myself, regarding these benches i get 95% performance of a 8800gtx for 50% of its price.