HD Video Decode Quality and Performance Summer '07

by Derek Wilson on July 23, 2007 5:30 AM EST - Posted in GPUs

Transporter 2 Trailer (High Bitrate H.264) Performance

This is our heaviest hitting benchmark of the bunch. Nestled into the recesses of the Blu-ray version of The League of Extraordinary Gentlemen (a horrible move if ever there was one) is a very aggressively encoded trailer for Transporter 2. This ~2 minute trailer is encoded with an average bitrate of 40 Mbps. The bitrate actually peaks at nearly 54 Mbps by our observation. This pushes up to the limit of H.264 bitrates allowed on Blu-ray movies, and serves as an excellent test for a decoder's ability to handle the full range of H.264 encoded content we could see on Blu-ray discs.
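To put those bitrate figures in perspective, here is a rough back-of-the-envelope sketch. It assumes the "~2 minute" trailer runs exactly 120 seconds and that Mbps means 10^6 bits per second; the function name is only illustrative and the result is an approximation, not a measured file size.

```python
def stream_size_megabytes(avg_mbps: float, duration_s: float) -> float:
    """Approximate stream size in megabytes (10^6 bytes) from an average bitrate."""
    bits = avg_mbps * 1_000_000 * duration_s  # total bits over the clip
    return bits / 8 / 1_000_000               # bits -> bytes -> megabytes

AVG_MBPS = 40.0     # average bitrate quoted above
PEAK_MBPS = 54.0    # observed peak quoted above
DURATION_S = 120.0  # assumption: "~2 minute" taken as exactly 120 seconds

size_mb = stream_size_megabytes(AVG_MBPS, DURATION_S)
peak_ratio = PEAK_MBPS / AVG_MBPS

print(f"approx. video stream size: {size_mb:.0f} MB")
print(f"peak is {peak_ratio:.2f}x the average bitrate")
```

Under these assumptions the trailer's video stream alone is on the order of 600 MB, and the decoder has to absorb bursts roughly a third above the average rate.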

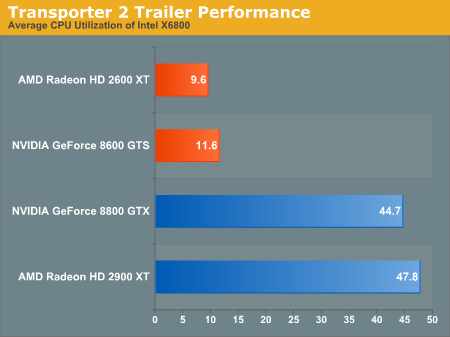

First up is our high performance CPU test (X6800):

Neither the HD 2900 XT nor the 8800 GTX features bitstream decoding on any level. They are fairly representative of older generation cards from AMD and NVIDIA (respectively) as we've seen in past articles. Clearly, a lack of bitstream decoding is not a problem for such a high end processor, and because end users generally pair high end processors with high end graphics cards, we shouldn't see any problems.

Lower CPU usage is always better. By using an AMD card with UVD, or an NVIDIA card featuring VP2 hardware (such as the 8600 GTS), we see a significant reduction in CPU overhead. While AMD does a better job at offloading the CPU (indicating less driver overhead on the part of AMD), both of these solutions enable users to easily run CPU intensive background tasks while watching HD movies.

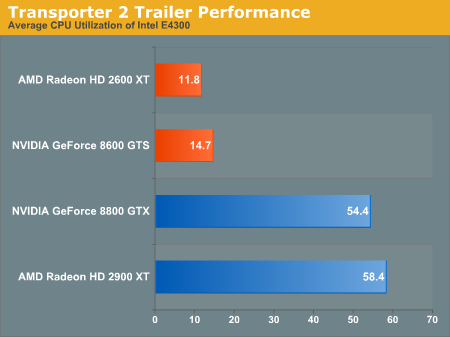

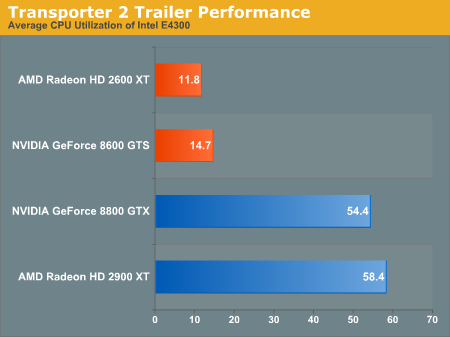

Next up is our look at an affordable current generation CPU (E4300):

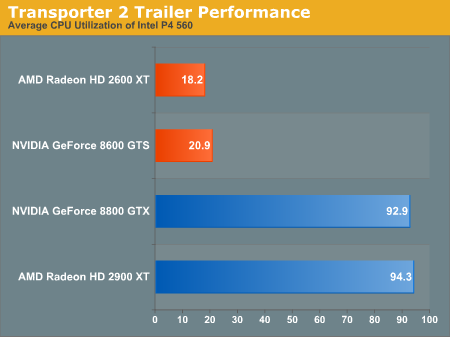

While CPU usage goes up across the board, we still have plenty of power to handle HD decode even without H.264 bitstream decoding on our high end GPUs. The story is a little different when we look at older hardware, specifically our Pentium 4 560 (with Hyper-Threading) processor:

Remember that these are average CPU utilization figures. Neither the AMD nor the NVIDIA high end parts are able to handle decoding in conjunction with the old P4 part. Our NetBurst architecture hardware just does not have what it takes even with heavy assistance from the graphics subsystem and we often hit 100% CPU utilization without one of the GPUs that support bitstream decoding.
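The gap between an average figure and the moments where the CPU is pegged can be illustrated with a small sketch. The sample values below are hypothetical and are not taken from our test runs; the `summarize` helper is likewise just an illustration of how an average can sit well under 100% while a good share of samples are saturated.

```python
from statistics import mean

def summarize(samples):
    """Return (average, peak, fraction of samples at or above 100%)."""
    avg = mean(samples)
    peak = max(samples)
    saturated = sum(1 for s in samples if s >= 100.0) / len(samples)
    return avg, peak, saturated

# Hypothetical per-second CPU utilization samples (percent), NOT measured data.
samples = [72.0, 85.0, 100.0, 100.0, 64.0, 91.0, 100.0, 78.0]

avg, peak, saturated = summarize(samples)
print(f"average: {avg:.2f}%  peak: {peak:.0f}%  time saturated: {saturated:.1%}")
```

With these made-up samples the average is 86.25%, yet the CPU is maxed out for more than a third of the time, which is when dropped frames would show up.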

Of course, bitstream decoding delivers in a HUGE way here, not only making HD H.264 movies watchable on older CPUs, but even giving us quite a bit of headroom to play with. We wouldn't expect people to pair the high end hardware with these low end CPUs, so the lack of bitstream decoding on the high end cards isn't much of a problem.

Clearly offloading CABAC and CAVLC bitstream processing for H.264 was the right move, as the hardware has a significant impact on the capabilities of the system on the whole. NVIDIA is counting on bitstream processing for VC-1 not really making a difference, and we'll take a look at that in a few pages. First up is another H.264 test case.

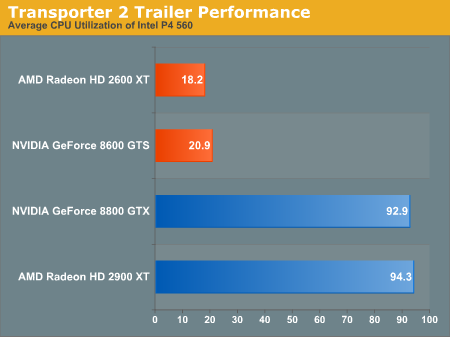

63 Comments

Wozza - Monday, March 17, 2008 - link

"As TV shows transition to HD, we will likely see 1080i as the choice format due to the fact that this is the format in which most HDTV channels are broadcast (over-the-air and otherwise), 720p being the other option."

I would like to point out that 1080i has become a popular broadcast standard because of its lower broadcast bandwidth requirements. TV shows are generally mastered in 1080p, then 1080i dubs are pulled from those masters and delivered to broadcasters (although some networks still don't work with HD at all, MTV for instance, which takes all deliveries on Digital Beta Cam). Pretty much the only people shooting and mastering in 1080i are live sports, some talk shows, reality TV and the evening news.

Probably 90% of TV and film related blu-rays will be 1080p.

redpriest_ - Monday, July 23, 2007 - link

Hint: They didn't. What anandtech isn't telling you is that NO nvidia card supports HDCP over dual-DVI, so yeah, you know that hot and fancy 30" LCD with gorgeous 2560x1600 res? You need to drop it down to 1280x800 to get it to work with an nvidia solution.

This is a very significant problem, and I for one applaud ATI for including HDCP over dual-DVI.

DigitalFreak - Wednesday, July 25, 2007 - link

Pwnd!

defter - Tuesday, July 24, 2007 - link

You are wrong. Check Anand's 8600 review; they clearly state that 8600/8500 cards support HDCP over dual-DVI.

DigitalFreak - Monday, July 23, 2007 - link

http://guru3d.com/article/Videocards/443/5/

Chadder007 - Monday, July 23, 2007 - link

I see the ATI cards lower CPU usage, but how are the power readings when the GPU is being used compared to the CPU?

chris92314 - Monday, July 23, 2007 - link

Does the HD video acceleration work with other programs, and with non Blu-ray/HD DVD sources? For example, if I wanted to watch an H.264 encoded .mkv file, would I still see the performance and image enhancements?

GPett - Monday, July 23, 2007 - link

Well, what annoys me is that there used to be all-in-wonder video cards for this kinda stuff. I do not mind a product line that has TV tuners and HD playback codecs, but not at the expense of 3d performance.

It is a mistake for ATI and Nvidia to try to include this stuff on all video cards. The current 2XXX and 8XXX generation of video cards might not have been as pathetic had the two GPU giants focused on actually making a GPU good instead of adding features that not everyone wants.

I am sure lots of people watch movies on their computer. I do not. I don't want a GPU with those features. I want a GPU that is good at playing games.

autoboy - Wednesday, July 25, 2007 - link

All in wonder cards are a totally different beast. The all in wonder card was simply a combination of a TV tuner card (and a rather poor one) and a normal graphics chip. The TV tuner simply records TV and has nothing to do with playback. ATI no longer sells All in wonder cards because the TV tuner card did not go obsolete quickly, while the graphics core in the AIW card went obsolete quickly, requiring the buyer to buy another expensive AIW card when only the graphics part was obsolete. A separate tuner card made so much more sense.

Playback of video is a totally different thing and the AIW cards performed exactly the same as regular video cards based on the same chip. At the time, playing video on the PC was more rare and the video playback of all cards was essentially the same because no cards offered hardware deinterlacing. Now, video on the PC is abundant and is the new Killer App (besides graphics) which drives PC performance, storage, and internet speed. Nvidia was first to the party offering Purevideo support, which did hardware deinterlacing for DVDs and SD TV on the video card instead of in software. It was far superior to any software solution at the time (save a few diehard fans of Dscaler with IVTC) and came out at exactly the right time, with the introduction of media center and cheap TV tuner cards and HD video. Now, Purevideo 2 and AVIVO HD introduce the same high quality deinterlacing to HD video for mpeg2 (7600GT and up could do HD mpeg2 deinterlacing) as well as VC-1 and H.264 content.

If you don't think this is important, remember that all new satellite HD broadcasts coming online are in 1080i h264, requiring deinterlacing to look their best, and new products that allow you to record this content on your computer are coming, and exist already if you are willing to work for it. Also, new TV series are likely to be released in 1080i on HD discs because that is their most common broadcast format.

If you don't need this, fine, but they sell a lot of cards to people who do.

autoboy - Wednesday, July 25, 2007 - link

Oh, I forgot to mention that only the video decode acceleration requires extra transistors. The deinterlacing calculations are done on the programmable shaders of the cards, requiring no additional hardware, just extra code in the drivers to work. The faster the video card, the better your deinterlacing, which explains why the 2400 and the 8500 cannot get perfect scores on the HQV tests. You can verify this on HD 2X00 cards by watching the GPU% in Riva Tuner while forcing different adaptive deinterlacing modes in CCC. This only works in XP btw.