A Messy Transition: Practical Problems With 32bit Addressing In Windows

by Ryan Smith on July 12, 2007 12:00 PM EST - Posted in Software

Now that we've seen what can happen when we reach the 2GB barrier and how easy it can be to pass it, let's talk about what it took to remove it in the first place for Supreme Commander. Supreme Commander is unfortunately not compiled as large address aware and must be modified so that Windows thinks that it is. Microsoft supplies a tool as part of the Visual Studio suite, Editbin, that can do just this by rewriting the file header to report to Windows that the executable is in fact large address aware.
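As a rough illustration of what a header rewrite like this involves, the sketch below checks and sets the large-address-aware bit in a PE image held in memory. It relies on the documented PE layout: the `e_lfanew` field at offset 0x3C, the `PE\0\0` signature, and the `IMAGE_FILE_LARGE_ADDRESS_AWARE` flag (0x0020) in the COFF `Characteristics` field. The function names are our own, chosen for illustration, and this is not a claim about how Editbin is implemented internally; the sketch does not touch checksums or signatures.

```python
import struct

# Flag bit in the COFF header's Characteristics field (documented PE constant).
IMAGE_FILE_LARGE_ADDRESS_AWARE = 0x0020


def characteristics_offset(image: bytes) -> int:
    """Return the byte offset of the 16-bit Characteristics field."""
    if image[:2] != b"MZ":
        raise ValueError("not an MZ executable")
    # e_lfanew (offset 0x3C) holds the file offset of the PE signature.
    (pe_offset,) = struct.unpack_from("<I", image, 0x3C)
    if image[pe_offset:pe_offset + 4] != b"PE\0\0":
        raise ValueError("PE signature not found")
    # 4-byte signature, then the 20-byte COFF header;
    # Characteristics occupies the last 2 bytes of that header.
    return pe_offset + 4 + 18


def is_large_address_aware(image: bytes) -> bool:
    """Report whether the large-address-aware bit is set."""
    (flags,) = struct.unpack_from("<H", image, characteristics_offset(image))
    return bool(flags & IMAGE_FILE_LARGE_ADDRESS_AWARE)


def set_large_address_aware(image: bytes) -> bytes:
    """Return a copy of the image with the large-address-aware bit set."""
    offset = characteristics_offset(image)
    (flags,) = struct.unpack_from("<H", image, offset)
    patched = bytearray(image)
    struct.pack_into("<H", patched, offset, flags | IMAGE_FILE_LARGE_ADDRESS_AWARE)
    return bytes(patched)
```

With Editbin itself, the equivalent operation is `editbin /LARGEADDRESSAWARE` followed by the path to the executable. Working on a copy of the file is the safer choice in either case.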

To the best of our knowledge, Supreme Commander was programmed using proper programming practices and can handle the larger address space, so this is merely an issue of turning it on. On a more pragmatic note, however, this can break future patches, and disturbingly it doesn't set off any sort of multiplayer cheat detection in the game in spite of the fact that we have modified the executable in a very visible way. Of the two changes we need to make to deal with the 2GB barrier, though, this is the safer one.

Update: Gas Powered Games contacted us and let us know that the modified executable not setting off any cheat detection is intentional. The game code is all in a DLL, and the executable is just a launcher; it's left unchecked because the various Digital Rights Management systems in use change the executable.

Changing Windows to allocate more of the virtual address space to applications, on the other hand, is in practice just as dangerous as we theorized earlier. We initially set our copy of Windows Vista to adjust the split to 1GB kernel mode, 3GB user mode, only for Vista to encounter a BSOD while booting. We had to settle for a 1.4GB/2.6GB split before Vista would boot, and even then Vista still periodically encounters a BSOD upon booting at that allocation or any allocation other than 2GB/2GB. Which problems occur, and at which values, is highly variable from system to system, and this is why trying to move the barrier at all can be dangerous.
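The split itself is simple arithmetic on the 4GB that 32-bit addressing provides: whatever goes to user mode comes out of kernel mode. The sketch below works that out in megabytes. The 2048MB to 3072MB bounds reflect the range Windows accepts for the user portion (on Vista this is set through the `IncreaseUserVa` boot option, for example `bcdedit /set IncreaseUserVa 3072`). The function name is hypothetical, and the exact megabyte values behind the 1.4GB/2.6GB split are not stated here, so none are assumed.

```python
from typing import Tuple

TOTAL_VA_MB = 4096   # 2**32 bytes of 32-bit virtual address space, in MB
MIN_USER_MB = 2048   # default 2GB/2GB split
MAX_USER_MB = 3072   # largest user-mode share, leaving 1GB for the kernel


def split_for_user_va(user_mb: int) -> Tuple[int, int]:
    """Return (kernel_mb, user_mb) for a requested user-mode size in MB."""
    if not MIN_USER_MB <= user_mb <= MAX_USER_MB:
        raise ValueError(
            f"user-mode size must be between {MIN_USER_MB} and {MAX_USER_MB} MB"
        )
    # Every megabyte handed to user mode is taken away from kernel mode.
    return TOTAL_VA_MB - user_mb, user_mb
```

For instance, `split_for_user_va(3072)` returns `(1024, 3072)`, the 1GB/3GB split that failed to boot on our test system, while `split_for_user_va(2048)` returns the default `(2048, 2048)`.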

Having made the above changes, we also took the chance to look at system performance both in and outside of Supreme Commander, with a particular interest in the effect of allocating more user mode space. As we theorized before, taking space away from the kernel may impact performance, and this is something that needs to be tested. For this we ran a cut-down version of our normal system test suite, with allocations of 1.4GB/2.6GB, 1.7GB/2.3GB, and the default 2GB/2GB.

| Software Test Bed | |
| --- | --- |
| Processor | AMD Athlon 64 4600+ (2x2.4GHz/512KB Cache, S939) |
| RAM | OCZ EL Platinum DDR-400 (4x512MB) |
| Motherboard | ASUS A8N-SLI Premium (nForce 4 SLI) |
| System Platform Drivers | NV 15.00 |
| Hard Drive | Maxtor MaXLine Pro 500GB SATA |
| Video Cards | 1 x GeForce 8800GTX |
| Video Drivers | NV ForceWare 158.45 |
| Power Supply | OCZ GameXStream 700W |
| Desktop Resolution | 1600x1200 |
| Operating System | Windows Vista Ultimate 32-Bit |

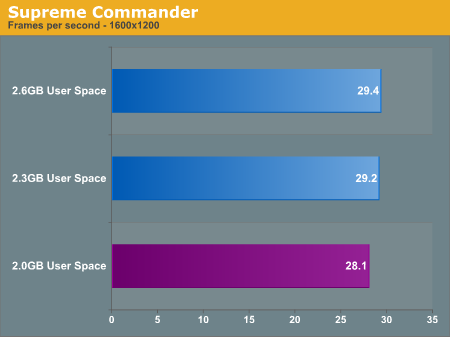

Starting with Supreme Commander, the only test here that can even utilize more than 2GB of user space, we do find a minor but consistent variation in performance. Increasing the user space improved Supreme Commander performance by about 1 frame per second, which at around 3.5% sits right on the edge between a significant result and normal run-to-run variation. We repeated this test several times to check, and the results remained consistent, so the gain appears to be real. With that said, the instability caused by adjusting the user space size does not justify such a minor performance improvement.

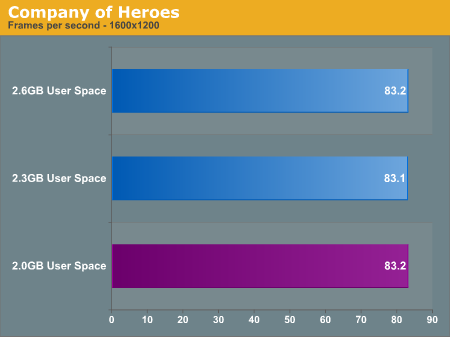

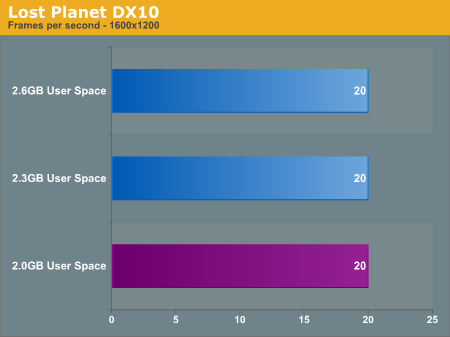

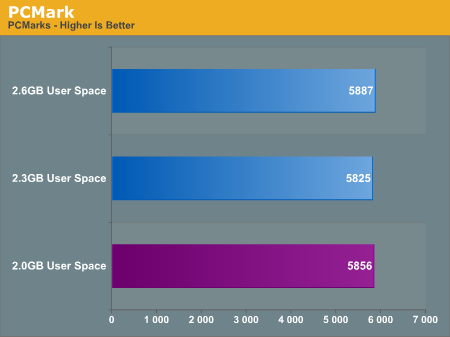

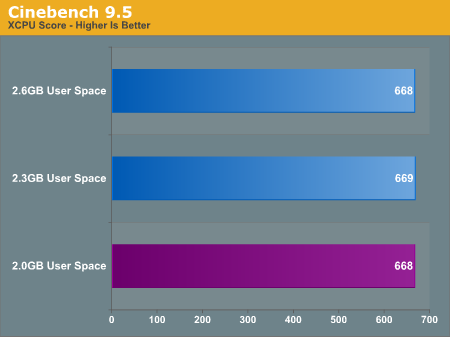

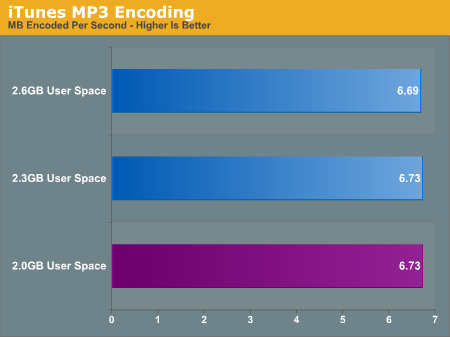

Going down the list of benchmarks, we find no notable change in performance in any of them. Since none of these benchmarks are capable of using more user space we weren't expecting an increase, but the results also put to rest the idea of a performance decrease from adjusting the user space size, at least in these tests; if it boots, it'll perform well.

69 Comments


BabyBear - Thursday, July 12, 2007 - link

This also happens with Flight Simulator X by the way.

Over on Phil Taylor's blog he makes mention of it awhile back

http://blogs.msdn.com/ptaylor/archive/2007/06/15/f... (Microsoft's Phil Taylor's Blog)

There was also some talk that the June 07 DirectX Redist. seemed to 'help' with Out of Memory problems when using the /3gb switch.

joetron2030 - Thursday, July 12, 2007 - link

On "Page 4: A Case Study: Supreme Commander", in the paragraph discussing the first screen shot from Sysinternals' Process Explorer, you refer to the "Virtual Size" column as the "Private Size" column (which doesn't exist in the screen shot). Had me briefly confused.

quanta - Thursday, July 12, 2007 - link

Although Windows 2000/2003/Vista server versions aren't exactly designed for gaming, has anyone tested game titles on these server OSes to see if these large address aware titles will use the Physical Address Extension feature?

Ryan Smith - Thursday, July 12, 2007 - link

The short answer is no. The longer answer is that due to a combination of chipset support, software support, performance, and driver support, it's not really usable outside of a server environment and shouldn't be used on a consumer system.

brink - Thursday, July 12, 2007 - link

Wouldn't matter, WinXP SP2 uses PAE, why would you want to install it on a server OS? Only 2003 is a server OS of the ones you mentioned, and I think Windows 2003 Enterprise is the only 32-bit OS that has the ability to use more than 4GB of memory (8GB seems to be the limit for what modules/mobos are available right now).

TA152H - Thursday, July 12, 2007 - link

I'm just reading the remarks about the 386 pushing the memory from 1 MB to 4 GB. It's patently untrue, unless you think the 386 succeeded the 8086, and not the 286. The 286 had 24 bit addressing (in 64K segments) for 16 MB of memory. This is not only what OS/2 used, but extended memory as well. So, it's not academic.

Ryan Smith - Thursday, July 12, 2007 - link

Just hearing the words "segmented memory" gives me flashbacks - and they're not the good kind. For this article we're talking strictly about flat addressing since I could write a small book on just segmented addressing, but I've updated the article to make this clear.

TA152H - Thursday, July 12, 2007 - link

Either way, you're using the wrong values. Even on the 8086 the memory was segmented. Actually, so was the 386, but it could handle segments up to 4 GB, so it wasn't important. So, using 1 MB and saying you're not referring to segmentation is inaccurate; that is segmented memory.

Segmentation was not a bad thing, particularly back then. It saved memory space, and back in 1978 that was a big thing. Addresses were given in 16 bits, you didn't have to specify the rest, and the net result was you had shorter code. You would have to change the registers to point to the next segment from time to time, but overall it saved a lot of memory. You also could protect apps from each other, but that wasn't really used.

I was an OS/2 developer, and it was a pain sometimes for sure. By the time the 286 came out, which I think was the best processor Intel ever made considering the timing, and the incredible capabilities it had over its predecessor (the 8086, not the 80186 which was made in parallel with the 286), the extra memory saving was not worth the nuisance of having to deal with, at most, 64K segments. But it really wasn't possible for Intel to do it any other way, as I'll explain below.

Motorola even added some external memory management unit that added segmentation, which today seems strange. It's widely viewed now as just a bad thing, but back then, it wasn't. Motorola really had a choice though, since the 68K was part 32-bit, and part 16-bit, and part 24-bit (addressing). Although the 286 was a more powerful processor than the 68000, it was pure 16-bit, except for the oddity of the 24-bit addressing. Consequently, allowing flat 24-bit addressing would not have been feasible. So, they dealt with it by just adding more segments. Considering the absolutely incredible improvement in this processor in just about every way, it was not such a bad tradeoff.

BitJunkie - Friday, July 13, 2007 - link

Hiya Bill.

jay401 - Thursday, July 12, 2007 - link

Just FYI, SupCom has already exhibited this problem and crashes once it breaks the 2GB barrier, which can happen easily in longer games with a high unit limit. This is mostly due to the poor efficiency in their code and models, something GPG (the developer, Gas Powered Games) reported could not easily be fixed in a patch because they basically have to re-render every unit in the game so they take up less memory. Due to this, it's likely to remain unfixed until the expansion pack (now a standalone game) Forged Alliance comes out in November, if it is fixed at all.

One forum member developed a way to increase the addressable memory to around 3GB on 32bit WinXP and Vista, so if you're running 4GB, this provides a sort of band-aid solution in the meantime.