A Messy Transition: Practical Problems With 32bit Addressing In Windows

by Ryan Smith on July 12, 2007 12:00 PM EST - Posted in Software

As we stated earlier, the inspiration behind this article is Supreme Commander, which was crashing during gameplay because, as we discovered, the game was exhausting its user space. Without taking extreme and convoluted measures, most applications can do little besides (gracefully) crash when they've exhausted their user space. Server applications, which on the whole tend to be the kings of memory hogs in the first place, tend to have some sort of compensation mechanism, but for more generalized applications like games this is not the case.

In this case, Supreme Commander was crashing late into big games on a system that is otherwise completely stable. No amount of testing could turn up a problem outside of Supreme Commander, and most telling of all was that it was only a problem on large maps with a large number of players at high quality settings; turning anything down made the crashing stop. Although this does not clearly point to a memory error, it greatly reduces the list of possible problems to a few things. The entire process, we suspect, will be similar for everyone hitting the 2GB barrier: the affected application crashes under heavy load. Thankfully the problem is possible to debug, albeit with moderate difficulty.

With all of that said, I did not come up with the diagnosis or the solution myself, and while the problem in retrospect is quite obvious, at the time I did not even take the 2GB barrier into consideration. As such, credit for the diagnosis and solution belongs to MadBoris of the Gas Powered Games forums, who identified Supreme Commander as hitting the 2GB barrier and published a solution for it, which we will cover here.

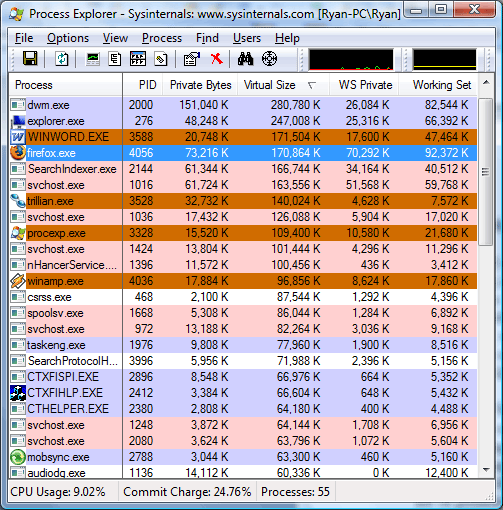

Getting to the root of the problem requires additional tools beyond what comes with Windows XP or Vista. The Task Manager, the logical tool for this task, doesn't track the information we need to know. At best, the Task Manager can list how much data is in the various memory pools altogether, but this is not the metric we're looking for. What we actually need to know is how much of the application's share of the virtual address space has been allocated (the fundamental problem, after all, is running out of unused virtual address space to allocate), which for a multitude of reasons can be much greater than the amount of memory in use by an application. For finding this we'll need Sysinternals' Process Explorer.

The above screenshot was taken as this paragraph was written, showcasing several different memory values for the running processes. The WS Private (Working Set Private) column lists how much physical memory the application is using, and this is the same column listed by the Windows Task Manager for memory usage. The Private Bytes column is the total amount of memory the application is using. Last but not least however is the Virtual Size column, which finally lists the amount of virtual address space allocated, the metric we're after.

We'll quickly focus on Microsoft Word as an example of the discrepancy between physical memory usage and virtual size. While Word is only using 17MB of physical memory, its virtual size is 170MB, 10 times the physical memory usage and 9 times the total memory usage. This is more or less a worst case scenario, but it also points out just how misleading any memory usage figure, physical or total, is when trying to diagnose this kind of problem. Applications or games with high memory usage tend not to have nearly as severe a spread, but as we can see it's possible for an application to hit the 2GB barrier well before it needs 2GB of physical memory.

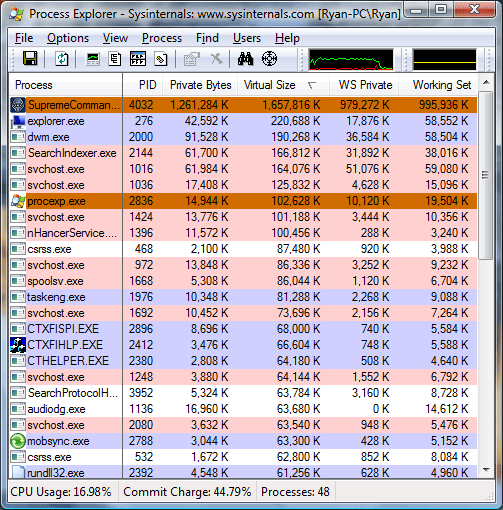

Getting to the meat of things however, the process readout for Supreme Commander basically says everything that needs to be said. In order to prove an exaggerated point, we started a 6 player game with 5 AI units on a maximum size map (81km x 81km) and set the game speed to maximum with maximum quality settings, while patching Supreme Commander and adjusting our system configuration to break the 2GB barrier. This actually isn't very playable for a variety of reasons (we'll overtax the CPU quickly due to all the AI units, slowing the game down dramatically), but it represents not only what can happen in bad situations, but also something that happens even in more manageable situations on smaller maps later into the game.

Just at the start of the game, our virtual size was already in excess of 1.6GB, dangerously close to the 2GB barrier. Sixteen minutes into the game, we have already hit a virtual size of 2.2GB, meaning that had we not broken the 2GB barrier the game would have already crashed. At this point our total memory usage (private bytes) is only at 1.7GB, with 1.4GB of that in physical memory, nearly maximizing the RAM usage of our 2GB system. Our spread between memory usage and virtual size is 0.5GB, showcasing how deceptive memory usage is in identifying 2GB barrier issues.
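The arithmetic behind these readings can be sketched in a few lines of Python. The figures are the ones quoted in this article (including the 2.6GB limit the test system was reconfigured for); the function names and the 2.0GB default are illustrative assumptions, not anything taken from Process Explorer or the game.

```python
# Illustrative arithmetic only; the helper names are hypothetical.
DEFAULT_USER_SPACE_GB = 2.0  # standard user space for a 32-bit Windows process


def headroom_gb(virtual_size_gb, limit_gb=DEFAULT_USER_SPACE_GB):
    """Remaining virtual address space; negative means the limit is exceeded."""
    return round(limit_gb - virtual_size_gb, 1)


def spread_gb(virtual_size_gb, private_bytes_gb):
    """Gap between address space allocated and memory actually in use."""
    return round(virtual_size_gb - private_bytes_gb, 1)


if __name__ == "__main__":
    # Readings at the 16-minute mark: 2.2GB virtual size, 1.7GB private bytes.
    print("headroom at stock limit:", headroom_gb(2.2))
    print("headroom at 2.6GB limit:", headroom_gb(2.2, limit_gb=2.6))
    print("virtual size minus private bytes:", spread_gb(2.2, 1.7))
```

A negative headroom at the stock limit is the point: judged by private bytes alone (1.7GB), the process would still appear to be under the barrier.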

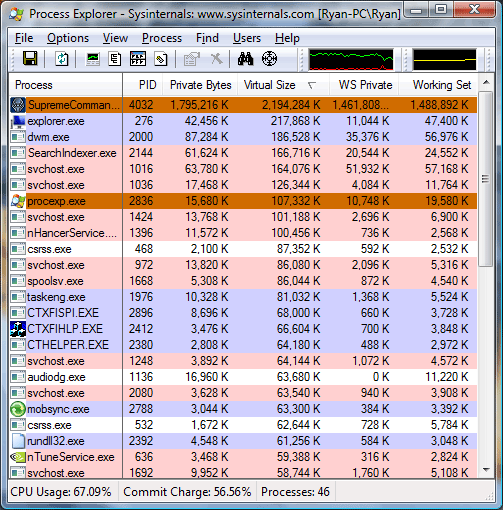

Finally, at 22 minutes into the game, the game crashes as the virtual size has reached the 2.6GB barrier we have reconfigured this system for. Perhaps the most troubling thing at this point is that Supreme Commander is not aware that it ran out of user space in its virtual address pool, as we are kicked out of the game with a generic error message. Unfortunately Windows Vista reverted to a non-accelerated desktop at this point, preventing us from capturing a screenshot of the exact memory readouts or the error message.

69 Comments


titan7 - Thursday, July 12, 2007 - link

There is nothing you can do. If you ship a game you could test it and ensure it doesn't run out of memory (e.g. GameCube has just 24megs of system memory and the last Zelda looked great. Quite a ways away from the 2048 megabytes PC games run into!), but what about a mod?

When the application starts just allocate 512 megabytes or whatever you feel is reasonable. When new throws an exception free that memory, display a warning you're low on memory and need to upgrade to Vista64, and continue. When new fails again one microsecond later you're screwed so display a message to the end user along the lines of "I told you so!" End users really like that type of thing. ;)

You could get a bit fancier by replacing all units with simple cubes or something, but all that does is delay the inevitable a bit longer.
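The reserve-block idea described above can be sketched as follows. This is a minimal illustration in Python rather than the C++ `new` the comment refers to, with `MemoryError` standing in for the allocation exception; `EmergencyReserve`, `allocate_with_reserve`, and the `allocate` callback are hypothetical names invented for the example.

```python
class EmergencyReserve:
    """Holds a block of memory that can be given back when allocation fails."""

    def __init__(self, reserve_bytes):
        # Grab the reserve up front, while memory is still plentiful.
        self._block = bytearray(reserve_bytes)

    def release(self):
        """Free the reserve. Returns False if it was already released."""
        if self._block is None:
            return False
        self._block = None
        return True


def allocate_with_reserve(allocate, size, reserve, warn=print):
    """Try allocate(size); on MemoryError free the reserve, warn, retry once."""
    try:
        return allocate(size)
    except MemoryError:
        if not reserve.release():
            raise  # reserve already spent: nothing left to fall back on
        warn("Low on memory: consider moving to a 64-bit OS.")
        return allocate(size)  # a second failure propagates to the caller


if __name__ == "__main__":
    reserve = EmergencyReserve(1024 * 1024)  # 1 MiB here; 512MB in the comment
    data = allocate_with_reserve(bytearray, 4096, reserve)
    print(len(data))
```

As the comment itself points out, this only buys one warning: once the reserve is gone, the next failure is fatal.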

ncage - Thursday, July 12, 2007 - link

1) First step is to detect which OS you are using at startup. Is it a 64 bit OS or not?

2) You SHOULD never code your application around a 2GB memory limit. It is very bad coding practice. Going through thorough examples in a short post like this isn't very practical.

3) Some higher level language/constructs abstract this away from you. For example if you are using the .Net CLR you don't really have to worry about this unless maybe you're doing some crazy pinvoke stuff which in most cases you shouldn't be doing anyways. Of course if you're doing VB6 you're never going to get around it anyways because VB6 is only 32 bit.

4) If you are doing C++ or assembly with Windows then you can use the GlobalMemoryStatus() function to effectively see how much available address space you have.
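For illustration, the query in point 4 might look like the sketch below. It is written in Python via `ctypes` rather than C++, and it calls `GlobalMemoryStatusEx`, the extended variant of the function named above, which reports on the calling process only. The structure mirrors the Win32 `MEMORYSTATUSEX` layout; `query_virtual_address_space` and `used_virtual_bytes` are hypothetical helper names, and the query returns `None` on anything other than Windows.

```python
import ctypes
import sys


class MEMORYSTATUSEX(ctypes.Structure):
    """Mirror of the Win32 MEMORYSTATUSEX structure (64 bytes)."""
    _fields_ = [
        ("dwLength", ctypes.c_uint32),
        ("dwMemoryLoad", ctypes.c_uint32),
        ("ullTotalPhys", ctypes.c_uint64),
        ("ullAvailPhys", ctypes.c_uint64),
        ("ullTotalPageFile", ctypes.c_uint64),
        ("ullAvailPageFile", ctypes.c_uint64),
        ("ullTotalVirtual", ctypes.c_uint64),
        ("ullAvailVirtual", ctypes.c_uint64),
        ("ullAvailExtendedVirtual", ctypes.c_uint64),
    ]


def used_virtual_bytes(total_virtual, avail_virtual):
    """User-mode address space already reserved or committed."""
    return total_virtual - avail_virtual


def query_virtual_address_space():
    """Return (total, available) user-mode virtual bytes, or None off Windows."""
    if sys.platform != "win32":
        return None
    status = MEMORYSTATUSEX()
    status.dwLength = ctypes.sizeof(MEMORYSTATUSEX)  # must be set before the call
    if not ctypes.windll.kernel32.GlobalMemoryStatusEx(ctypes.byref(status)):
        raise ctypes.WinError()
    return status.ullTotalVirtual, status.ullAvailVirtual


if __name__ == "__main__":
    result = query_virtual_address_space()
    if result is None:
        print("Not running on Windows; nothing to query.")
    else:
        total, avail = result
        print("virtual address space in use (bytes):", used_virtual_bytes(total, avail))
```

The difference between the total and available figures is the same quantity Process Explorer labels Virtual Size, seen from inside the process.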

Ryan Smith - Thursday, July 12, 2007 - link

The key to any of this is monitoring how much of the virtual address pool is in use; there should be an API call to ask Windows this. The easiest thing to do would be to give a warning at 1.9GB or so and then either do nothing, trigger a crash early, or attempt to reduce detail or flush space to stay below the 2GB barrier. The warning is the easy part, the hard part is preventing the crash, and I don't honestly believe anyone can or will be preventing crashing.

yacoub - Thursday, July 12, 2007 - link

I just wish we had a better solution than Vista. Sure we can use 64bit XP but that's only going to last how much longer with full patch support from MS?

If Vista wasn't such a pile and didn't perform worse in games when using equivalent hardware as the same system running XP, it wouldn't be such an unappealing alternative.

And even so, when running 4GB of RAM, how much over a LargeAddressAware flagged game with the 3GB boot.ini switch are you really gaining by using 64bit OS? Not much, really. We first need motherboards that are happy running 8GB of RAM, RAM cheap enough to buy 8GB for a reasonable price (which is not too hard with DDR2 2GB DIMMs right now), and do so at full performance/speed settings.

Really it's not just a move to a 64bit OS, it's also a move to 8GB of RAM.

OR it's simply having developers who code their games to work properly within 3GB of addressable RAM.

instant - Saturday, July 21, 2007 - link

How many more patches do you need for XP 64bit anyway?

As long as the games work for it, why care about microsoft updating "security" hotfixes or not.

Tegeril - Thursday, July 12, 2007 - link

Articles like this make me very glad that I opted to go with 64bit Vista. All my hardware is supported at this point with stable drivers (we can argue the Creative X-Fi, but it works fine). I'm just amazed that people saw this coming and yet we have games that just die because of the problem. 64 bit isn't as bad as people make it out to be regarding compatibility :D - except iTunes and Quicktime :(

halfeatenfish - Thursday, July 12, 2007 - link

How do the *nix variants deal with this same issue? Do they even have it? Can someone shed some light, especially in terms of OS X... Leopard, if anyone knows anything there. But Tiger info is just as good.

titan7 - Thursday, July 12, 2007 - link

They all do the same thing for 32bit. But generally speaking *nix has been 64bit for years (decades) so if it is a problem just run the 64bit version of everything. And being more cross platform their code tends to have fewer hacks like you get in Windows apps that assume there is an extra bit available on every pointer. A single bit! Bah, we're talking about billions of bytes and elite programmers are trying to squeeze every last bit out of their application at the expense of future compatibility. LAME.

The Boston Dangler - Thursday, July 12, 2007 - link

OSX is a 64-bit system, *nix ymmv

MadBoris - Thursday, July 12, 2007 - link

Cool article Ryan. Good to see these issues getting more global attention.

Since it seems 32 bit is here to stay for a lot longer than we want it to, and with software bloat continuing, this will hopefully continue to put pressure on driver devs to write better drivers that can handle >2GB addresses without issue, so that people can use the /3GB switch without concern. I personally have never had problems with /3GB with any of my hardware/drivers but certainly 'less mainstream' drivers may not be handled with the care that they should be.

I like the breakdown of games/apps that support the LargeAddressAware flag, maybe this list can grow for future articles covering more apps/games. I also enjoyed your testing on the "potential" penalty of less kernel space, something I never took the time to do on my own.

Imagine my surprise today when making my rounds to my favorite hardware site. ;)