Real World DirectX 10 Performance: It Ain't Pretty

by Derek Wilson on July 5, 2007 9:00 AM EST. Posted in: GPUs

Call of Juarez

There has been quite a bit of controversy and drama surrounding the journey of Call of Juarez from DirectX 9 to DirectX 10. As many may remember, AMD handed out demos of the DirectX 10 version of Call of Juarez prior to the launch of R600. This build didn't fully support NVIDIA hardware, so many review sites opted not to test it. On its own, this is certainly fine and no cause for worry. It's only normal to expect a company to want to show off something cool running on their hardware even if it isn't as fully functional as the final product will be.

But after NVIDIA found out about this, they set out to help Techland bring the demo up to par and get it to run properly on G80-based systems. Some publications were able to get an updated build of the game from Techland which included NVIDIA's fixes. When we requested the same build, Techland declined to provide it, citing the fact that they would be releasing a finalized benchmark in the near future. Again, this was fine with us and nothing out of the ordinary. We would have liked to get our hands on the NVIDIA update, but it's Techland's code and they can do what they want with it.

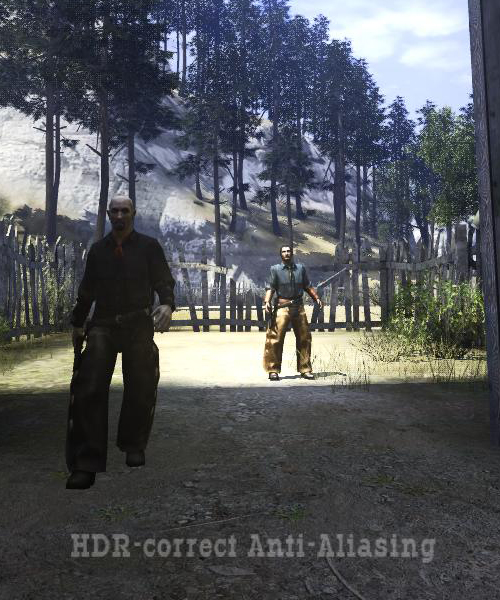

Move forward to the release of the Call of Juarez benchmark we currently have for testing, and now we have a more interesting situation on our hands. Techland decided to implement something they call "HDR Correct" antialiasing. This feature is designed to properly blend polygon edges in cases with very high contrast due to HDR lighting. Using a straight average or even a "gamma corrected" blend of MSAA samples can result in artifacts in extreme cases when paired with HDR.

The real caveat here is that doing HDR correct AA requires a custom MSAA resolve. AMD hardware must always perform AA resolves in the shader hardware (as the R/RV6xx line lacks dedicated MSAA resolve hardware in its render backends), so this isn't a big deal for them. NVIDIA's MSAA hardware, on the other hand, is bypassed. The ability of DX10 to allow individual MSAA samples to be read back is used to perform the custom AA resolve work. This incurs quite a large performance hit for what NVIDIA says is little to no image quality gain. Unfortunately, we are unable to compare the two methods ourselves, as we don't have the version of the benchmark that actually ran using NVIDIA's MSAA hardware.
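To make the difference between the two resolve strategies concrete, here is a minimal sketch in Python rather than shader code. It is not Techland's shader, which we have not seen. It assumes a simple Reinhard tone mapping operator and a made-up edge pixel, and the function names are ours. The point is only the order of operations: a plain resolve averages the HDR samples and tone maps the result, while a custom resolve reads each sample, tone maps it, and then averages.

```python
# Illustrative sketch only. The Reinhard operator and the sample values are
# assumptions chosen for clarity, not anything taken from the benchmark.

def tonemap(luminance: float) -> float:
    """Reinhard operator: compresses HDR luminance in [0, inf) into [0, 1)."""
    return luminance / (1.0 + luminance)


def resolve_average_then_tonemap(samples):
    """Plain resolve: average the raw HDR samples, then tone map the result."""
    return tonemap(sum(samples) / len(samples))


def resolve_tonemap_then_average(samples):
    """Custom resolve: tone map every sample first, then average them."""
    return sum(tonemap(s) for s in samples) / len(samples)


# One edge pixel at 4x MSAA: a single sample lands on a very bright sky,
# the other three land on a dark surface.
edge_pixel = [100.0, 0.05, 0.05, 0.05]

naive = resolve_average_then_tonemap(edge_pixel)
custom = resolve_tonemap_then_average(edge_pixel)

print(f"average then tone map: {naive:.3f}")
print(f"tone map then average: {custom:.3f}")
```

With these assumed values, the plain resolve produces a pixel that is almost fully white (about 0.96) even though three quarters of it is covered by the dark surface, so the edge still looks stair-stepped. The per-sample version lands near 0.28, much closer to the expected blend. The cost is that every sample has to be fetched and processed in the shader, which is where the performance hit comes from.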

NVIDIA also tells us that some code was altered in Call of Juarez's parallax occlusion mapping shader that does nothing but degrade the performance of this shader on NVIDIA hardware. Again, we are unable to verify this claim ourselves. There are also other minor changes that NVIDIA feels unnecessarily paint AMD hardware in a better light than the previous version of the benchmark.

But Techland's response to all of this is that game developers are the ones who have the final say in what happens with their code. This is definitely a good thing, and we generally expect developers to want to deliver the best experience possible to their users. We certainly can't argue with this sentiment. But whether or not anything is going on under the surface, it's very clear that Techland and NVIDIA are having some relationship issues.

No matter what's really going on, it's better for the gamer if hardware designers and software developers are all able to work closely together to design high quality games that deliver a consistent experience to the end user. We want to see all of this as just an unfortunate series of miscommunications. And no matter what the reason, we are here today with what Techland has given us. The performance of their code as it is written is the only thing that really matters, as that is what gamers will experience. We will leave all other speculation in the hands of the reader.

So, what are the important DirectX 10 features that this benchmark uses? We see geometry shaders to simulate water particle effects, alpha-to-coverage for smooth leaf and grass edges, and custom MSAA resolve for HDR correct AA.
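For readers unfamiliar with alpha-to-coverage, the idea can be sketched in a few lines of Python. This is a simplified model under our own assumptions: the number of covered samples is just the alpha value scaled by the sample count and rounded, and samples are filled in order. Real hardware chooses its own patterns (often dithered), and the function names here are hypothetical.

```python
from typing import List


def alpha_to_coverage_mask(alpha: float, sample_count: int = 4) -> List[bool]:
    """Convert an alpha value into a per-sample coverage mask.

    Assumption: round(alpha * sample_count) samples are covered, filled in
    order. Actual GPUs use their own, often dithered, sample patterns.
    """
    alpha = min(max(alpha, 0.0), 1.0)          # clamp to the valid range
    covered = int(alpha * sample_count + 0.5)  # round to nearest sample count
    return [i < covered for i in range(sample_count)]


def resolved_opacity(alpha: float, sample_count: int = 4) -> float:
    """Opacity seen after the MSAA resolve averages the covered samples."""
    mask = alpha_to_coverage_mask(alpha, sample_count)
    return sum(mask) / sample_count


for alpha in (0.0, 0.3, 0.5, 0.8, 1.0):
    print(alpha, alpha_to_coverage_mask(alpha), resolved_opacity(alpha))
```

Instead of an all-or-nothing alpha test at the edge of a leaf texture, the texel's alpha decides how many MSAA samples get written, so the resolve step produces a gradual edge without the geometry having to be sorted for blending. At 4x MSAA this simple model only yields five opacity levels, which is one reason real implementations dither.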

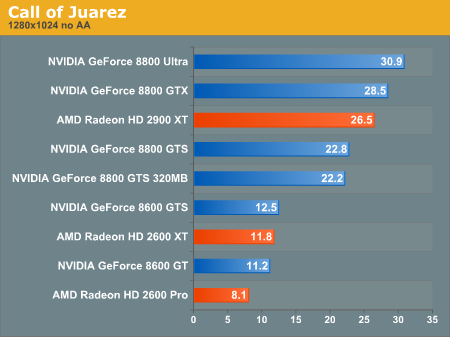

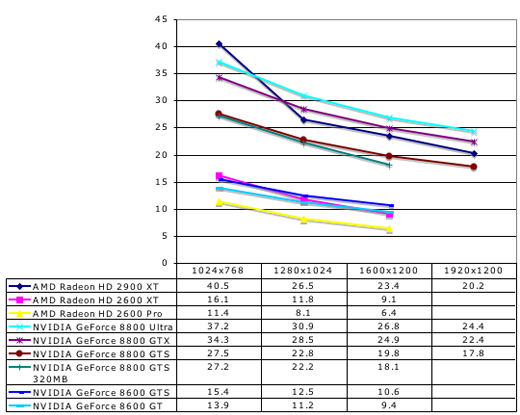

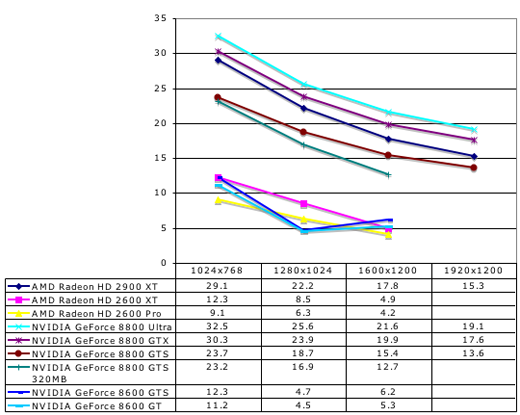

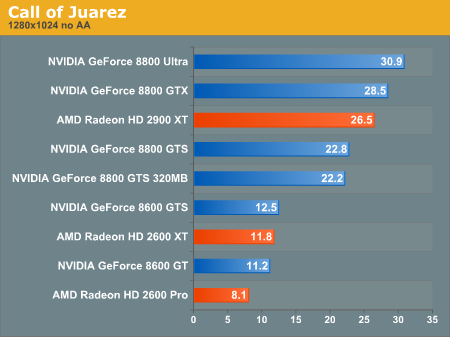

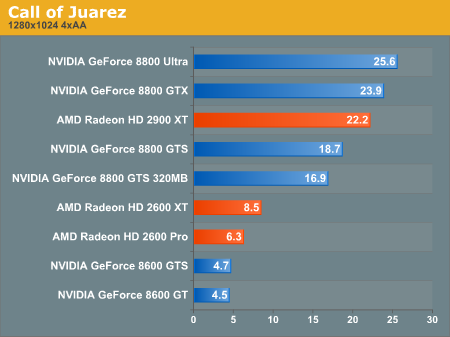

Call of Juarez Performance

The AMD Radeon HD 2900 XT clearly outperforms the GeForce 8800 GTS here. At the low end, none of our cards are playable under any option the Call of Juarez benchmark presents. While all the numbers shown here are with large shadow maps and high quality shadows, even without these features, the 2400 XT only posted about 10 fps at 1024x768. We didn't bother to test it against the rest of our cards because it just couldn't stack up.

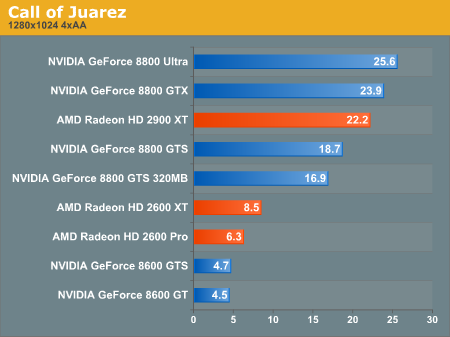

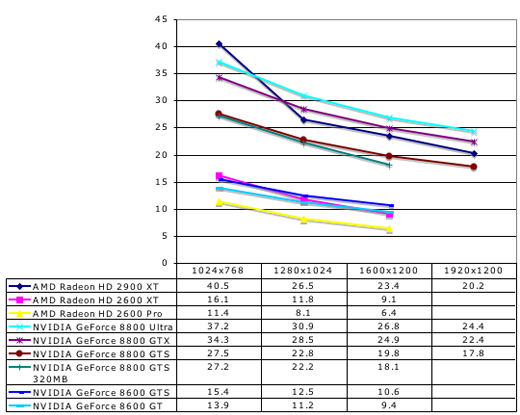

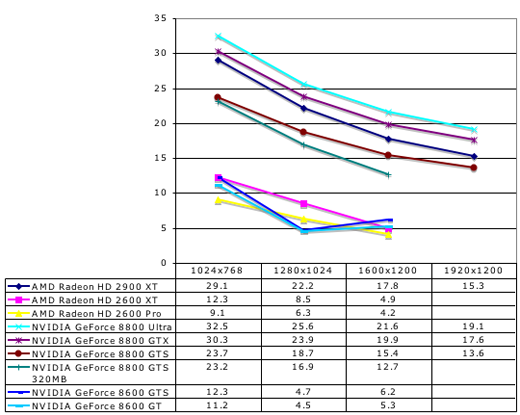

Call of Juarez 4xAA Performance

With 4xAA enabled, our low-end NVIDIA hardware really tanks. Remember that even these cards must resolve all MSAA samples in their shader hardware. AMD's parts are designed to always handle AA in this manner, but NVIDIA's parts only support the feature inasmuch as DX10 requires it.

We do see some strange numbers from the low-end NVIDIA cards at 1600x1200, but it's likely that they performed so poorly here that rendering certain aspects of the scene failed to the point of improving performance (in other words, it's likely not everything was rendered properly even though we didn't notice anything).

59 Comments

SniperWulf - Thursday, July 5, 2007 - link

It would have been nice if you guys could have included numbers with the latest publicly available drivers (beta or not) from ATI and NV, just to get an idea of what type of performance we can expect in the future.

DerekWilson - Thursday, July 5, 2007 - link

Actually, the beta drivers will give you a better idea of what to expect than the current WHQL drivers, which is why we used them.

TA152H - Thursday, July 5, 2007 - link

First of all, the whole name of the article is a poor choice of words. DX10 is pretty, but it's not fast. At least I think that was your point.

Next, the choice of processors is too limited. I don't know where you guys get your sales figures from, but Intel's extreme processors aren't their best sellers. Since part of the point of these processors is to do work in the video card in DX10 that was done in the CPU before, you might want another data point with a relatively inexpensive processor and see how DX9 relates to DX10.

Saying there will not be ANY DX10 only games for the next two years is strange. You will have an installed base to overcome, but for gamers it's not so important because they upgrade often, and some of the games don't design for old hardware anyway. Aren't there some games now that weren't made to play on hardware two years ago well? I would guess someone will decide well before then that designing for obsolete software isn't worth the effort and cost, and will ignore the installed base to create a better product that will take less time and cost less. Going only slightly, if you had an exceptionally good DX10 game, there would be enough people to make it profitable if you were the only one that did it. You always have the dorks that like using words like "eye-candy" (which probably means they have insufficient testosterone in their bloodstream) that will go out and buy whatever is prettiest. No DX9 means no wasted developers, means faster development for DX10, and less cost. So, before two years, it's entirely plausible that someone making a cutting edge game will decide it's not worth the effort, or cost, of supporting an obsolete software base.

OK, those charts are horrible. Put a little effort in them, instead of letting everyone know they are an afterthought that you hate doing. The whole purpose of charts is to disseminate information quickly and intuitively; your charts totally fail at this. Top chart, you have the ambiguous "% change from DX9 performance". Now most people, just viewing the chart, would assume something to the right is an enhancement. But, no! Everything is less. The next chart uses the same words, but this time green denotes a gain in performance. Yes, blue is typically what people associate with negatives, green with positives. Ever hear of "in the red", or "in the black"? I have. Most people have. Red for negatives, and black for positives would have been a little more understandable if you simply refuse to put in a guide.

Next you have "perf drop with xxx", which is what the first chart should have read, but you didn't want to go back and fix it. Even then, red would have been a better color for a negative, it's more intuitive and that's what charts are about.

Last, maybe Nvidia and AMD are right, that features are important. I was saying this earlier, and you're comparing apples and oranges when you say performance went down. Did DX10 performance go down? No. So, broadly, performance did not. DX10 performance didn't even exist, nor did some of the other features. I'm not saying everyone should buy these cards at all, they should measure what they need, and certainly some of the older cards with their obsolete feature set are fine for many, many people. At least today. But, by the same token, someone running Vista is probably going to want DX10 hardware, and they may not have massive amounts of money either to spend on it. So, the low end cards make sense too. Someone wanting the best possible visual experience WILL buy a DX10 card, and an expensive one. They have reasons for existing, they aren't broadly failures, but I think what irritates you is they aren't broadly successful either, like previous generations are and you are used to. It's understandable, but I think you're taking this so far you aren't seeing the value in them either. Obviously, since everyone has disappointed you in DX10 performance (Intel, Nvidia and AMD), shouldn't it show it's not an easy implementation, and that maybe they aren't all screwing up? If you give an exam and everyone gets a 10, maybe you need to look at the nature of the grading or test.

NT78stonewobble - Thursday, July 5, 2007 - link

I think the point regarding the low end dx 10 hardware was the following:

"Performance is so low that you will in reality not be able to use DX 10."

So everyone that cannot afford the high end DX 10 would be better off with a dx 9 card that will be cheaper and performing the same (or maybe even better).

TA152H - Thursday, July 5, 2007 - link

Not true, the only software that runs DX10 will not be super demanding video games. And you will not be forced to run them at very high settings. For gaming, yes, but that's not the whole thing.

DerekWilson - Friday, July 6, 2007 - link

in these cases, dx10 wouldn't necessarily be a better fit than dx9 -- or (more probably) opengl ...

Martimus - Thursday, July 5, 2007 - link

While you got voted down because your comment was set in an attacking tone, I felt that the comment was very well written and that you had some very valid points. As for the charts, I thought that those were performance increases until I read your comment. I doubt most casual readers will take the time to understand the counterintuitive charts. The charts are what most people look at, so they are really the most important part of the article to get right. Maybe the author will learn from this article and do a better job on the charts in the future.

TA152H - Thursday, July 5, 2007 - link

You know, when I see people like him writing things without thinking like that, it really irritates me, because it's so uninformed, so I get angry. I wish I didn't react like that, but let who is without sin cast the first stone. I guess it's better than being passionless. I really don't mind negative votes, I'd be more worried if I got positive ones.

The charts really got under my skin, because he made no effort. It's just half-ass garbage. When you consider how many people read them, it's unsupportable. If I did work like that, I'd be ashamed.

Instead of taking a step back and saying, well, all the DX10 hardware hasn't been what we expected, maybe there is a reason for it, they quickly damn every company that makes it. It's got to be comparative, because obviously these authors really don't know anything about designing GPUs (nor do I for that matter, so I'm not saying it to be vicious). But, when Intel, AMD and Nvidia all have disappointing DX9 performance with their DX10 cards, and DX10 performance broadly isn't great (although it seems better unless you add features), then maybe you should take a step back and say "Hmmmm, maybe we need to adjust our expectations".

It's kind of funny, because they do this with microprocessors already, because there was a fundamental change that made everyone reevaluate it. The Core 2 would be a complete piece of crap if you judged it by the 1980s and 1990s standard. It was, by those standards, an extremely small improvement over the P7 core, and even worse over the Yonah. But, the way processors are graded is different now, because our expectations were lowered somewhat by the P6 (it was a great processor, but again, it wasn't as good as the P5 was over the P4, or the P4 over the P3), and greatly by the K7 and P7. So, maybe GPUs are hitting that point in maturity where the incredible improvements in performance are a thing of the past, and the pace will slow down. Now, someone will correctly say, well, DX9 performance has decreased in some cases. Well, that's fine, but we also have a precedent in the processor world. Let's go back to the P6. It ran the majority of existing code (Real Mode) WORSE than the P5, because it was designed for 386 protected mode and didn't care much about Real mode. Or how about the 386? It wasn't any better on 16-bit code in terms of performance (it did add the virtual 86 mode though, but it wasn't for performance), but added two new modes, the most important of which (although not at the time) was 386 protected.

Articles like this irritate me because they are so simplistic and have so little thought put in them. They lack perspective.

titan7 - Thursday, July 12, 2007 - link

Here's a bit of info on the cards. Back in the d3d8 era Matrox introduced the first 256bit memory bus for their cards with the Parhelia. All things equal that provides twice the speed of a 128bit bus, but is more expensive to manufacture.

nvidia and ATI still had 128bit buses at the time, but for their high end d3d9 cards (Radeon 9700 and GeForceFX 5900) switched to 256 bit buses because 128 just couldn't keep up.

We're four generations ahead now and both IHVs have used 128bit buses for their mid range d3d10 cards, even though 128bits was becoming a bottleneck during the d3d8 era five generations ago!

This article was right on the money, nvidia focused on making their 8600 pin compatible with their old mid range 6600 card which is now three generations old! Intel didn't even leave their p4 compatible with itself! Yay, nvidia allowed Asus, etc to save on R&D costs. Too bad it meant customers have a handicapped chip. This article called them on it.

256bit, 512megabytes is the standard for mid range d3d10. We need to wait a generation to get there.

DerekWilson - Thursday, July 5, 2007 - link

First, the data presented in the graphs does make sense if you take a second to think about what it means.

It shows basically that under DX10 NVIDIA generally performs better relative to AMD than under DX9.

It also shows that under DX10 AMD handles AA better relative to NVIDIA than under DX9.

I will work on altering the graphs to show +/- 100% if people think that would present better. Honestly, I don't think it will be much easier to read with the exception that people tend to think higher is better.

But ... as for the rest of your post, I completely disagree.

When we step back and stop pushing AMD and NVIDIA to live up to specific expectations, we've stopped doing our job.

Justifying poor design choices by looking at the past is no way to advance the industry. A poor design choice is a poor design choice, no matter how you slice it. And it's the customer, not the industry or the company, who is qualified to decide whether or not something was a poor choice.

The fundamental problem with engineering is that you are building a device to fit within specific constraints. It is a very difficult job that consists of a great deal of cost/benefit analysis and hard choices. But the bottom line with any engineering project is that it must satisfy the customer's needs or it will not sell and it doesn't matter how much careful planning went into it.

It's when consumers (and hardware review sites who represent the consumers) stop demanding fundamental characteristics that absolutely must be present in the devices we purchase that we subject ourselves to subpar hardware.

Having studied computer engineering, with a focus in microprocessor architecture and 3d graphics, I certainly do know a bit about designing GPUs. And, honestly, there are reasons that DX10 hardware hasn't been what people wanted. This is a first generation of hardware that supports a new API using a very new hardware model based on general purpose unified shaders. It was a lot to do in one generation, and no one is damning anyone else for it.

But that doesn't mean we have to pretend that we're happy about it. And our expectations have always been more subdued than that of the general public BTW. We've said for a while not to expect heavy DX10 dependent games for years. It's the same situation we saw with DX9.

And honestly, the problems we are seeing are similar to what we saw with the original GeForce FX -- only not as extreme. Especially because both NVIDIA and AMD do well in the thing they must do well at: DirectX 9 rendering.

Honestly, this supports what we've been saying all along: the most important factor in a 3d graphics purchase today is DirectX 9 performance.