ATI Radeon HD 2900 XT: Calling a Spade a Spade

by Derek Wilson on May 14, 2007 12:04 PM EST - Posted in GPUs

DX10 Redux

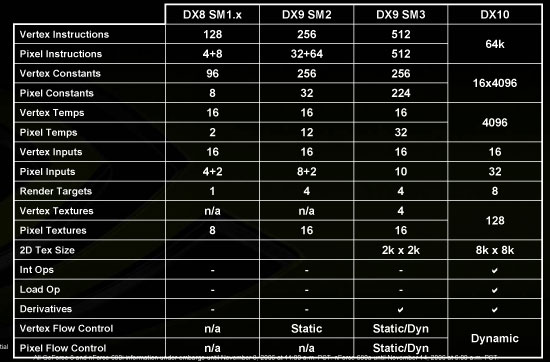

Being late to the game also means being late to DirectX 10. Luckily for AMD, few DX10 titles have been released, nor will there be many for a while: a couple of titles should have at least some form of DX10 support in the next month or two, but that's about it. What does DX10 offer above and beyond DX9 that makes this move so critical? We looked at DirectX 10 in great detail when NVIDIA released G80, but we'll give you a quick recap here as a reminder of what the new transistors in R600 are going to be used for.

From a pure performance standpoint, DX10 offers more efficient state and object management than DX9, resulting in less overhead from the API itself. There's more room to store data for use in shader programs, and this is largely responsible for the reduction in management overhead. For more complex shader programs, DX10 should perform better than DX9.

A hot topic these days in the CPU world is virtualization, and although it's not as much of a buzzword among GPU makers, there's still a lot to say about the big V when it comes to graphics. DirectX 10 and the new WDDM (Windows Display Driver Model) require that graphics hardware support virtualization, the reason being that DX10 applications are no longer guaranteed exclusive access to the GPU. The GPU and its resources could be split between a 3D game and physics processing, or in the case of a truly virtualized software setup, multiple OSes could be vying for use of the GPU.

Virtual memory is also required by DX10, meaning that the GPU can now page data out to system memory if it runs out of memory on the graphics card. If managed properly and with good caching algorithms, virtualized graphics memory can allow game developers to use ridiculously large textures and objects. 3DLabs actually first introduced virtualized graphics memory on its P10 graphics processor back in 2002; Epic Games' Tim Sweeney had this to say about virtual memory for graphics cards back then:

"This is something Carmack and I have been pushing 3D card makers to implement for a very long time. Basically it enables us to use far more textures than we currently can. You won't see immediate improvements with current games, because games always avoid using more textures than fit in video memory, otherwise you get into texture swapping and performance becomes totally unacceptable. Virtual texturing makes swapping performance acceptable, because only the blocks of texels that are actually rendered are transferred to video memory, on demand.

Then video memory starts to look like a cache, and you can get away with less of it - typically you only need enough to hold the frame buffer, back buffer, and the blocks of texels that are rendered in the current scene, as opposed to all the textures in memory. So this should let IHVs include less video RAM without losing performance, and therefore faster RAM at less cost.

This does for rendering what virtual memory did for operating systems: it eliminates the hard-coded limitation on RAM (from the application's point of view.)"
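To make the "video memory as a cache" idea concrete, here is a minimal sketch of on-demand tile residency. It is a toy model under stated assumptions, not how any driver or GPU actually implements paging: the `TileCache` class, the least-recently-used eviction policy, the tile names, and the three-frame workload are all hypothetical choices made for illustration.

```python
from collections import OrderedDict


class TileCache:
    """Toy model: video memory as an LRU cache of texture tiles (hypothetical)."""

    def __init__(self, capacity_tiles):
        self.capacity = capacity_tiles
        self.resident = OrderedDict()  # tile id -> True, least recently used first
        self.hits = 0
        self.misses = 0

    def touch(self, tile):
        """Record that the renderer sampled `tile`; page it in on a miss."""
        if tile in self.resident:
            self.resident.move_to_end(tile)  # mark as most recently used
            self.hits += 1
            return
        self.misses += 1  # a miss stands in for a transfer from system memory
        if len(self.resident) >= self.capacity:
            self.resident.popitem(last=False)  # evict the least recently used tile
        self.resident[tile] = True


def render_frames(cache, frames):
    """Touch every visible tile of every frame and return (hits, misses)."""
    for visible_tiles in frames:
        for tile in visible_tiles:
            cache.touch(tile)
    return cache.hits, cache.misses


# Hypothetical workload: each frame lists the (texture, tile index) pairs it samples.
frames = [
    [("rock", 0), ("rock", 1), ("grass", 0)],
    [("rock", 1), ("grass", 0), ("grass", 1)],
    [("grass", 0), ("grass", 1), ("sky", 0)],
]

cache = TileCache(capacity_tiles=4)
hits, misses = render_frames(cache, frames)
print(hits, misses)
```

Only the tiles that are actually sampled ever become resident, and a tile that stops being visible is the first candidate for eviction. That is the behavior the quote describes: the application sees one large texture space, while the card only needs room for the current working set.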

Obviously the P10 was well ahead of its time as a gaming GPU, but now with DX10 requiring it, virtualized graphics memory is a dream come true for game developers and will bring even better looking games to DX10 GPUs down the line. Of course, with many GPUs now including 512MB or more of RAM it may not be as critical a factor as before, at least until we start seeing games and systems that benefit from multiple gigabytes of RAM.

Continuing on the holy quest of finding the perfect 3D graphics API, DX10 does away with the hardware capability bits that were present in DX9. All DX10 hardware must support the same features and furthermore, Microsoft will only allow DX10 shaders to be written in HLSL (High Level Shader Language). The hope is that eliminating both cap bits and shader-level assembly optimization will prevent any truly "bad" DX10 hardware/software from getting out there, of the kind we saw in the NV30 days.
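As a rough illustration of what dropping cap bits means for developers, the sketch below contrasts the two styles. The flag names, card names, and render path labels are hypothetical and are not real Direct3D identifiers; the point is only the shape of the code an application ends up carrying.

```python
# Hypothetical capability flags and cards; not real Direct3D identifiers.
DX9_STYLE_CARDS = {
    "card_a": {"fp_render_targets", "vertex_texture_fetch"},
    "card_b": {"fp_render_targets"},
    "card_c": set(),
}


def pick_render_path_with_caps(caps):
    """Cap-bit style: query optional features and branch on each combination."""
    if {"fp_render_targets", "vertex_texture_fetch"} <= caps:
        return "full"
    if "fp_render_targets" in caps:
        return "hdr_only"
    return "fallback"


def pick_render_path_fixed_feature_set():
    """Fixed-feature-set style: every compliant part exposes the same features."""
    return "full"


for name, caps in DX9_STYLE_CARDS.items():
    print(name, pick_render_path_with_caps(caps))
print("any DX10 card", pick_render_path_fixed_feature_set())
```

Every optional feature adds another branch to write and test in the first style. With a guaranteed feature set, the differences between cards come down to performance, not to which code path runs.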

Although not specifically a requirement of DX10, Unified Shaders are a result of changes to the API. While DX9 called for different precision for vertex and pixel shaders, DX10 requires all shaders to use 32-bit precision, making the argument for unified shader hardware more appealing. With the same set of 32-bit execution hardware, you can now run pixel, vertex, and the new geometry shaders (also introduced in DX10). Unified shaders allow extracting greater efficiency out of the hardware, and although they aren't required by DX10 they are a welcome result now implemented by both major GPU makers.
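The efficiency argument for unified shaders can be shown with some back-of-the-envelope arithmetic. This is an idealized sketch: the unit counts and workloads are hypothetical (they are not R600 or G80 figures), every unit is assumed to retire one unit of work per cycle, and scheduling overhead is ignored.

```python
def fixed_pool_cycles(vertex_work, pixel_work, vertex_units, pixel_units):
    """Cycles needed when vertex and pixel work run on separate, dedicated units.

    The frame finishes only when the slower of the two pools finishes.
    """
    return max(vertex_work / vertex_units, pixel_work / pixel_units)


def unified_pool_cycles(vertex_work, pixel_work, total_units):
    """Cycles needed when any unit can take any kind of work (idealized)."""
    return (vertex_work + pixel_work) / total_units


# Hypothetical hardware: 8 vertex + 24 pixel units versus 32 unified units.
VERTEX_UNITS, PIXEL_UNITS = 8, 24
TOTAL_UNITS = VERTEX_UNITS + PIXEL_UNITS

# Workload that matches the fixed 1:3 split: both designs take the same time.
balanced_fixed = fixed_pool_cycles(800, 2400, VERTEX_UNITS, PIXEL_UNITS)
balanced_unified = unified_pool_cycles(800, 2400, TOTAL_UNITS)

# Geometry-heavy workload: the dedicated pixel units sit idle most of the frame.
heavy_fixed = fixed_pool_cycles(2400, 800, VERTEX_UNITS, PIXEL_UNITS)
heavy_unified = unified_pool_cycles(2400, 800, TOTAL_UNITS)

print(balanced_fixed, balanced_unified)  # 100.0 100.0
print(heavy_fixed, heavy_unified)        # 300.0 100.0
```

When the workload happens to match the fixed ratio of units, nothing is gained. When it doesn't, the dedicated design is limited by its scarcer unit type, while the unified pool keeps all of its units busy.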

Finally, there are a number of enhancements to Shader Model 4.0 which simply allow for more robust shader programs and greater programmer flexibility. In the end, the move to DX10 is just as significant as prior DirectX revisions but don't expect this rate of improvement to continue. All indications point to future DX revisions slowing in their pace and scope. However, none of this matters to us today; with the R600 as AMD's first DX10 architecture, much has changed since the DX9 Radeon X1900 series.

86 Comments

johnsonx - Monday, May 14, 2007 - link

and to which are you going to admit to?

What was that old saying about glass houses and throwing stones? Shouldn't throw them in one? Definitely shouldn't throw them if you ARE one!

Puddleglum - Monday, May 14, 2007 - link

You mean, while it does compete performance-wise?

johnsonx - Monday, May 14, 2007 - link

No, I'm pretty sure they mean DOESN'T. That is, the card can't compete with a GTX, yet still uses more power.

INTC - Monday, May 14, 2007 - link

Chadder007 - Monday, May 14, 2007 - link

When will we have the 2600's out in review?? That's the card I'm waiting for.

TA152H - Monday, May 14, 2007 - link

Derek,

I like the fact you weren't mincing your words, except for a little on the last page, but I'll give you a perspective of why it might be a little better than some people will think.

There are some of us, and I am one, that will never buy NVIDIA. I bought one, had nothing but trouble with it, and have been buying ATI for 20 years. ATI has been around for so long, there is brand loyalty, and as long as they come out with something that is competent, we'll consider it against their other products without respect to NVIDIA. I'd rather give up the performance to work with something I'm a lot more comfortable with.

The power though is damning, I agree with you 100% on this. Any idea if these beasts are being made by AMD now, or still whoever ATI contracted out? AMD is typically really poor in their first iteration of a product on a process technology, but tend to improve quite a bit in succeeding ones. I wonder how much they'll push this product initially. It might be they just get it out to have it out, and the next one will be what is really a worthwhile product. That only makes sense, of course, if AMD is now manufacturing this product. I hope they are, they surely don't need to make anymore of their processors that aren't selling well.

One last thing I noticed is the 2400 Pro had no fan! It had a heatsink from Hell, but that will still make this a really attractive product for a growing market segment. Any chance of you guys doing a review on the best fanless cards?

DerekWilson - Wednesday, May 16, 2007 - link

TSMC is manufacturing the R600 GPUs, not AMD.

AnnonymousCoward - Tuesday, May 15, 2007 - link

"I bought one, had nothing but trouble with it, and have been buying ATI for 20 years."

That made me laugh. If one bad experience was all it took to stop you from using a computer component, you'd be left with a PS/2 keyboard at best.

"...to work with something I'm a lot more comfortable with."

Are you more comfortable having 4:3 resolutions stretched on a widescreen? Maybe you're also more comfortable with having crappier performance than nvidia has offered for the last 6 months and counting? This kind of brand loyalty is silly.

MadBoris - Monday, May 14, 2007 - link

As far as your brand loyalty, ATI doesn't exist anymore. Furthermore AMD executives will gut the staff so you can't call it the same.

Secondly, Nvidia has been a stellar company providing stellar products. Everyone has some ups and downs. Unfortunately with the hardware and drivers this is ATI's (er AMD's) downs.

This card should do ok in comparison to the GTS, especially as drivers mature. Some reviews show it doing better than GTS640 in most tests, so I am not sure where or how discrepancies are coming about. Maybe hardware compatibility, maybe settings.

rADo2 - Monday, May 14, 2007 - link

Many NVIDIA 8600GT/GTS cards do not have a fan, are available on the market now, and are (probably; different league) much more powerful than 2400 ;) But as you are a fanboy, you are not interested, right?