Merging CPUs and GPUs

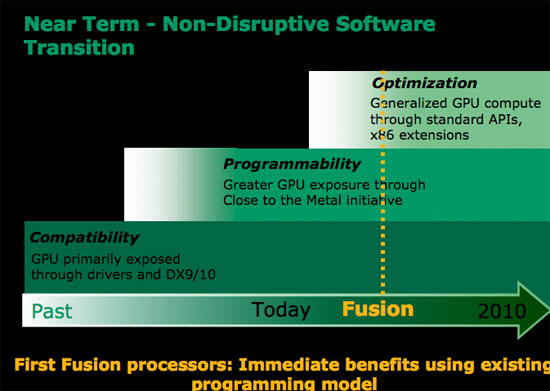

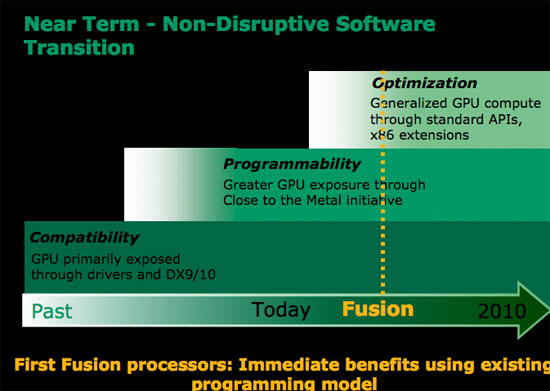

AMD has already outlined the beginning of its CPU/GPU merger strategy in a product called Fusion. While quite bullish on Fusion, AMD hasn't done a tremendous job of explaining why it matters. Fusion, if you haven't heard, is AMD's first single-chip CPU/GPU solution, due out sometime in the 2008 - 2009 timeframe. Widely expected to be two individual dies on a single package, the first incarnation of Fusion will simply be a more power-efficient version of a platform with integrated graphics. Integrated graphics is nothing to get excited about; what is really interesting is what follows as manufacturing technology and processor architectures evolve.

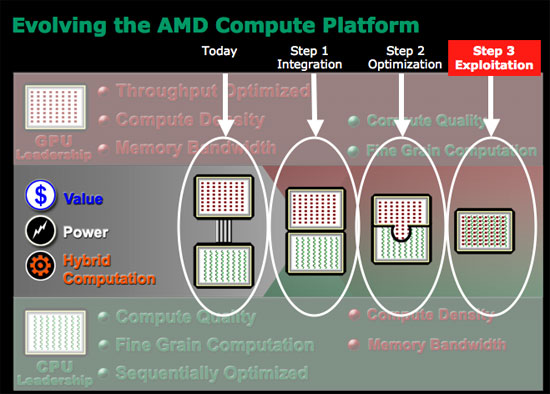

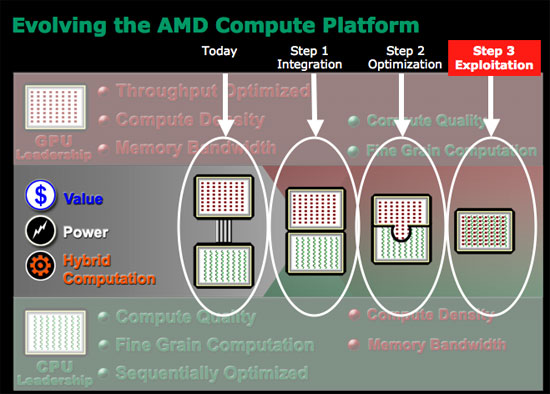

AMD views the Fusion progression as three discrete steps:

Today we have a CPU and a GPU separated by an external bus, with the two being quite independent. The CPU does what it does best, and the GPU helps out wherever it can. Step 1 is what AMD is calling integration, and it is what we can expect in the first Fusion product due out in 2008 - 2009. The CPU and GPU are simply placed next to one another, and there's only minor leverage of that relationship, mostly from a cost and power efficiency standpoint.

Step 2, which AMD calls optimization, gets a bit more interesting. Parts of the CPU can be shared by the GPU and vice versa. There's not a deep level of integration, but it begins the transition to the most important step - exploitation.

The final step in the evolution of Fusion is where the CPU and GPU are truly integrated, and the GPU is accessed by user mode instructions just like the CPU. You can expect to talk to the GPU via extensions to the x86 ISA, and the GPU will have its own register file (much like FP and integer units each have their own register files). Elements of the architecture will be shared, especially things like the cache hierarchy, which will prove useful when running applications that require both CPU and GPU power.

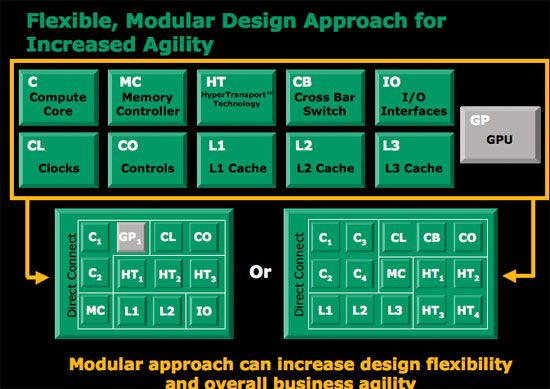

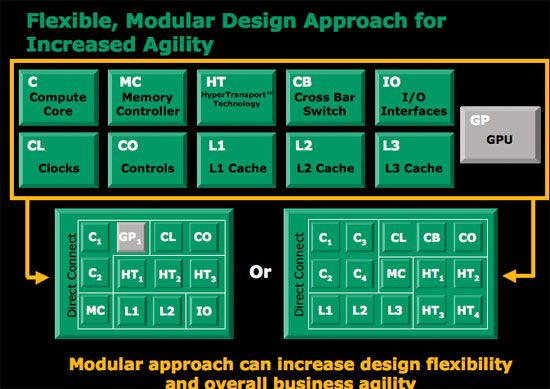

The GPU could easily be integrated onto a single die as a separate core behind a shared L3 cache. For example, if you look at the current Barcelona architecture, you have four homogeneous cores behind a shared L3 cache and memory controller; simply swap one of those cores with a GPU core and you've got an idea of what one of these chips could look like. Instructions that can only be processed by the specialized core will be dispatched directly to it, while instructions better suited for other cores will be sent to them. There would have to be a bit of front end logic to manage all of this, but it's easily done.
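To make the dispatch idea concrete, here is a minimal software sketch of the kind of front end routing described above. It is purely illustrative and is not AMD's design: the `Kind` categories, the `Core` class, the least-loaded selection rule, and the `barcelona_like_chip` layout (three CPU cores plus one GPU core) are all assumptions invented for this example.

```python
from dataclasses import dataclass, field
from enum import Enum, auto


class Kind(Enum):
    SCALAR = auto()    # ordinary x86-style work (hypothetical category)
    GRAPHICS = auto()  # work only the specialized GPU core can run (hypothetical)


@dataclass
class Core:
    name: str
    accepts: frozenset                     # instruction kinds this core can execute
    queue: list = field(default_factory=list)


def dispatch(instructions, cores):
    """Route each (op, kind) pair to the least-loaded core that accepts its kind."""
    for op, kind in instructions:
        candidates = [c for c in cores if kind in c.accepts]
        if not candidates:
            raise ValueError(f"no core can execute {op!r}")
        # Ties go to the first candidate in list order.
        target = min(candidates, key=lambda c: len(c.queue))
        target.queue.append(op)
    return {c.name: list(c.queue) for c in cores}


def barcelona_like_chip():
    """Assumed layout: a four-core chip with one core swapped for a GPU core."""
    cpu = frozenset({Kind.SCALAR})
    gpu = frozenset({Kind.GRAPHICS})
    return [Core("cpu0", cpu), Core("cpu1", cpu), Core("cpu2", cpu), Core("gpu0", gpu)]
```

Calling `dispatch` with a mixed stream sends every `Kind.GRAPHICS` operation to `gpu0` and spreads the rest across the CPU cores. Real hardware would make this decision at decode time based on the opcode, not in software queues; the sketch only shows the routing rule.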

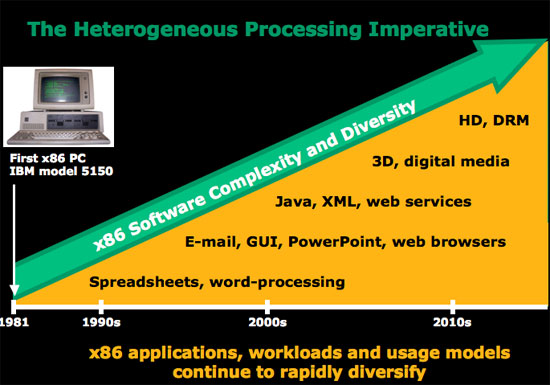

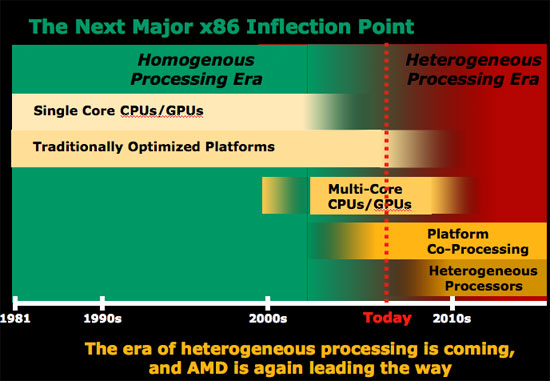

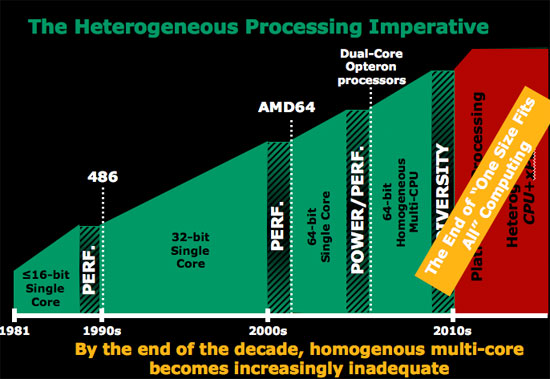

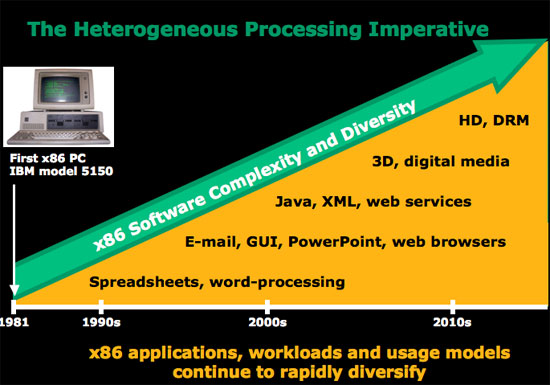

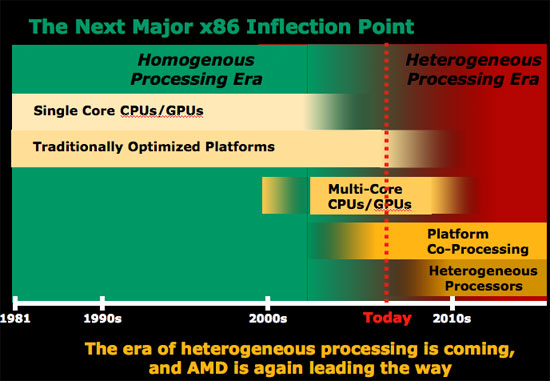

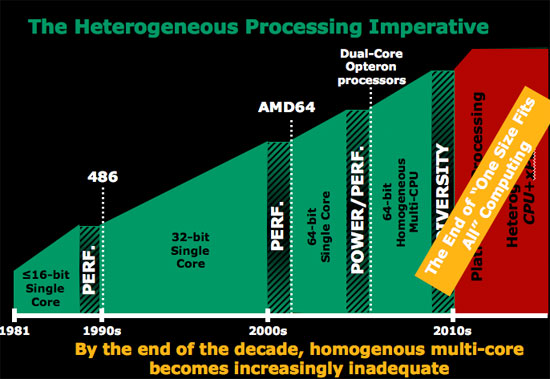

AMD went as far as to say that the next stage in the development of x86 is the heterogeneous processing era. AMD's Phil Hester stated plainly that by the end of the decade, homogeneous multi-core becomes increasingly inadequate. The groundwork for the heterogeneous processing era (multiple cores on chip each with a different purpose) will be laid in the next 2 - 4 years, with true heterogeneous computing coming after 2010.

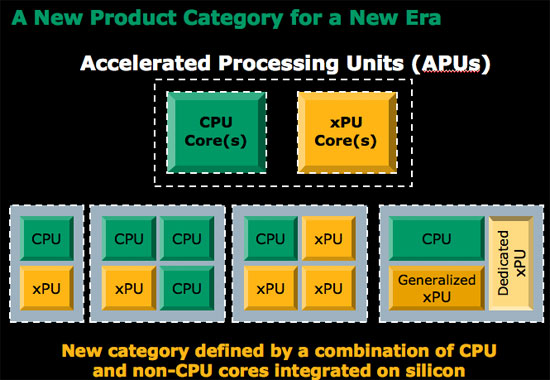

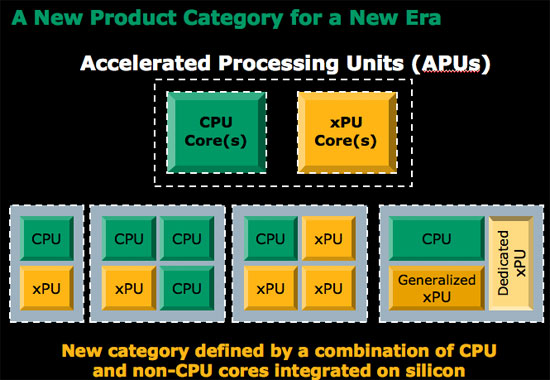

It's not just about combining the CPU and GPU as we know them; it's also about adding other types of processors and specialized hardware. You may remember that Intel made some similar statements a few IDFs ago, but not nearly as boldly as AMD, given that Intel doesn't have nearly as strong a graphics core to begin integrating. The xPUs listed in the diagram above could easily be things like H.264 encode/decode engines, network accelerators, virus scan accelerators, or any other type of accelerator that's deemed necessary for the target market.

In a sense, AMD's approach is much like that of the Cell processor, the difference being that with AMD's direction the end result would be a much more powerful sequential core combined with a true graphics core. Cell was very much ahead of its time, and by the time AMD and Intel can bring similar solutions to the market the entire industry will be far more ready for them than it was for Cell. Not to mention that treating everything as extensions to the x86 ISA makes programming far easier than with Cell.

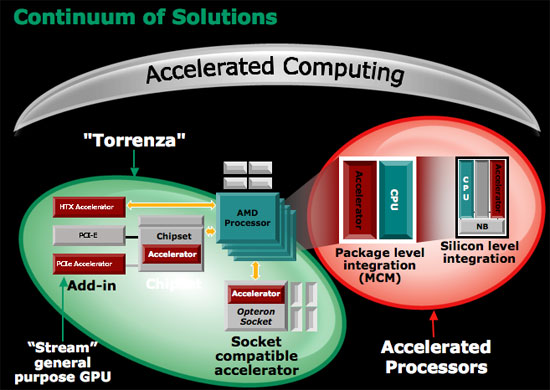

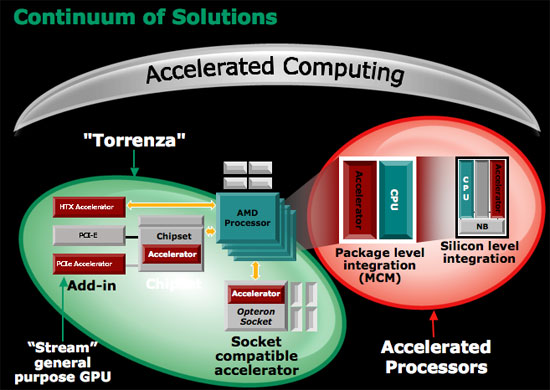

Where does AMD's Torrenza fall into play? If you'll remember, Torrenza is AMD's platform approach to dealing with different types of processors in an AMD system. The idea is that external accelerators could simply pop into an AMD processor socket and communicate with the rest of the system over HyperTransport. Torrenza actually works quite well with AMD's Fusion strategy, because it allows other accelerators (xPUs, if you will) to be put in AMD systems without having to integrate the functionality on AMD's processor die. If there's enough demand in the market, AMD can eventually integrate the functionality on die, but until then Torrenza offers a low-cost in-between solution.

AMD drew the parallel to the 287/387 floating point coprocessor socket that was present on 286/386 motherboards. Only around 2 - 3% of 286 owners bought a 287 FPU, while around 10 - 20% of 386 owners bought a 387 FPU; when the 486 was designed it simply made sense to integrate the functionality of the FPU into all models because the demand from users and developers was there. Torrenza would allow the same sort of migration to occur from external socket to eventual die integration if it makes sense, for any sort of processor.

55 Comments

TA152H - Friday, May 11, 2007 - link

Actually, I do have an idea on what AMD had to do with it. You don't. If you know anyone from Microsoft, ask about it.

Even publicly, AMD admitted that Microsoft co-developed it with them.

By the way, when was the last time you used AMD software? Do you have any idea what you're talking about, or just an angry simpleton?

rADo2 - Friday, May 11, 2007 - link

Oh man, AMD copied, in fact, all Intel patents, due to their "exchange". They copied x86 instruction set, SSE, SSE2, SSE3, and many others. Intel was the first to come up with 64-bit Itanium.

And AMD is/was damn expensive, while it had a window of opportunity. My most expensive CPU ever bought was AMD X2 4400+ ;-)

fic2 - Friday, May 11, 2007 - link

What does the 64-bit Itanium have to do with x86? Totally different instruction set.

And what would the Intel equivalent to your X2 4400+ have cost you at the time? Or was there even an Intel equivalent?

rADo2 - Friday, May 11, 2007 - link

"What does the 64-bit Itanium have to do with x86" -- Intel had 64-bit CPU way before AMD, a true new platform. AMD came up with primitive AMD64 extension, which was not innovative at all, they just doubled registry and added some more.

yyrkoon - Friday, May 11, 2007 - link

You mean - A 'primitive' 64BIT CPU that outperformed the Intel CPU in just about every 32BIT application out there. This was also one reason why AMD took the lead for a few years . . .

fitten - Friday, May 11, 2007 - link

It was actually pretty smart on AMD's part. Intel was trying to lever everyone off of x86 for a variety of reasons. AMD knew that lots of folks didn't like that so they designed x86-64 and marketed it. Of course people would rather be backwards compatible fully, which is why AMD was successful with it and Intel had to copy it to still compete. So... it's AMD's fault we can't get rid of the x86 albatross again ;)

TA152H - Friday, May 11, 2007 - link

AMD had no choice but to go the way they did, there was nothing smart about it. They lacked the market power to introduce a new instruction set, as well as the software capability to make it a viable platform.

Intel didn't even have the marketing muscle to make it an unqualified success. x86 is bigger than both of them. It's sad.

rADo2 - Friday, May 11, 2007 - link

I bought X2 because I wanted NVIDIA SLI (2x6800, 2x7800, 2x7900, etc.) with dualcore, so Pentium D was not an option (NVIDIA chipsets for Intel are even worse than for AMD, if that is possible).

X2 was more expensive than my current quadcore, Q6600, and performed really BAD in all things except games.

I hated that CPU, while paying about $850 (including VAT) for it. For audio and video processing, it was a horrible CPU, worse than my previous P4 Northwood with HT, bought for $100, not to mention unstable NVIDIA nForce4 boards, SATA problems, NVIDIA firewall problems, etc.

I never want to see AMD again. Intel CPU + Intel chipset = pure goodness.

yyrkoon - Friday, May 11, 2007 - link

SO, by your logic, just because a product does not meet your 'standard' ( which by the way seem to be based on 'un-logic' ), you would like to see a company, that you do not like, go under, and thus rendering the company that you hold so dearly in your mind, a monopoly.

Pray AMD never goes under, because if they do go away, your next system may cost you 5x as much, and may perform 5x worse, and there will be nothing you can do about it.

Cheers

TA152H - Friday, May 11, 2007 - link

Not only that, but HP had more to do with the design than Intel.