The Road to Acquisition

The CPU and the GPU have been on this collision course for quite some time. Although we often refer to the CPU as a general purpose processor and the GPU as a graphics processor, the reality is that both are general purpose. The GPU is simply a highly parallel general purpose processor that happens to be well suited to certain applications, such as 3D gaming. As the GPU became more programmable, and thus more general purpose, its highly parallel nature became interesting to new classes of applications: things like scientific computing are now within the realm of possibility for execution on a GPU.

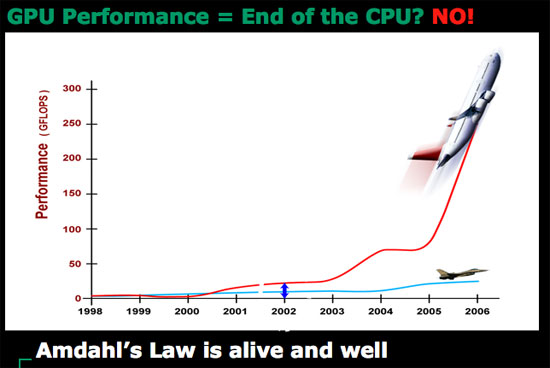

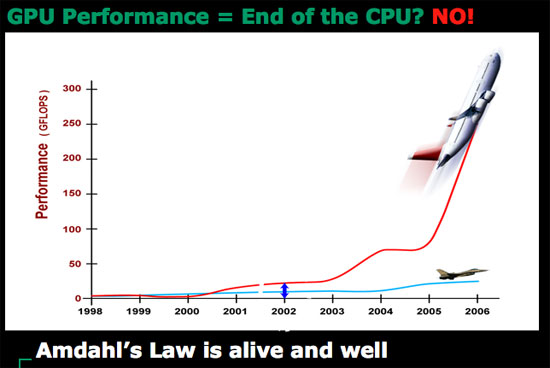

Today's GPUs are vastly superior to what we currently call desktop CPUs when it comes to things like 3D gaming, video decoding and a lot of HPC applications. The problem is that a GPU is fairly worthless at sequential tasks, meaning that it relies on a fast host CPU to handle everything it isn't good at.

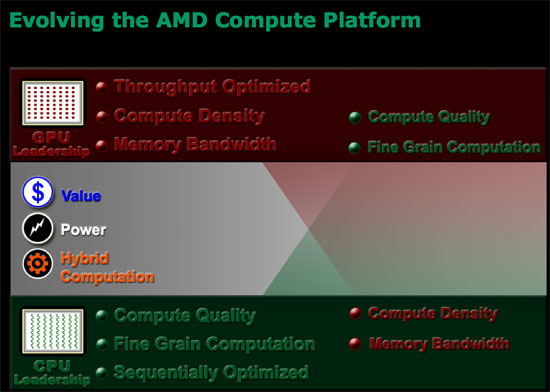

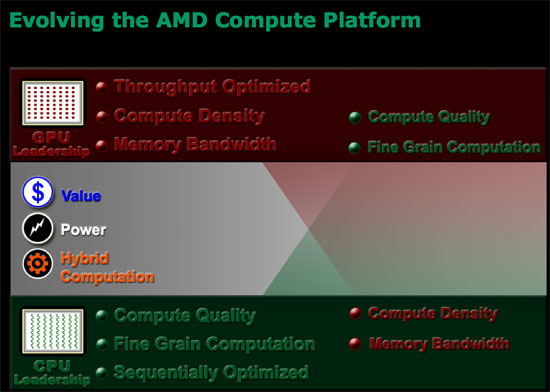

ATI discovered that over the long term, as the GPU grows in power, it will eventually be bottlenecked by its ability to do high speed sequential processing. In the same vein, the CPU will eventually be bottlenecked by its ability to do highly parallel processing. In other words, GPUs need CPUs and CPUs need GPUs for all workloads going forward. Neither approach on its own will solve every problem or run every program optimally; the combination of the two is what is necessary.
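One way to put rough numbers on this bottleneck argument is Amdahl's law. The article doesn't name it, so treat the framing as our assumption rather than ATI's reasoning. The sketch below computes the overall speedup of a workload when its parallel portion and its sequential portion are each accelerated by some factor. The function name, the 90% parallel fraction and the speedup factors are all hypothetical, chosen only for illustration, and are not measurements of any ATI or AMD hardware.

```python
def amdahl_speedup(parallel_fraction: float,
                   parallel_speedup: float,
                   sequential_speedup: float = 1.0) -> float:
    """Overall speedup of a workload (generalized Amdahl's law).

    parallel_fraction:  share of the original run time that is parallel-friendly
    parallel_speedup:   how much faster that share runs (e.g. on a GPU)
    sequential_speedup: how much faster the remaining share runs (e.g. on a faster CPU)
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    sequential_fraction = 1.0 - parallel_fraction
    # New run time, expressed relative to an original run time of 1.0.
    new_time = (sequential_fraction / sequential_speedup
                + parallel_fraction / parallel_speedup)
    return 1.0 / new_time


if __name__ == "__main__":
    # Hypothetical workload: 90% of the time is spent in parallel-friendly work.
    for gpu_factor in (10, 100, 1000):
        print(f"GPU {gpu_factor}x faster -> overall "
              f"{amdahl_speedup(0.9, gpu_factor):.2f}x")
    # Same workload, but the sequential part is also made twice as fast.
    print(f"GPU 100x + CPU 2x -> overall {amdahl_speedup(0.9, 100, 2.0):.2f}x")
```

With these assumed numbers, the untouched 10% of sequential work caps the overall speedup below 10x no matter how fast the parallel side gets, while speeding up the sequential side as well lifts that cap. That is the sense in which a stronger GPU ends up needing a stronger CPU alongside it.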

ATI came to this realization originally when looking at the possibilities for using its GPU for general purpose computing (GPGPU), even before AMD began talking to ATI about a potential acquisition. ATI's Bob Drebin (formerly the CTO of ATI, now CTO of AMD's Graphics Products Group) told us that as he began looking at the potential for ATI's GPUs he realized that ATI needed a strong sequential processor.

We wanted to know how Bob's team solved the problem, because obviously they had to come up with a solution other than "get acquired by AMD". Bob didn't answer the question directly, but he did say, with a smile, that ATI tried to pair its GPUs with as low power a sequential processor as possible and always ran into the same problem: the sequential processor became the bottleneck. In the end, Bob believes that the AMD acquisition made the most sense because the new company is able to combine a strong sequential processor with a strong parallel processor, eventually integrating the two on a single die. We really wanted to know what ATI's "plan B" was had the acquisition not worked out, because we're guessing that ATI's backup plan is probably very similar to what NVIDIA has planned for its future.

To understand the point of combining a highly sequential processor, like a modern desktop CPU, with a highly parallel GPU, you have to look beyond the gaming market, into what AMD is calling stream computing. AMD perceives a number of potential applications that will require a very GPU-like architecture, including some that we already see today. Simply watching an HD-DVD can eat up almost 100% of some of the fastest dual core processors today, while a GPU can perform the same decoding task with much better power efficiency. H.264 encoding and decoding are perfect examples of tasks that are better suited to highly parallel processor architectures than to the architectures desktop CPUs are currently built on. But just as video processing is important, so are general productivity tasks, which is where we need the strengths of present day out-of-order superscalar CPUs. A combined architecture that can excel at both types of applications is clearly a direction that desktop CPUs need to target in order to remain relevant in future applications.

Future applications will easily combine stream computing with more sequential tasks, and we already see some of that now with web browsers. Imagine browsing a site like YouTube except where all of the content is much higher quality and requires far more CPU (or GPU) power to play. You need the strengths of a high powered sequential processor to deal with everything other than the video playback, but then you need the strengths of a GPU to actually handle the video. Examples like this one are overly simple, as it is very difficult to predict the direction software will take when given even more processing power; the point is that CPUs will inevitably have to merge with GPUs in order to handle these types of applications.

55 Comments

TA152H - Friday, May 11, 2007

Actually, I do have an idea on what AMD had to do with it. You don't. If you know anyone from Microsoft, ask about it.

Even publicly, AMD admitted that Microsoft co-developed it with them.

By the way, when was the last time you used AMD software? Do you have any idea what you're talking about, or just an angry simpleton?

rADo2 - Friday, May 11, 2007

Oh man, AMD copied, in fact, all Intel patents, due to their "exchange". They copied the x86 instruction set, SSE, SSE2, SSE3, and many others. Intel was the first to come up with 64-bit Itanium.

And AMD is/was damn expensive, while it had a window of opportunity. The most expensive CPU I ever bought was an AMD X2 4400+ ;-)

fic2 - Friday, May 11, 2007

What does the 64-bit Itanium have to do with x86? Totally different instruction set.

And what would the Intel equivalent to your X2 4400+ have cost you at the time? Or was there even an Intel equivalent?

rADo2 - Friday, May 11, 2007

"What does the 64-bit Itanium have to do with x86" -- Intel had a 64-bit CPU way before AMD, a true new platform. AMD came up with the primitive AMD64 extension, which was not innovative at all; they just doubled the registers and added some more.

yyrkoon - Friday, May 11, 2007

You mean - A 'primitive' 64BIT CPU that outperformed the Intel CPU in just about every 32BIT application out there. This was also one reason why AMD took the lead for a few years . . .

fitten - Friday, May 11, 2007

It was actually pretty smart on AMD's part. Intel was trying to lever everyone off of x86 for a variety of reasons. AMD knew that lots of folks didn't like that so they designed x86-64 and marketed it. Of course people would rather be backwards compatible fully, which is why AMD was successful with it and Intel had to copy it to still compete. So... it's AMD's fault we can't get rid of the x86 albatross again ;)

TA152H - Friday, May 11, 2007

AMD had no choice but to go the way they did, there was nothing smart about it. They lacked the market power to introduce a new instruction set, as well as the software capability to make it a viable platform.

Intel didn't even have the marketing muscle to make it an unqualified success. x86 is bigger than both of them. It's sad.

rADo2 - Friday, May 11, 2007

I bought X2 because I wanted NVIDIA SLI (2x6800, 2x7800, 2x7900, etc.) with dualcore, so Pentium D was not an option (NVIDIA chipsets for Intel are even worse than for AMD, if that is possible).

X2 was more expensive than my current quadcore, Q6600, and performed really BAD in all things except games.

I hated that CPU, while paying about $850 (including VAT) for it. For audio and video processing, it was a horrible CPU, worse than my previous P4 Northwood with HT, bought for $100, not to mention unstable NVIDIA nForce4 boards, SATA problems, NVIDIA firewall problems, etc.

I never want to see AMD again. Intel CPU + Intel chipset = pure goodness.

yyrkoon - Friday, May 11, 2007

SO, by your logic, just because a product does not meet your 'standard' ( which by the way seems to be based on 'un-logic' ), you would like to see a company that you do not like go under, thus rendering the company that you hold so dearly in your mind a monopoly.

Pray AMD never goes under, because if they do go away, your next system may cost you 5x as much, and may perform 5x worse, and there will be nothing you can do about it.

Cheers

TA152H - Friday, May 11, 2007

Not only that, but HP had more to do with the design than Intel.