The Road to Acquisition

The CPU and the GPU have been on this collision course for quite some time. Although we often refer to the CPU as a general purpose processor and the GPU as a graphics processor, the reality is that they are both general purpose. The GPU is merely a highly parallel general purpose processor that happens to be especially well suited to applications such as 3D gaming. As the GPU became more programmable, and thus more general purpose, its highly parallel nature became interesting to new classes of applications: scientific computing, for example, is now within the realm of possibility for execution on a GPU.

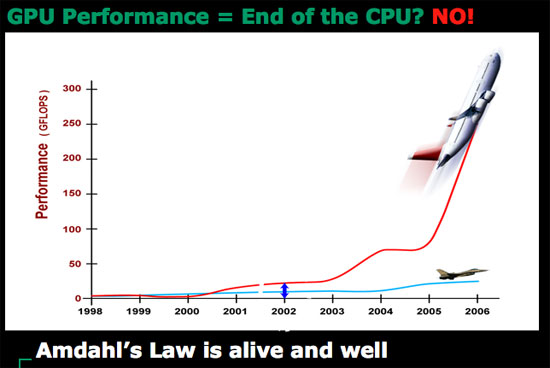

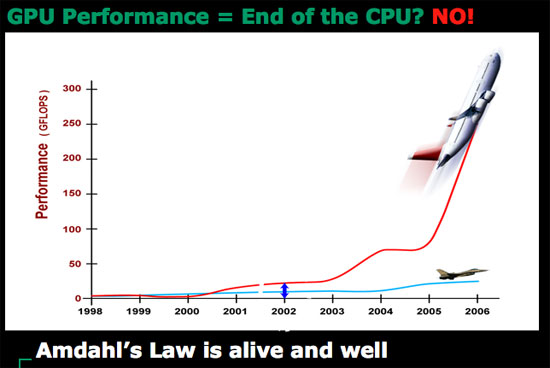

Today's GPUs are vastly superior to what we currently call desktop CPUs when it comes to 3D gaming, video decoding and a lot of HPC applications. The problem is that a GPU is fairly worthless at sequential tasks, so it relies on a fast host CPU to handle everything other than what it's good at.

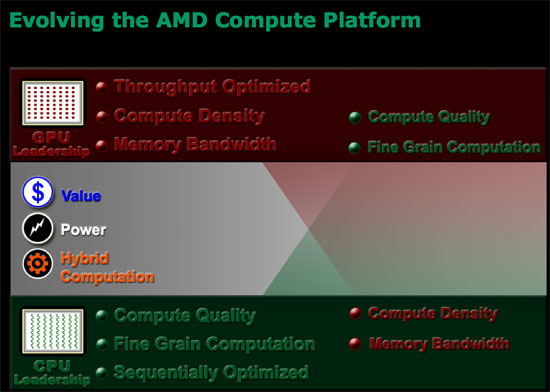

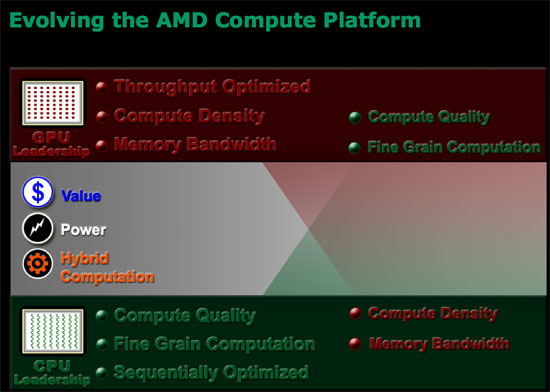

ATI realized that in the long term, as the GPU grows more powerful, it will eventually be bottlenecked by its ability to do high-speed sequential processing. In the same vein, the CPU will eventually be bottlenecked by its ability to do highly parallel processing. In other words, GPUs need CPUs and CPUs need GPUs for all workloads going forward. Neither approach alone will solve every problem and run every program optimally; the combination of the two is what is necessary.
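As a rough illustration of why neither processor is enough on its own, here is a toy timing model in Python. It assumes a workload splits cleanly into a sequential part and a parallel part that run one after the other. Every speed figure (the `CPU` and `GPU` dictionaries) and every function name is a hypothetical placeholder of ours, not a measurement of any real ATI or AMD part.

```python
# Toy timing model: a workload made of a sequential part and a parallel part.
# Every speed figure below is hypothetical and relative to a baseline core (1.0).

def run_time(serial_work, parallel_work, serial_speed, parallel_speed):
    """Time to finish: the sequential part runs at serial_speed, then the
    parallel part runs at parallel_speed (no overlap between the two)."""
    return serial_work / serial_speed + parallel_work / parallel_speed

# Hypothetical processors: speed on sequential code, throughput on parallel code.
CPU = {"serial": 1.0, "parallel": 4.0}    # strong single thread, a few cores
GPU = {"serial": 0.25, "parallel": 64.0}  # weak single thread, wide parallelism

def compare(serial_work, parallel_work):
    """Return run times for (CPU only, GPU only, CPU for serial + GPU for parallel)."""
    cpu_only = run_time(serial_work, parallel_work, CPU["serial"], CPU["parallel"])
    gpu_only = run_time(serial_work, parallel_work, GPU["serial"], GPU["parallel"])
    combined = run_time(serial_work, parallel_work, CPU["serial"], GPU["parallel"])
    return cpu_only, gpu_only, combined

for serial, parallel in [(90, 10), (50, 50), (1, 99)]:
    cpu_only, gpu_only, combined = compare(serial, parallel)
    print(f"serial={serial:>2} parallel={parallel:>2}  "
          f"CPU only={cpu_only:7.2f}  GPU only={gpu_only:7.2f}  "
          f"CPU+GPU={combined:7.2f}")
```

With these made-up numbers, the CPU alone wins the mostly sequential and 50/50 mixes, and the GPU alone wins the almost entirely parallel mix. The pairing beats both in every case, because it runs each part on the engine that is faster at it.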

ATI originally came to this realization while looking at the possibilities for using its GPUs for general purpose computing (GPGPU), even before AMD began talking to ATI about a potential acquisition. ATI's Bob Drebin (formerly the CTO of ATI, now CTO of AMD's Graphics Products Group) told us that as he began looking at the potential of ATI's GPUs, he realized that ATI needed a strong sequential processor.

We wanted to know how Bob's team solved the problem, because obviously they had to come up with a solution other than "get acquired by AMD". Bob didn't answer the question directly, but he did tell us, with a smile, that ATI tried to pair its GPUs with as low-power a sequential processor as possible and always ran into the same problem: the sequential processor became the bottleneck. In the end, Bob believes that the AMD acquisition made the most sense because the new company can combine a strong sequential processor with a strong parallel processor, eventually integrating the two on a single die. We really wanted to know what ATI's "plan B" would have been had the acquisition not worked out, because we suspect it would have looked very similar to what NVIDIA has planned for its future.
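The bottleneck Bob describes is essentially Amdahl's law with an extra term for the speed of the host processor. As a rough sketch (the symbols are ours, not ATI's), let $s$ be the fraction of a program's baseline run time that is sequential, $c$ the speed of the sequential processor relative to that baseline, and $p$ the speedup the GPU delivers on the parallel portion:

$$
S(p) = \frac{1}{\dfrac{s}{c} + \dfrac{1-s}{p}}, \qquad \lim_{p \to \infty} S(p) = \frac{c}{s}
$$

However fast the GPU gets, the overall speedup can never exceed $c/s$. With a hypothetical $s = 0.1$, a host running at half the baseline speed ($c = 0.5$) caps the whole system at 5x, while a host at full baseline speed ($c = 1$) raises the ceiling to 10x.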

To understand the point of combining a highly sequential processor like a modern desktop CPU with a highly parallel GPU, you have to look beyond the gaming market to what AMD is calling stream computing. AMD sees a number of potential applications that will require a very GPU-like architecture, some of which we already see today. Simply watching an HD DVD movie can eat up almost 100% of some of the fastest dual core processors today, while a GPU can perform the same decoding task with much better power efficiency. H.264 encoding and decoding are perfect examples of tasks that are better suited to highly parallel architectures than to the ones desktop CPUs are currently built on. But just as video processing is important, so are general productivity tasks, which is where we need the strengths of present day out-of-order superscalar CPUs. A combined architecture that excels at both types of applications is clearly a direction desktop CPUs need to take in order to remain relevant in future applications.

Future applications will readily combine stream computing with more sequential tasks, and we already see some of that now with web browsers. Imagine browsing a site like YouTube, except that all of the content is much higher quality and requires far more CPU (or GPU) power to play. You need the strengths of a high powered sequential processor to deal with everything other than the video playback, but you need the strengths of a GPU to actually handle the video. This example is oversimplified, as it is very difficult to predict the direction software will take when given even more processing power; the point is that CPUs will inevitably have to merge with GPUs in order to handle these types of applications.

55 Comments

strikeback03 - Friday, May 11, 2007

This implies that actual performance numbers would make Barcelona more visible. But to factor into a buying decision, people have to know Barcelona is coming, and anyone who knows that can probably guess it will be a significant step forward, based on its need to compete with Intel. So either you don't know Barcelona is coming, in which case performance numbers don't matter, or you do know it is coming, in which case the only reason to buy AMD before then is because it's cheap.

At least they stated that the new processors will be usable in the AM2 motherboards.

TA152H - Friday, May 11, 2007

You are using pretzel logic here. If you know Barcelona is a significant step forward, why do you need the results posted beforehand?

Actually, performance numbers would make Barcelona more visible, and if it were better than expected, you'd kill current sales. You can speculate on performance, but you really don't know. The only place you'd really want people to know beforehand is the server market, because people plan these purchases. And guess what? AMD released those numbers, and they were pretty high.

It's also completely different to know something is coming out and guess at the performance than to actually see the numbers and, from that, be thoroughly disgusted with the performance. I could live with any of the processors today, but once I see one get raped by the next generation, I don't want it. It hits you on a visceral level, and after that, it's difficult to go back to it. Put another way, say there is a girl you can go out with today who's fairly attractive and would certainly add to your life. You could wait for one who will be more attractive later on, but you don't really need to, since this one is more than adequate. Now say you see this bombshell. Do you think you'd really want to go back to the one who wasn't so attractive?

We're human, we respond to things on an emotional level even when we know we shouldn't. The head never wins against the heart. I'm not sure that's a bad thing either, life would be so uninteresting were it not so.

blppt - Friday, May 11, 2007

"AMD's reasoning for not disclosing more information today has to do with not wanting to show all of its cards up front, and to give Intel the opportunity to react."

Come on... I'm sure Intel already has a pretty good idea of what they are up against. I'm sure Intel has access to information on their competitors that the general tech public doesn't.

michal1980 - Friday, May 11, 2007

All they said is there is new stuff coming. Trust me, if the CPUs they had right now were beating the pants off of Intel, they would post the numbers. I'm not saying give us the frequency the CPU runs at. But if they knew that games ran 50% faster, they would at least hint at it.

Nice things: looks like the new mobo chip runs cool; look at how small the HSFs are on those chips.

Not nice: how hot are these new CPUs? Look at all those fans, it's like a tornado in the case.

Not nice: no DATES? All that means is it's even easier to push things back. Winter 2007 becomes early 2008.

Ard - Friday, May 11, 2007

Excellent article as always, Anand. It's nice to finally get some info on AMD and find out that they're not throwing in the towel just yet. Some performance numbers would've been nice, but I guess you can't have everything. I did have to laugh at the slide that said S939 will continue to be supported throughout 2007, though, considering you can't even buy new S939 CPUs.

Beenthere - Friday, May 11, 2007

It's a known fact that Intel has had to try to copy the best features of AMD's products to catch up in performance to AMD. Funny how when Intel was secretive and blackmailing consumers for 30 years that was fine, but when AMD doesn't give away all of their upcoming product technical info for Intel to copy, that's not good, according to some. With Intel being desperate to generate sales for their non-competitive products over the past 2-3 years, they decided to really manipulate the media, and it's worked. The once secretive Intel is the best friend a hack can find these days. They'll tell a hack anything to get some form of media exposure.

I find AMD's release of info just fine. If it were not for AMD, all consumers would be paying $1000 for a Pentium 90 CPU today and that would be the fastest CPU you could buy. People tend to forget all that AMD has done for consumers. The world would be a lot worse off than it is if it were not for AMD stepping up to the plate to take on the bully from Satan Clara.

Many in the media are shills, and most of the media is manipulated by unscrupulous companies like Intel, Asus, and a long list of others. Promise a hack some "inside info" or an insider's tour so they can get a scoop, or a prototype piece of hardware that has been massaged for better performance than the production hardware, and the fanboy hacks will write glowing opinions about a company's products and chastise the competition every chance they get.

Unfortunately what was once a useful service - honest product reviews -- is now a game of shilling for dollars. You literally can't believe anything reported at 99% of websites these days because it's usually slanted based on which way the money flows... It's no secret that Intel and MICROSUCKS are more than willing to lubricate the wheels of the ShillMeisters to get favorable tripe.

TA152H - Friday, May 11, 2007

Beenthere, what are you talking about? Intel invented the microprocessor (4004), invented the instruction set used today (8086) and has been getting copied by AMD for years.

The Athlon was certainly nothing to copy, you could just as easily say they copied the Pentium III (and did a bad job of it, whereas the Core is much better than the Athlon). What's so unique about the Athlon that could be copied anyway? It's a pretty basic design. It worked OK, I guess, but the performance per watt was always poor until the Pentium 4 came around and redefined just what poor meant.

x86-64 is straightforward, and you can be sure Microsoft designed most of it. I'm not saying this as anything bad about AMD, because who better to design the instruction set than Microsoft? Intel and Microsoft do enough software to understand what is best, AMD is allergic to software, so I think this is a good thing.

I agree, only slightly, that these review sites are ass-kissers by nature, because they need good relationships with the makers. I doubt they are getting kick-backs, but say Anand is more honest with his opinions (he always is about a lousy product, after the company comes out with a good one), he'd get cut off from some information or products from that same company. So, they kiss ass because if they write scalding and honest reviews they lose out and can't function as an information site as well. I don't like it, but can you blame him? In his situation, you'd have to do exactly the same thing - give a review in a delicate way without offending the hand that feeds you, but trying to get your point across anyway with the factual data. Tom Pabst was funny as Hell in his old reviews, he took a devil may care attitude, but nowadays even that site has accepted the reality of being on good terms with technology companies whenever possible. In the long run, it's worth it.

Viditor - Saturday, May 12, 2007

Actually, most of the work was done at Fairchild Semiconductor...that's where both Gordon Moore (founder of Intel) and Jerry Sanders (founder of AMD) worked together.

Moore left FS in 1968 to form Intel (along with Bob Noyce) and Sanders left in 1969 to form AMD.

Intel began as a memory manufacturer, but Busicom contracted them to create a 4-bit CPU chip set architecture that could receive instructions and perform simple functions on data. That CPU became the 4004 microprocessor... Intel bought back the rights from Busicom for US$60,000.

Interestingly, TI had a system on a chip come out at the same time, but they couldn't get it to work properly so Intel got the money (and the credit).

You're kidding, right??

1. Athlon had vastly superior FP because of its super-pipelined, out-of-order, triple-issue floating point unit (it could operate on more than one floating point instruction at once)

2. Athlon had the largest level 1 cache in x86 history

3. When it was first launched, it showed superior performance compared to the reigning champion, Pentium III, in every benchmark

4. Three generalized CISC to RISC decoders

5. Nine-issue superscalar RISC core

Just look at the reviews during release (you might think they're similar to the C2D reviews...)

Aces Hardware: http://www.aceshardware.com/Spades/read.php?articl...

That's just silly... while I'm sure MS had plenty of input, there are no chip architects on their staff that I'm aware of (in other words, nobody there COULD design it).

It's like saying that when a Pro driver gives feedback to the engineers on what he wants, he's the one who designed the car...don't think so.

TA152H - Sunday, May 13, 2007

What's your point about the 4004? You're giving commonly known information that in no way changes the fact that Intel invented the first microprocessor. It wasn't for themselves, initially, but it was their product. AMD didn't create it, and they didn't create the other microprocessors for which they were a second source to Intel. Look at their first attempt at their own x86 processor to see how good they were at it, the K5. It was late, slower than expected, and created huge problems for Compaq, which had bet on them. Jerry Sanders was smart enough to buy NexGen after that.

You are clearly clueless about microprocessors if you think any of those things you mention about the Athlon are in any way anything but basic.

The largest L1 cache is a big difference??? Why that's a real revolution there!!!! They made a bigger cache! Holy Cow! Intel still hasn't copied that, by the way, so even though it's nothing innovative, it was still never copied.

The FP unit was NOT the first pipelined one, the Pentium was and the Pentium Pro line was also pipelined, or superpipelined as you misuse the word. Do you know what superpipelined even means? It means lots of stages? Are you implying the Athlon was better in floating point because it had more floating point stages? Are you completely clueless and just throwing around words you heard?

Wow, they had slightly better decoding than the P6 generation!!!! Wow, that's a real revolutionary change.

You're totally off on this. They did NOTHING new on it, it was four years later than the Pentium Pro, and barely outperformed it, and in fact was surpassed by the aging P6 architecture when the Coppermine came out. It was much bigger, used much more power, and had relatively poor performance for the size and power dissipation. The main problem with the P6 was the memory bandwidth too, if it had the Athlon's it would have raped it, despite being much smaller. I don't really call that a huge success. Although, it does have to be said the Athlon was capable of higher clock speeds on the same process. Still, it was hardly an unqualified success like the Core 2, which is good by any measure.

The Core 2 is MUCH faster than the Athlon 64, and isn't a much larger and much more power hungry beast. In fact, it's clearly better in power/performance than the Athlon 64. The Athlon was dreadful in this regard.

I was talking about the instruction set with regard to Microsoft, which should have been obvious since x86-64 is an instruction set, not an architecture. And yes, they did design most of it, if not all. Ask someone from Microsoft, and even if you don't know one, use some common sense. Microsoft writes software, and compilers, and has to work with the instruction set. They are naturally going to know what works best and what they want, and AMD has absolutely no choice but to do what Microsoft says to do. Microsoft is holding a Royal Flush, AMD has a nine high. Microsoft withholding support for x86-64 would have made it as meaningless as 3DNow! They knew it, AMD knew it, and Microsoft got what they wanted. Anything else is fiction. Again, use common sense.

hubajube - Friday, May 11, 2007

Dude, WTF are YOU talking about? Allergic to software? Is that an industry phrase? YOU have NO idea what AMD did or didn't do in regards to X86-64 so how can you even make a comment on it?