DX10 for the Masses: NVIDIA 8600 and 8500 Series Launch

by Derek Wilson on April 17, 2007 9:00 AM EST - Posted in

- GPUs

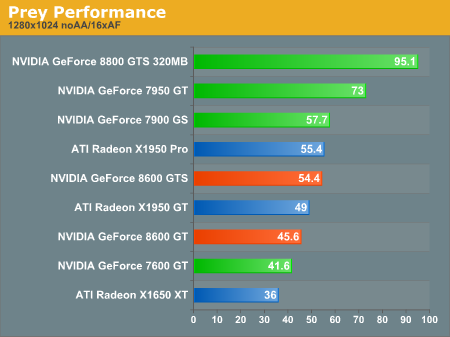

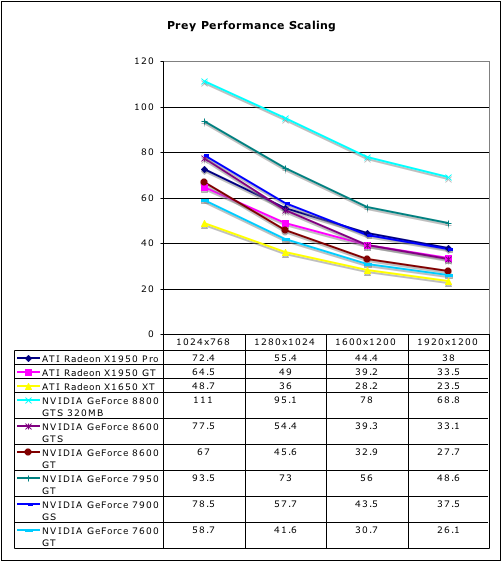

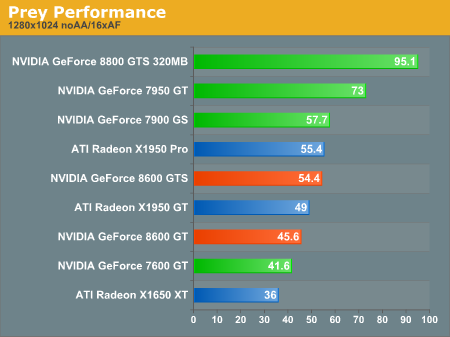

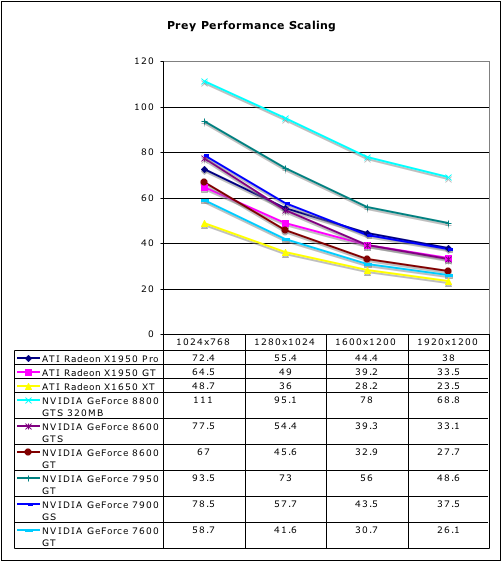

Prey Performance

This OpenGL game represents the Doom 3 engine and is heavy on texture and z/stencil operations. Quite a few factors could cause this game to run poorly on G84 (G80 clearly has no real issues here). The reduced memory bandwidth and the smaller number of ROPs (which means fewer z/stencil operations per clock) are the likely culprits, but without more information it is hard to pin down exactly why performance is this poor.
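The two suspects named above reduce to simple arithmetic: peak memory bandwidth is the bus width in bytes times the effective memory data rate, and z/stencil throughput scales with the number of ROPs times the core clock. The sketch below compares the 8600 GTS with the 8800 GTX using assumed reference-board figures (the core clocks, ROP counts, bus widths, and memory data rates are not taken from this page and should be checked against a spec sheet). It also assumes both G8x parts handle the same number of z/stencil samples per ROP per clock, so only the ROPs-times-clock product is compared; `BoardSpec` and the helper functions are hypothetical names for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BoardSpec:
    """Hypothetical container for reference-board figures (assumed values)."""
    name: str
    core_mhz: float      # core/ROP clock in MHz
    rops: int            # number of raster operation units
    bus_width_bits: int  # memory interface width in bits
    mem_mts: float       # effective memory data rate in MT/s (DDR already doubled)


def peak_bandwidth_gbs(spec: BoardSpec) -> float:
    # Bytes moved per transfer times transfers per second, in decimal GB/s.
    return spec.bus_width_bits / 8 * spec.mem_mts / 1000


def rop_clock_product(spec: BoardSpec) -> float:
    # ROPs x clock in millions of ROP-clocks per second. Multiplying by the
    # z/stencil samples each ROP handles per clock would give a fill rate; that
    # per-ROP factor is assumed equal for both G8x parts and left out.
    return spec.rops * spec.core_mhz


# Assumed reference specs, for illustration only.
gts_8600 = BoardSpec("8600 GTS", core_mhz=675, rops=8, bus_width_bits=128, mem_mts=2000)
gtx_8800 = BoardSpec("8800 GTX", core_mhz=575, rops=24, bus_width_bits=384, mem_mts=1800)

if __name__ == "__main__":
    for spec in (gts_8600, gtx_8800):
        print(f"{spec.name}: {peak_bandwidth_gbs(spec):.1f} GB/s, "
              f"{rop_clock_product(spec):.0f} M ROP-clocks/s")
    ratio = rop_clock_product(gts_8600) / rop_clock_product(gtx_8800)
    print(f"8600 GTS / 8800 GTX ROP-clock ratio: {ratio:.2f}")
```

Under those assumed numbers the 8600 GTS has roughly 37% of the 8800 GTX's peak bandwidth (32.0 vs. 86.4 GB/s) and about 39% of its ROP-clock throughput. Either shortfall could plausibly hurt a z/stencil-heavy engine, but the arithmetic alone cannot say which one dominates.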

The 8600 GTS keeps up with its competition from AMD but lags well behind the 7950 GT. The 8600 GT can't hold its own against current $150 offerings, but it does at least stay ahead of the 7600 GT, which held the $150 price point for quite a while.

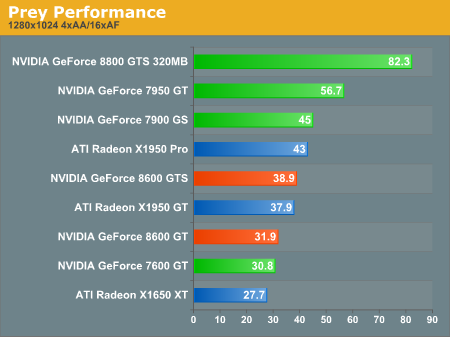

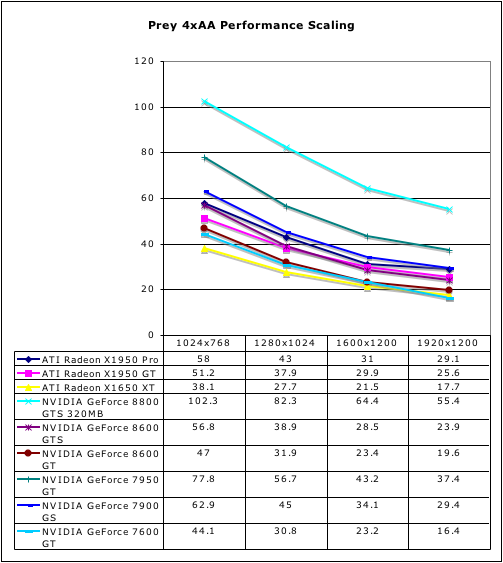

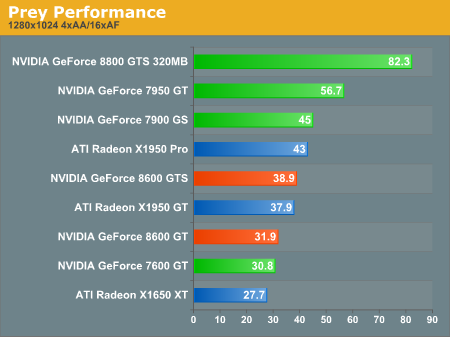

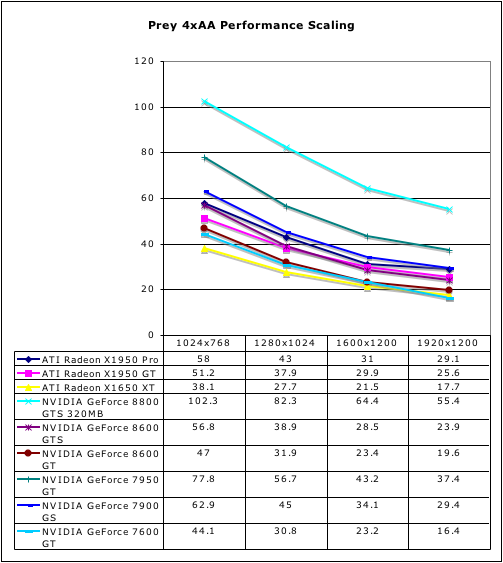

Both 8600 cards drop off more sharply than other hardware when AA is enabled. Neither of our early-generation DX9 games paints an attractive picture of the 8600 hardware. Let's take a look at our final test to round things out.

60 Comments

kilkennycat - Tuesday, April 17, 2007

(As of 8 AM Pacific Time, April 17) See:

http://www.zipzoomfly.com/jsp/ProductDetail.jsp?Pr...

http://www.zipzoomfly.com/jsp/ProductDetail.jsp?Pr...

Chadder007 - Tuesday, April 17, 2007

That's really not too bad for a DX10 part. I just wish we actually had some DX10 games to see how it performs, though.

bob4432 - Tuesday, April 17, 2007

That performance is horrible. Everyone here is pretty dead on: this is strictly for marketing to the non-educated gamer. Too bad they will be disappointed and probably return such a piece of sh!t item. What a joke. Come on, ATI, this kind of performance should be in the low-end cards; this is not a mid-range card. Maybe if NVIDIA sold them for $100-$140 they might end up in somebody's HTPC, but that is about all they are good for.

Glad I have a 360 to ride out this phase of cards while my X1800 XT still works fine for my duties.

If I were upper management at NVIDIA, people would be fired over this horrible performance, but sadly upper management is more than likely the cause of this joke of a release.

AdamK47 - Tuesday, April 17, 2007

nVidia needs to have people with actual product knowledge dictate the specifications of future products. This disappointing lineup has marketing written all over it. They need to wise up or they will end up like Intel and its failed, marketing-derived NetBurst architecture.

wingless - Tuesday, April 17, 2007

In the article they talk about the PureVideo features as if they are brand new. Does this mean they ARE NOT implemented in the 8800 series? The article talked about how 100% of the video decoding process is handled on the GPU, but it did not mention the 8800 core, which worries the heck outta me. Also, does the G84 have CUDA capabilities?

DerekWilson - Tuesday, April 17, 2007

CUDA is supported.

DerekWilson - Tuesday, April 17, 2007

The 8800 series supports PureVideo HD the same way the GeForce 7 series does: through VP1 hardware. The 8600 and below support PureVideo HD through VP2 hardware, the BSP, and other enhancements that allow 100% offload of decode.

While the 8800 is able to offload much of the process, it's not 100% like the 8600/8500. Both support PureVideo HD, but G84 does it with lower CPU usage.

wingless - Tuesday, April 17, 2007

I just checked NVIDIA's website and it appears only the 8600 and 8500 series support PureVideo HD, which sucks balls. I want 8800 GTS performance with PureVideo HD support. Guess I'll have to wait a few more months, or go ATI, but ATI's future isn't stable these days.

defter - Tuesday, April 17, 2007

Why do you want 8800 GTS performance with improved PureVideo HD support? Are you going to pair an 8800 GTS with a $40 Celeron? The 8800 GTS has more than enough power to decode H.264 at HD resolutions as long as you pair it with a modern CPU: http://www.anandtech.com/printarticle.aspx?i=2886

This improved PureVideo HD is aimed at low-end systems that use a low-end CPU. That's why this feature is important for low/mid-range GPUs.

wingless - Tuesday, April 17, 2007

If I'm going to spend this kind of money on an 8800 series card, then I want VP2 100% hardware decoding. Is that too much to ask? I want all the extra bells and whistles. Damn, I may have to go ATI for the first time since 1987, when I had that EGA Wonder.