Power Within Reach: NVIDIA's GeForce 8800 GTS 320MB

by Derek Wilson on February 12, 2007 9:00 AM EST. Posted in GPUs

The 8800 GTS 320MB and The Test

Normally, when a new part is introduced, we would spend some time talking about the number of pipelines, compute power, bandwidth, and all the other juicy bits of hardware goodness. But this time around, all we need to do is point back to our original review of the G80. Absolutely the only difference between the original 8800 GTS and the new 8800 GTS 320MB is the amount of RAM on board.

The GeForce 8800 GTS 320MB uses the same number of 32-bit wide memory modules as the 640MB version (grouped in pairs to form five 64-bit wide channels). The difference is in density: the 640MB version uses ten 64MB modules, whereas the 320MB version uses ten 32MB modules. That makes it a little easier for us, as all the processing power, features, theoretical peak numbers, and the like stay the same. It also makes it very interesting, as we have a direct comparison point from which to learn just how much impact that extra 320MB of RAM has on performance.
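For readers who want to check the memory arithmetic, here is a minimal sketch in Python. The module counts, widths, and densities come from the paragraph above; the function and variable names are our own, chosen purely for illustration.

```python
# Memory layout arithmetic for the two 8800 GTS variants.
# Figures are taken from the text; names are illustrative only.
MODULE_WIDTH_BITS = 32    # each memory module is 32 bits wide
MODULE_COUNT = 10         # both cards carry ten modules
MODULES_PER_CHANNEL = 2   # modules are paired into 64-bit channels


def memory_layout(module_size_mb):
    """Return channel count, bus width, and capacity for a given module density."""
    channels = MODULE_COUNT // MODULES_PER_CHANNEL
    channel_width_bits = MODULE_WIDTH_BITS * MODULES_PER_CHANNEL
    return {
        "channels": channels,
        "channel_width_bits": channel_width_bits,
        "bus_width_bits": channels * channel_width_bits,
        "capacity_mb": MODULE_COUNT * module_size_mb,
    }


gts_640 = memory_layout(64)   # ten 64MB modules
gts_320 = memory_layout(32)   # ten 32MB modules

for name, layout in (("640MB", gts_640), ("320MB", gts_320)):
    print(name, layout)
```

Both cards end up with the same five channels and the same total bus width; only the capacity differs, which is why the two parts make such a clean comparison.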

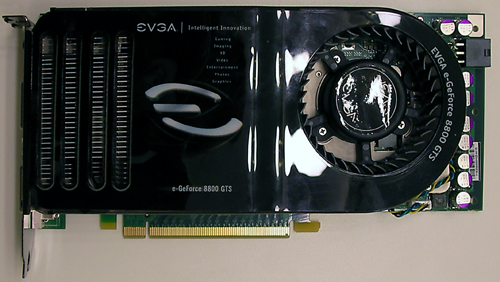

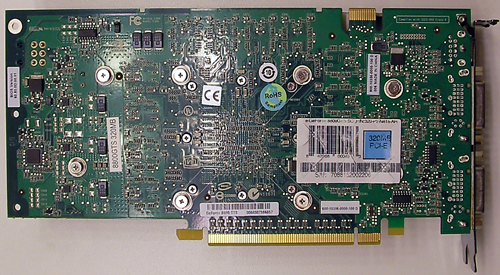

Here's a look at the card itself. There really aren't any visible differences in the layout or design of the hardware. The only major difference is the use of the traditional green PCB rather than the black of the recent 8800 parts we've seen.

Interestingly, our EVGA sample was overclocked quite high. Core and shader speeds were at 8800 GTX levels, and memory weighed in at 850MHz. In order to test the stock speeds of the 8800 GTS 320MB, we made use of software to edit and flash the BIOS on the card. The 576MHz core and 1350MHz shader clocks were set down to 500MHz and 1200MHz, respectively, and memory was adjusted down to 800MHz as well. This isn't something we recommend people run out and try, as we almost trashed our card a couple of times, but it got the job done.
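To put the factory overclock in perspective, the short Python sketch below works out how far each shipped clock sits above stock. The clock values are the ones quoted above. The bandwidth helper is an assumption on our part: it treats the memory as double data rate (two transfers per clock) on the 320-bit bus implied by five 64-bit channels, and all names are illustrative.

```python
# Clocks as shipped on the EVGA sample versus the stock clocks we flashed to (MHz).
SHIPPED = {"core": 576, "shader": 1350, "memory": 850}
STOCK = {"core": 500, "shader": 1200, "memory": 800}


def overclock_percent(shipped, stock):
    """Percentage by which each shipped clock exceeds its stock value."""
    return {
        name: (shipped[name] - stock[name]) / stock[name] * 100
        for name in stock
    }


def peak_bandwidth_gb_s(memory_mhz, bus_width_bits=320, transfers_per_clock=2):
    """Theoretical peak memory bandwidth in GB/s.

    Assumption: double data rate memory (two transfers per clock) on a
    320-bit bus, i.e. five 64-bit channels.
    """
    bytes_per_transfer = bus_width_bits / 8
    return memory_mhz * 1e6 * transfers_per_clock * bytes_per_transfer / 1e9


oc = overclock_percent(SHIPPED, STOCK)
for name, pct in oc.items():
    print(f"{name}: {STOCK[name]} -> {SHIPPED[name]} MHz (+{pct:.2f}%)")

stock_bw = peak_bandwidth_gb_s(STOCK["memory"])
shipped_bw = peak_bandwidth_gb_s(SHIPPED["memory"])
print(f"peak memory bandwidth: {stock_bw:.1f} GB/s stock, {shipped_bw:.1f} GB/s as shipped")
```

The core clock carries the largest bump, so results from the card as shipped would overstate what a stock 8800 GTS 320MB can do.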

The test system is the same as we have used in our recent graphics hardware reviews:

| System Test Configuration | |
| --- | --- |
| CPU: | Intel Core 2 Extreme X6800 (2.93GHz/4MB) |
| Motherboard: | EVGA nForce 680i SLI |
| Chipset: | NVIDIA nForce 680i SLI |
| Chipset Drivers: | NVIDIA nForce 9.35 |
| Hard Disk: | Seagate 7200.7 160GB SATA |
| Memory: | Corsair XMS2 DDR2-800 4-4-4-12 (1GB x 2) |
| Video Card: | Various |
| Video Drivers: | ATI Catalyst 7.1; NVIDIA ForceWare 93.71 (G7x); NVIDIA ForceWare 97.92 (G80) |
| Desktop Resolution: | 2560 x 1600 - 32-bit @ 60Hz |
| OS: | Windows XP Professional SP2 |

55 Comments


A5 - Monday, February 12, 2007 - link

People with a 19" monitor aren't going to drop $300+ on a video card. You can get a X1950 Pro for $175 that can handle 1280x1024 in pretty much every game out today.

jsmithy2007 - Monday, February 12, 2007 - link

Are you high? I know plenty of people with 19 and 21" CRTs that use latest gen GPUs. These people are typically called "gamers" or "enthusiasts," perhaps you've heard of these terms. Even at moderate resolutions (1280x1024, 1600x1200), to run a game like Oblivion with all the eye candy turned on really does require a higher end GPU. Hell, I need 2 7800GTXs in SLI to just barely play with max settings at 1280x1024 while running 2xAA. Granted my GPUs are getting a little long in the tooth, but the point is still the same.

Omega215D - Monday, February 12, 2007 - link

Yes but the X1950 Pro doesn't do DirectX 10 and hopefully with the new unified shader architecture the 8800GTS won't be too obsolete when majority of the games shipping will be DX10.

I run a widescreen 19" monitor at 1440 x 900, for some reason my card can run games when I was at the 1280 x 1024 res but now games have become a little choppy in this resolution even though the pixel count is less... any idea why?

DerekWilson - Monday, February 12, 2007 - link

Non-standard resolutions can sometimes have an impact on performance depending on the hardware, game, and driver combination.

As far as DX10 goes, gamers who run 12x10 are best off waiting to upgrade to new hardware.

There will be parts that will perform very well at 12x10 while costing much less than $300 and providing DX10 support from both AMD and NVIDIA at some point in the future. At this very moment, DX10 doesn't matter that much, and dropping all that money on a card that won't provide any real benefit without a larger monitor or some games that really take advantage of the advanced features just isn't something we can recommend.

damsaddm - Tuesday, February 27, 2018 - link

Where is the download link? I found the link here: https://secondgeek.com/drivers/nvidia-geforce-8800... It is working...