Power Within Reach: NVIDIA's GeForce 8800 GTS 320MB

by Derek Wilson on February 12, 2007 9:00 AM EST - Posted in GPUs

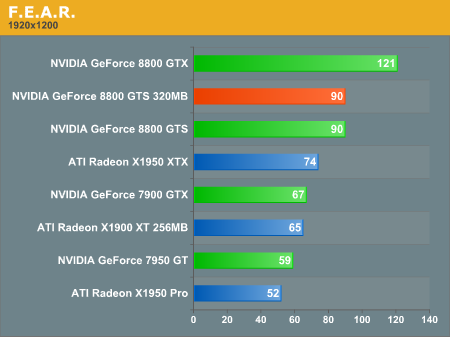

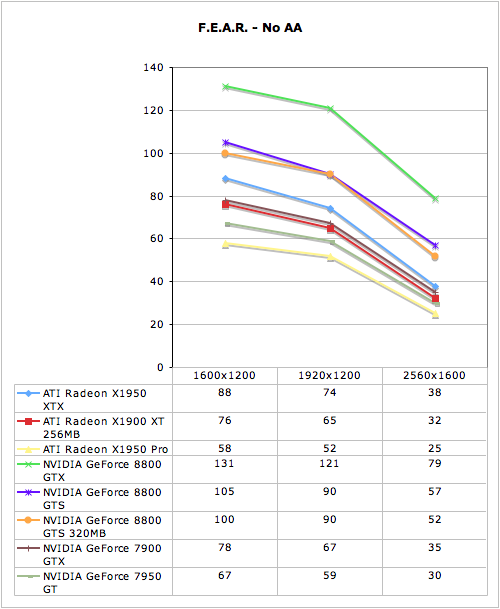

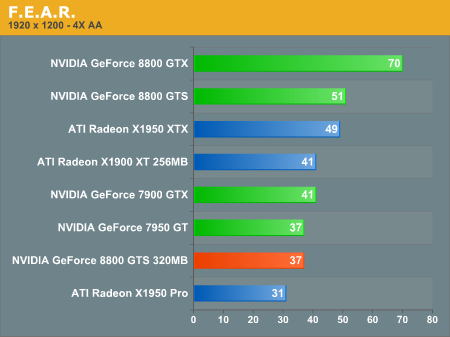

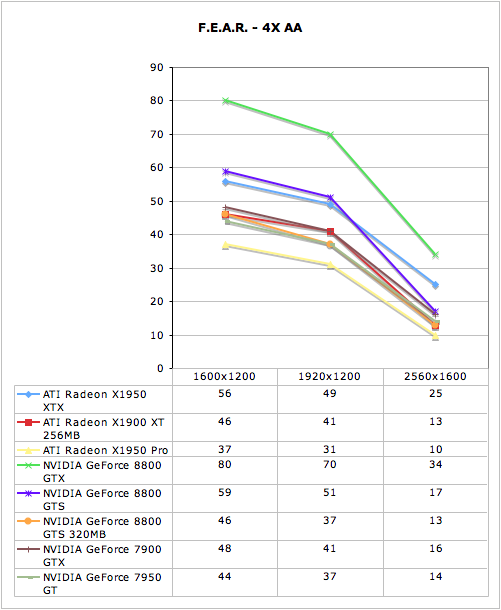

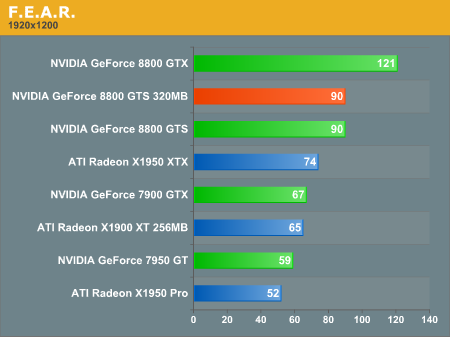

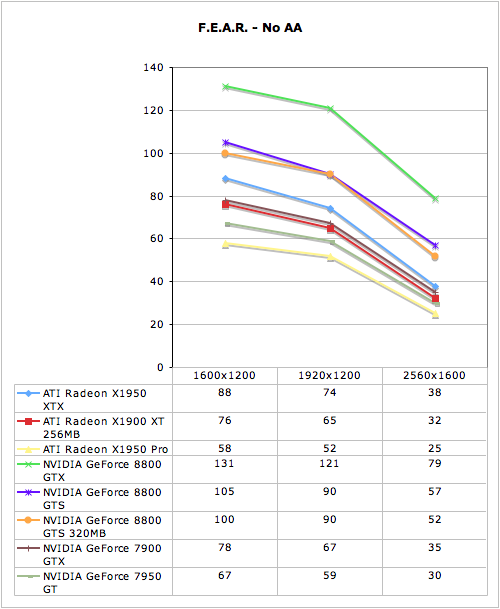

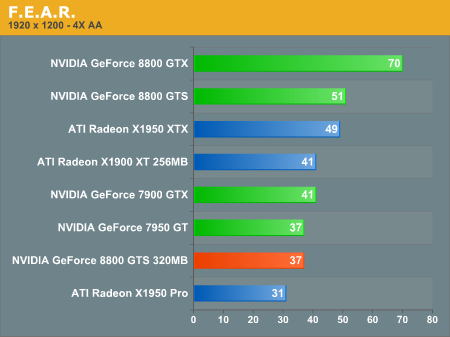

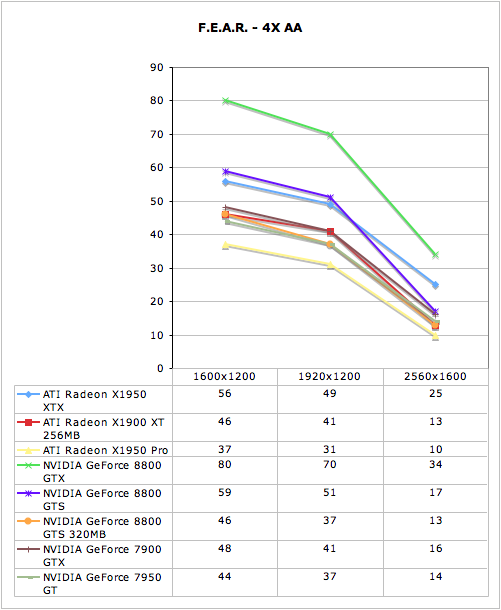

F.E.A.R. Performance

There is a built-in performance test in F.E.A.R. that provides some useful statistics, including average framerate. We run this test with all options at their maximum settings except Soft Shadows, which we disable because it incurs a very high performance penalty without delivering a convincing visual effect. All F.E.A.R. results use the 1.08 patch.

The 320MB 8800 GTS easily keeps up with the 640MB version in F.E.A.R. when 4xAA is disabled; identical performance at 1920x1200 shows that memory size makes no difference here. As we've seen in other games, however, enabling AA increases memory usage and significantly reduces performance on the card with less memory.

With 4xAA enabled, the two GTS parts scale similarly, but the 320MB part performs much worse even at 1600x1200. Only our 8800 GTX is really playable at 2560x1600, but the 8800 GTS 320MB at least makes the grade at 1920x1200. A playable score at a fairly widely used resolution is good news for the majority of gamers, who don't own 30" monitors.

55 Comments

LoneWolf15 - Tuesday, February 13, 2007

I'm not running Vista (other than RC2 on a beta-testing box; my primary is XP), actually, just making a point. (Note: I bought the 8800 not because I had to have the latest and greatest, but because the 8800GTS offered reasonable performance at 1920x1200.) But I will say, as I've said to everyone else claiming "early adoption! early adoption!", that nVidia released the G80 last fall. Early November, actually. And when they did, the G80 cards from multiple vendors listed prominently on their boxes "Vista Ready", "DX10 Compatible", "Runs with Vista", and so on. This includes nVidia, who, up until last week (think the backlash had something to do with it?), posted prominently on their website: "nVidia: Essential for the best Windows Vista Experience".

We know that at this time those slogans are bollocks. SLI doesn't work right in Vista. There are lots of G80 driver bugs that might have been worked out during Vista's long gestation period from Beta 2 through all the release candidates (considering nVidia claims to have 5 years of development in the G80 GPU, one could argue they've had the time). The truth of the matter is that nVidia shouldn't have made promises about compatibility unless they already knew they had working drivers. They didn't, and they failed to get non-beta drivers out at the time of Windows Vista's release, breaking the promise on every single one of those boxes. The G80 is not currently Vista ready, and there are still some driver bugs even on XP; for a long list, check out nVnews.net's forums.

I believe that when you make a promise (and I believe nVidia did with their marketing of the card), you should make good on it. While I don't plan to adopt Vista for some time to come (maybe not even this year), that doesn't mean I'll give nVidia a free pass for failing to deliver on a promise they've made. Your post seems to insinuate I'm whining and pouting about this, and it also indicates why nVidia gets away with not keeping promises: for every one person who complains, there are two more who beat him/her up for being an early adopter, or not having a properly configured system, or for running Windows Vista.

maevinj - Monday, February 12, 2007

I'll trade you my 6800gt since you're having driver issues with your 8800.

SonicIce - Monday, February 12, 2007

How can the 8800 GTS 320MB be slower in BF2 than a 7900 GTX or 7950 GT when it has more capabilities and more memory? Isn't it better in every respect (including memory)? How could it possibly lose?

coldpower27 - Wednesday, February 14, 2007

It looks like the G80 memory optimizations are currently in their infancy. G80's performance scaling with memory size is the strangest I have seen in a long while for Nvidia hardware.

lopri - Monday, February 12, 2007

While I do understand the need for consistency in the graphs (especially with G7X series cards), everyone knows that Oblivion can be played with AA enabled on G80 (it's absolutely beautiful). HDR+AA is one of the main selling points of G80, and it's surprising that this feature hasn't been tested a little more. I'm quite confident the frame buffer size would make a bigger difference with AA enabled in Oblivion.

Also curious: why was Company of Heroes not tested? This game is, by far, the most memory-hogging game I've ever seen (to the extent that I suspect a memory leak bug). Company of Heroes at 1920x1200/4AA/16AF and up will use up to ~1GB of GRAPHICS MEMORY.

Avalon - Monday, February 12, 2007

I agree with Munky here. I'm completely surprised that the card's horrible AA performance was simply glossed over. With AA on in most of those games, the card couldn't even outpace a 7900GTX (and once or twice, an X1950Pro). That's pathetic. I don't know if it's the drivers or just the inherent nature of the architecture, but it seems like this card can't hold its own with less than 640MB of RAM.

What gamers are going to pay $300 for a card and NOT use AA? I think the only game the card could handle with AA without choking was HL2... so if that's all you play, meh. Otherwise, this card doesn't seem worth it.

LoneWolf15 - Monday, February 12, 2007

Your comment is even more astute when you consider that current 8800GTS 320MB cards are running $299 online, while 640MB cards can be had for around $365 (admittedly with some instant rebate magic).

If the price drops on the 320MB, I think it'll be a steal of a card, and it's certainly a bonus option for OEMs like Dell. But a $65 difference for double the RAM doesn't seem like a lot extra to pay.

JarredWalton - Monday, February 12, 2007

I truly think there's a lot to be done in the drivers right now. I think NVIDIA focused first on the 8800 GTX, with the GTS being something of an afterthought. However, the GTS was close enough in design that it didn't matter all that much. Now, with only 320MB of RAM, the new GTS cards are probably still running optimizations designed for a 768MB card, so they're not swapping textures when they should, or something along those lines. Just a hunch, but I really see no reason for the 320MB card to be slower than 256MB cards in several tests, other than poor drivers.

I don't think most of this would have occurred were it not for the combination of G80, Vista, and 64-bit driver support all arriving at around the same time. NVIDIA could have managed any one of those items individually, but with all three, something had to give. 64-bit drivers are only a bit different, but time was still taken away to work on them instead of just getting the drivers done. G80 apparently required a complete driver rewrite; couple that with Vista requiring another rewrite and you get at least four driver sets currently being maintained (G70 and earlier for XP and Vista, G80 for XP and Vista), plus 64-bit versions as well!

The real question now is how long it will take NVIDIA to catch up with the backlog of fixes and optimizations that need to be done. Or, more importantly, will they ever truly catch up, given that lesser G8x chips are most likely coming out in the next few months? I wouldn't be surprised to see GTS/320 performance finally get up to snuff when the new midrange cards launch, simply because NVIDIA will probably be spending more time optimizing for smaller amounts of RAM on those models.

code255 - Monday, February 12, 2007

I wonder how big the difference between the normal GTS and the GTS 320 will be in Enemy Territory: Quake Wars. That game's MegaTexture technology will probably need sh*tloads of GPU memory if you want to play at Ultra texture quality.

Btw, in Doom 3 / Quake 4, how big is the difference in memory requirements between High and Ultra quality? I heard that the textures in High mode look basically just as good as in Ultra.

OvErHeAtInG - Tuesday, February 13, 2007

As someone who still plays Quake 4: this is something that has bothered me in the last dozen AT GPU reviews, so I guess I'll gripe here.

What's with testing Quake 4 in Ultra mode? It's cool for academic purposes, but in the real world there is *NO* difference in visual quality between Ultra Quality and High Quality mode, whereas the framebuffer is approx. 3 times larger in Ultra mode, severely crippling cards with less than 512MB.

Not that it's a huge deal. I've used a 512MB card for almost a year now, and I run Quake 4 in High Quality mode. Why? It's far more efficient! Anyone who plays this game is going to prefer FPS over IQ, especially IQ that is more theoretical than noticeable.

You even allude to it in this article:

<q>The visual quality difference between High and Ultra quality in Quake 4 is very small for the performance impact it has in this case, so Quake 4 (or other Doom 3 engine game) fans who purchase the 8800 GTS 320MB may wish to avoid Ultra mode.</q>

Um, understatement?