HD Video Playback: H.264 Blu-ray on the PC

by Derek Wilson on December 11, 2006 9:50 AM EST. Posted in GPUs

X-Men: The Last Stand CPU Overhead

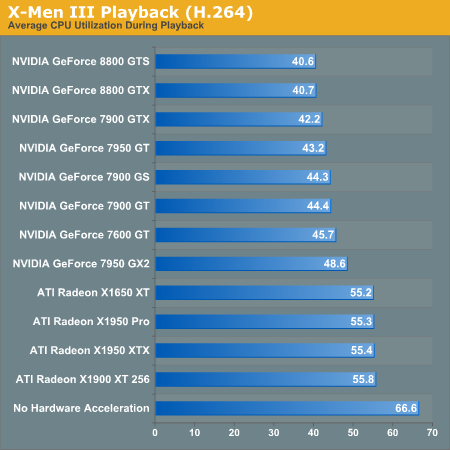

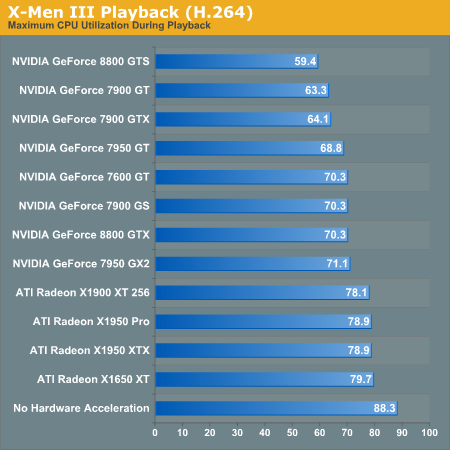

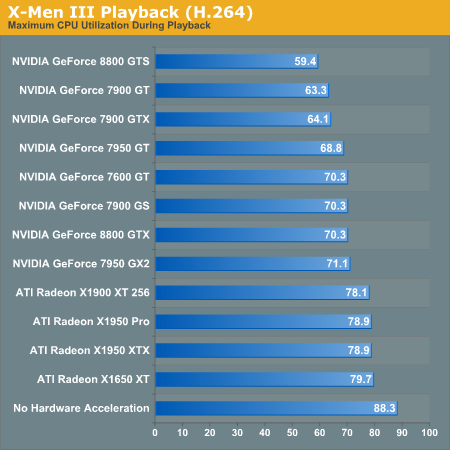

The first benchmark we will see compares the CPU utilization of our X6800 when paired with each one of our graphics cards. While we didn't test multiple variations of each card this time, we did test the reference clock speeds for each type. Based on our initial HDCP roundup, we can say that overclocked versions of these NVIDIA cards will see better CPU utilization. ATI hardware doesn't seem to benefit from higher clock speeds. We have also included CPU utilization for the X6800 without any help from the GPU for reference.

The leaders of the pack are the NVIDIA GeForce 8800 series cards. While the 7 Series hardware doesn't do as well, we can see that clock speed does affect video decode acceleration with these cards. It is unclear whether this will continue to be a factor with the 8 Series, as the results for the 8800 GTX and GTS don't show a difference.

ATI hardware is very consistent, but it just doesn't reduce CPU utilization as much as NVIDIA hardware does. This is different from what our MPEG-2 tests indicated. We do still see a marked improvement over our unassisted decode performance test, which is good news for ATI hardware owners.

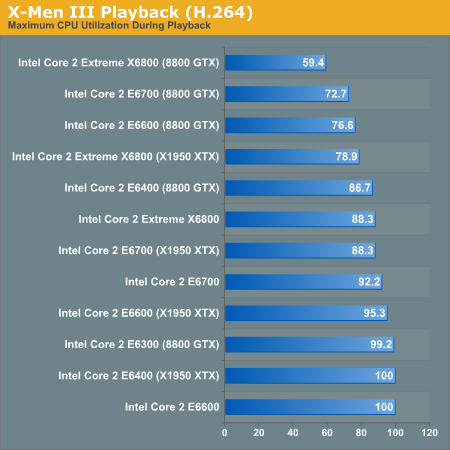

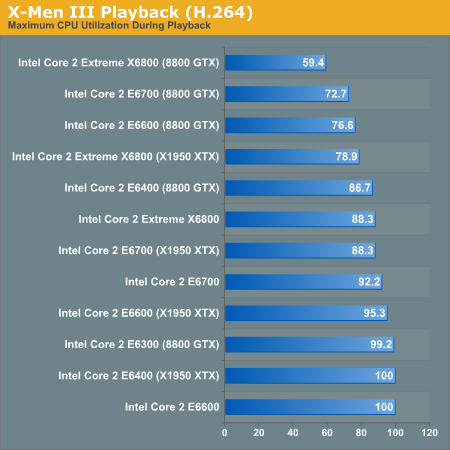

The second test we ran explores how different CPUs perform when decoding X-Men 3. We used NVIDIA's 8800 GTX and ATI's X1950 XTX in order to determine a best and worst case scenario for each processor. The following data isn't based on average CPU utilization, but on maximum CPU utilization. This will give us an indication of whether or not any frames have been dropped. If CPU utilization never hits 100%, we should always have smooth video. The analog to max CPU utilization in game testing is minimum framerate: both tell us the worst case scenario.
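To make the distinction between average and maximum utilization concrete, here is a minimal Python sketch. The function name `summarize_utilization`, the 100% saturation threshold as a parameter, and the sample values are all hypothetical and chosen for illustration; they are not measurements from our tests.

```python
from statistics import mean


def summarize_utilization(samples, saturation=100.0):
    """Summarize CPU utilization samples (in percent).

    Returns the average, the peak, and a flag that is True when the
    peak reached the saturation level, i.e. when frames may have dropped.
    """
    if not samples:
        raise ValueError("need at least one utilization sample")
    peak = max(samples)
    return {
        "average": mean(samples),
        "peak": peak,
        "may_drop_frames": peak >= saturation,
    }


# Hypothetical per-second utilization samples (percent), not measured data.
smooth = [62.0, 70.5, 81.0, 77.5, 68.0]
stressed = [62.0, 70.5, 100.0, 77.5, 68.0]

for name, samples in (("smooth", smooth), ("stressed", stressed)):
    print(name, summarize_utilization(samples))
```

The two runs have similar averages, but only the second one ever saturates the CPU. An average alone would hide that spike, which is why the peak is the more useful number here.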

While only the E6700 and X6800 are capable of decoding our H.264 movie without help, we can confirm that GPU decode acceleration will allow us to use a slower CPU in order to watch HD content on our PC. The X1950 XTX clearly doesn't help as much as the 8800 GTX, but both make a big difference.

86 Comments

charleski - Tuesday, December 19, 2006

The only conclusion that can be taken from this article is that PowerDVD uses a very poor h.264 decoder. You got obsessed with comparing different bits of hardware and ignored the real weak link in the chain - the software.

Pure software decoding of 1080-res h.264 can be done even on a PentiumD if you use a decent decoder such as CoreAVC or even just the one in ffdshow. You also ignored the fact that these different decoders definitely do differ in the quality of their output. PowerDVD's output is by far the worst to my eyes, the best being ffdshow closely followed by CoreAVC.

tronsr71 - Friday, December 15, 2006

The article mentions that the amount of decoding offloaded by the GPU is directly tied into core clock speed (at least for Nvidia)... If this is true, why not throw in the 6600GT for comparison?? They usually come clocked at 500 mhz stock, but I am currently running mine at 580 with no modifications or extra case cooling.

In my opinion, if you were primarily interested in Blu-Ray/HD-DVD watching on your computer or HTPC and gaming as a secondary pastime, the 6600GT would be a great inexpensive approach to supporting a less powerful CPU.

Derek, any chance we could see some benches of this GPU thrown into the mix?

balazs203 - Friday, December 15, 2006

Could somebody tell me what the framerate is of the outgoing signal from the video card? I know that the Playstation 3 can only output a 60 fps signal, but some standalone players can output 24 fps.

valnar - Wednesday, December 13, 2006

From the 50,000 foot view, it seems just about right, or "fair" in the eyes of a new consumer. HD-DVD and BluRay just came out. It requires a new set-top player for those discs. If you built a new computer TODAY, the parts are readily available to handle the processing needed for decoding. One cannot always expect their older PC to work with today's needs - yes, even a PC only a year old. All in all, it sounds about right.

I fall into the category as most of the other posters. My PC can't do it. Build a new one (which I will do soon), and it will. Why all the complaining? I'm sure most of us need to get a new HDCP video card anyway.

plonk420 - Tuesday, December 12, 2006

i can play a High Profile 1080p(25) AVC video on my X2-4600 at maybe 40-70 CPU max (70% being a peak, i think it averaged 50-60%) with CoreAVC...now the ONLY difference is my clip was sans audio and 13mbit (i was simulating a movie at a bitrate if you were to try to squeeze The Matrix onto a single layer HD DVD disc). i doubt 18mbit adds TOO much more computation...

plonk420 - Wednesday, December 13, 2006

http://www.megaupload.com/?d=CLEBUGGH

give that a try ... high profile 1080p AVC, with all CPU-sapping options on except for B-[frame-]pyramid.

it DOES have CAVLC (IIRC), 3 B-frames, 3 Refs, 8x8 / 4x4 Transform

Spoelie - Friday, April 20, 2007

CABAC is better and more cpu-sapping than CAVLC

Stereodude - Tuesday, December 12, 2006

How come the results of these tests are so different from [this PC Perspective review](http://www.pcper.com/article.php?aid=328&type=...)? I realize they tested HD-DVD, and this review is for Blu-Ray, but H.264 is H.264. Of note is that nVidia provided an E6300 and 7600GT to them to do the review with and it worked great (per the reviewer). Also very interesting is how the hardware acceleration dropped CPU usage from 100% down to 50% in their review on the worst case H.264 disc, but only reduced CPU usage by ~20% with a 7600GT in this review.

Lastly, why is nVidia [recommending](http://download.nvidia.com/downloads/pvzone/Checkl...) an E6300 for H.264 blu-ray and HD-DVD playback with a 7600GT if it's completely inadequate as this review shows?

DerekWilson - Thursday, December 14, 2006

HD-DVD movies even using H.264 are not as stressful. H.264 decode requirements depend on the bitrate at which video is encoded. Higher bitrates will be more stressful. Blu-ray disks have the potential for much higher bitrate movies because they currently support up to 50GB (high bitrate movies also require more space).

balazs203 - Wednesday, December 13, 2006

Maybe the bitrate of their disk is not as high as the bitrate of that part of XMEN III.

I would not say it is completely inadequate. According to the Anandtech review the E6300 with the 8800GTX could remain under 100% CPU utilisation even under the highest bitrate point (the 8800GTX and the 7600GT had the same worst case CPU utilisation in the tests).