NVIDIA's GeForce 8800 (G80): GPUs Re-architected for DirectX 10

by Anand Lal Shimpi & Derek Wilson on November 8, 2006 6:01 PM EST

Posted in: GPUs

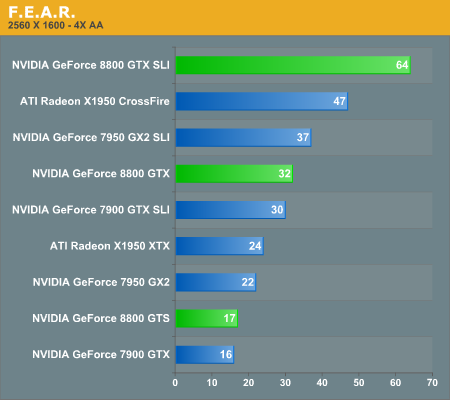

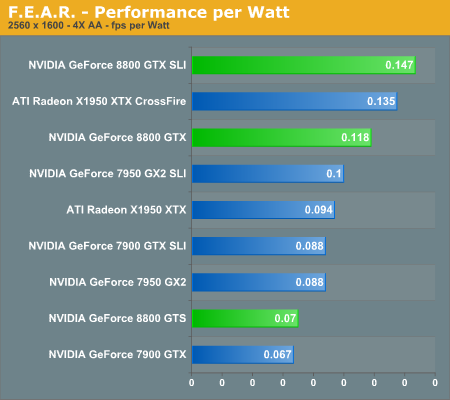

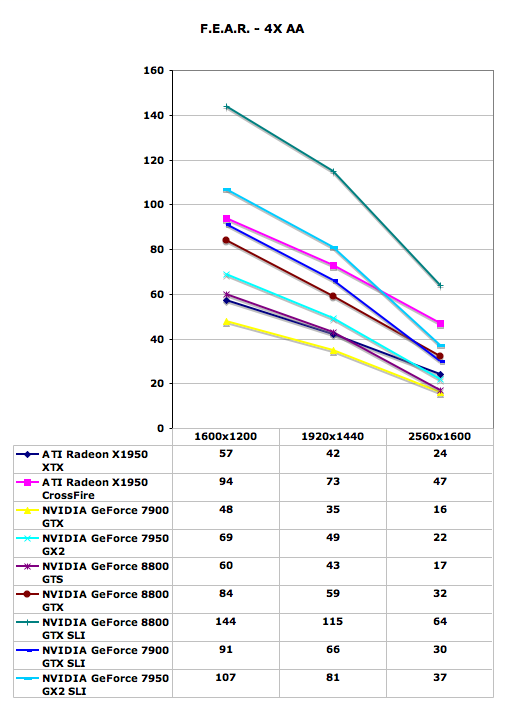

F.E.A.R. Performance

With the latest 1.08 patch, F.E.A.R. has gained multi-core support and can potentially use up to quad-core CPUs to deliver improved performance. We were able to confirm a performance increase with Core 2 Duo, and we will try to look at whether Core 2 Quad helps in the near future. Either way, this means that we should now be completely GPU limited in F.E.A.R. testing.

The new GeForce 8800 GTX card still manages to come out faster than the competition, but this time a single 8800 GTX is not able to surpass the performance of dual X1950 XTX cards (or 7900 GTX SLI for that matter). Quad SLI also manages to make a decent showing in this particular benchmark, coming in second place except at the highest resolution. Meanwhile, GeForce 8800 GTS doesn't fare as well, only managing to tie the X1950 XTX for performance, and it even loses that battle at 2560x1600. F.E.A.R. is a game that can use a lot of memory bandwidth, so it's likely that the 2GHz GDDR4 memory on the X1950 XTX is helping out.

If money isn't a concern, 8800 GTX SLI will finally allow you to play F.E.A.R. at 2560x1600 with 4xAA without dropping below 30 FPS. Is that really necessary? Probably not for most people, but if a similar situation exists in other games, it becomes a bit easier to justify.

111 Comments


JarredWalton - Wednesday, November 8, 2006 - link

Page 17: "The dual SLI connectors are for future applications, such as daisy chaining three G80 based GPUs, much like ATI's latest CrossFire offerings."

Using a third GPU for physics processing is another possibility, once NVIDIA begins accelerating physics on their GPUs (something that has apparently been in the works for a year or so now).

Missing Ghost - Wednesday, November 8, 2006 - link

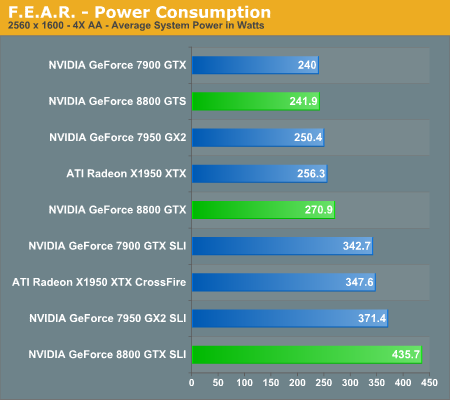

So it seems that by subtracting the single-card 8800 GTX power usage result from the highest 8800 GTX SLI result, we can conclude that the card can use as much as 205W. Does anybody know if this number could increase when the card is used in DX10 mode?

JarredWalton - Wednesday, November 8, 2006 - link

Without DX10 games and an OS, we can't test it yet. Sorry.

JarredWalton - Wednesday, November 8, 2006 - link

Incidentally, I would expect the added power draw in SLI comes from more than just the GPU. The CPU, RAM, and other components are likely pushed to a higher demand with SLI/CF than when running a single card. Look at FEAR as an example; here are the power differences for the various cards. (Oblivion doesn't have X1950 CF numbers, unfortunately.)

X1950 XTX: 91.3W

7900 GTX: 102.7W

7950 GX2: 121.0W

8800 GTX: 164.8W

Notice how in this case, X1950 XTX appears to use less power than the other cards, but that's clearly not the case in single GPU configurations, as it requires more than everything besides the 8800 GTX. Here are the Prey results as well:

X1950 XTX: 111.4W

7900 GTX: 115.6W

7950 GX2: 70.9W

8800 GTX: 192.4W

So there, GX2 looks like it is more power efficient, mostly because QSLI isn't doing any good. Anyway, simple subtraction relative to dual GPUs isn't enough to determine the actual power draw of any card. That's why we presented the power data without a lot of commentary - we need to do further research before we come to any final conclusions.
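To make that caveat concrete, here is a minimal Python sketch that tabulates the figures quoted above, read here as the added system power when going from one card to two. The dictionary layout and the `delta_spread` helper are illustrative assumptions and not part of the original test setup; only the wattages come from the comment.

```python
# Added system power (watts) with a second card, as quoted in the comment above.
# The structure and names are hypothetical; only the figures come from the thread.
sli_delta_watts = {
    "FEAR": {"X1950 XTX": 91.3, "7900 GTX": 102.7, "7950 GX2": 121.0, "8800 GTX": 164.8},
    "Prey": {"X1950 XTX": 111.4, "7900 GTX": 115.6, "7950 GX2": 70.9, "8800 GTX": 192.4},
}


def delta_spread(card):
    """Return (min, max) added power for a card across the listed games."""
    values = [per_game[card] for per_game in sli_delta_watts.values()]
    return min(values), max(values)


for card in sli_delta_watts["FEAR"]:
    low, high = delta_spread(card)
    # A wide spread means the delta depends on the workload, not only on the card.
    print(f"{card}: {low:.1f} W to {high:.1f} W (spread {high - low:.1f} W)")
```

The 7950 GX2 figure swings by roughly 50 W between the two games, which illustrates why a single subtraction cannot stand in for the power draw of a card.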

IntelUser2000 - Wednesday, November 8, 2006 - link

It looks like adding SLI uses about 170W more power. You can see how significant the video card is in terms of power consumption. It blows the Pentium D away a couple of times over.

JoKeRr - Wednesday, November 8, 2006 - link

Well, keep in mind the inefficiency of the PSU (efficiency is generally around 80%), so as overall power draw increases, the power lost in the PSU increases as well. If you multiply by 0.8, it gives about 136W. I suppose the power draw is measured at the wall.

DerekWilson - Thursday, November 9, 2006 - link

max TDP of G80 is at most 185W -- NVIDIA revised this to something in the 170W range, but we know it won't get over 185 in any case.

But games generally don't enable a card to draw max power ... 3dmark on the other hand ...
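The PSU arithmetic in this exchange can be sketched in a few lines of Python. This is only an illustration: the 80% efficiency is the commenter's rough estimate, the assumption that the figures were measured at the wall is the commenter's guess, and the function name `dc_power_from_wall` is hypothetical.

```python
def dc_power_from_wall(wall_watts, psu_efficiency=0.8):
    """Estimate power delivered to components from power measured at the wall.

    psu_efficiency defaults to the commenter's rough 80% figure (an assumption).
    """
    if not 0 < psu_efficiency <= 1:
        raise ValueError("psu_efficiency must be in (0, 1]")
    return wall_watts * psu_efficiency


# Wall-side deltas mentioned in the thread: about 170 W and as much as 205 W.
for wall_delta in (170, 205):
    estimate = dc_power_from_wall(wall_delta)
    print(f"{wall_delta} W at the wall is roughly {estimate:.0f} W after the PSU")
```

Under those assumptions, even the 205W wall-side delta works out to about 164W on the component side, which sits below the 185W ceiling mentioned above.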

photoguy99 - Wednesday, November 8, 2006 - link

Isn't 1920x1440 a resolution that almost no one uses in real life?

Wouldn't 1920x1200 apply to many more people?

It seems almost all 23" and 24" monitors, and many high end laptops, have 1920x1200.

Yes we could interpolate benchmarks, but why when no one uses 1440 vertical?

Frallan - Saturday, November 11, 2006 - link

Well, I have one more suggestion for a resolution. Full HD is 1920*1080 - that is sure to be found in a lot of homes in the future (after X-mas any1 ;0) ) on large LCDs - I believe it would be a good idea to throw that in there as well. Especially right now, since loads of people will have to decide how to spend their money. The 37" Full HD is a given, but on what system will I be gaming: PS-3/X-Box/PC... Pls advise.

JarredWalton - Wednesday, November 8, 2006 - link

This should be the last time we use that resolution. We're moving to LCD resolutions, but Derek still did a lot of testing (all the lower resolutions) on his trusty old CRT. LOL