NVIDIA's GeForce 8800 (G80): GPUs Re-architected for DirectX 10

by Anand Lal Shimpi & Derek Wilson on November 8, 2006 6:01 PM EST - Posted in GPUs

Black & White 2 Performance

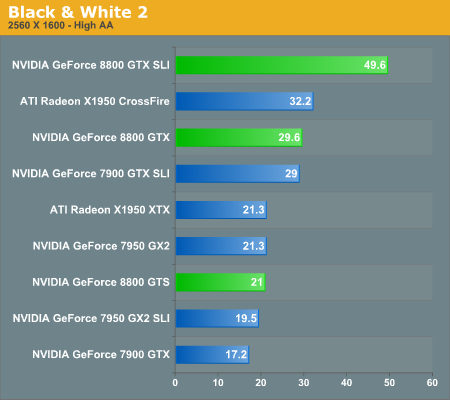

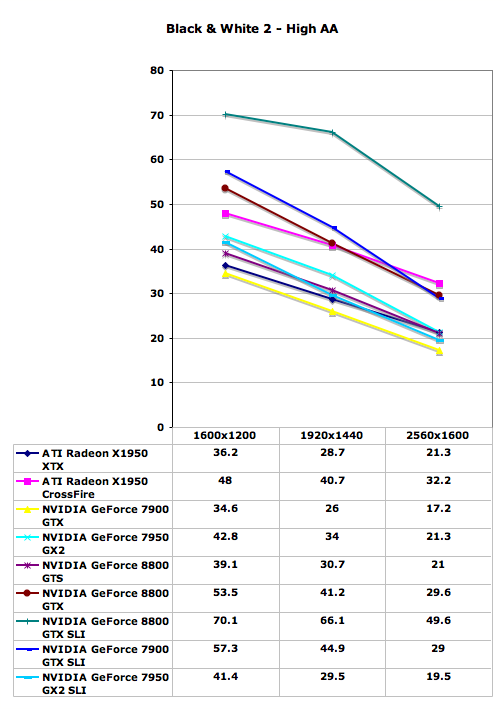

The GeForce 8800 GTX once again makes an impressive showing in Black & White 2, nearly equaling the performance of X1950 XTX CrossFire and GeForce 7900 GTX SLI at all tested resolutions. Even at these very high quality settings, 8800 GTX SLI becomes CPU limited below 1920x1440, so you will definitely want a large monitor before even considering two of these cards. Quad SLI makes a pretty poor showing in this game, a problem that has plagued Quad SLI since it first became available. In games that can leverage the technology it can improve performance quite a bit, but in other titles Quad SLI has difficulty even keeping up with 7900 GTX SLI.

The GeForce 8800 GTS is quite a bit slower than its big brother, offering performance roughly equal to the X1950 XTX and the 7950 GX2. It still has the DirectX 10 advantage, but in current-generation titles it's more a case of remaining competitive than of delivering a substantial performance increase. In this game, the GeForce 8800 GTS is only about 15% faster than the 7900 GTX. Two 8800 GTS cards in SLI should still take second place overall, but it's going to be a distant second.

111 Comments


haris - Thursday, November 9, 2006

You must have missed the article they published the very next day (http://www.theinquirer.net/default.aspx?article=35...), saying they goofed.

Araemo - Thursday, November 9, 2006

Yes I did - thanks. I wish they had updated the original post to note the mistake, as it is still easily accessible via Google. ;) (And the 'we goofed' post only shows up when you drill down for more results.)

Araemo - Thursday, November 9, 2006

In all the AA comparison photos of the power lines with the dome in the background, why does the dome look washed out in the G80 images? I'm only on page 12, so if you explain it after that... well, I'll get to it eventually. ;) But is that just a driver glitch, or is it an IQ problem with the G80's AA implementation?

bobsmith1492 - Thursday, November 9, 2006

Gamma-correcting AA sucks.

Araemo - Thursday, November 9, 2006

That glitch exists whether gamma-correcting AA is enabled or disabled, so that isn't it.

iwodo - Thursday, November 9, 2006

I want to know if these power-hungry monsters have any power-saving features. I mean, what happens if I'm just using Windows most of the time? After all, CPUs have much better power management when they are idle or doing little work. Will I have to pay a higher electricity bill simply because I'm a casual gamer with a power-hungry/powerful GPU?

Another question popped into my mind: with CUDA, would it now be possible for a third party to program an H.264 decoder that runs on the GPU? Sounds good to me :D

DerekWilson - Thursday, November 9, 2006

Oh man... I can't believe I didn't think about that. A video decoder would be very cool.

Pirks - Friday, November 10, 2006

A decoder is not interesting, but an MPEG-4 ASP/AVC ENCODER on the G80 GPU... man, I can't imagine AVC or ASP encoding IN REAL TIME... wow, just wooowww. I'm holding my breath here.

Igi - Thursday, November 9, 2006

Great article. The only thing I would like to see in a follow-up article is a performance comparison in CAD/CAM applications (SolidWorks, Pro/ENGINEER, ...).

BTW, how noisy are the new cards compared to the 7900 GTX and others (at idle and under load)?

JarredWalton - Thursday, November 9, 2006

I thought it was stated somewhere that they are as loud (or quiet if you prefer) as the 7900 GTX. So really not bad at all, considering the performance offered.