Server Guide part 2: Affordable and Manageable Storage

by Johan De Gelas on October 18, 2006 6:15 PM EST. Posted in IT Computing

SAS layers in the real world

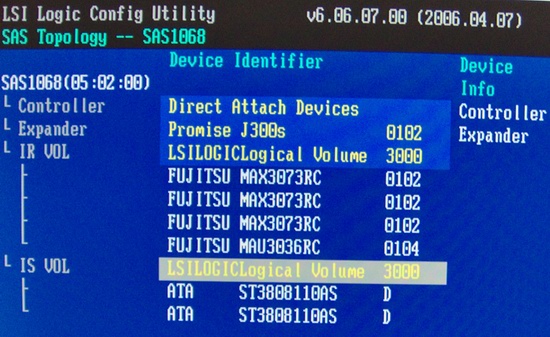

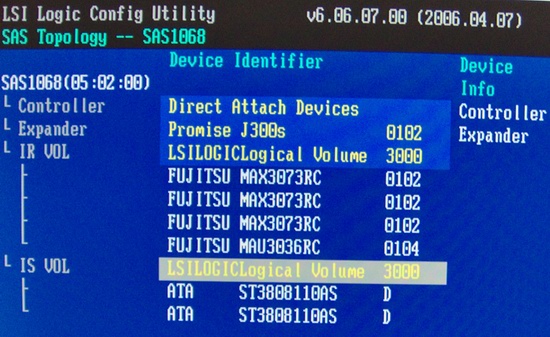

The SAS layered model is much more than just theory. Below you can see how our LSI Logic card is able to combine a SATA RAID volume with two SATA disks and a SAS RAID volume with four 15000 rpm Fujitsu SAS hard disks in the same Promise VTrak J300s JBOD storage rack. Don't let the word JBOD confuse you: it does not refer to the RAID level "Just a Bunch Of Disks". It means that the storage rack doesn't have any RAID capabilities, and that the RAID implementation needs to be on the HBA card. Also notice the word "expander" in the screenshot below; it refers to a circuit that is basically a SAS crossbar switch and a router at the same time. We will discuss the expander's functionality in more detail below.

The bandwidth between the HBA and the SAS storage racks is also much higher than in similar SCSI-320 configurations. Let us take a look at the external port of our HBA card. We find an industry standard 4x wide-port SAS connector, which combines four 300 MB/s SAS links in one "SFF-8470 4X to 4X" external SAS cable. This gives us 1.2 GB/s of bandwidth to any external storage rack, almost four times what would be possible with SCSI-320.
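As a quick check on the numbers above, the aggregate bandwidth of a wide port is simply the per-link rate times the number of links. The sketch below assumes the 300 MB/s per-link figure quoted in the text and the nominal 320 MB/s of a SCSI-320 bus; the function and constant names are ours, chosen purely for illustration.

```python
SAS_LINK_MB_S = 300      # per-link rate quoted in the article
SCSI_320_BUS_MB_S = 320  # assumption: nominal rate of one shared SCSI-320 bus


def wide_port_bandwidth(links, per_link_mb_s=SAS_LINK_MB_S):
    """Return the aggregate bandwidth of a wide port in MB/s."""
    return links * per_link_mb_s


wide = wide_port_bandwidth(4)
ratio = wide / SCSI_320_BUS_MB_S
print(f"4x wide port: {wide} MB/s, {ratio:.2f}x a SCSI-320 bus")
```

With these figures the wide port comes out at 1200 MB/s, or 3.75 times a single SCSI-320 bus, which is where the (rounded) factor of four comes from.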

A 4x wide port

When we combine this wide port with a storage rack that has a built-in expander, magic happens. We get the advantages of the parallel SCSI architecture and those of the serial SATA architecture. To understand why we say that "magic happens", let us take a concrete example. The storage rack that we tried out was the Promise VTrak J300s, which can contain up to twelve 3.5 inch drives. Take a look at the picture below.

In this configuration you get a big 1.2 GB/s pipe, called a SAS wide port, to your storage rack. If we use SATA without a port multiplier, we would only be able to attach four drives: each drive needs its own cable, its own point-to-point connection. If we use SATA with a port multiplier, we are able to use all 12 drives, but our maximum bandwidth to our HBA would be limited to 300 MB/s. This is OK for transaction-based traffic, but it might introduce a bottleneck for streaming applications.
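To see why the single 300 MB/s uplink matters for streaming, it helps to divide the uplink bandwidth over the drives behind it. This is a rough sketch, assuming all 12 drives stream at the same time and share the uplink evenly, and ignoring protocol overhead; the helper name is hypothetical.

```python
def per_drive_share(uplink_mb_s, drives):
    """Evenly split an uplink's bandwidth (MB/s) across simultaneously busy drives."""
    if drives <= 0:
        raise ValueError("need at least one drive")
    return uplink_mb_s / drives


DRIVES = 12  # capacity of the rack described in the article

port_multiplier_share = per_drive_share(300, DRIVES)  # one SATA link behind a port multiplier
wide_port_share = per_drive_share(4 * 300, DRIVES)    # 4x SAS wide port

print(f"Port multiplier: {port_multiplier_share:.0f} MB/s per drive")
print(f"SAS wide port:   {wide_port_share:.0f} MB/s per drive")
```

Under these assumptions each drive gets 25 MB/s behind the port multiplier against 100 MB/s behind the wide port. Transaction-based workloads rarely come close to either number per drive, but sequential streaming can.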

With SCSI we would be able to use up to 14 drives without a port multiplier, but we would have to add another SCSI HBA card if we ran out of space with our 14 SCSI drives. As hot swap PCI slots are very rare and expensive, this could mean we would have to take the server and storage down for some time.

Thanks to the built-in expander of the VTrak J300s, not only can we address 12 drives with only 4 point-to-point connections, but we can also use up to four cascaded storage racks. So in this case, Promise has limited the SAS routing and SAS traffic distribution to 48 (4x 12) drives. In theory SAS can support up to 128 devices, but in practice the HBA is limited to about 122 drives.
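The drive counts in this paragraph can be sketched in a few lines. The constants are the figures quoted in the text (12 drives per rack, four cascaded racks, about 122 drives per HBA, 14 drives on a SCSI bus); the function name is ours and only serves as an illustration.

```python
DRIVES_PER_RACK = 12     # drives per rack, as quoted in the article
MAX_CASCADED_RACKS = 4   # cascade limit quoted in the article
HBA_DRIVE_LIMIT = 122    # practical HBA limit quoted in the article
SCSI_DRIVE_LIMIT = 14    # drives per SCSI HBA, as quoted in the article


def cascade_capacity(racks, per_rack=DRIVES_PER_RACK):
    """Return the number of drives reachable through `racks` cascaded racks."""
    if not 1 <= racks <= MAX_CASCADED_RACKS:
        raise ValueError(f"racks must be between 1 and {MAX_CASCADED_RACKS}")
    return racks * per_rack


total = cascade_capacity(MAX_CASCADED_RACKS)
print(f"{total} drives behind one wide port")
print(f"Within the HBA limit: {total <= HBA_DRIVE_LIMIT}")
print(f"More than a single SCSI HBA: {total > SCSI_DRIVE_LIMIT}")
```

The cascade tops out at 48 drives, well under the HBA limit, so in this setup it is the rack vendor's cascade limit rather than the HBA that caps the configuration.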

In other words, SAS combines all the advantages of SATA and SCSI, without inheriting any of the disadvantages:

- You can use up to 122 drives instead of 14 (SCSI)

- You do not have to use a cable for each drive (SATA-1)

- Thanks to wide ports, the bandwidth of several channels can be combined into one big multiplexed pipe

- Thanks to the serial signalling of SAS, the bandwidth of wide ports will double in 2008 (4x 600 MB/s instead of 4x 300 MB/s)

- You only need one SAS cable to attach external storage

- You can use cheaper SATA and fast SAS drives in the same storage rack

Serial Attached SCSI or SAS has been available since late 2005 and is a logical evolution of the old parallel SCSI-320. However, it is quite revolutionary, as in some ways it offers functionality that was previously only available with high-end Fibre Channel storage.

21 Comments

Bill Todd - Saturday, October 28, 2006

It's quite possible that the reason you are seeing far fewer unrecoverable errors than the specs would suggest is that you're reading all or at least a large percentage of your data far more frequently than the specs assume. Background 'scrubbing' of data - using a disk's idle periods to scan its surface and detect any sectors which are unreadable (in which case they can be restored from a mirror or parity-generated copy if one exists) or becoming hard to read (in which case they can just be restored to better health, possibly in a different location, from their own contents) - decreases the incidence of unreadable sectors by several orders of magnitude compared to the value specified, and the amount of reading that you're doing may be largely equivalent to such scrubbing (or, for that matter, perhaps you're actively scrubbing as well).

While Johan's article is one of the best that I've seen on storage technology in years, in this respect I think he may have been a bit overly influenced by Seagate marketing, which conveniently all but ignores (and certainly makes no attempt to quantify) the effect of scrubbing on potential data loss from using those alleged-risky SATA upstarts. Seagate, after all, has a lucrative high-end drive franchise to protect; we see a similar bias in their emphasis on the lack of variable sector sizes in SATA, with no mention of new approaches such as Sun's ZFS that attain more comprehensive end-to-end integrity checks without needing them, and while higher susceptibility to rotational vibration is a legitimate knock, it's worst in precisely those situations where conventional SATA drives are inappropriate for other reasons (intense, continuous-duty-cycle small-random-access workloads: I'd really have liked to have seen more information on just how well WD SATA Raptors match enterprise drives in that area, because if one can believe their specs they would seem to be considerably more cost-effective solutions in most such instances).

- bill

JohanAnandtech - Thursday, October 19, 2006

"Nonetheless, something bugs me in your article on Seagate test. I manage a cluster of servers whose total throughput is around 110 TB a day (using around 2400 SATA disks). With Seagate figure (an Unrecoverable Error every 12.5 terabytes written or read), I would get 10 Unrecoverable Errors every day. Which, as far as I know, is far away from what I may see (a very few per week/month)."

1. The EUR number is worst case, so the 10 Unrec errors you expect to see are really the worst situation that you would get.

2. Cached reads are not included as you do not access the magnetic media. So if on average the servers are able to cache rather well, you are probably seeing half of that throughput.

And it also depends on how you measured that. Is that throughput on your network or is that really measured like bi/bo of vmstat or another tool?

Fantec - Thursday, October 19, 2006

There is no cache (for two reasons: first, the data is accessed quite randomly while there is only 4 GB of memory for 6 TB of data; second, data are stored/accessed on block device in raw mode). And, indeed, throughput is measured on network but figures from servers match (iostat).

Sunrise089 - Thursday, October 19, 2006

I liked this story, but I finished feeling informed but not satisfied. I love AT's focus on real-world performance, so I think an excellent addition would be more info into actually building a storage system, or at least some sort of a buyers guide to let us know how the tech theory translates over to the marketplace. The best idea would be a tour of AT's own equipment and a discussion of why it was chosen.

JohanAnandtech - Thursday, October 19, 2006

If you are feeling informed and not satisfied, we have reached our goal :-). The next article will go through the more complex stuff: when do I use NAS, when do I use DAS and SAN. What about iSCSI and so on. We are also working on having different storage solutions in our lab.

stelleg151 - Wednesday, October 18, 2006

In the table the Cheetah decodes 1000 blocks of 4KB faster than the Raptor decodes 100 blocks of 4KB. Guessing this is a typo. Liked the article.

JarredWalton - Wednesday, October 18, 2006

Yeah, I notified Johan of the error but figured it wasn't big enough problem to hold back releasing the article. I guess I can Photoshop the image myself... I probably should have just done that, but I was thinking it would be more difficult than it is. The error is corrected now.

slashbinslashbash - Wednesday, October 18, 2006

I appreciate the theory and the mentioning of some specific products and the general recommendations in this article, but you started off mentioning that you were building a system for AT's own use (at the lowest reasonable cost) without fully going into exactly what you ended up using or how much it cost.

So now I know something about SAS, SATA, and other technologies, but I have no idea what it will actually cost me to get (say) 1TB of highly-reliable storage suitable for use in a demanding database environment. I would love to see a line-item breakdown of the system that you ended up buying, along with prices and links to stores where I can buy everything. I'm talking about the cables, cards, drives, enclosures, backplanes, port multipliers, everything.

Of course my needs aren't the same as AnandTech's needs, but I just need to get an idea of what a typical "total solution" costs and then scale it to my needs. Also it'd be cool to have a price/performance comparison with vendor solutions like Apple, Sun, HP, Dell, etc.

BikeDude - Friday, October 20, 2006

What if you face a bunch of servers with modest disk I/O that require high availability? We typically use SATA drives in RAID-1 configurations, but I've seen some disturbing issues with the onboard SATA RAID controller on a SuperMicro server which leads me to believe that SCSI is the right way to go for us. (the issue was that the original Adaptec driver caused Windows to eventually freeze given a certain workload pattern -- I've also seen mirrors that refuse to rebuild after replacing a drive; we've now stopped buying Maxtor SATA drives completely)

More to the point: Seagate has shown that massive amount of IO requires enterprise class drives, but do they say anything about how enterprise class drives behave with a modest desktop-type load? (I wish the article linked directly to the document on Seagate's site, instead it links to a powerpoint presentation hosted by microsoft?)

JohanAnandtech - Thursday, October 19, 2006

Definitely... When I started writing this series I started to think about what I was asking myself years ago. For starters, what is this weird I/O per second benchmarking? If you are coming from the workstation world, you expect all storage benchmarks to be in MB/s and ms.

Secondly, one has to know the interfaces available. The features of SAS for example could make you decide to go for a simple DAS instead of an expensive SAN. Not always but in some cases. So I had to make sure that before I start talking iSCSI, FC SAN, DAS that can be turned into SAN etc., all my readers know what SAS is all about.

So I hope to address the things you brought up in the second storage article.