Intel's Core 2 Extreme & Core 2 Duo: The Empire Strikes Back

by Anand Lal Shimpi on July 14, 2006 12:00 AM EST - Posted in CPUs

FSB Bottlenecks: Is 1333MHz Necessary?

Although all desktop Core 2 processors currently feature a 1066MHz FSB, Intel's first Woodcrest processors (the server version of Conroe) offer 1333MHz FSB support. Intel doesn't currently have a desktop chipset with support for the 1333MHz FSB, but the question we wanted answered was whether or not the faster FSB made a difference.

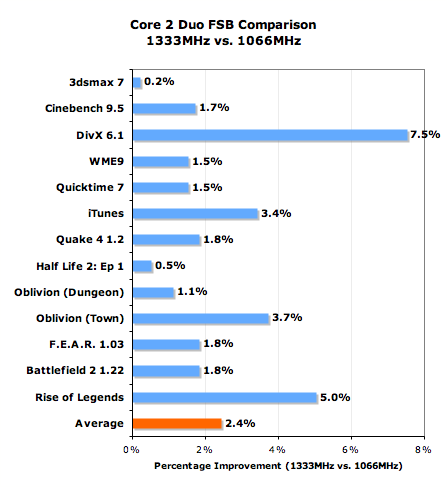

We took our unlocked Core 2 Extreme X6800 and ran it at 2.66GHz using two different settings: 266MHz x 10 and 333MHz x 8; the former corresponds to a 1066MHz FSB and is the same setting that the E6700 runs at, while the latter uses a 1333MHz FSB. The 1333MHz setting used a slightly faster memory bus (DDR2-811 vs. DDR2-800), but given that the processor is not memory bandwidth limited even at DDR2-667, the difference between memory speeds is negligible.
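For readers who want to check the arithmetic behind those two settings, here is a minimal sketch. It assumes the FSB is quad-pumped (four transfers per base clock) and 64 bits wide, neither of which is spelled out above, and it uses the nominal base clocks of 800/3 and 1000/3 MHz rather than the rounded 266MHz and 333MHz figures. The function and constant names are purely illustrative.

```python
# Illustrative sketch; names and constants are assumptions, not from the article.
FSB_TRANSFERS_PER_CLOCK = 4   # assumption: quad-pumped FSB
FSB_WIDTH_BYTES = 8           # assumption: 64-bit FSB data path


def core_clock_mhz(base_mhz: float, multiplier: int) -> float:
    """Core frequency = base (bus) clock x CPU multiplier."""
    return base_mhz * multiplier


def effective_fsb_mhz(base_mhz: float) -> float:
    """Quoted 'FSB speed' = base clock x transfers per clock."""
    return base_mhz * FSB_TRANSFERS_PER_CLOCK


def peak_fsb_bandwidth_gbs(base_mhz: float) -> float:
    """Theoretical peak FSB bandwidth in GB/s (1 GB = 10^9 bytes)."""
    return effective_fsb_mhz(base_mhz) * FSB_WIDTH_BYTES / 1000


# The article rounds the base clocks to 266MHz and 333MHz;
# the nominal values are 800/3 and 1000/3 MHz.
SETTINGS = [("266MHz x 10", 800 / 3, 10), ("333MHz x 8", 1000 / 3, 8)]

for label, base, mult in SETTINGS:
    print(f"{label}: core {core_clock_mhz(base, mult):.0f}MHz, "
          f"FSB {effective_fsb_mhz(base):.0f}MHz, "
          f"peak {peak_fsb_bandwidth_gbs(base):.2f}GB/s")
```

Under these assumptions both settings land on the same core clock, while the theoretical peak FSB bandwidth rises from roughly 8.5GB/s to roughly 10.7GB/s. That makes the comparison a test of the bus alone rather than of CPU frequency.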

With Intel pulling in the embargo date of all Core 2 benchmarks we had to cut our investigation a bit short, so we're not able to bring you the full suite of benchmarks here to investigate the impact of FSB frequency. That being said, we chose those that would be most representative of the rest.

Why does this 1333MHz vs. 1066MHz debate even matter? For starters, Core 2 Extreme owners will have the option of choosing, since they can always just drop their multiplier and run at a higher FSB without overclocking their CPUs (if they so desire). There's also a rumor that Apple's first Core 2 based desktops may end up using Woodcrest and not Conroe, which would mean that the 1333MHz FSB would see the light of day on some desktops sooner rather than later.

The final reason this comparison matters is that Intel's Core architecture is more data hungry than any previous Intel desktop architecture and thus should, in theory, depend on a nice and fast FSB. At the same time, thanks to a well engineered shared L2 cache, FSB traffic has been reduced on Core 2 processors. So which wins the battle: the data hungry 4-issue core or the efficient shared L2 cache? Let's find out.

On average at 2.66GHz, the 1333MHz FSB increases performance by 2.4%, but some applications can see an even larger increase in performance. Under DivX, the performance boost was almost as high as going from a 2MB L2 to a 4MB L2. Also remember that as clock speed goes up, the dependence on a faster FSB will also go up.

Thanks to the shared L2 cache, core-to-core traffic no longer benefits from a faster FSB, so the improvements we're seeing here are simply due to how data hungry the new architecture is. With its wider front end and more aggressive pre-fetchers, it's no surprise that the Core 2 processors benefit from the 1333MHz FSB. The benefit will increase even more as the first quad core desktop CPUs are introduced. The only question that remains is how long before we see CPUs and motherboards with official 1333MHz FSB support?

If Apple does indeed use a 1333MHz Woodcrest for its new line of Intel based Macs, it may be the first time that an Apple system running Windows is faster out of the box than an equivalently configured, non-overclocked PC. There's an interesting marketing angle.

202 Comments

Calin - Friday, July 14, 2006

This is a bit more complicated - you could buy a $1000 FX-62, or you could buy a $316 Core2Duo, then a $150+ mainboard. If you want to run SLI, you are out of luck right now - but things might change in the immediate future. If you have NVidia SLI, you must go to Crossfire (at this moment).

But anyway, looks like AMD can not compete in the top

Regs - Friday, July 14, 2006

Since the P6!!!! Makes me think if AMD actually cares about improving performance on their processors. Maybe they should scrap the Fab in New York and make a research facility instead. Start hiring interns from MIT. Do something! lol.

I admit, even though I enjoyed AMD having the performance crown, it was a period of limited choice and limited performance gain. Who here on the free market cares about 100MHz increments? They went from 110nm to 90nm with no performance benefit - they went from single core to the dual core X2's with no performance benefit -- they went from DDR to DDR2 with no performance improvement -- now they are going to 65nm which they also made clear they will make no changes to increase performance. AMD has really dropped the ball and they deserve what they get. I don't know why anyone, including overclockers, would want to be an AMD fanboy at the moment.

CKDragon - Friday, July 14, 2006

AMD went from single core to dual core with no performance benefit?

Maybe on Planet Troll...

Regs - Friday, July 14, 2006

The X2 improved performance only on specific suites of software. Can you say the same about Conroe? I mean I was really able to crank up the rez in Oblivion after I upgraded to an X2 *rolls eyes*.

JarredWalton - Friday, July 14, 2006

The performance increases you're seeing in most games on Core 2 Duo come entirely from the better architecture, not from dual processor cores. We just can't test single core performance on Core 2 because such chips don't exist and they won't until Conroe-L ships (in about a year judging by road maps -- it looks like Intel and their partners want to have time to clear out all of their NetBurst inventory first).

Regs - Friday, July 14, 2006

Yes, I completely agree. The only difference on the X2 compared to the single cores was encoding. Not unless you do own a 10-thousand dollar server for well...server use.

Calin - Friday, July 14, 2006

Being a fanboy is like a religion - you don't change your religion overnight.

AMD cares about selling expensive processors. As long as the P4 was the opposition (especially after the Northwood days), AMD was king of the hill, and sold its processors at whatever prices the market would pay. Now, Intel took that place. I hope this will change with K8L, as this will bring even lower prices for even better processors.

Also, AMD was unable to produce enough processors, so they sold most of them at a premium. As for the move to 90nm, they got some extra frequency headroom, and lower power consumption. This also reduced their costs (too bad the cost reduction wasn't really passed to customers).

If their move from single to dual core brought no performance benefit, tell that to companies buying dual core Opterons for thousands of dollars apiece.

segagenesis - Friday, July 14, 2006

Good lord. You might as well throw up the GAME OVER and TILT signs for AMD right now. Although I wouldn't want them to disappear from competition (I don't want us to return to expensive Intel CPUs at the same time) there isn't much I see in this article that gives AMD any advantage at the moment over Intel. Sooner or later this was bound to happen from Intel though, the Athlon 64 made a similar situation against Pentium 4 making it look pretty obsolete comparatively at the time.

Now assuming the prices that AMD plans to drop to are correct, perhaps they can remain competitive for building a budget system vs. Core 2 as I would not recommend a new Pentium 4 at this point to anyone...

That reminds me of the good ol' days of overclocking the Celeron A...

dice1111 - Wednesday, July 19, 2006

Ahhh, yes. My old Celeron A (still overclocked and in use). I was so happy about overclocking back then. Please Intel, let me get that taste of nostalgia!!!

mobutu - Friday, July 14, 2006

I'd really like to upgrade to Conroe but I don't want the Intel chipset on the motherboard.

Jarred, Wesley, do you know (estimate) when you'll have a review with final 590 reference board and when we can expect motherboards with 590 Intel Edition to be available?

Thanks in advance guys. Great Conroe review.