Intel's Core 2 Extreme & Core 2 Duo: The Empire Strikes Back

by Anand Lal Shimpi on July 14, 2006 12:00 AM EST- Posted in

- CPUs

Power Consumption: Who is the king?

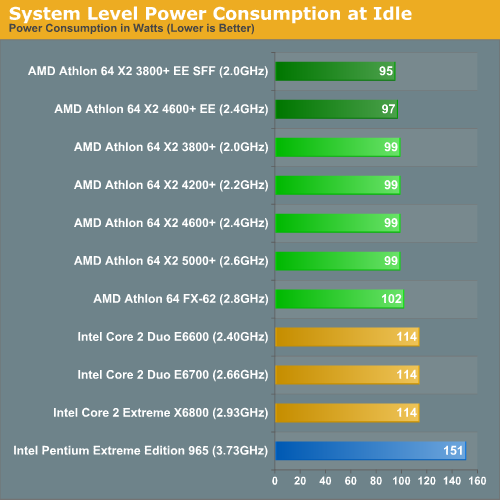

Intel promised us better performance per watt, lower energy consumed per instruction, and an overall serious reduction in power consumption with Conroe and its Core 2 line of processors. Compared to its NetBurst predecessors, the Core 2 lineup consumes significantly lower power - but what about compared to AMD?

This is one area that AMD is not standing still in, and just days before Intel's launch AMD managed to get us a couple of its long awaited Energy Efficient Athlon 64 X2 processors that are manufactured to target much lower TDPs than its other X2 processors. AMD sent us its Athlon 64 X2 4600+ Energy Efficient processor which carries a 65W TDP compared to 89W for the regular 4600+. The more interesting CPU is its Athlon 64 X2 3800+ Energy Efficient Small Form Factor CPU, which features an extremely low 35W rating. We've also included the 89W Athlon 64 X2s in this comparison, as well as the 125W Athlon 64 FX-62.

Cool 'n Quiet and EIST were enabled for AMD and Intel platforms respectively; power consumption was measured at the wall outlet. We used an ASUS M2NPV-VM for our AM2 platform and ASUS' P5W DH Deluxe for our Core 2 platform, but remember that power consumption will be higher with a SLI chipset on either platform. We used a single GeForce 7900 GTX, but since our power consumption tests were all done at the Windows desktop 3D performance/power consumption never came into play.

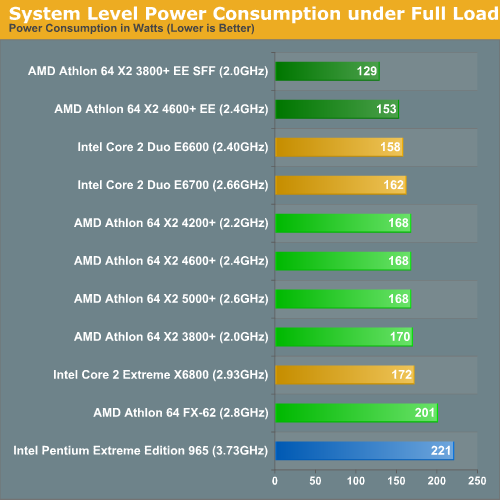

We took two power measurements: peak at idle and peak under load while performing our Windows Media Encoder 9 test.

Taking into consideration the fact that we were unable to compare two more similar chipsets (we will take a look back at that once retail Intel nForce 5 products hit the shelves), these power numbers heavily favor Intel. The releative power savings over the Extreme Edition 965 show just how big the jump is, and the ~15% idle power advantage our lower power AMD motherboard has over the Intel solution isn't a huge issue, especially when considering the performance advantage for the realtively small power investment.

When looking at load power, we can very clearly see that AMD is no longer the performance per watt king. While the Energy Efficient (EE) line of X2 processors is clearly very good at dropping load power (especially in the case of the 3800+), not even these chips can compete with the efficiency of the Core 2 line while encoding with WME9. The bottom line is that Intel just gets it done faster while pulling fewer watts (e.g. Performance/Watt on the X6800 is 0.3575 vs. 0.2757 on the X2 3800+ EE SFF).

In fact, in a complete turn around from what we've seen in the past, the highest end Core 2 processor is actually the most efficient (performance per Watt) processor in the lineup for WME9. This time, those who take the plunge on a high priced processor will not be stuck with brute force and a huge electric bill.

202 Comments

View All Comments

code255 - Friday, July 14, 2006 - link

Thanks a lot for the Rise of Legends benchmark! I play the game, and I was really interested in seeing how different CPUs perform in it.And GAWD DAMN the Core 2 totally owns in RoL, and that's only in a timedemo playback environment. Imagine how much better it'll be over AMD in single-player games where lots of AI calculations need to be done, and when the settings are at max; the high-quality physics settings are very CPU intensive...

I've so gotta get a Core 2 when they come out!

Locutus465 - Friday, July 14, 2006 - link

It's good to see intel is back. Now hopefully we'll be seeing some real innovation in the CPU market again. I wonder what the picture is going to look like in a couple years when I'm ready to upgrade again!Spoonbender - Friday, July 14, 2006 - link

First, isn't it misleading to say "memory latency" is better than on AMD systems?What happens is that the actual latency for *memory* access is still (more or less) the same. But the huge cache + misc. clever tricks means you don't have to go all the way to memory as often.

Next up, what about 64-bit? Wouldn't it be relevant to see if Conroe's lead is as impressive in 64-bit? Or is it the same horrible implementation that Netburst used?

JarredWalton - Friday, July 14, 2006 - link

Actually, it's the "clever tricks" that are reducing latency. (Latency is generally calculated with very large data sets, so even if you have 8 or 16 MB of cache the program can still determine how fast the system memory is.) If the CPU can analyze RAM access requests in advance and queue up the request earlier, main memory has more time to get ready, thus reducing perceived latency from the CPU. It's a matter of using transistors to accomplish this vs. using them elsewhere.It may also be that current latency applications will need to be adjusted to properly compute latency on Core 2, but if their results are representative of how real world applications will perceive latency, it doesn't really matter. Right now, it appears that Core 2 is properly architected to deal with latency, bandwidth, etc. very well.

Spoonbender - Friday, July 14, 2006 - link

Well, when I think of latency, I think worst-case latency, when, for some reason, you need to access something that is still in memory, and haven't already been queued.Now, if their prefetching tricks can *always* start memory loads before they're needed, I'll agree, their effective latency is lower. But if it only works, say, 95% of the time, I'd still say their latency is however long it takes for me to issue a memory load request, and wait for it to get back, without a cache hit, and without the prefetch mechanism being able to kick in.

Just technical nitpicking, I suppose. I agree, the latency that applications will typcially perceive is what the graph shows. I just think it's misleading to call that "memory latency"

As you say, it's architected to hide the latency very well. Which is a damn good idea. But that's still not quite the same as reducing the latency, imo.

Calin - Friday, July 14, 2006 - link

You could find the real latency (or most of it) by reading random locations in the main memory. Even the 4MB cache on the Conroe won't be able to prefetch all the main memory.Anyway, the most interesting is what memory latency the application that run feels. This latency might be lower on high-load, high-memory server processors (not that current benchmarks hint at this for Opteron against server-level Core2)

JarredWalton - Friday, July 14, 2006 - link

"You could find the real latency (or most of it) by reading random locations in the main memory."I'm pretty sure that's how ScienceMark 2.0 calculates latency. You have to remember, even with the memory latency of approximately 35 ns, that delay means the CPU now has approximately 100 cycles to go and find other stuff to do. At an instruction fetch rate of 4 instructions per cycle, that's a lot of untapped power. So, while it waits on main memory access one, it can be scanning the next accesses that are likely to take place and start queuing them up. And the net result is that you may never actually be able to measure latency higher than about 35 ns or whatever.

The way I think of it is this: pipeline issues aside, a large portion of what allowed Athlon 64 to outperform at first was reduced memory latency. Remember, Pentium 4 was easily able to outperform Athlon XP in the majority of benchmarks -- it just did so at higher clock speeds. (Don't *even* try to tell me that the Athlon XP 3200+ was as fast as a Pentium 4 3.2 GHz... LOL.) AMD boosted performance by about 25% by adding an integrated memory controller. Now Intel is faster at similar clock speeds, and although the 4-wide architectural design helps, they almost certainly wouldn't be able to improve performance without improving memory latency -- not just, but in actual practice. With us, I have to think that our memory latency scores are generally representative of what applications see. All I can say is, nice design Intel!

JarredWalton - Friday, July 14, 2006 - link

"...allowed Athlon 64 to outperform at first was...."Should be:

"...allowed Athlon 64 to outperform NetBurst was..."

Bad Dragon NaturallySpeaking!

yacoub - Friday, July 14, 2006 - link

""Another way of looking at it is that Intel's Core 2 Duo E6600 is effectively a $316 FX-62".Then the only question that matters at all for those of us with AMD systems is: Can I get an FX-62 for $316 or less (and run it on my socket-939 board)? If so, I would pick one up. If not, I would go Intel.

End of story.

Gary Key - Friday, July 14, 2006 - link

A very good statement. :)