NVIDIA Single Card, Multi-GPU: GeForce 7950 GX2

by Derek Wilson on June 5, 2006 12:00 PM EST - Posted in GPUs

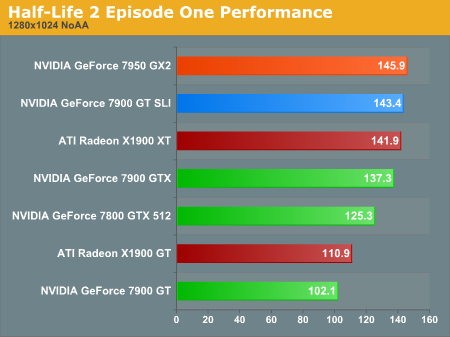

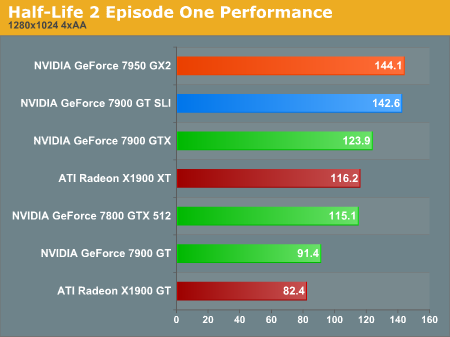

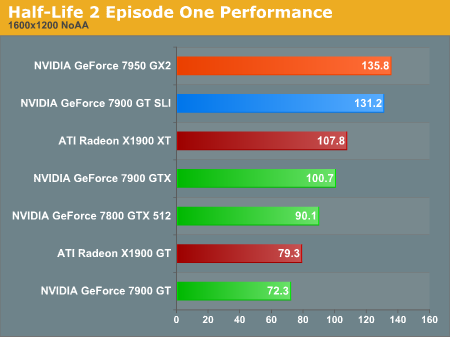

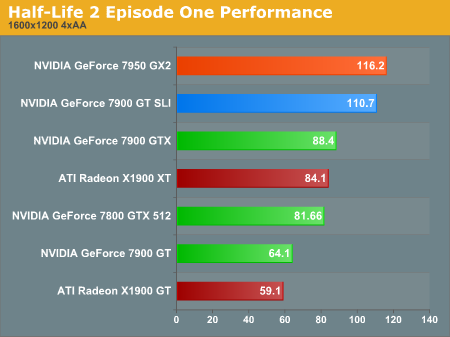

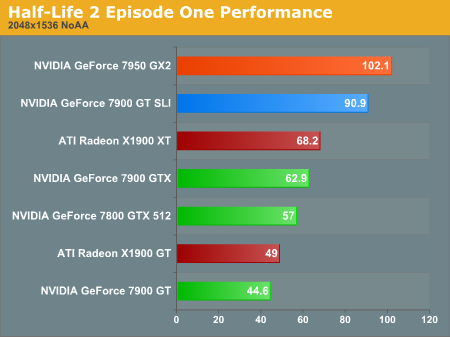

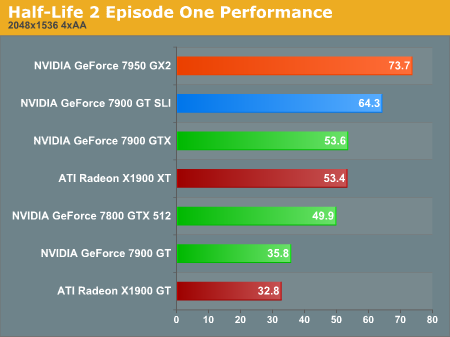

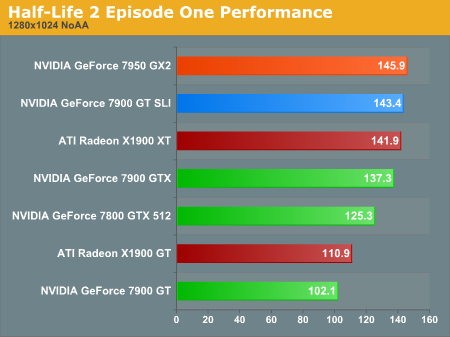

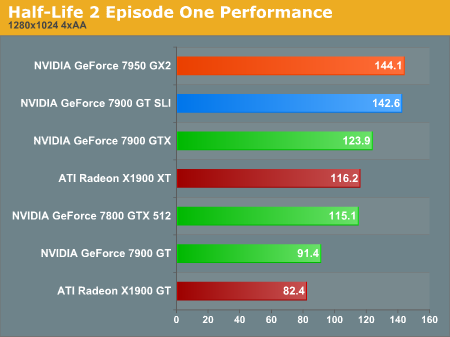

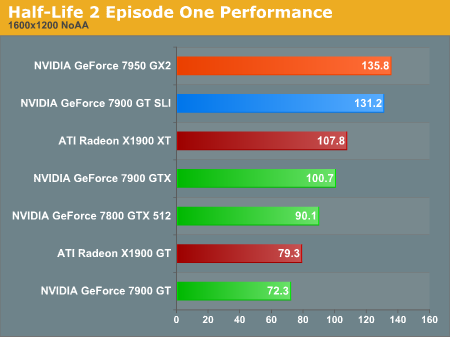

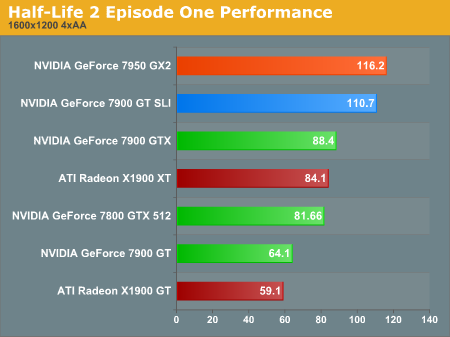

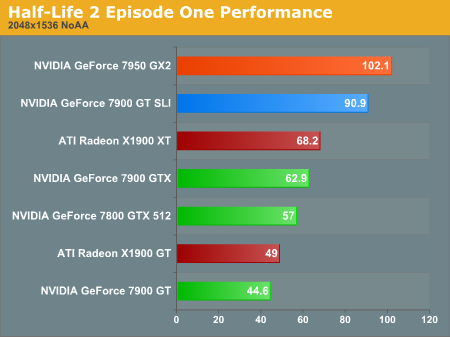

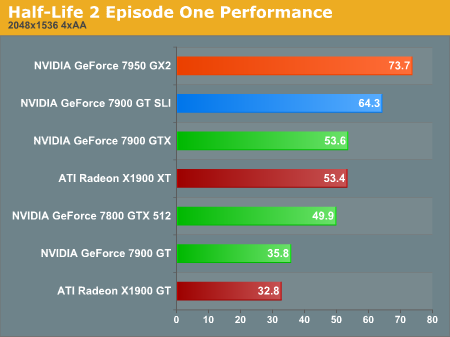

Half-Life 2 Episode One Performance

Even the newest installment of HL2 is CPU-limited at the high end under 1600x1200. These tests do show that, in spite of the CPU limitation, the multi-GPU overhead isn't incredibly damaging under the Source engine.

The performance advantage of the 7950 GX2 increases as resolution goes up and AA is added. While the hardware does provide a benefit at this level of quality, framerates this high are just not necessary for playing HL2. Those with a 1600x1200 resolution limit who play HL2-style games won't need to drop $600 on hardware to get a great experience.

At the top end of our performance tests, we don't see any surprises. The 7950 GX2 is the king of HL2 as far as single-board solutions go. Getting this baby in SLI for a quad-GPU solution will be quite interesting indeed.

60 Comments

kilkennycat - Monday, June 5, 2006 - link

Just to reinforce another poster's comments: Oblivion is now the yardstick for truly sweating a high-performance PC system. A comparison of a single GX2 vs. dual 7900GT in SLI would be very interesting indeed, since Oblivion pushes up against the 256MB graphics memory limit of the 7900GT (with or without SLI), and will exceed it if some of the 'oblivion.ini' parameters are tweaked for more realistic graphics in outdoor environments, especially in combination with some of the user-created texture-enhancement mods.

Crassus - Monday, June 5, 2006 - link

That was actually my first thought and the reason I read the article ... "How will it run Oblivion?". I hope you'll find the time to add some graphs for Oblivion. Thanks.

TiberiusKane - Monday, June 5, 2006 - link

Nice article. Some insanely rich gamers may want to compare the absolute high-end, so they may have wanted to see 1900XT in Crossfire. It'd help with the comparison of value.

George Powell - Monday, June 5, 2006 - link

Didn't the ATI Rage Fury Maxx postdate the Obsidian X24 card? Also, on another point, it's a pity that there are no Oblivion benchmarks for this card.

Spoelie - Monday, June 5, 2006 - link

Didn't the Voodoo5 postdate that one as well? ^^

Myrandex - Monday, June 5, 2006 - link

For some reason page 1 and 2 worked for me, but when I tried 3 or higher no page would load and I received a "Cannot find server" error message.

JarredWalton - Monday, June 5, 2006 - link

We had some server issues which are resolved now. The graphs were initially broken on a few charts (all values were 0.0) and so the article was taken down until the problem could be corrected.

ncage - Monday, June 5, 2006 - link

This is very cool, but a better idea would be for NVIDIA to use the socket concept, where you can change out the VPU just like you can a CPU. So you could buy a card with only one VPU and then add another one later if you needed it....

BlvdKing - Monday, June 5, 2006 - link

Isn't that what PCI-Express is? Think of a graphics card like a slot 1 or slot A CPU back in the old days. A graphics card is a GPU with its own cache on the same PCB. If we were to plug a GPU into the motherboard, then it would have to use system memory (slow) or use memory soldered onto the motherboard (not updatable). The socket idea for GPUs doesn't make sense.

DerekWilson - Monday, June 5, 2006 - link

Actually, this isn't exactly what PCIe is... but it is exactly what HTX will be with AMD's Torrenza and coherent HT links from the GPU to the processor. The CPU and the GPU will be able to work much more closely together with this technology.