nForce 500: nForce4 on Steroids?

by Gary Key & Wesley Fink on May 24, 2006 8:00 AM EST - Posted in CPUs

New Feature: DualNet

DualNet's suite of options brings a few enterprise-type network technologies to the general desktop, such as teaming, load balancing, and fail-over, along with hardware-based TCP/IP acceleration. Teaming doubles the available link bandwidth by combining the two integrated nForce5 Gigabit Ethernet ports into a single 2-Gigabit Ethernet connection. This brings the user improved link speeds while providing fail-over redundancy. TCP/IP acceleration reduces CPU utilization by offloading CPU-intensive packet processing tasks to a dedicated hardware processor, combined with optimized driver support.

While all of this sounds impressive, the actual impact for the general computer user is minimal. On the other hand, a user setting up a game server/client for a LAN party or implementing a home gateway machine will find these options valuable. Overall, features like DualNet are better suited for the server and workstation market, and we suspect these options are being provided because the NVIDIA professional workstation/server chipsets are typically based upon the same core logic.

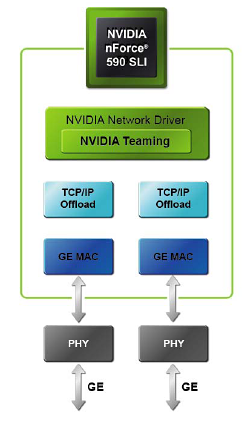

NVIDIA now integrates dual Gigabit Ethernet MACs on the same physical chip. This allows the two Gigabit Ethernet ports to be used individually or combined depending on the needs of the user. The previous NF4 boards offered a single Gigabit Ethernet MAC interface, with motherboard suppliers having the option to add a second Gigabit port via an external controller chip. Too often this resulted in two different driver sets, with the various controller chips residing on either the PCI Express or PCI bus and typically performing worse than a well-implemented dual-PCIe Gigabit Ethernet setup.

New Feature: Teaming

Teaming allows both of the Gigabit Ethernet ports in NVIDIA DualNet configurations to be used in parallel to set up a 2-Gigabit Ethernet backbone. Multiple computers can be connected simultaneously at full gigabit speeds while load balancing the resulting traffic. When Teaming is enabled, the gigabit links within the team maintain their own dedicated MAC address while the combined team shares a single IP address.

Transmit load balancing uses the destination (client) IP address to assign outbound traffic to a particular gigabit connection within a team. When data transmission is required, the network driver uses this assignment to determine which gigabit connection will act as the transmission medium. This ensures that all connections are balanced across all the gigabit links in the team. If at any point one of the links is not being utilized, the algorithm dynamically adjusts the connections to ensure optimal performance. Receive load balancing uses a connection steering method to distribute inbound traffic between the two gigabit links in the team. When the gigabit ports are connected to different servers, the inbound traffic is distributed between the links in the team.
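To make the destination-based assignment described above more concrete, here is a minimal Python sketch. It is not NVIDIA's driver algorithm, which is not documented in this article; the class `TransmitBalancer`, its method names, and the port names are all hypothetical. It only illustrates the general idea: each destination IP sticks to one link, new destinations go to the least-used link, and assignments can be shifted when a link sits idle.

```python
class TransmitBalancer:
    """Toy model of destination-based transmit load balancing (hypothetical)."""

    def __init__(self, links):
        self.links = list(links)   # link names, e.g. ["port0", "port1"]
        self.assignment = {}       # destination IP -> link name

    def load(self, link):
        """Number of destinations currently assigned to this link."""
        return sum(1 for l in self.assignment.values() if l == link)

    def link_for(self, dest_ip):
        """Return the link for dest_ip; a new destination gets the least-used link."""
        if dest_ip not in self.assignment:
            self.assignment[dest_ip] = min(self.links, key=self.load)
        return self.assignment[dest_ip]

    def forget(self, dest_ip):
        """Drop a destination, e.g. when a client disconnects."""
        self.assignment.pop(dest_ip, None)

    def rebalance(self):
        """Move destinations off the busiest link until loads differ by at most one."""
        while True:
            busiest = max(self.links, key=self.load)
            idlest = min(self.links, key=self.load)
            if self.load(busiest) - self.load(idlest) <= 1:
                return
            dest = next(d for d, l in self.assignment.items() if l == busiest)
            self.assignment[dest] = idlest


team = TransmitBalancer(["port0", "port1"])
clients = ["192.168.0.%d" % i for i in range(10, 14)]
for ip in clients:
    print(ip, "->", team.link_for(ip))
```

A real driver would likely weigh actual traffic volume rather than a simple count of destinations; the count is used here only to keep the sketch short.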

The integrated fail-over technology ensures that if one link goes down, traffic is instantly and automatically redirected to the remaining link. As an example, if a file is being downloaded, the download will continue without packet loss or data corruption. Once the lost link has been restored, the team is re-established and traffic begins to flow on the restored link again.
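The fail-over behavior above can be sketched as a small simulation. This is an illustration under simplifying assumptions, not the actual DualNet implementation: the `Team` class, the `download` function, and the `events` schedule are hypothetical, and real fail-over also relies on the network stack to recover anything that was in flight when the link dropped.

```python
class Team:
    """Toy fail-over model: traffic only uses links that are currently up (hypothetical)."""

    def __init__(self, links):
        self.state = {link: True for link in links}   # link name -> is the link up?

    def set_link(self, link, up):
        self.state[link] = up

    def active_links(self):
        return [link for link, up in self.state.items() if up]


def download(team, chunks, events):
    """Send chunks in order over the team.

    events maps a chunk index to (link, up), applied just before that chunk is sent.
    Returns a list of (chunk, link used).
    """
    log = []
    for i, chunk in enumerate(chunks):
        if i in events:
            team.set_link(*events[i])
        active = team.active_links()
        if not active:
            raise ConnectionError("all links in the team are down")
        # Alternate over whichever links are up at this moment.
        log.append((chunk, active[i % len(active)]))
    return log


team = Team(["port0", "port1"])
# port1 fails before chunk 2 and comes back before chunk 4.
log = download(team, list("abcdef"), {2: ("port1", False), 4: ("port1", True)})
for chunk, link in log:
    print(chunk, "via", link)
```

Every chunk is still delivered in order; only the link carrying it changes while `port1` is down.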

NVIDIA quotes an average 40% improvement in throughput when using teaming, although this number can go higher. In a multi-client demonstration, NVIDIA achieved a 70% improvement in throughput using six client machines. In our own internal test we realized about a 45% improvement in throughput using our video streaming benchmark while playing Serious Sam II across four client machines. For those without a Gigabit network, DualNet can also team two 10/100 Fast Ethernet connections. Once again, this is a feature set that few desktop users will truly be able to exploit currently, but we commend NVIDIA for some forward thinking in this area.

Improved Feature: TCP/IP Acceleration

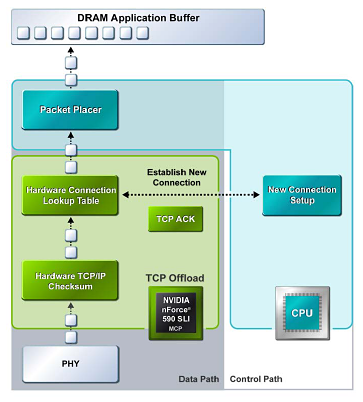

NVIDIA TCP/IP Acceleration is a networking solution that includes both a dedicated processor for accelerating network traffic processing and optimized drivers. The current nForce 500 MCPs have TCP/IP acceleration and hardware offload capability built into both native Gigabit Ethernet controllers. This will typically lower CPU utilization when processing network data at gigabit speeds.

In software solutions, the CPU is responsible for processing all aspects of the TCP protocol: calculating checksums, ACK processing, and connection lookup. Depending upon network traffic and the types of data packets being transmitted, this can place a significant load upon the CPU. In the above example all packet data is processed and then checksummed inside the MCP instead of being moved to the CPU for software-based processing, and this improves overall throughput and reduces CPU utilization.
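To give a sense of the per-packet work that checksum offload removes from the CPU, here is a short Python sketch of the standard 16-bit one's complement Internet checksum (as described in RFC 1071), which TCP uses. The function name `internet_checksum` is ours, and the sketch covers only the payload bytes passed in; a real TCP checksum also includes a pseudo-header with the IP addresses, protocol, and length. It says nothing about how the MCP performs the calculation in hardware.

```python
def internet_checksum(data: bytes) -> int:
    """16-bit one's complement checksum over data (RFC 1071 style)."""
    if len(data) % 2:
        data += b"\x00"                      # pad odd-length input with a zero byte
    total = 0
    for i in range(0, len(data), 2):
        total += (data[i] << 8) | data[i + 1]   # add each big-endian 16-bit word
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)  # fold carries back into the low 16 bits
    return ~total & 0xFFFF                   # one's complement of the folded sum


# Example bytes from RFC 1071; the folded sum is 0xddf2, so the checksum is 0x220d.
payload = bytes.fromhex("0001f203f4f5f6f7")
print(hex(internet_checksum(payload)))       # 0x220d
```

Every byte of every segment passes through a loop like this in a software stack, which is why the cost grows with throughput at gigabit speeds.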

NVIDIA has dropped the ActiveArmor slogan for the nForce 500 release. The ActiveArmor firewall application has been jettisoned to deep space as NVIDIA pointed out that the features provided by ActiveArmor will be a part of the upcoming Microsoft Vista. No doubt NVIDIA was also influenced to drop ActiveArmor due to the reported data corruption issues with the nForce4 caused in part by overly aggressive CPU utilization settings, and quite possibly in part due to hardware "flaws" in the original nForce design.

We have not been able to replicate all of the reported data corruption errors with nForce4, but many of our readers reported errors with the nForce4 ActiveArmor even after the latest driver release. With nForce5 that is no longer a concern. This stability comes at a price though. If TCP/IP acceleration is enabled via the new control panel, then third party firewall applications (including Windows XP firewall) must be switched off in order to use the feature. We noticed CPU utilization rates near 14% with the TCP/IP offload engine enabled and rates above 30% without it.

64 Comments

DigitalFreak - Wednesday, May 24, 2006 - link

Does NTune 5 also work with NF4 boards?

Gary Key - Wednesday, May 24, 2006 - link

Yes, but depending upon bios support several of the new features will not be active. We have an updated bios coming for a nF4 board so we can verify which features do and do not work with full nF4 bios support.

nullpointerus - Wednesday, May 24, 2006 - link

Does nTune 5 support multiple profiles and automatic profile switching? If so, do these things actually work properly? Unfortunately, nTune 3 was a mess on my MSI board.

Gary Key - Wednesday, May 24, 2006 - link

Yes to multiple profiles and working correctly, what is your definition of automatic profile switching? You can set up custom rules that will dictate how the system should operate under different conditions, a game profile for max performance or a DVD profile that will instruct the system to go into "quiet mode" once a DVD is inserted if you are watching a movie as an example. We are still testing the rules setup, but so far, it works. We only received the kits last Friday so all major features were tested first but I am following up on the bells and whistles now. nTune 5 probably deserves a small but separate article on its features. We just received a new build last night so testing begins again today.

We did report a bug to NVIDIA as the motherboard settings screen will not refresh correctly after loading a new profile. We had to exit to the main control panel and then return to the performance section for a refresh. I personally have close to 30 profiles setup for our test suites at this time. It is just a matter

DigitalFreak - Wednesday, May 24, 2006 - link

JarredWalton - Wednesday, May 24, 2006 - link

Sorry - that was my fault and I'll edit it. Written while not thinking I guess.

R3MF - Wednesday, May 24, 2006 - link

"If TCP/IP acceleration is enabled via the new control panel, then third party firewall applications must be switched off in order to use the feature."

this statement presumes that non third-party firewalls (i.e. nVidia firewall application) would work fine with the TCP-IP acceleration function.............?

nVidia: here is a great function, but you can't use it without getting haXXoR3d

???

Wesleyrpg - Wednesday, May 24, 2006 - link

hey anand,wheres this dodgy nforce4 networking article that you been promising for weeks?

Gary Key - Wednesday, May 24, 2006 - link

The nf4 tests with driver sets back to the 5 series is complete, waiting on release versions of the new 9.x platform drivers to see what actual changes have been made since 6.85 on the nf4 x16 boards.

Wesleyrpg - Thursday, May 25, 2006 - link

can people with the 'normal' nforce4 chipset use the 6.85 drivers or are we stuck with the bodgy 6.70 drivers.