Exclusive: ASUS Debuts AGEIA PhysX Hardware

by Derek Wilson on May 5, 2006, 3:00 AM EST, posted in GPUs

PhysX Performance

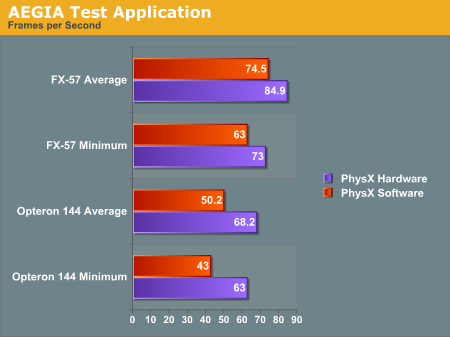

The first program we tested is AGEIA's test application. It's a small scene with a pyramid of boxes stacked up. The only thing it does is shoot a ball at the boxes. We used FRAPS to get the framerate of the test app with and without hardware support.

With the hardware, we got better minimum and average frame rates after shooting the boxes. Obviously this case is a little contrived. The scene is purely CPU limited, with no fancy graphics to load up the GPU: just a bunch of solid-colored boxes bouncing around after being shaken up a bit. Clearly the PhysX hardware can take the burden off the CPU when physics calculations are the only performance bottleneck. This is to be expected, since the same amount of work should run faster with PhysX hardware. What we still can't tell is how much more physics work the hardware would really allow.
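The reasoning above can be made concrete with a deliberately simplified throughput model. This is our own assumption, not a description of how AGEIA's runtime or any game actually schedules work: if the CPU, PPU and GPU stages overlap like a pipeline, the slowest stage sets the frame time. The stage names and millisecond costs below are hypothetical, not measurements.

```python
# Simplified pipeline model (an assumption for illustration): when stages
# overlap, throughput is set by the slowest stage, so moving work off one
# stage only helps if that stage was the bottleneck.

def frame_rate(stage_ms: dict[str, float]) -> float:
    """Frames per second when the slowest stage (cost in ms) sets the pace."""
    if not stage_ms or min(stage_ms.values()) <= 0:
        raise ValueError("need at least one stage with a positive cost")
    return 1000.0 / max(stage_ms.values())


# Hypothetical per-frame costs in milliseconds, not measured values.
software_physics = {"cpu: game logic + physics": 20.0, "gpu": 5.0}
hardware_physics = {"cpu: game logic": 12.0, "ppu: physics": 8.0, "gpu": 5.0}
gpu_bound = {"cpu: game logic + physics": 20.0, "gpu": 30.0}

for name, stages in [("software", software_physics),
                     ("hardware", hardware_physics),
                     ("gpu-bound", gpu_bound)]:
    print(f"{name}: {frame_rate(stages):.1f} fps")
```

The model ignores the cost of moving results between the CPU, the card and the GPU, which can eat into any gain. In the GPU-bound case, offloading physics changes nothing, because the GPU stage still sets the pace.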

Maybe in the future AGEIA will let us increase the number of boxes. For now, using the AGEIA PhysX card gives us 16% higher minimum frame rates and 14% higher average frame rates than the FX-57 CPU alone. Honestly, that's a little underwhelming, considering that AGEIA's own test application ought to represent something close to a best-case scenario.
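For readers who want to redo the arithmetic, the percentages here are relative gains over the CPU-only baseline. The sketch below computes such a gain and converts it into the equivalent reduction in per-frame time; the frame rates in the usage lines are hypothetical, since the exact values are only shown in the chart.

```python
def percent_gain(baseline_fps: float, new_fps: float) -> float:
    """Relative frame rate change of new_fps over baseline_fps, in percent."""
    if baseline_fps <= 0:
        raise ValueError("baseline frame rate must be positive")
    return (new_fps - baseline_fps) / baseline_fps * 100.0


def frame_time_saving(gain_percent: float) -> float:
    """Percent reduction in frame time implied by a given frame rate gain."""
    return (1.0 - 1.0 / (1.0 + gain_percent / 100.0)) * 100.0


# Hypothetical frame rates chosen only to show the arithmetic.
print(percent_gain(50.0, 57.0))   # a 7 fps rise on a 50 fps baseline
print(frame_time_saving(16.0))    # a 16% frame rate gain, as frame time saved
```

Put another way, a 16% higher frame rate means each frame takes roughly 13.8% less time, which is why the gain can feel smaller than the headline number suggests.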

Moving to the slower Opteron 144 processor, the PhysX card does seem to be a bit more helpful. Average frame rates are up 36% and minimum frame rates are up 47%. The problem is, the target audience of the PhysX card is far more likely to have a high-end processor than a low-end "chump" processor -- or at the very least, they would have an overclocked Opteron/Athlon 64.

Let's take a look at Ghost Recon and see if the story changes any.

Ghost Recon Advanced Warfighter

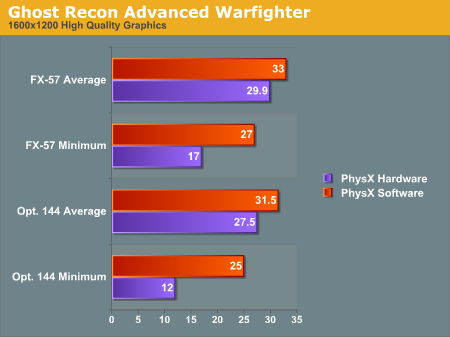

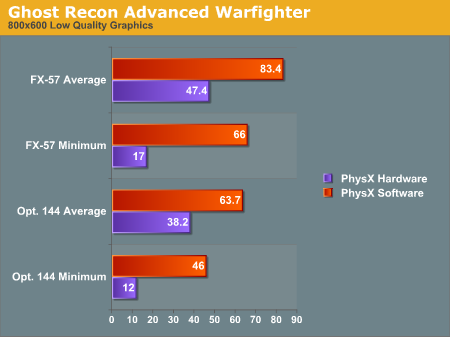

This next test will be a bit different. Rather than testing the same level of physics with hardware and software, we are only able to test the software at a low physics level and the hardware at a high physics level. We haven't been able to find any way to enable hardware quality physics without the board, nor have we discovered how to enable lower quality physics effects with the board installed. These numbers are still useful as they reflect what people will actually see.

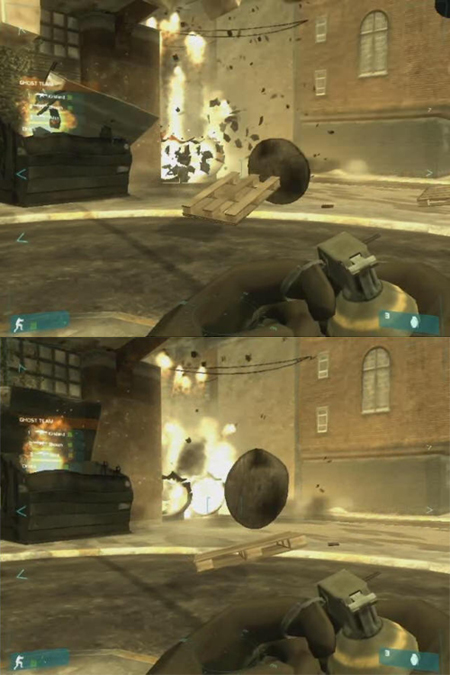

For this test, we looked at a low quality setting (800x600 with low quality textures and no AF) and a high quality setting (1600x1200 with high quality textures and 8x AF). We recorded both the minimum and the average frame rate. Here are a couple of screenshots with (top) and without (bottom) PhysX, along with the results:

The graphs show some interesting results. We see a lower frame rate in every case when using the PhysX hardware. As we said before, installing the hardware automatically enables higher quality physics, so we can't tell how much faster the PhysX hardware would be than the CPU at the same workload. Still, a couple of facts are very clear.

The average frame rate comparisons show that when the game is GPU limited, enabling the higher quality physics has relatively little impact. This is the most likely case in the near term, as the early buyers of PhysX hardware will probably also buy high-end graphics solutions and push them to their limits. The lower end CPU still has a relatively large impact on minimum frame rates, however, which suggests the PPU isn't offloading much work from the CPU core.

The average frame rates under low quality graphics settings (i.e., with the bottleneck shifted from the GPU to another part of the system) show that high quality physics has a large impact on performance behind the scenes. The game has become limited either by the PhysX card itself or by the CPU, depending on how much extra physics is going on and where different parts of the game are processed. The PhysX hardware seems the more likely bottleneck, as the gap between the 1.8 and 2.6 GHz CPUs with PhysX is smaller than the gap between the same two CPUs using software physics.
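The argument about the 1.8 and 2.6 GHz CPUs can be expressed as a scaling ratio: how much of the clock speed increase shows up as extra frame rate. A minimal sketch, assuming frame rate grows at most linearly with clock speed (which ignores memory and cache effects); the frame rates in the usage lines are hypothetical, not the values from our charts.

```python
def clock_scaling_efficiency(slow_fps: float, fast_fps: float,
                             slow_ghz: float, fast_ghz: float) -> float:
    """Share of a CPU clock increase that turns into extra frame rate.

    Assumes frame rate grows at most linearly with clock speed:
    1.0 means fully CPU bound; values near 0.0 mean the bottleneck lies
    elsewhere (for example the GPU or the PhysX card).
    """
    if slow_fps <= 0 or fast_ghz <= slow_ghz:
        raise ValueError("need a positive baseline and a faster second CPU")
    fps_ratio = fast_fps / slow_fps
    clock_ratio = fast_ghz / slow_ghz
    return (fps_ratio - 1.0) / (clock_ratio - 1.0)


# Hypothetical frame rates, for illustration only.
software = clock_scaling_efficiency(30.0, 40.0, 1.8, 2.6)
hardware = clock_scaling_efficiency(25.0, 28.0, 1.8, 2.6)
print(f"software physics: {software:.2f}, PhysX card: {hardware:.2f}")
```

Computing the ratio once for software physics and once with the card makes the comparison explicit: a lower value with the card means the faster CPU helps less, which points the bottleneck away from the CPU.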

If we shift our focus to the minimum frame rates, we notice that when physics is accelerated by hardware, our minimum frame rate drops to 17 frames per second regardless of graphics quality (12 FPS with the slower CPU). Our test consists mostly of an explosion: we record from slightly before to slightly after a grenade blows up some scenery, and the minimum frame rate occurs right after the explosion goes off.

Our working theory is that when the explosion starts, the debris that goes flying everywhere needs to be created on the fly. This can be done on the CPU, on the PhysX card, or in both places, depending on exactly how the software handles the situation. It seems most likely that the slowdown is the cost of instancing all these objects on the PhysX card and then moving them back and forth over the PCI bus and eventually to the GPU. It would certainly be interesting to see whether a faster connection for the PhysX card, like PCIe x1, could smooth things out, but that will most likely have to wait for a future generation of the hardware.
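To get a feel for the bus-transfer part of this theory, here is a rough estimate of copy times using theoretical peak bandwidths: about 133 MB/s for conventional 32-bit, 33 MHz PCI (shared by every device on the bus) and 250 MB/s per direction for a single PCIe 1.x lane. The debris count and the 64 bytes of state per object are hypothetical, not figures from the game or from AGEIA.

```python
# Theoretical peak bandwidths; real-world throughput is lower.
PCI_BYTES_PER_S = 133e6        # conventional PCI, 32-bit at 33 MHz, shared bus
PCIE1_X1_BYTES_PER_S = 250e6   # PCI Express 1.x, one lane, each direction


def transfer_ms(num_objects: int, bytes_per_object: int, bytes_per_s: float) -> float:
    """Time in milliseconds to move num_objects object states across a link."""
    return num_objects * bytes_per_object / bytes_per_s * 1000.0


# Hypothetical explosion: 5,000 debris pieces with 64 bytes of state each
# (position, orientation, velocities, padding). Not measured from the game.
for name, rate in [("PCI", PCI_BYTES_PER_S), ("PCIe x1", PCIE1_X1_BYTES_PER_S)]:
    print(f"{name}: {transfer_ms(5_000, 64, rate):.2f} ms per one-way copy")
```

At 60 fps a frame lasts about 16.7 ms, so even a couple of milliseconds per copy, repeated for each trip between devices, is a noticeable slice of the budget. Raw bandwidth is only part of the story, though; latency and synchronization between the CPU, PPU and GPU add their own costs.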

We don't feel the drop in frame rates really affects playability, as it lasts only a couple of frames (and the frame rate isn't low enough to really "feel" the stutter). However, we'll leave it to the reader to judge whether the quality gain is worth the performance loss. To help in that endeavor, we are providing two short videos (3.3MB Zip) of the benchmark sequence with and without hardware acceleration. Enjoy!

One final note: judging by the average and minimum frame rates, the physics running on the CPU is of substantially lower quality than it needs to be, at least with a fast processor. Put another way, the high quality physics may be a little too high quality right now. We say this because our frame rates, both minimum and average, are lower when using the PPU. Ideally, we want better physics quality at equal or higher frame rates. Having more objects on screen at once isn't bad, but we would definitely like to have some control over the number of additional objects.
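The kind of control we have in mind could be as simple as a detail slider combined with a per-frame physics budget. The sketch below is purely hypothetical: as far as we know, neither the game nor AGEIA's software exposes these parameters, and it assumes physics cost grows linearly with the number of debris objects.

```python
import math


def debris_count(requested: int, detail: float,
                 cost_ms_per_object: float, budget_ms: float) -> int:
    """How many debris objects to spawn for one effect.

    detail: a hypothetical user-facing slider in [0, 1] that scales the
    designer's requested count. The result is also capped so the physics
    cost, assumed here to grow linearly per object, fits within budget_ms.
    """
    if not 0.0 <= detail <= 1.0:
        raise ValueError("detail must be between 0 and 1")
    if cost_ms_per_object <= 0 or budget_ms < 0:
        raise ValueError("cost must be positive and budget non-negative")
    wanted = math.floor(requested * detail)
    affordable = math.floor(budget_ms / cost_ms_per_object)
    return min(wanted, affordable)


# Hypothetical numbers: the designer asks for 10 pieces, the player picks half
# detail, and each piece costs 0.5 ms against a generous budget.
print(debris_count(10, 0.5, 0.5, 100.0))
```

Capping by budget as well as by the slider keeps a single large explosion from dragging the minimum frame rate down, which is exactly where the PPU results looked weakest.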

101 Comments


Ickus - Saturday, May 6, 2006

Hmmm - seems like the modern equivalent of the old-school maths co-processors. Yes, these are a good idea, and correct me if I'm wrong, but isn't that $250 (Aus $) CPU I forked out for supposedly quite good at doing these sorts of calculations, what with its massive FPU capabilities and all? I KNOW that current CPUs have more than enough ability to perform the calculations for the physics engines used in today's video games. I can see why companies are interested in pushing physics add-on cards though...

"Are your games running crap due to inefficient programming and resource hungry operating systems? Then buy a physics processing unit add-in card! Guaranteed to give you an unimpressive performance benefit for about 2 months!" If these PPUs are to become mainstream and we take another backwards step in programming, please oh please let it be NVidia who takes the reins... They've done more for the multimedia industry in the last 7 years than any other company...

DerekWilson - Saturday, May 6, 2006

CPUs are quite well suited for handling physics calculations for a single object, or even a handful of objects ... physics (especially game physics) calculations are quite simple. When you have a few hundred thousand objects all bumping into each other every scene, there is no way on earth a current CPU will be able to keep up with PhysX. There are just too many data dependencies and too much of a bandwidth and parallel processing advantage on AGEIA's side.
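To put those object counts in perspective: a brute-force collision check over n objects has to test n(n-1)/2 pairs, so the work grows quadratically. Real physics engines prune most pairs with a broad phase (spatial grids, sweep-and-prune and similar) before running exact tests, so this count is an upper bound for illustration, not what any engine actually does.

```python
def naive_pair_count(n: int) -> int:
    """Pairs a brute-force collision check would test: n choose 2."""
    return n * (n - 1) // 2


for n in (10, 1_000, 300_000):
    print(f"{n:>7} objects -> {naive_pair_count(n):,} pairs")
```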

As for where we will end up if another add-in card business takes off ... well that's a little too much to speculate on for the moment :-)

thestain - Saturday, May 6, 2006

Just my opinion, but this product is too slow. Maybe there need to be minimum ratios to cpu and gpu speeds that Ageia and others can use to make sure they hit the upper performance market.

Software looks ok, looks like i might be going with software PhysX if available along with software Raid, even though i would prefer to go with the hardware... if bridged pci bus did not screw up my sound card with noise and wasn't so slow... maybe.. but my thinking is this product needs to be faster and wider... pci-e X4 or something like it, like I read in earlier articles it was supposed to be.

pci... forget it... 773 MHz... forget it... for me... 1.2 GHz and pci-e X4 would have this product rocking.

any way to short this company?

They really screwed the pooch on speed for the upper end... should rename their product a graphics decelerator for faster cpus, and a poor man's accelerator... but what person who owns a cpu worth $50 and a video card worth $50 will be willing to spend the $200 or more Ageia wants for this...

Great idea, but like the blockhead who gave us RAID hardware... not fast enough.

DerekWilson - Saturday, May 6, 2006

afaik, raid hardware becomes useful for heavy error checking configurations like raid 5. with raid 0 and raid 1 (or 10 or 0+1) there is no error correction processing overhead. in the days of slow CPUs, this overhead could suck the life out of a system with raid 5. Today it's not as big an impact in most situations (especially consumer level). raid hardware was quite a good thing, but only in situations where it is necessary.
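The processing overhead mentioned here is, in RAID 5, parity: a byte-wise XOR across the data blocks of each stripe, and the same XOR rebuilds a lost block. A minimal sketch; real controllers also rotate the parity block across disks and handle partial-stripe writes, which this ignores.

```python
from functools import reduce


def xor_blocks(blocks: list[bytes]) -> bytes:
    """Byte-wise XOR of equally sized blocks."""
    if not blocks or len({len(b) for b in blocks}) != 1:
        raise ValueError("need one or more blocks of equal length")
    # zip(*blocks) yields one tuple of byte values per position
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def parity_block(data_blocks: list[bytes]) -> bytes:
    """Parity written for one stripe: XOR of all its data blocks."""
    return xor_blocks(data_blocks)


def rebuild_missing(surviving: list[bytes], parity: bytes) -> bytes:
    """Recover the one lost data block from the survivors plus parity."""
    return xor_blocks(surviving + [parity])


stripe = [b"\x01\x02", b"\x10\x20", b"\xff\x00"]
parity = parity_block(stripe)
print(rebuild_missing([stripe[0], stripe[2]], parity) == stripe[1])
```

Every write to a stripe means recomputing its parity, which is the work a hardware controller takes off the CPU; RAID 0 and RAID 1 have no parity step at all.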

Cybercat - Saturday, May 6, 2006

I was reading the article on FiringSquad (exact page here: http://www.firingsquad.com/features/ageia_physx_re...) where Ageia responded to Havok's claims about where the credit is due, performance hits, etc., and on performance hits they said:

So they essentially responded immediately with a driver update that supposedly improves performance.

http://ageia.com/physx/drivers.html

Driver support is certainly a concern with any new hardware, but if Ageia keeps up this kind of timely response to issues and performance with frequent driver updates, in my mind they'll have taken care of one of the major factors in determining their success, and tipped the balance of their advantages against their obstacles to making it in the market.

toyota - Friday, May 5, 2006

i don't get it. Ghost Recon videos WITHOUT PhysX look much more natural. the videos i have seen with it look pretty stupid. everything that blows up or gets shot has the same little black pieces flying around. i have shot up quite a few things in my life and seen plenty of videos of real explosions and that's not what it looks like.

DeathBooger - Friday, May 5, 2006

The PC version of the new Ghost Recon game was supposed to be released alongside the Xbox 360 version but was delayed at the last minute by a couple of months. My guess is that the PhysX implementation was an afterthought while developing the game and the delay came from the developers trying to figure out what to do with it.

shecknoscopy - Friday, May 5, 2006

Think *you're* part of a niche market? I gotta tell you, as a scientist, this whole topic of putting 'physics' into games makes for an intensely amusing read. Of course I understand what's meant here, but when I first look at people's text in these articles/discussions, I'm always taken aback: "Wait? We need to *add* physics to stuff? Man, THAT's why my experiments have been failing!"

Anyway...

I wonder if the types of computations employed by our controversial little PhysX accelerator could be harvested *outside* of the gaming environment. As someone who both loves to game and would love to telecommute to my lab, I'd ideally like to be able to handle both tasks using one machine (I'm talking about in-house molecular modeling, crystallographic analysis, etc.). Right now I have to rely on a more appropriate 'gaming' GPU at home, but hustle on in to work to use an essentially identical computer which has been outfitted with a Quadro graphics card to do my crazy experiments. I guess I'm curious if it's plausible to make, say, a 7900GT + PhysX perform calculations comparable to a Quadro/Fire-style workstation graphics setup. 'Cause seriously, trying to play BF2 on your $1500 Quadro card is seriously disappointing. But then, so is trying to perform realtime molecular electron density rendering on your $320 7900GT.

SO - anybody got any ideas? Some intimate knowledge of the difference between these types of calculations? Or some intimate knowledge of where I can get free pizza and beer? Ah, Grad School.

Walter Williams - Friday, May 5, 2006

It will be great for military use as well as the automobile industry.

escapedturkey - Friday, May 5, 2006

Why don't developers use the second core of many dual core systems to do a lot of physics calculations? Is there a drawback to this?