NVIDIA's Tiny 90nm G71 and G73: GeForce 7900 and 7600 Debut

by Derek Wilson on March 9, 2006 10:00 AM EST - Posted in GPUs

The Test and Power

For our test setup, we are using two different dual x16 ASUS boards: one based on NVIDIA core logic and the other on ATI core logic. For all tests with NVIDIA GPUs we used the NVIDIA motherboard, and for all tests with ATI GPUs we used the ATI-based motherboard. All power tests were performed using the same motherboard (the RD580 board).

In an attempt to keep everything readable and manageable, we have split up the high end and mid range comparisons. Our high end parts will be compared at 1280x1024, 1600x1200, 1920x1440, and 2048x1536. The mid range comparison will look at 1024x768, 1280x1024, and 1600x1200. All SLI and CrossFire tests will be included with the high end data.
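To give a rough sense of how the workload grows across these resolutions, the pixels per frame can be worked out directly. The sketch below is plain arithmetic on the resolutions listed above; the function and variable names are ours for illustration, and pixel count is only a loose proxy for rendering load, not a prediction of frame rates.

```python
# Resolutions taken from the test description above.
HIGH_END = [(1280, 1024), (1600, 1200), (1920, 1440), (2048, 1536)]
MID_RANGE = [(1024, 768), (1280, 1024), (1600, 1200)]


def pixel_count(width, height):
    """Number of pixels in a single frame at the given resolution."""
    return width * height


def relative_load(resolutions):
    """Pixel count of each resolution relative to the first (lowest) one."""
    base = pixel_count(*resolutions[0])
    return {
        f"{w}x{h}": round(pixel_count(w, h) / base, 2)
        for w, h in resolutions
    }


if __name__ == "__main__":
    for label, group in (("high end", HIGH_END), ("mid range", MID_RANGE)):
        print(label, relative_load(group))
```

By this measure, the top high end resolution pushes 2.4 times as many pixels per frame as 1280x1024.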

Test Setup:

ASUS A8N32 NVIDIA nForce 4 X16 SLI Motherboard

ASUS A8R32 ATI RD580 Motherboard

AMD Athlon 64 FX-57

2GB OCZ 2.5:3:3:8 DDR400 RAM

160GB Seagate 7200.7 Hard Drive

600W OCZ PowerStream PSU

Drivers:

NVIDIA ForceWare 84.17 (Beta)

ATI Catalyst 6.2

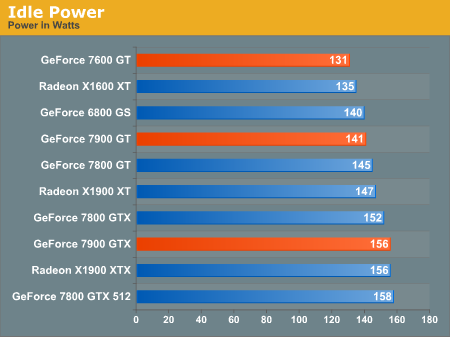

For power consumption, we once again take a look at the power draw of the system at the wall using our trusty Kill-A-Watt. Load power was measured while running the 3DMark06 feature tests, as they tend to provide something near a worst-case power load. What we see in games is usually a handful of watts lower than this. For idle power, we don't see that much difference between the high end cards, and the 7900 GT is similar in power to the 6800 GS. The 7600 GT seems to be on par with the X1600 XT for idle power.

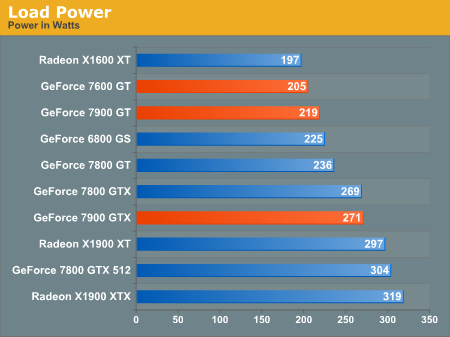

When it comes to load, the new NVIDIA parts simply clean up. The performance per watt leader in this contest is hands down NVIDIA. The 7600 GT and 7900 GT both come in at lower power than the 6800 GS, and the 7900 GTX pulls the same wattage as the much lower clocked 7800 GTX.
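For readers who want to put a number on performance per watt, a minimal sketch follows. It assumes you have an average frame rate and a wall power reading for each card; the names `perf_per_watt`, `card_a`, and `card_b` are hypothetical, and the values are placeholders, not measurements from this article. Because power here is measured at the wall, the result describes the whole system rather than the graphics card alone.

```python
def perf_per_watt(avg_fps, system_watts):
    """Frames per second delivered per watt drawn at the wall."""
    if system_watts <= 0:
        raise ValueError("system_watts must be positive")
    return avg_fps / system_watts


# PLACEHOLDER values for illustration only; substitute your own
# benchmark results and wall power readings.
samples = {
    "card_a": (60.0, 300.0),  # (average fps, system watts under load)
    "card_b": (60.0, 250.0),
}

# Rank cards from most to least efficient.
ranking = sorted(
    samples,
    key=lambda name: perf_per_watt(*samples[name]),
    reverse=True,
)

if __name__ == "__main__":
    for name in ranking:
        print(f"{name}: {perf_per_watt(*samples[name]):.3f} fps/W")
```

Two cards with equal frame rates are separated entirely by their power draw, which is the situation described above for the 7900 GTX and 7800 GTX on the power side.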

97 Comments

DigitalFreak - Thursday, March 9, 2006 - link

I saw that as well. Any comments, Derek?

DerekWilson - Thursday, March 9, 2006 - link

I did not see any texture shimmering during testing, but I will make sure to look very closely during our follow-up testing.

Thanks,

Derek Wilson

jmke - Thursday, March 9, 2006 - link

just dropped by to say that you did a great job here, plenty of info, good benchmarks, nice "load/idle" tests. not many people here know how stressful benchmarking against the clock can be. keep up the good work. Looking forward to the follow-up!

Spinne - Thursday, March 9, 2006 - link

I think it's safe to say that at least for now, there is no clear winner, with a slight advantage to ATI. From the benchmarks, it seems that the 7900GTX performs on par with the X1900XT, with the X1900XTX a few fps higher (not a huge diff IMO). The future will therefore be decided by the drivers and the games. The drivers are still pretty young and I bet we'll see performance improvements in the future as both sets of drivers mature. The article says ATI has the more comprehensive graphics solution (sorta like the R420 vs. the NV40 situation in reverse?), so if code developers decide to take advantage of the greater functionality offered by ATI (most coders will probably aim for the lowest common denominator to increase sales, while a few may have 'ATI only' type features) then that may tilt the balance in ATI's favor. What's more important is the longevity of the R580 & G71 families. With VISTA set to appear towards the end of the year, how long can ATI and NVIDIA push these DX9 parts? I'm sure both companies will have a new family ready for VISTA, though the new family may just be a more refined version of the R580 and G71 architectures (much as the R420 was based off the R300 family). In terms of raw power, I think we're already VISTA games ready.

The real question is, what does DX10 bring to the table from the perspective of the end user? There were certain features unique to DX9 that a DX8 part just could not render. Are there things that a DX10 card will be able to do that a DX9 card just can't do? As I understand it, the main difference between DX9 and 10 is that DX10 will unify Pixel Shaders and Vertex shaders, but I don't see how this will let a DX10 card render something that a DX9 card can't. Can anyone clarify?

Lastly, one great benefit of Crossfire and SLI will be that I can buy a high end X1900XT for gaming right now and then add a low end card or an HD accelerator card (like the MPEG accelerator cards a few years ago) when it is clear if HDCP support will be necessary to play HD content and when I can afford to add an HDCP compliant monitor.

yacoub - Friday, March 10, 2006 - link

And price, bro, and price. Two cards at the same performance level from two different companies = great time for a price war. Especially when one has the die shrink advantage as an incentive to drop the price to squeeze out the other's profits.

bob661 - Thursday, March 9, 2006 - link

I only buy based on games I play anyways but it's good to see them close in performance.

DerekWilson - Thursday, March 9, 2006 - link

The major difference that DX9 parts will "just not be able to do" is vertex generation and "geometry shading" in hardware. Currently a vertex shader program can only manipulate existing data, while in the future it will be possible to adaptively create or destroy vertices.

Programmatically, the transition from DX9 to DX10 will be one of the largest we have seen in a while. Or so we have been told. Some form of the DX10 SDK (not sure if it was a beta or not) was recently released, so I may look into that for more details if people are interested.

feraltoad - Friday, March 10, 2006 - link

I too would be very interested to learn more about DX10. I have looked online but I haven't really seen anything beyond the unification u mentioned.

Also Unreal 2007 does look ungodly, and I didn't even think to wonder if it was DX9 or 10 like the other poster. Will it be comparable to games that will run on 8.1 hardware sans DX9 effects? That engine will make them big bux when they license it out. Sidenote-I read they were running demos of it with a quad SLI setup to showcase the game. I wonder what it will need to run it at full tilt?

BTW Derek I think you do a very good job at AT, I always find your articles full of good common sense advice. When U did a review on the 3000+ budget gaming platform I jumped on the A64 bandwagon (I had to get an AsrockDual tho, instead of an NF4 cuz I wanted to keep my AGP 6600gt, and that's sad now considering the 7900 gt performance/price in sli compared to a 7900gtx.) and I've been really happy with my 3000+ at 2250 (it runs noticeably better than my XP2400M oc'd 2.2). I'm just one example of someone you & AT have made more satisfied with their PC experience. So don't let disparaging comments get you down. Your thorough commitment to the accuracy of your work shows how you accept criticism with grace and correct mistakes swiftly. I think the only thing "slipping" around here are peoples' manners.

Spinne - Thursday, March 9, 2006 - link

Yes, please do! So if you can actually generate vertices, the impact would be that you'd be able to do stuff like the character's hair flying apart in a light breeze without having to create the hair as a high poly model, right? What about the Unreal3 engine? Is it a DX9 or DX10 engine?

Rock Hydra - Thursday, March 9, 2006 - link

I didn't read all of that, but I'm glad it's close because the consumer becomes the real winner.