ATI's New Leader in Graphics Performance: The Radeon X1900 Series

by Derek Wilson & Josh Venning on January 24, 2006 12:00 PM EST. Posted in GPUs

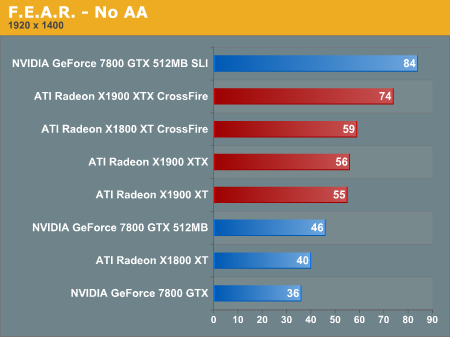

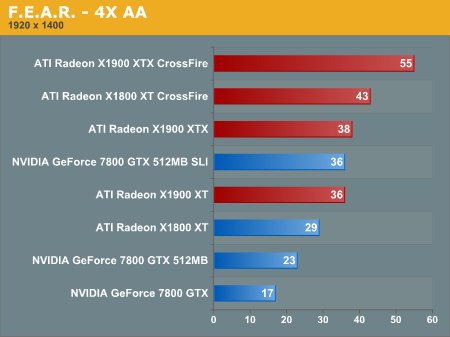

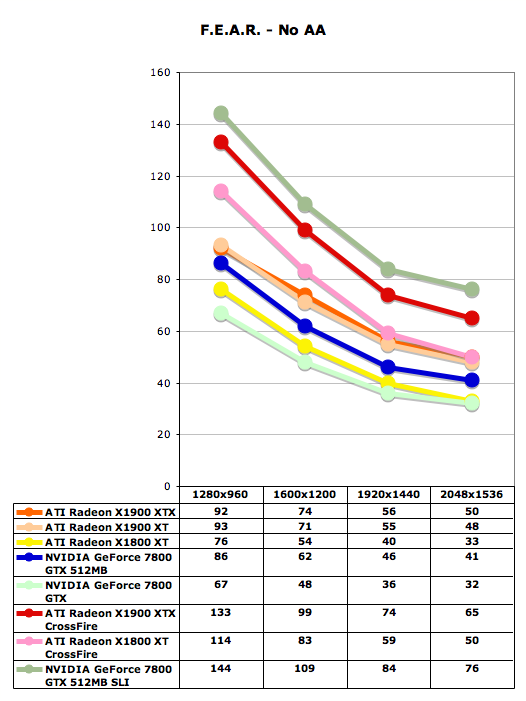

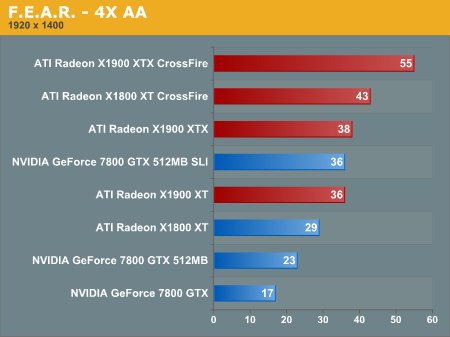

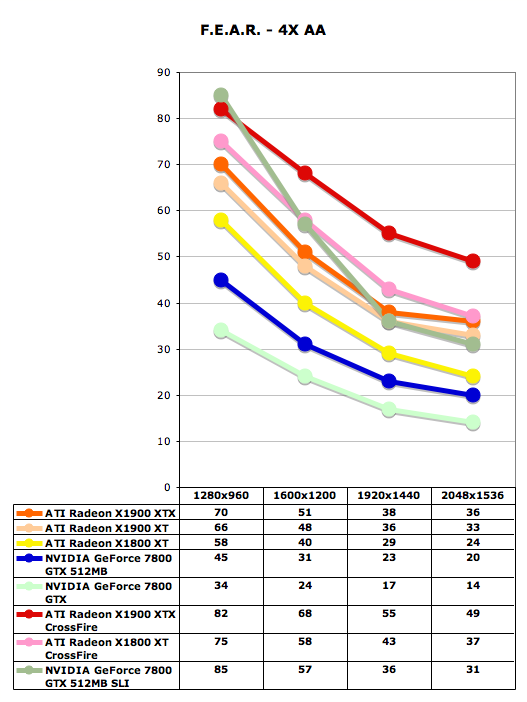

F.E.A.R. Performance

Notoriously demanding, F.E.A.R. puts a very high strain on graphics hardware, which makes it another great benchmark for these ultra high-end cards. The game's graphical quality is high, and it's enjoyable to watch these cards tackle the F.E.A.R. demo.

With F.E.A.R. we have a situation similar to Black and White 2: the game does so much better with its own maximum quality settings that using the driver's max settings would be impractical. We find that it favors ATI hardware overall, both with and without 4xAA enabled. This game looks like just the kind of thing the X1900 XTX Crossfire setup was made for, as it handles 2048x1536 with 4xAA with ease (49 fps).

120 Comments

ChronoReverse - Tuesday, January 24, 2006 - link

Indeed. My 6800LE cost $129 and unlocked, gets pretty close to 6800GT speeds.

Obviously not as good, but still pretty damn good and I paid a lot less too.

It's been like this since the TNT2 M64 came out.

mi1stormilst - Tuesday, January 24, 2006 - link

I am taking issue with ATI and Anandtech on this one:

1.) The bloody X1800 series is pretty dang new, they are talking about phasing it out when I have not even had a chance to use the AVIVO video tool yet WTF!?

2.) The section of the article "Performance Breakdown" (http://anandtech.com/video/showdoc.aspx?i=2679&...) is very misleading to say the least. I think you owe the readers an explanation of how you arrived at those numbers. Did you test at one resolution? Only testing at a resolution that gives the ATI card the advantage is hardly fair. I know a lot of gamers, including myself, who still generally game at 1024x768; how do the cards fare at that resolution? What are the real differences overall? I think you should either pull this out of the article altogether or test at least 3 common resolutions (1024x768), (1280x1024) & (1600x1200).

Just my two cents.

GTMan - Tuesday, January 24, 2006 - link

Yes, your card is now out of date. You should stop using it. I'll give you $5 for it if that would make you feel better.

mi1stormilst - Wednesday, January 25, 2006 - link

HAHA! My 8 year old kid will inherit the X1800XL in a few months after I order the X1900XT. I bet that bothers you ... no? (-;

beggerking - Tuesday, January 24, 2006 - link

I 2nd that!!

b/w, this is a repost. lol.. see my comments above..

DerekWilson - Tuesday, January 24, 2006 - link

I appreciate your comments.

The point about the X1800 is well taken. It would be hard for us to expect them to push back their "refresh" part because they dropped the ball on the R520. And it also doesn't make economic sense to totally scrap the R520 before it gets out the door.

It was a tough situation. At least ATI didn't take as much of a bath on the R520 as NVIDIA did on NV30 ...

But again, I certainly understand your sentiment.

Second, I did explain where the numbers came from. 2048x1536 with 4xAA in each game. The graph mentions that we calculated percent increase to x1900 performance -- which means our equation looks like this --

((x1900 score) - (competing score)) / competing score * 100
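As a minimal sketch, the percent-increase equation above can be written out as follows. The function name and the sample frame rates are hypothetical, chosen only for illustration; they are not figures from the review.

```python
def percent_increase(x1900_score: float, competing_score: float) -> float:
    """Percent by which the X1900 score exceeds the competing card's score.

    Mirrors the equation quoted above:
    ((x1900 score) - (competing score)) / competing score * 100
    """
    if competing_score <= 0:
        # A zero or negative frame rate would make the ratio meaningless.
        raise ValueError("competing score must be positive")
    return (x1900_score - competing_score) / competing_score * 100


# Hypothetical frame rates (assumed values, not taken from the article's graphs).
example = percent_increase(75.0, 50.0)
print(f"{example:.1f}% faster")  # prints "50.0% faster"
```

A negative result simply means the competing card was faster in that test.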

if you game at 1024x768, you have absolutely no business buying a $600 video card.

again ... ^^

we did test 12x10 and 16x12 and people who want those results can easily see them on each game test page.

This is a high end card and it seems like the best fit to describe performance is a high end test. If we did a 1024x768 test it would just be an exercise in observing the cpu overhead of the driver and how well the fx57 was able to handle it.

Our intention is not to mislead. But people often want a quick overview, and detail and accuracy are fundamentally at odds with the idea of a quick and easy demonstration. Our understanding is that people interested in this card are interested in high quality, high res performance, so this cross section of performance seemed the most logical.

mi1stormilst - Wednesday, January 25, 2006 - link

Thanks for responding (-:

I do think benchmarking at 1024x768 is perfectly valid. I for one game at 1024x768 with all the candy turned on, so not only can I enjoy the looks of the game but I can still render high frames while playing online. When I want to enjoy the single player option I am willing to let the frames dive down to the bare minimums so I can enjoy all that the game has to offer without getting lag killed. So I think that justifies my reasons to want a $600.00 video card (-;

Although I was slightly incorrect about how you benchmarked, I stand by my feeling that it is not a TRUE representation of the card's performance across the board. It is a snapshot which leaves a lot of holes unfilled. I would feel cheated as a customer to find that it did not perform as well as I had been led to believe if the way I wanted to use it was not optimized as well as another way. Understood?

Thanks for the time you have spent evaluating it...it does give us an overall feeling and of course when I am looking at spending that kind of money you can bet I will be doing a lot more than reading one review (-:

Garrett

beggerking - Tuesday, January 24, 2006 - link

It is kind of misleading since Nvidia leads in max quality test in a few games, but the advantage is still given to ATI.

x1900xtx is a better performing card overall, but it is not THAT much better. quite an exaggeration.

blahoink01 - Tuesday, January 24, 2006 - link

It would be nice to see World of Warcraft included in the benchmark set. Considering it is probably the most popular game in the world, I'm sure many readers would find the benchmarks useful.

fishbits - Tuesday, January 24, 2006 - link

Why, was the WoW engine changed recently? It's easy to max out WoW display settings on far less capable cards, so what useful information would come from benchmarking it with bleeding edge gear? Unless maybe you're running it on some massive $3000 monitor, in which case upgrading to a 300-500 dollar video card should be a no-brainer. The only useful benchmark would be "How would my older video card handle WoW?" and that's already been done. Must be missing something here.