ATI's X1000 Series: Extended Performance Testing

by Derek Wilson on October 7, 2005 10:15 AM EST - Posted in GPUs

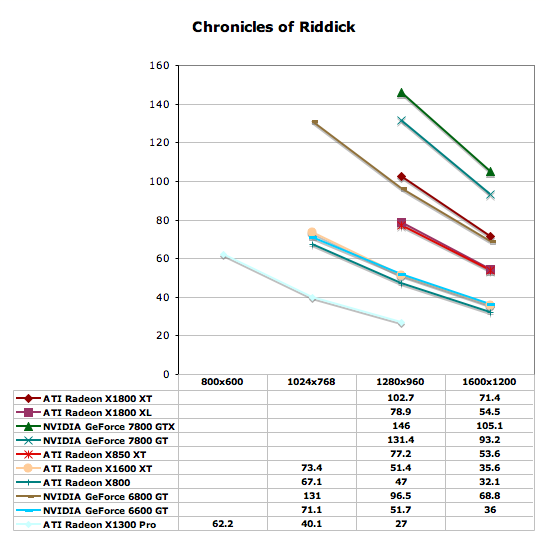

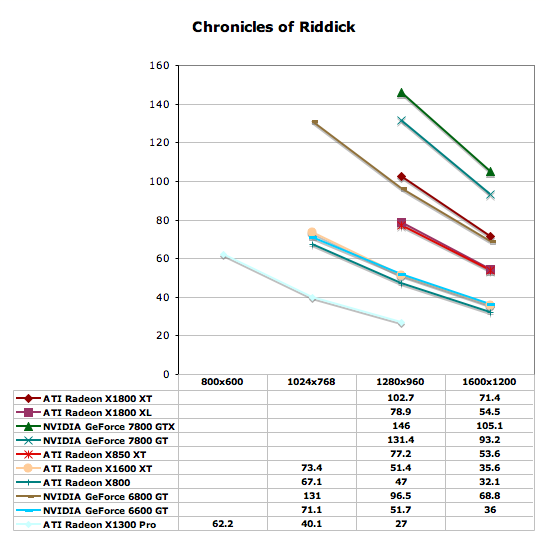

The Chronicles of Riddick Performance

We don't have as much data as we would like for The Chronicles of Riddick, as the game itself appears to check the capabilities of our monitor before allowing a setting to be run. While the monitor that we use to test games at high resolutions is capable of running at up to 2048x1536, the data that it reports to graphics cards is unreliable. NVIDIA and ATI both allow the limits of one's monitor to be specified manually, but in this case, the game goes straight to the flawed source. In any case, all of these tests were run with the shader level set to 2.0.

With the data that we have, the high-end NVIDIA cards absolutely dominate this benchmark. The 6800 GT even performs as well as the X1800 XT. The X850 XT keeps up with the X1800 XL, and the only surprise is that the X1600 XT performs the same as the 6600 GT. Once again, the playability of the X1300 Pro is limited to 1024x768.


93 Comments


waldo - Friday, October 7, 2005 - link

I have been one that has been critical of the video card reviews, and am pleasantly surprised with this review! Thanks for the work Derek, and I am sure the overtime it took to punch this together...I can only imagine the hours you had to pull to put this together. That is why I love AnandTech! Great site, and responsive to the readers! Cheers!

DerekWilson - Friday, October 7, 2005 - link

Anything we can do to help :-)

I am glad that this article was satisfactory, and I regret that we were unable to provide this amount of coverage in our initial article.

Keep letting us know what you want and we will keep doing our best to deliver.

Thanks,

Derek Wilson

supafly - Friday, October 7, 2005 - link

Maybe I missed it, but what system are these tests being done on?

The tests from "ATI's Late Response to G70 - Radeon X1800, X1600 and X1300" were using:

ATI Radeon Express 200 based system

AMD Athlon 64 FX-55

1GB DDR400 2:2:2:8

120 GB Seagate 7200.7 HD

600 W OCZ PowerStreams PSU

Is this one the same? I would be interested to see the same tests run on a NF4 motherboard.

supafly - Friday, October 7, 2005 - link

Ahh, I skipped over that last part.. "The test system that we employed is the one used for our initial tests of the hardware."

I would still like to see it on a NF4 mobo.

photoguy99 - Friday, October 7, 2005 - link

Vista will have DirectX 10, which adds geometry shaders and other bits.

The ATI cards will run Vista of course, but do everything DX10 hardware is capable of.

photoguy99 - Saturday, October 8, 2005 - link

Sorry, I meant the new ATI cards will *not* be DX10 compatible.

The biggest difference is DX10 will introduce geometry shaders which is a whole new architectural concept.

This is a big difference that will make the X1800XT seem out of date.

The question is when will it seem out of date. Another year for Vista to be released with DX10, and then how long before a game not only has a DX10 rendering path, but has it do something interesting?

Hard to say - it could be the games with a DX10 rendering path show little difference, it could be you see a lot more geometry detail in UT2007.

Make your predictions, spend your money, good luck.

Chadder007 - Friday, October 7, 2005 - link

Sooo...the new ATI's are pre-DX10 compliant? If so, what about the new Nvidia parts?

DerekWilson - Friday, October 7, 2005 - link

This is not true -- DX10-specific functions will not be compatible with either new ATI or NVIDIA hardware.

Games written for Vista will be required to support DX9 initially and DX10 will be the advanced featureset. This will be to support hardware from the Radeon 9700 and GeForce FX series through the Radeon X1K and 7800 series.

There is currently no hardware that is DX10 capable.

Xenoterranos - Saturday, October 8, 2005 - link

I'm just hoping NVIDIA doesn't go braindead again on the DX compliance. I'm still stuck with a non-fully compatible 5900 card. It runs HL2 very well even at high settings, but I know I'm missing all the pretty DX9 stuff. I probably won't get another card until DX10 hits, and then buy the first card that fully supports it.

JarredWalton - Saturday, October 8, 2005 - link

Well, part of that is marketing. DX9 graphics are better than DX8.1, but it's not a massive difference on many games. Far Cry is almost entirely DX8.1, and other than a slight change to the water, you're missing nothing but performance.

It's like the SM2.0 vs. SM3.0 debate. SM3.0 does allow for more complex coding, but mostly it just makes it so that the developers don't have to unroll loops. HDR, instancing, displacement mapping, etc. can all be done with SM2.0; it's just more difficult to accomplish and may not perform quite as fast.

Okay, I haven't ever coded SM2.0 or 3.0 (advanced graphics programming is beyond my skill level), but that's how I understand things to be. The SM3.0 brouhaha was courtesy of NVIDIA marketing, just like the full DX9 hubbub was from ATI marketing. Anyway, MS and Intel have proven repeatedly that marketing is at least as important as technology.