Gigabyte's i-RAM: Affordable Solid State Storage

by Anand Lal Shimpi on July 25, 2005 3:50 PM EST - Posted in Storage

For years now, motherboard manufacturers have been struggling to find other markets to branch out to, in an attempt to diversify themselves, preparing for inevitable consolidation in the market. Every year at Computex, we'd hear more and more about how the motherboard business was getting tougher and we'd see more and more non-motherboard products from these manufacturers. For the most part, the non-motherboard products weren't anything special. Everyone went into making servers, then multimedia products, then cases, networking, security, water cooling; the list goes on and on.

This year's Computex wasn't very different, except for one thing. When Gigabyte showed us their collection of goodies for the new year, we were actually quite interested in one of them. And after we posted an article about it, we found that quite a few of you were very interested in it too. Gigabyte's i-RAM was an immediate success, though it wasn't so much the product itself as the idea behind it that piqued everyone's interest.

Pretty much every time a faster CPU is released, we hear from a group of users who marvel at the rate at which CPUs get faster, but loathe the sluggish rate at which storage evolves. We've been stuck with hard disks for decades now, and although the thought of eventually migrating to solid state storage has always been there, it's always been so very distant. These days, you can easily get a multi-gigabyte solid state drive if you're willing to spend the tens of thousands of dollars it can cost to get one; prices actually vary from the low $1000s to the $100K range for solid state devices, obviously making them impractical for desktop users.

The performance benefits of solid state storage have always been tempting. With no moving parts, reliability is improved tremendously, and at the same time, random accesses are no longer limited by slow, difficult-to-position read/write heads. While sequential transfer rates have improved tremendously over the past five years, thanks to ever-increasing platter densities among other improvements, it is the incredibly high latency that makes random accesses very expensive from a performance standpoint for conventional hard disks. A huge reduction in random access latency and an increase in peak bandwidth are clear performance advantages of solid state storage, but until now, they both came at a very high price.
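To put rough numbers on why latency dominates random accesses, here is a minimal sketch of a simple cost model: each request pays a fixed access latency and then transfers at peak bandwidth. The function name and every latency and bandwidth figure below are assumptions chosen for illustration only; they are not measurements of the i-RAM or of any particular hard disk.

```python
def effective_throughput_mb_s(request_kb: float,
                              latency_ms: float,
                              bandwidth_mb_s: float) -> float:
    """Effective MB/s when each request pays a fixed latency before transferring."""
    request_mb = request_kb / 1024.0
    transfer_s = request_mb / bandwidth_mb_s       # time spent actually moving data
    total_s = latency_ms / 1000.0 + transfer_s     # access latency + transfer time
    return request_mb / total_s


# Hypothetical device profiles (assumed values, for illustration only).
HARD_DISK = {"latency_ms": 8.0, "bandwidth_mb_s": 70.0}
DRAM_DRIVE = {"latency_ms": 0.05, "bandwidth_mb_s": 150.0}

if __name__ == "__main__":
    for request_kb in (4, 64, 1024):
        hdd = effective_throughput_mb_s(request_kb, **HARD_DISK)
        ram = effective_throughput_mb_s(request_kb, **DRAM_DRIVE)
        print(f"{request_kb:>5} KB requests: "
              f"hard disk {hdd:7.2f} MB/s, DRAM drive {ram:7.2f} MB/s")
```

Under these assumed figures, small random requests on the hard disk spend nearly all of their time waiting on latency, so effective throughput falls far below the sequential rate; as requests grow larger, both devices approach their peak bandwidth and the gap narrows.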

The other issue with DRAM-based solid state storage is that DRAM is volatile, meaning that as soon as power is removed from the drive, all of your data is lost. More expensive solutions get around this by combining a battery backup with a hard disk that keeps a copy of all data written to the solid state drive, just in case the battery or main power should fail.

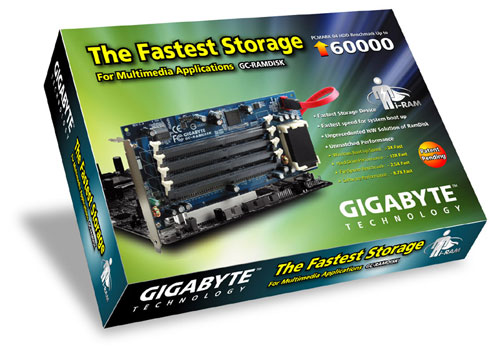

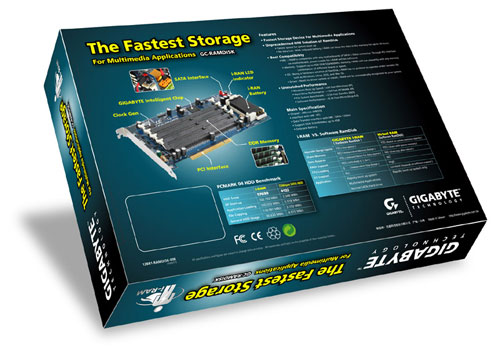

Recognizing the allure of solid state storage, especially to performance-conscious enthusiast users, Gigabyte went about creating the first affordable solid state storage device, and they called it i-RAM.

Through some custom logic, the i-RAM works and acts just like a regular SATA hard drive. But how much of a performance increase is there for desktop users? And is the i-RAM worth its still fairly high cost of entry? We've spent the past week trying to find out...

133 Comments

Sea Shadow - Monday, July 25, 2005 - link

I wonder if the OS is the limiting factor. They should run some tests using other os *cough* linux *cough*.

What would be really neat is if they could design an i-Ram device that uses 2 HDD bays and supported 8+ GB of ram and ran from a standard molex.

sprockkets - Tuesday, July 26, 2005 - link

You people think about using Knoppix and copying to the drive? For that matter, that and stuff like damnsmalllinux and such can be run totally from system ram.

Instead of using this for a silent drive, though, you are better off using flash memory drives for that.

ViRGE - Monday, July 25, 2005 - link

Unfortunately if they did that, it would mean that your computer could never be turned off. As noted in the review, the card is currently still powered even if the machine is "off", due to the fact that when a modern ATX computer is off, it's actually more of a super-standby mode that leaves a few choice items powered on for wake-on events (LAN/modems, and the power button of course). All Gigabyte is doing here is tapping into the 3.3v line on the PCI slot that wake-on power is provided through, which is enough to keep the device powered up even when the system is in its diminished state.

Molex plugs on the other hand are completely powered down when the system is "off", so it would be running off of battery power in this case. A lot of us leave our systems on 24/7 anyhow, but I still think they'd have a hard time selling a device that would require your computer to be off for no more than 16 hours at a time.

Gatak - Monday, July 25, 2005 - link

They could use the USB power. On most motherboards you can enable with a jumper or BIOS to supply standby power to the USB. Often the setting is called "Wake on USB" or "Wake on Keyboard" etc

reactor - Monday, July 25, 2005 - link

"What would be really neat is if they could design an i-Ram device that uses 2 HDD bays and supported 8+ GB of ram and ran from a standard molex."

Was thinking something similar myself as i was reading.

I think once ram modules are 4gb or larger, then this could be very useful. But not until it gets updated with sata2, ddr400 etc. When the time comes to build an HTPC then I'll give this another look.

Nice article.

ranger203 - Monday, July 25, 2005 - link

Not too shabby, but i was honestly expecting like 3 second boots, & 5 second game load times... why is there only a 20% speed increase in some areas?

Griswold - Thursday, July 28, 2005 - link

Because the data still has to be processed after being loaded - bandwidth is obviously not the biggest bottleneck here.

forwhom - Tuesday, July 26, 2005 - link

What I would be very interested in seeing is the performance of the thing using it as the source for encoding a dvd/mpeg... Most encoders are heavily disk-based and if it could reduce the time significantly it might be worth while - assuming that eventually they come out with one big enough to hold the source. There's now reasonable CPU encode performance, just have to get the data to/from it... maybe the i-drive would help..

highlandsun - Monday, July 25, 2005 - link

Hmmm, the WD Raptor has a sustained transfer rate of 72MB/sec. So on a freshly formatted drive, with no fragmentation, it should still be half the speed of the iRam. But at $200 for a 74GB drive, then you could get a pair of these running in RAID0, which would run at around 140MB/sec anyway, and still have spent less than the cost of the iRam and 4GB of DDR DIMMs. It definitely seems like this product falls short.

The use of PCI 3.3V standby power is clever. Perhaps a future version should just use a dummy PCI card to provide the power, connected to a 5" drive-size case with many more DIMM slots. If you can't cram at least 16 DIMMs in there, then the ability to use old memory is kind of wasted, since the old modules will have such small capacities.

Ultimately I think this type of product will always be a failure.

What they should do instead is make it a pass-through cache for a real SATA drive. So you plug the SATA controller into it, and plug it into a real SATA drive, and it caches all I/O operations to the real drive. That's the only way that you can get meaningful benefit out of only 4GB of memory. A card like this would turn any SATA drive into a speed demon; 4GB is definitely a decent size for most caching purposes.

highlandsun - Monday, July 25, 2005 - link

Of course the next logical step is to put the DIMM slots on the SATA controller card, so that access to cached data occurs at real memory speeds, not just SATA bus speed. This would only be a useful product for folks stuck on 32-bit systems, because otherwise it would be best to just increase the system memory instead. But there are plenty of 32-bit systems out there that would benefit from the approach.