Vendor Cards: MSI NX7800GTX

by Derek Wilson & Josh Venning on July 24, 2005 10:54 PM EST - Posted in GPUs

Overclocking

Unlike the EVGA e-GeForce 7800 GTX that we reviewed, the MSI NX7800 GTX did not come factory overclocked. The core clock was 430MHz and the memory clock was set at 1.20GHz, the same as our reference card. We were, however, able to overclock our MSI card a bit further than the EVGA, reaching 485MHz core and 1.25GHz memory. Our EVGA card only reached 475MHz, and we'll see how the numbers stand up to each other in the next section.

We used the same method as mentioned in the EVGA article to get our overclock speeds. To recap, we turned up the core and memory clock speeds in increments and ran Battlefield 2 benchmarks to test stability until the game no longer ran cleanly. Then we backed the clocks down until the card was stable and used those numbers.
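The step-up-then-back-down procedure recapped above can be sketched in a few lines of Python. This is only an illustration of the search logic, not a description of any actual tool: `find_stable_clock`, its parameters, and the `is_stable` callback (which stands in for a manual Battlefield 2 benchmark run at a given clock) are all hypothetical names.

```python
from typing import Callable


def find_stable_clock(
    start_mhz: int,
    max_mhz: int,
    coarse_step: int,
    fine_step: int,
    is_stable: Callable[[int], bool],
) -> int:
    """Raise the clock until a run fails, then back down until it passes.

    `is_stable` is a hypothetical callback standing in for a benchmark run
    at the given clock; it returns True when the run completes cleanly.
    """
    clock = start_mhz

    # Phase 1: step up in coarse increments while runs still pass.
    while clock < max_mhz and is_stable(clock):
        clock += coarse_step
    clock = min(clock, max_mhz)

    # Phase 2: back down in finer increments until a run passes again.
    while clock > start_mhz and not is_stable(clock):
        clock -= fine_step

    # Never report less than the starting (stock) clock.
    return max(clock, start_mhz)
```

With a stand-in callback such as `lambda mhz: mhz <= 485`, a call like `find_stable_clock(430, 520, 10, 5, ...)` climbs to 490MHz, fails there, and backs down to 485MHz. The 485MHz threshold in that callback is just a placeholder for whatever a real card tolerates.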

For some more general info about how we deal with overclocking, check the overclocking section of the last article on the EVGA e-GeForce 7800 GTX. The story doesn't end here, however. We have been doing some digging regarding NVIDIA's handling of clock speed adjustment and have found some quite problematic information.

After talking to NVIDIA at length, we have learned that it is more difficult to actually set the 7800 GTX to a particular clock speed than it is to achieve 18pi miles per hour in a Ferrari. Apparently, NVIDIA looks at clock speed adjustments as a "speed knob" rather than a real clock speed control. The granularity of NVIDIA's clock speed adjustment is not incremental by 1 MHz as the Coolbits slider would have us believe. There are multiple clock partitions with "most of the die area" being clocked at 430MHz on the reference card. This makes it difficult to say what parts of the chip are running at a particular frequency at any given time.

Presuming that we should know exactly how fast every transistor is running at all times is absurd; we don't have any such info on CPUs, yet there's no problem there. When working to overclock CPUs, we look at multipliers and bus speeds, giving us good reference points. If core frequency and the clock speed slider are more like a "speed knob" and all we need is a reference point, why not pick 0 to 10? Remember when enthusiasts would go so far as to replace crystals on motherboards to overclock? Are we going to have to return to those days in order to truly know what speed our GPU is running? I, for one, certainly hope not.

We can understand the delicate situation that NVIDIA is in when it comes to revealing too much information about how their chips are clocked and designed. However, there is a minimum level of information that we need in order to understand what it means to overclock a 7800 GTX, and we just don't have it yet. NVIDIA tells us that fill rate can be used to verify clock speed, but this doesn't give us enough details to determine what's actually going on.

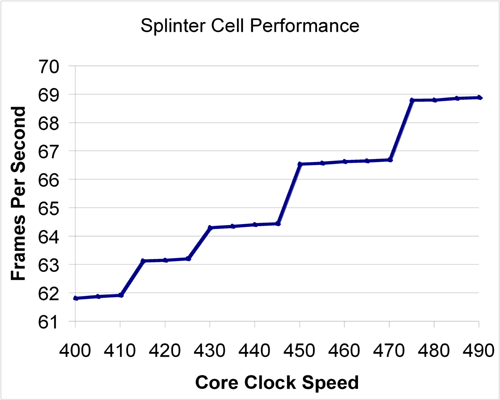

Asking NVIDIA for more information regarding how their chips are clocked has been akin to beating one's head against a wall. And so, we decided to take it upon ourselves to test a wide variety of clock speeds between 400MHz and 490MHz to try to get a better idea of what's going on. Here's a look at Splinter Cell: Chaos Theory performance over multiple core clock speeds.

As we can see, there is really no major effect on performance unless clock speed is adjusted by about 15 to 25 MHz at a time. Smaller increases do yield some differences. The most interesting aspect to note is that it takes more of an increase to have a significant effect when starting from a higher frequency. We can see that at lower frequencies, plateaus span about 10 MHz, while between 450 and 470 MHz there is no useful increase in speed.

This data seems to indicate that each increase in the driver does increase the speed of something insignificant at every step. When moving up to one of the plateaus, it seems that a multiplier for something more important (like the pixel shader hardware) gets bumped up to the next discrete level. It is difficult to say with any certainty what is happening inside the hardware without more information.
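One way to make the plateau observation concrete is to group consecutive clock settings whose benchmark results barely differ. The sketch below is only an illustration: `find_plateaus` and its `tolerance` parameter are hypothetical, and the sample numbers in the usage note are invented for demonstration, not taken from our Splinter Cell: Chaos Theory results.

```python
from typing import List, Tuple


def find_plateaus(
    samples: List[Tuple[int, float]], tolerance: float
) -> List[Tuple[int, int]]:
    """Group consecutive (clock_mhz, fps) samples into plateaus.

    A sample joins the current plateau when its fps is within `tolerance`
    of the plateau's first sample; otherwise it starts a new plateau.
    Returns (first_clock, last_clock) for each plateau, in order.
    """
    if not samples:
        return []

    groups = [[samples[0]]]
    for clock, fps in samples[1:]:
        plateau_fps = groups[-1][0][1]
        if abs(fps - plateau_fps) <= tolerance:
            groups[-1].append((clock, fps))
        else:
            groups.append([(clock, fps)])

    return [(group[0][0], group[-1][0]) for group in groups]
```

For made-up samples such as `[(400, 50.0), (405, 50.1), (410, 50.1), (415, 51.0), (420, 51.1), (425, 52.0)]` with a tolerance of 0.3, this returns `[(400, 410), (415, 420), (425, 425)]`. Wider plateaus at higher clocks would show up as longer ranges in that list.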

We will be following this issue over time as we continue to cover individual 7800 GTX cards. NVIDIA has also indicated that they may "try to improve the granularity of clock speed adjustments", but when or if that happens and what the change will bring are questions that they would not discuss. Until we know more or have a better tool for overclocking, we will continue testing cards as we have in the past. For now, let's get back to the MSI card.

42 Comments

imaheadcase - Monday, July 25, 2005

I know how to show FPS in bf2, but does it have an in-game benchmark? Curious how you benchmark bf2 as I would like to see how some cards compare (or not compare i should say).

Good review

DerekWilson - Monday, July 25, 2005

A lot of people have been asking, so here ya go ... links to our battlefield 2 benchmark demo files:

http://images.anandtech.com/reviews/video/bf2/atfi...

http://images.anandtech.com/reviews/video/bf2/atfi...

There are instructions somewhere on battlefield2.com that describe how to use the demo.cmd file, but you will need to edit this file to set resolutions other than 800x600.

Our data is compiled from the last 1700 frames of our demo run. The many thousand other frames that can result come from the load screen and aren't useful to show performance.
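For anyone who would rather not average by hand, here is a minimal Python sketch of that calculation. It assumes what the follow-up comment further down describes: a frametimes file with semicolon-separated columns, with the value of interest in the 3rd column. The function name `average_last_frames` and its parameters are hypothetical, and the unit of the column is not confirmed here.

```python
from typing import Iterable


def average_last_frames(
    lines: Iterable[str], n: int = 1700, column: int = 2, delimiter: str = ";"
) -> float:
    """Average one column over the last `n` non-empty lines.

    `column` is zero-based, so the default of 2 selects the 3rd column.
    The layout (semicolon-separated, 3rd column) is an assumption taken
    from the comment thread, not from any file format documentation.
    """
    # Split every non-empty line into its columns.
    rows = [line.strip().split(delimiter) for line in lines if line.strip()]

    # Keep only the last n rows, which skips the load-screen frames.
    tail = rows[-n:]
    if not tail:
        raise ValueError("no data rows found")

    values = [float(row[column]) for row in tail]
    return sum(values) / len(values)
```

To use it on a real run, pass an open file object, for example `average_last_frames(open(path))` where `path` points at the frametimes csv that the demo produces. If that column turns out to hold frame times in milliseconds, average FPS would be roughly 1000 divided by the result, but that unit is an assumption.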

Spacecomber - Monday, August 1, 2005

What version of the game were these made under, Derek? Are they for the unpatched game? I couldn't get the demo to play. It would load the map, then it would crash me to the desktop while it was checking player assets or something to that effect. This was on a version of the game with the 1.02 patch.

Space

DerekWilson - Monday, July 25, 2005

By the way, since it's the last 1700 frames, you've got to go into the frametimes csv file and manually calculate an average for the last 1700 lines of the 3rd column (after you've split the columns on ; ) for every test you do. It's kind of a pain, but for people that care, there it is.

bob661 - Monday, July 25, 2005

How do you turn on the FPS in BF2? Thanks.

Spacecomber - Monday, July 25, 2005

Use the tilde ~ key to access the console and enter this command, "renderer.drawFps 1" (no quotes).

You can find these tips and a lot more in the Battlefield Tweak Guide that I mentioned in my first link in the post above.

Space

Spacecomber - Monday, July 25, 2005

You'll need to create (or find one for downloading) a "demo" file which you can then run with the timedemo feature of the demo.cmd script file.

A couple of sources for general information on downloading the demo.cmd script, creating demos, converting them to AVIs, and running timedemos:

From the BF2 Tweak Guide: http://www.tweakguides.com/BF2_6.html

EA UK's BF2 Forum Thread on the BF2 Recorder: http://forum.eagames.co.uk/viewtopic.php?t=934

And, Overclockers.AU article on running BF2 benchmarks (mentioned in the BF2 Tweak Guide): http://www.overclockers.com.au/article.php?id=3841...

HTH,

Space

p3r2y - Monday, July 25, 2005

anand has an article about different gpu's in bf2 stupid

Sea Shadow - Monday, July 25, 2005

Great review, and props for filtering all the random spam.

I can't wait to see the BFG review as it will help me decide which 7800 I am going to get.

xsilver - Sunday, July 24, 2005

congrats to anand for adding the filters

no more dumb "first post" or "in soviet russia" anymore

anyways with regard to the card

why on earth would you not buy the evga card as it comes with BF2 --- it's one of the few games that taxes the card -- even if you have the game already - selling it would net you an extra $30 at least

kudos to evga for including a good game