Intel Goes Full XPU: Falcon Shores to Combine x86 and Xe For Supercomputers

by Ryan Smith on February 17, 2022 2:30 PM EST

One of Intel’s more interesting initiatives over the past few years has been XPU – the idea of using a variety of compute architectures in order to best meet the execution needs of a single workload. In practice, this has led to Intel developing everything from CPUs and GPUs to more specialty hardware like FPGAs and VPUs. All of this hardware, in turn, is overseen at the software level by Intel’s oneAPI software stack, which is designed to abstract away many of the hardware differences to allow easier multi-architecture development.

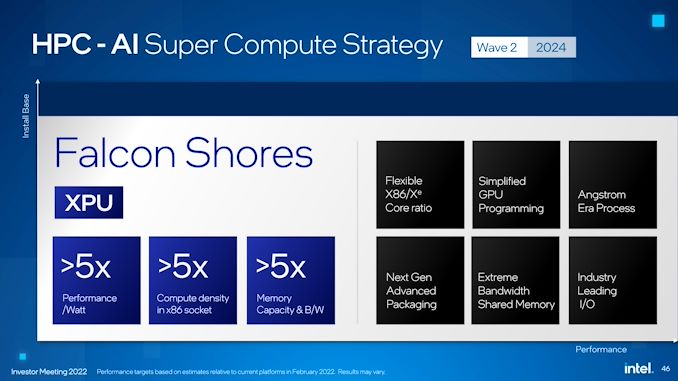

Intel has always indicated that their XPU initiative was just a beginning, and as part of today’s annual investor meeting, Intel is finally disclosing the next step in the evolution of the XPU concept with a new project codenamed Falcon Shores. Aimed at the supercomputing/HPC market, Falcon Shores is a new processor architecture that will combine x86 CPU and Xe GPU hardware into a single Xeon socket chip. And when it is released in 2024, Intel is expecting it to offer better than 5x the performance-per-watt and 5x the memory capacity of their current platforms.

At a very high level, Falcon Shores appears to be an HPC-grade APU/SoC/XPU for servers. While Intel is offering only the barest of details at this time, the company is being upfront in that they are combining x86 CPU and Xe GPU hardware into a single chip, with an eye on leveraging the synergy between the two. And, given the mention of advanced packaging technologies, it’s a safe bet that Intel has something more complex than a monolithic die planned, be it separate CPU/GPU tiles, HBM memory (e.g. Sapphire Rapids), or something else entirely.

Diving a bit deeper, while integrating discrete components often pays benefits over the long run, the nature of the announcement strongly indicates that there’s more to Intel’s plan here than just integrating a CPU and GPU into a single chip (something they already do today in consumer parts). Rather, the presentation from Raja Koduri, Intel’s SVP and GM of the Accelerated Computing Systems and Graphics (AXG) Group, makes it clear that Intel is looking to go after the market for HPC users with absolutely massive datasets – the kind that can’t easily fit into the relatively limited memory capacity of a discrete GPU.

A single chip, in comparison, would be much better prepared to work from large pools of DDR memory without having to (relatively) slowly shuffle data in and out of VRAM, which remains a drawback of discrete GPUs today. In those cases, even with high speed interfaces like NVLink and AMD’s Infinity Fabric, the latency and bandwidth penalties of going between the CPU and GPU remain quite high compared to the speed at which HPC-class processors can actually manipulate data, so making that link as short as physically possible can potentially offer both performance gains and energy savings.
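To put the CPU-to-GPU transfer penalty in rough terms, here is a back-of-the-envelope sketch in Python. It compares how long it takes to push a dataset across a CPU-GPU link with how long the processor needs to stream the same data once from its local memory. The function names and every bandwidth and latency figure are illustrative assumptions, not Intel's numbers or measurements of any shipping product.

```python
def transfer_time_s(size_gb: float, bandwidth_gb_s: float,
                    latency_us: float = 0.0, transfers: int = 1) -> float:
    """Seconds needed to move size_gb over a path with the given bandwidth.

    latency_us is a fixed per-transfer cost, paid once per chunk when the
    data is split into `transfers` separate copies.
    """
    if bandwidth_gb_s <= 0:
        raise ValueError("bandwidth_gb_s must be positive")
    if transfers < 1:
        raise ValueError("transfers must be at least 1")
    return size_gb / bandwidth_gb_s + transfers * latency_us * 1e-6


def link_overhead_ratio(size_gb: float, link_gb_s: float,
                        local_gb_s: float) -> float:
    """How many times longer the link copy takes than one local-memory pass."""
    return transfer_time_s(size_gb, link_gb_s) / transfer_time_s(size_gb, local_gb_s)


if __name__ == "__main__":
    # Placeholder figures chosen only to show the shape of the problem.
    dataset_gb = 100.0   # assumed working set, larger than typical VRAM
    link_gb_s = 50.0     # assumed CPU<->GPU link bandwidth
    local_gb_s = 1000.0  # assumed on-package memory bandwidth

    copy_s = transfer_time_s(dataset_gb, link_gb_s)
    local_s = transfer_time_s(dataset_gb, local_gb_s)
    ratio = link_overhead_ratio(dataset_gb, link_gb_s, local_gb_s)
    print(f"copy over link: {copy_s:.2f} s, one local pass: {local_s:.2f} s, "
          f"ratio: {ratio:.0f}x")
```

With these placeholder values the copy dominates the runtime, which is the scenario a shared pool of memory is meant to avoid. The model ignores overlap between copies and compute, so treat it as an upper bound on the penalty rather than a prediction.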

Meanwhile, Intel is also touting Falcon Shores as offering a flexible ratio between x86 and Xe cores. The devil is in the details here, but at a high level it sounds like the company is looking at offering multiple SKUs with different numbers of cores – likely enabled by varying the number of x86 and Xe tiles.

From a hardware perspective then, Intel seems to be planning to throw most of their next-generation technologies at Falcon Shores, which is fitting for its supercomputing target market. The chip is slated to be built on an “angstrom era process”, which given the 2024 date is likely Intel’s 20A process. And along with future x86/Xe cores, it will also incorporate what Intel is calling “extreme bandwidth shared memory”.

With all of that tech underpinning Falcon Shores, Intel is currently projecting a 5x increase over their current-generation products in several metrics. This includes a 5x increase in performance-per-watt, a 5x increase in compute density for a single (Xeon) socket, a 5x increase in memory capacity, and a 5x increase in memory bandwidth. In short, the company has high expectations for the performance of Falcon Shores, which is fitting given the highly competitive HPC market it’s slated for.

And perhaps most interestingly of all, to get that performance Intel isn’t just tackling things from the raw hardware throughput side of matters. The Falcon Shores announcement also mentions that developers will have access to a "vastly simplified GPU programming model" for the chip, indicating that Intel isn’t just slapping some Xe cores into the chip and calling it a day. Just what this entails remains to be seen, but simplifying GPU programming remains a major goal in the GPU computing industry, especially for heterogeneous processors that combine CPU and GPU processing. Making it easier to program these high throughput chips not only makes them more accessible to developers, but reducing/eliminating synchronization and data preparation requirements can also go a long way towards improving performance.

Like everything else being announced as part of today’s investor meeting, this announcement is more of a teaser for Intel. So expect to hear a lot more about Falcon Shores over the next couple of years as Intel continues their work to bring it to market.

Source: Intel

28 Comments

mode_13h - Monday, February 28, 2022 - link

> The perf/W and core density could be achieved by using E-cores.

They already said it's integrating Xe-like GPU cores. That's where they're getting the big performance boost.

michael2k - Friday, February 18, 2022 - link

So is it me or does this sound like a unified memory pool that both cpu and GPU have equal access to?

mode_13h - Monday, February 28, 2022 - link

Yes, if the GPU and CPU are being joined in-package, that would be the obvious thing to do.

However, CXL means you can still have coherent memory pools, even with them residing in different devices.

erinadreno - Saturday, February 19, 2022 - link

Is it just me or Intel just got obsessed with water in the past 5 years?

PeachNCream - Monday, February 21, 2022 - link

Maybe longer. The * Bridge series chips crossed over water and that was the first thing that came to mind off the top of my head. I'm not going to do the research, but I bet there are other bodies of water in Intel's history as well. I'm sure someone with more knowledge of CPU history could chime in, but I do get where you're going with this.

JKflipflop98 - Wednesday, March 9, 2022 - link

The code names are based on where the part is designed. If it's designed in Oregon, it gets a Pacific NW name. If it's drawn up in Israel it gets a much drier handle.

mode_13h - Monday, February 28, 2022 - link

Yes... bridges, wells, streams, creeks, lakes, rapids, coves, sounds. I think the bigger point is that these are geographical features that are plentiful, making them ripe for use as codenames.

Some non-water naming themes they've used involve *mont, *pass, *forest, knights*, and I'm sure there are others that don't come to mind.

I like how AMD used the theme of islands, where they had a family named after a group of islands and the individual members were named after individual islands. I guess there aren't a ton of island chains, though. Still, seems like they could've continued that a bit longer.

mode_13h - Sunday, February 27, 2022 - link

> 5x increase in memory capacity, and a 5x increase in memory bandwidth

I wish they were clearer about what they're using as a basis for comparison. Cynically, one could say 5x the capacity of current GPUs and 5x the bandwidth of current Xeons.