NVIDIA's GeForce 7800 GTX Hits The Ground Running

by Derek Wilson on June 22, 2005 9:00 AM EST, posted in GPUs

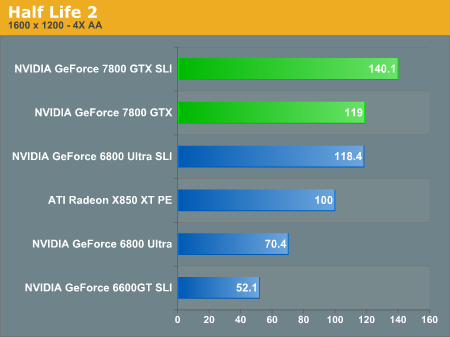

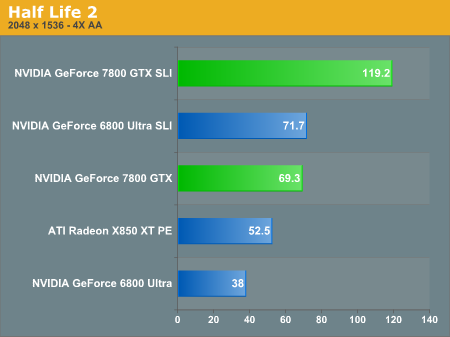

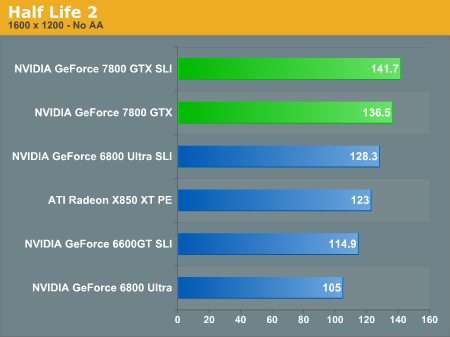

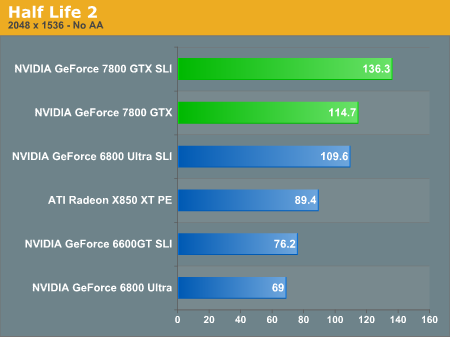

Half-Life 2 Performance

Half-Life 2 is arguably one of the best-looking games currently available. We mentioned earlier that it stresses pixel processing power more than memory bandwidth on the graphics card, and we see that here. While enabling AA/AF does cause a performance loss at these high resolutions, it isn't truly severe until we reach 2048x1536. Assuming a more common resolution of 1600x1200 (not everyone has a monitor that supports higher resolutions), the single 7800GTX is actually faster than 6800U SLI. In fact, the only time we see the 6800U SLI setup win out is when we run 4xAA/8xAF at 2048x1536, and then it's only by 3%. Also worth noting is that while SLI helps out the 6800 series quite a bit (provided you have a fast CPU and are running a high resolution), the 7800GTX is clearly running into CPU limitations: our FX-55 can't push any of the cards past the 142 FPS mark regardless of resolution.

ATI has done well in HL2 since its release, and that trend continues. The SLI configurations (other than the 6600GT) all surpass the performance of the X850XTPE, but the XTPE does come out ahead of the 6800U in a single-card match; it's as much as 42% faster when we look at the 1600x1200 AA/AF scores. The 7800GTX, of course, manages to beat it quite easily. The 540 MHz core clock of the XTPE is quite impressive in the pixel-shader-heavy HL2, but with the additional pipelines and improved MADD functionality, the 7800GTX chews up HL2 rocks and spits out Combine gravel.
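Two pieces of arithmetic sit behind the paragraphs above: relative results such as "only by 3%" or "as much as 42% faster" are ratios of average frame rates, and the 142 FPS ceiling is the classic sign of a CPU bottleneck. The sketch below illustrates both under a deliberately simplified model, assumed here for illustration, in which the slower of the CPU and GPU sets the frame rate. The function names and the per-resolution GPU throughput figures are hypothetical and are not taken from our test data; only the 142 FPS cap comes from the results above.

```python
def percent_faster(fps_a: float, fps_b: float) -> float:
    """Return how much faster result A is than result B, in percent."""
    return (fps_a / fps_b - 1.0) * 100.0


def observed_fps(cpu_fps_limit: float, gpu_fps_limit: float) -> float:
    """Simplified bottleneck model (an assumption, not a measured law):
    the delivered frame rate cannot exceed what either the CPU or the GPU
    can sustain on its own, so the smaller limit wins."""
    return min(cpu_fps_limit, gpu_fps_limit)


# CPU ceiling matching the ~142 FPS cap we saw with the FX-55.
CPU_LIMIT = 142.0

# Hypothetical GPU-only throughput for a fast card at three resolutions.
# While the GPU could outrun the CPU, raising the resolution changes nothing.
hypothetical_gpu_limits = [
    ("1280x1024", 250.0),
    ("1600x1200", 180.0),
    ("2048x1536", 120.0),
]

for resolution, gpu_limit in hypothetical_gpu_limits:
    print(resolution, observed_fps(CPU_LIMIT, gpu_limit))
```

Real games overlap CPU and GPU work across frames, so `min()` is only a first approximation, but it explains why a flat line across resolutions points at the processor rather than the graphics card.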

One thing that isn't immediately clear is why the 6800U cards have difficulty supporting the 2048x1536 resolution in some games. Performance drops by almost half when switching from 1600x1200 to 2048x1536, so either there's a driver problem or the 6800U simply doesn't cope well with the demands of HL2 at such resolutions. There are about 64% more pixels at 2048x1536 than at 1600x1200, so if frame rates scaled purely with pixel count they would fall to roughly 61% of their 1600x1200 level, a drop of about 39%; it's rather shocking to see a decrease larger than that. We would venture to guess that it's a matter of priorities: the number of people who actually run games at 2048x1536 is very small compared to the total number of gamers, and with most cards only providing a 60Hz refresh rate at that resolution, many don't worry too much about gaming performance there.
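A quick way to check the pixel-count argument is to compute it directly. The sketch below assumes, as an idealization, that frame rate is inversely proportional to the number of pixels rendered (a pure pixel-processing limit with no CPU, memory, or driver effects). Under that assumption, any observed drop much larger than the computed one suggests a cause beyond the extra pixels.

```python
def pixels(width: int, height: int) -> int:
    """Number of pixels rendered per frame at a given resolution."""
    return width * height


low = pixels(1600, 1200)   # 1,920,000 pixels
high = pixels(2048, 1536)  # 3,145,728 pixels

# How many more pixels the higher resolution pushes per frame.
ratio = high / low

# Assumption: fps is proportional to 1 / pixels, so the 2048x1536 frame
# rate is this fraction of the 1600x1200 frame rate.
expected_fraction = low / high
expected_drop_pct = (1.0 - expected_fraction) * 100.0

print(f"{(ratio - 1) * 100:.1f}% more pixels")                  # 63.8% more pixels
print(f"expected 2048x1536 fps: {expected_fraction:.1%} of 1600x1200")  # 61.0%
print(f"expected drop: {expected_drop_pct:.1f}%")                # 39.0%
```

A measured drop of nearly 50% therefore exceeds the roughly 39% that pixel count alone would predict.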

127 Comments


BenSkywalker - Wednesday, June 22, 2005

Derek, I wanted to offer my utmost thanks for the inclusion of 2048x1536 numbers. As one of the fairly sizeable group of owners of a 2070/2141, I appreciate these numbers enormously. As everyone can see, 1600x1200x4x16 really doesn't give you an idea of what high-resolution performance will be like. As far as the benches getting a bit messed up, it happens. You moved quickly to rectify the situation and all is well now. Thanks again for taking the time to show us how these parts perform at real high-end settings.

blckgrffn - Wednesday, June 22, 2005

You're forgiven, by me anyway :) It is also the great editorial staff that makes Anandtech my homepage on every browser on all of my boxes!

Nat

yacoub - Wednesday, June 22, 2005

#72 - Totally agree. Some Rome: Total War benchmarks are much needed, but primarily to see how the game's battle performance with large numbers of troops varies between AMD and Intel, more so than between NVidia and ATi, considering the game is, to my understanding, currently highly CPU-limited.

DerekWilson - Wednesday, June 22, 2005

Hi everyone,

Thank you for your comments and feedback.

I would like to personally apologize for the issues that we had with our benchmarks today. It wasn't just one link in the chain that caused the problems; many factors led to the results we had here today.

For those who would like an explanation of what caused certain benchmark numbers not to reflect reality, we offer the following. Some of our SLI testing was done with multi-GPU rendering forced on for tests where there was no profile. In these cases, the default multi-GPU mode caused a performance hit rather than the increase we are used to seeing. The issue was especially bad in Guild Wars, and the SLI numbers have been removed from the offending graphs. Also, on one or two titles our ATI display settings were improperly configured: our Windows monitor properties, ATI "Display" tab properties, and refresh rate override settings were mismatched. As a result, rather than drive the display at the pixel clock we expected, ATI defaulted to a "safe" mode where the game is run at the requested resolution, but only part of the display is output to the screen. This resulted in abnormally high numbers in some cases at resolutions above 1600x1200.

For those of you who don't care about why the numbers ran the way they did, please understand we are NOT trying to hide behind our explanation as an excuse.

We agree completely that the more important issue is not why bad numbers popped up, but that bad numbers made it into a live article. For this I can only offer my sincerest of apologies. We consider it our utmost responsibility to produce quality work on which people may rely with confidence.

I am proud that our readership demands a quality above and beyond the norm, and I hope that never changes. Everything in our power will be done to ensure that events like this do not happen again.

Again, I do apologize for the erroneous benchmark results that went live this morning. And thank you for requiring that we maintain the utmost integrity.

Thanks,

Derek Wilson

Senior CPU & Graphics Editor

AnandTech.com

Dmitheon - Wednesday, June 22, 2005

I have to say, while I am extremely pleased with nVidia doing a real launch, the product leaves me scratching my head. They priced themselves into an extremely small market, and effectively made their 6800 series the second-tier performance cards without really dropping the price on them. I'm not going to get one, but I do wonder how this will affect the company's bottom line.

OrSin - Wednesday, June 22, 2005

I'm not trying to be a butthole, but can we get a benchmark that's an RTS game? I see 10+ game benchmarks and most are FPS; the few that are not might as well be. Those RPGs seem to use a similar type of engine.

stmok - Wednesday, June 22, 2005

To CtK's question: Nope, SLI doesn't work with dual displays. (Last I checked, Nvidia got 2D working, but NO 3D.) Rumours say it's a driver issue, and Nvidia is working on it. I don't know any more than that.

I think I'd rather wait until Nvidia is actually demonstrating SLI with two or more displays before I lay down any money.

yacoub - Wednesday, June 22, 2005

#60 - it's already to the point where it's turning people off to PC gaming, thus damaging the company's own market of buyers. It's just going to move more people to consoles, because even though PC games are often better games and much more customizable and editable, that only means so much, and the trade-off versus price to play starts to become too imbalanced to ignore.

jojo4u - Wednesday, June 22, 2005

What was the AF setting? I understand that it was set to 8x when AA was set to 4x?

Rand - Wednesday, June 22, 2005

I have to say I'm rather disappointed in the quality of the article: a number of apparently nonsensical benchmark results, with little to no analysis of most of them.

There's a complete lack of any low-level theoretical performance results, and no attempt to measure improvements in efficiency or what may have caused them.

Temporal AA is only tested on one game, with image quality examined in only one scene. Given how dramatically different games and genres utilize alpha textures, you're providing us with an awfully limited perspective of its impact.