Intel Alder Lake DDR5 Memory Scaling Analysis With G.Skill Trident Z5

by Gavin Bonshor on December 23, 2021 9:00 AM EST

One of the most agonizing elements of Intel's launch of its latest 12th generation Alder Lake desktop processors is its support of both DDR5 and DDR4 memory. Motherboards support one or the other, while we wait for DDR5 to take hold in the market. While DDR4 memory isn't new to us, DDR5 memory is, and as a result, we've been reporting on the release of DDR5 since last year. Now that DDR5 is here, albeit difficult to obtain, we know from our Core i9-12900K review that DDR5 performs better than DDR4 at baseline settings. To investigate how DDR5 scales on Alder Lake, we have used a premium kit of DDR5 memory from G.Skill, the Trident Z5 DDR5-6000. We test the G.Skill Trident Z5 kit from DDR5-4800 to DDR5-6400 at CL36, as well as at DDR5-4800 with the tightest timings we could achieve, to see whether latency also plays a role in performance.

DDR5 Memory: Scaling, Pricing, Availability

In our launch day review and analysis of Intel's latest Core i9-12900K, we tested many variables that could impact performance on the new platform. These included the performance difference between Windows 11 and Windows 10, performance with both DDR5 and DDR4 at official speeds, and the impact of the new hybrid Performance and Efficiency cores.

Given all of the variables in that review, the purpose of this article is to evaluate the impact that DDR5 memory frequency has on performance. In our past memory scaling articles, we've typically focused only on the effects of frequency, but this time we also wanted to see how much tighter latencies affect overall performance.

ASUS ROG Maximus Z690 Hero motherboard with G.Skill Trident Z5 DDR5-6000 memory

Touching on the pricing and availability of DDR5 memory at the time of writing, the TL;DR is that it's currently hard to find any in stock, and when it is in stock, it costs a lot. A massive global chip shortage, which many attribute to the coronavirus pandemic, has pushed prices above MSRP on many components. Interestingly enough, it's not the DDR5 memory chips themselves causing the shortage, but the power management controllers (PMICs) that DDR5 places on each module, which are in short supply. As a result, the increased cost can be likened to a sort of early adopter's fee, where users wanting the latest and greatest will have to pay through the nose to own it.

Another factor to consider with DDR5 memory is price scaling. A 32 GB (2 x 16 GB) kit of G.Skill Ripjaws DDR5-5200 can be found at retailer MemoryC for $390. In contrast, a more premium and faster kit such as the G.Skill Trident Z5 DDR5-6000 has a price tag of $508, an increase of around 30%. Bear in mind that price increases don't scale linearly with performance increases, and that goes for pretty much every component, from memory to graphics cards and even processors. The more premium a product, the more it costs.
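
To put the pricing point in numbers, the short sketch below compares the price premium of the Trident Z5 kit over the Ripjaws kit with the increase in rated data rate. It only uses the prices and speeds quoted above; the rated data rate is a rough proxy, not a measured performance result, so this illustrates the non-linearity of pricing rather than quantifying it.

```python
def percent_increase(base: float, new: float) -> float:
    """Return the percentage increase going from `base` to `new`."""
    return (new / base - 1.0) * 100.0


# Prices and rated data rates as quoted in the text (MemoryC, at time of writing).
ripjaws_price, ripjaws_mts = 390.0, 5200
trident_price, trident_mts = 508.0, 6000

price_delta = percent_increase(ripjaws_price, trident_price)
rate_delta = percent_increase(ripjaws_mts, trident_mts)

print(f"Price increase:     {price_delta:.1f}%")  # 30.3%
print(f"Data rate increase: {rate_delta:.1f}%")   # 15.4%
```

Roughly 30% more money buys about 15% more rated data rate; how much of that translates into real-world performance is what the benchmarks in this article measure.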

Enabling X.M.P 3.0: It's Technically Overclocking

In March 2021, we reported that Intel effectively discontinued its 'Performance Tuning Protection Plan.' This was essentially an extended warranty for users planning to overclock Intel's processors, which could be purchased at an additional cost. One of its main benefits was that if users somehow damaged the silicon with higher-than-typical voltages (CPU VCore and memory-related voltages), they could RMA the processor back to Intel on a like-for-like replacement basis. Intel stated that too few people took advantage of the plan to justify continuing it.

One thing to note when running Intel's Xtreme Memory Profiles (X.M.P 3.0) on DDR5 memory is that Intel classes this as overclocking. That means when RMA'ing a faulty processor, running the CPU at stock settings but enabling an X.M.P 3.0 memory profile at DDR5-6000 CL36 is something Intel considers an overclock, which could void the warranty of the CPU. Processor manufacturers base their officially recommended memory settings on JEDEC specifications; for Intel, that means DDR4-3200 for its 11th generation (Rocket Lake) processors and DDR5-4800/DDR4-3200 for its 12th generation (Alder Lake) processors.

When it comes to overclocking DDR5 memory on the ASUS ROG Maximus Z690 Hero, we did all of our testing with Intel's Memory Gear set to the 1:2 ratio. We also tested the 1:1 and 1:4 ratios, but without much success. Enabling X.M.P on the G.Skill kit automatically sets the 1:2 ratio, with the memory controller running at half the speed of the memory clock.
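
As a rough illustration of what the gear ratios mean, the sketch below estimates the memory controller clock for a given data rate. It assumes the simple model described above: DDR performs two transfers per clock, so the memory clock in MHz is half the data rate in MT/s, and a 1:N gear runs the controller at 1/N of that memory clock. The function name is hypothetical, and other clock domains on the platform are ignored.

```python
def imc_clock_mhz(data_rate_mts: int, gear: int) -> float:
    """Estimate the memory controller clock for a DDR data rate and gear ratio.

    DDR moves two transfers per clock, so the memory clock (MHz) is half the
    data rate (MT/s); in a 1:N gear the controller runs at 1/N of that clock.
    """
    if gear not in (1, 2, 4):
        raise ValueError("gear must be 1, 2, or 4 (the 1:1, 1:2, and 1:4 ratios)")
    memory_clock_mhz = data_rate_mts / 2
    return memory_clock_mhz / gear


for gear in (1, 2, 4):
    print(f"DDR5-6000, 1:{gear}: controller at {imc_clock_mhz(6000, gear):.0f} MHz")
```

Under this model, the X.M.P default of 1:2 at DDR5-6000 puts the controller at 1500 MHz, while 1:1 would ask it to run at the full 3000 MHz memory clock.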

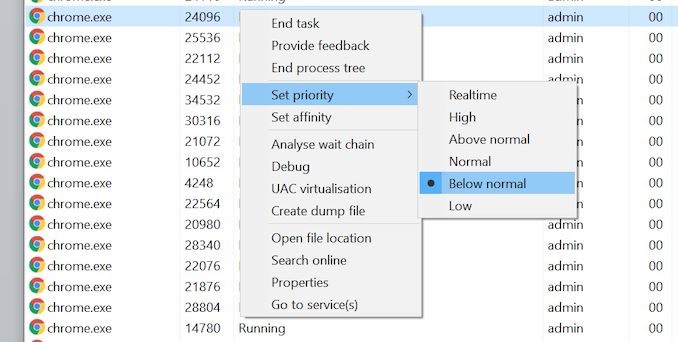

Issues Within Windows 10: Priority and Core Scheduling

As we highlighted in our review of the Intel Core i9-12900K processor, certain software environments can produce unexpected performance behavior. When a thread starts, the operating system (Windows 10) assigns it to a specific core. As the P-Cores (performance) and E-Cores (efficiency) on the hybrid Alder Lake design offer different levels of performance and efficiency, it is up to the scheduler to make sure the right task is on the right core. Intel's intended use case is that the in-focus software gets priority, and everything else is treated as a background task. However, on Windows 10, there is an additional caveat: any software set to below normal (or lower) priority will also be considered background and placed on the E-cores, even if it is in focus. Some high-performance software sets itself to below normal priority in order to keep the system running it responsive, so there's a clash of ideology between the two.
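
The clash can be expressed as a simple decision rule. The sketch below is a deliberately simplified model of the Windows 10 behavior described above, not Windows' actual scheduler or Intel's Thread Director logic; the function names are hypothetical, and the integer values only order the Windows priority classes from lowest to highest rather than matching the real Win32 constants.

```python
from enum import IntEnum


class Priority(IntEnum):
    """Windows process priority classes, ordered lowest to highest (ordinal values only)."""
    IDLE = 0
    BELOW_NORMAL = 1
    NORMAL = 2
    ABOVE_NORMAL = 3
    HIGH = 4
    REALTIME = 5


def treated_as_background(in_focus: bool, priority: Priority) -> bool:
    """Simplified Windows 10 rule as described in the text (not the real scheduler).

    Out-of-focus software is background; on Windows 10, software at
    below-normal priority or lower is also background, even when in focus.
    """
    return (not in_focus) or priority <= Priority.BELOW_NORMAL


def preferred_cores(in_focus: bool, priority: Priority) -> str:
    """Map the background/foreground decision to a core type."""
    return "E-cores" if treated_as_background(in_focus, priority) else "P-cores"


# An in-focus renderer that lowers its own priority to stay responsive
# ends up steered to the E-cores under this rule.
print(preferred_cores(in_focus=True, priority=Priority.BELOW_NORMAL))  # E-cores
print(preferred_cores(in_focus=True, priority=Priority.NORMAL))        # P-cores
```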

Various solutions to this exist. Intel told us that users could either run dual monitors or change the Windows Power Plan to High Performance. To avoid the issue, all of the testing in this article was done with the Windows Power Plan set to High Performance (as I do for motherboard reviews).

In addition, I used a third-party scheduler, Process Lasso, to check for performance variations. I found a variance of only around 0.5% between using the High Performance Power Plan and setting affinities and priorities to high with Process Lasso.

It should also be noted that users running Windows 11 shouldn't experience any of these issues. With the fix in place, we saw no difference between Windows 10 and Windows 11 in our original Core i9-12900K review, so to keep things consistent with our previous testing, we're sticking with Windows 10 with our fix applied for now.

Test Bed, Setup, and Hardware

As this article focuses on how well DDR5 memory scales, we have used a premium Z690 motherboard, the ASUS ROG Maximus Z690 Hero, and a premium ASUS ROG Ryujin II 360 mm AIO CPU cooler. In terms of settings, we've left the Intel Core i9-12900K at the firmware's default settings, with the only changes made to the memory settings.

| DDR5 Memory Scaling Test Setup (Alder Lake) | |
|---|---|
| Processor | Intel Core i9-12900K, 125 W, $589; 8+8 Cores, 24 Threads; 3.2 GHz (5.2 GHz P-Core Turbo) |
| Motherboard | ASUS ROG Maximus Z690 Hero (BIOS 0803) |
| Cooling | ASUS ROG Ryujin II 360 (360 mm AIO) |
| Power Supply | Corsair HX850, 80 Plus Platinum, 850 W |
| Memory | G.Skill Trident Z5 2 x 16 GB DDR5-6000 CL 36-36-36-76 (XMP) |
| Video Card | MSI GTX 1080 (1178/1279 Boost) |
| Hard Drive | Crucial MX300 1TB |
| Case | Open Benchtable BC1.1 (Silver) |
| Operating System | Windows 10 Pro 64-bit (21H2) |

For the operating system, we've used the latest widely available release of Windows 10 64-bit (21H2) with all of the current updates at the time of testing. (For those wondering about our selection of GPU, the truth is that all our editors are in different locations around the world, and we do not have a single pool of resources. This is Gavin's regular testing GPU until we can get a replacement, which in the current climate is unlikely. - Ian)

| DDR5 Memory Frequencies/Latencies Tested | | |
|---|---|---|
| Memory | Frequency/Timings | Memory IC |
| G.Skill Trident Z5 (2 x 16 GB) | DDR5-4800 CL 32-32-32-72 | Samsung |
| | DDR5-4800 CL 36-36-36-76 | |
| | DDR5-5000 CL 36-36-36-76 | |
| | DDR5-5200 CL 36-36-36-76 | |
| | DDR5-5400 CL 36-36-36-76 | |
| | DDR5-5600 CL 36-36-36-76 | |
| | DDR5-5800 CL 36-36-36-76 | |
| | DDR5-6000 CL 36-36-36-76 | |
| | DDR5-6200 CL 36-36-36-76 | |
| | DDR5-6400 CL 36-36-36-76 | |

Above are all of the frequencies and latencies we've tested in this article. For scaling, we selected the G.Skill Trident Z5 memory kit as it had the best overclocking ability of all the DDR5 kits we received at launch. Out of the box it had the highest rated frequency, and we pushed it even further. The G.Skill Trident Z5 memory was tested from DDR5-4800 CL36 up to and including DDR5-6400 CL36, plus a special case of DDR5-4800 CL32 to test a lower CAS latency. Details on our overclocking exploits are later in the review.
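
A common way to compare these configurations on a single scale is first-word (CAS) latency in nanoseconds: the CL cycle count multiplied by the clock period, where the memory clock is half the data rate. The sketch below assumes that standard formula and rebuilds the tested matrix from the table above; it covers CAS latency only, not tRCD, tRP, or the full load-to-use latency the CPU actually sees, and the function name is hypothetical.

```python
def cas_latency_ns(data_rate_mts: int, cas_latency: int) -> float:
    """CAS latency in nanoseconds: CL cycles times the memory clock period.

    The memory clock is data_rate / 2 MHz, so one cycle lasts 2000 / data_rate ns.
    """
    return cas_latency * 2000 / data_rate_mts


# Configurations from the table: DDR5-4800 CL32, plus DDR5-4800 to DDR5-6400
# in 200 MT/s steps at CL36.
configs = [(4800, 32)] + [(rate, 36) for rate in range(4800, 6401, 200)]

for rate, cl in configs:
    print(f"DDR5-{rate} CL{cl}: {cas_latency_ns(rate, cl):.2f} ns")
```

By this measure, DDR5-4800 CL32 (13.33 ns) is quicker than DDR5-4800 CL36 (15.00 ns), but DDR5-6000 CL36 (12.00 ns) is quicker still, which is why the low-latency DDR5-4800 run is a useful separate data point.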

Read on for more information about G.Skill's Trident Z5 DDR5-6000, as well as our analysis of the scalability of DDR5 memory on Intel's Alder Lake. In this article, we cover the following:

1. Overview and Test Setup (this page)
2. A Closer Look at the G.Skill Trident Z5 DDR5-6000 CL36
3. CPU Performance
4. Gaming Performance: Low Resolution
5. Gaming Performance: High Resolution
6. Conclusion

82 Comments

TheinsanegamerN - Tuesday, December 28, 2021

What is the holdup on video card reviews? I know there was that Cali fire last year, but that was over a year ago now.

gagegfg - Thursday, December 30, 2021

The chip shortage is global, not just for GPUs. A hardware tester like you should not have problems acquiring a GPU for these tests; otherwise, you have a serious public relations problem.

And returning to the point of my criticism: for those who understand why CPU scaling is tested in games, it is not only about FPS, but also about evaluating longevity when upgrading to future GPUs, or which CPU will become a bottleneck sooner than another. Today all CPUs can run games at 4K and that's not news to anyone.

In short, if you cannot get a decent GPU to test a top-of-the-range CPU and its limitations with different hardware combinations, try to eliminate the GPU bottleneck with 720p / 1080p resolutions or by lowering scene detail. That is the correct way to test the other points and not the GPU itself.

This criticism is constructive, and is not intended to generate repudiation.

TheinsanegamerN - Monday, January 3, 2022

Erm, you do know that other reviewers have been able to get ahold of GPUs for testing, right? If you don't have the budget for the stuff you need to do your job, can't get the stuff you need to do your job, and find excuses to not do the reviews your viewer base wants to see, that says to a lot of people that AnandTech is being mismanaged into the ground.

Come to think of it, these same excuses were used when the 3080 was never reviewed, alongside "well we have one guy who does it and he lives near the fires in Cali". That was a year and a half ago.

Perhaps AnandTech presenting excuses instead of articles is why you can't get companies to send you hardware? Just a thought.

Azix - Monday, January 10, 2022

I can understand manufacturers being less likely to send out a GPU if they aren't guaranteed publicity. The key is that he said just for testing, not necessarily for a review. Most other reviewers are given them for marketing purposes.

zheega - Thursday, December 23, 2021

I didn't even notice that at first; I just assumed that they would get rid of the GPU bottleneck. How amazingly weird.

thestryker - Thursday, December 23, 2021

The vast majority of people play at the highest playable resolution for the hardware they have, which means they're GPU bound no matter what their GPU is. The frame rates in the review are perfectly playable and indicate the amount of variation one could expect in a mostly GPU-bound situation. None of the titles are esports/competitive games where you'd need to max out frame rate, so even if they were using a 3090, it'd be a pointless reflection for that.

So while the metrics aren't perfect from a scaling-under-ideal-circumstances perspective, they're perfectly fine for practicality.

haukionkannel - Friday, December 24, 2021

True. I don't have the latest PC hardware and still play at 1440p at the highest settings. So I can confirm that is the way to test to see what we see in real-world situations...

Ooga Booga - Tuesday, December 28, 2021

Because they haven't done meaningful GPU stuff in years, it all goes to Tom's Hardware. Eventually the card they use will be 10 years old if this site is even still around.

TimeGoddess - Thursday, December 23, 2021

If you're gonna use a GTX 1080, at least try to do the gaming benchmarks at 720p so that there is actually a CPU bottleneck.

Ian Cutress - Thursday, December 23, 2021

You know how many people complain when I run our CPU reviews at 720p resolutions? 'You're only doing that to show a difference'.