The Intel 12th Gen Core i9-12900K Review: Hybrid Performance Brings Hybrid Complexity

by Dr. Ian Cutress & Andrei Frumusanu on November 4, 2021 9:00 AM EST

Fundamental Windows 10 Issues: Priority and Focus

Normally, software running on a computer can assume that all cores are equal, such that any thread can go anywhere and expect the same performance. As we’ve already discussed, the new Alder Lake design of performance cores and efficiency cores means that not everything is equal, and the system has to know where to put what workload for maximum effect.

To this end, Intel created Thread Director, which acts as the ultimate information depot for what is happening on the CPU. It knows what threads are where, what each of the cores can do, how compute heavy or memory heavy each thread is, and where the thermal hot spots and voltages come into play. With that information, it sends data to the operating system about how the threads are operating, with suggestions of actions to perform, or which threads can be promoted/demoted in the event of something new coming in. The operating system scheduler is then the ringmaster: it combines the Thread Director information with what it knows about the user (what software is in the foreground, what threads are tagged as low priority) and actually orchestrates the whole process.

Intel has said that Windows 11 does all of this. The only thing Windows 10 doesn’t have is insight into the efficiency of the cores on the CPU. It assumes the efficiency is equal, but the performance differs – so instead of ‘performance vs efficiency’ cores, Windows 10 sees it more as ‘high performance vs low performance’. Intel says the net result of this will be seen only in run-to-run variation: there’s more of a chance of a thread spending some time on the low performance cores before being moved to high performance, and so anyone benchmarking multiple runs will see more variation on Windows 10 than Windows 11. But ultimately, the peak performance should be identical.

However, there are a couple of flaws.

At Intel’s Innovation event last week, we learned that the operating system will de-emphasise any workload that is not in user focus. For an office workload, or a mobile workload, this makes sense – if you’re in Excel, for example, you want Excel to be on the performance cores, and those 60 Chrome tabs you have open are all considered background tasks for the efficiency cores. The same goes for email, Netflix, or video games – what you are using there and then matters most, and everything else doesn’t really need the CPU.

However, this breaks down when it comes to more professional workflows. Intel gave an example of a content creator who starts exporting a video and, while that is processing, goes to edit some images. This puts the video export on the efficiency cores, while the image editor gets the performance cores. In my experience, the limiting factor in that scenario is the video export, not the image editor – what should take a unit of time on the P-cores now suddenly takes 2-3x as long on the E-cores while I’m doing something else. This extends to anyone who multi-tasks during a heavy workload, such as programmers waiting for the latest compile. Under this philosophy, the user would have to keep the important window in focus at all times. Beyond this, any software that spawns heavy compute threads in the background, without the potential for focus, would also be placed on the E-cores.

Personally, I think this is a crazy way to do things, especially on a desktop. Intel tells me there are three ways to stop this behaviour:

- Running dual monitors stops it

- Changing Windows Power Plan from Balanced to High Performance stops it

- There’s an option in the BIOS that, when enabled, means the Scroll Lock can be used to disable/park the E-cores, meaning nothing will be scheduled on them when the Scroll Lock is active.

(For those that are interested in Alder Lake confusing some DRM packages like Denuvo, #3 can also be used in that instance to play older games.)
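To make the focus rule and its three workarounds concrete, here is a toy model in Python. It only encodes the behaviour as described above and is not how the Windows scheduler is actually implemented; the names `SystemState` and `demoted_to_e_cores` are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class SystemState:
    """Hypothetical flags for the three workarounds listed above."""
    dual_monitors: bool = False
    high_performance_plan: bool = False
    e_cores_parked: bool = False  # BIOS option enabled and Scroll Lock active


def demoted_to_e_cores(in_focus: bool, state: SystemState) -> bool:
    """Toy rule: a workload is pushed to the E-cores only when it is out of
    focus and none of the three workarounds is in effect."""
    if state.e_cores_parked:
        # Nothing is scheduled on parked E-cores at all.
        return False
    if state.dual_monitors or state.high_performance_plan:
        # Intel says either of these stops the focus-based behaviour.
        return False
    return not in_focus


# A video export left in the background on a default single-monitor setup:
print(demoted_to_e_cores(in_focus=False, state=SystemState()))
# The same export with the High Performance power plan selected:
print(demoted_to_e_cores(in_focus=False, state=SystemState(high_performance_plan=True)))
```

In this sketch the background export is demoted in the first case and left alone in the second, which is the whole of the behaviour Intel described to us.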

Users who only have one window open at a time, or who aren’t relying on any serious all-core time-critical workload, won’t really be affected. For anyone else, it’s a bit of a problem. And the problems don’t stop there, at least for Windows 10.

Knowing my luck by the time this review goes out it might be fixed, but:

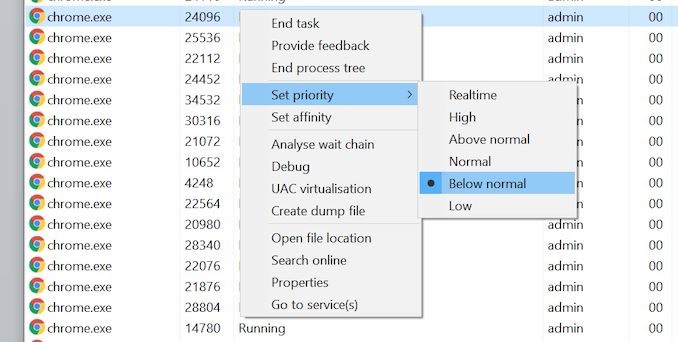

Windows 10 also uses a thread’s in-OS priority as a guide for core scheduling. Anyone who has played around with Task Manager will know there is an option to give a program a priority: Realtime, High, Above Normal, Normal, Below Normal, or Idle. The default is Normal. Behind the scenes this is actually a number from 0 to 31, where Normal is 8.

Some software will naturally give itself a lower priority, usually a 7 (below normal), as an indication to the operating system of either ‘I’m not important’ or ‘I’m a heavy workload and I want the user to still have a responsive system’. This second reason is an issue on Windows 10, as with Alder Lake it will schedule the workload on the E-cores. So even if it is a heavy workload, moving to the E-cores will slow it down, compared to simply being across all cores but at a lower priority. This is regardless of whether the program is in focus or not.
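For readers who want to see where those numbers come from, the sketch below reproduces the mapping in Microsoft's documented scheduling priority table: each priority class has a base value, and each thread can sit a step or two above or below it. The function name `base_priority` and the string keys are invented for this illustration. One reading of the 7 quoted above is a thread running one step below normal inside a Normal-class process, since the Below Normal class itself has a base of 6.

```python
# Base value of each process priority class (Microsoft's scheduling
# priorities table). Keys follow the Task Manager labels used above.
CLASS_BASE = {
    "Idle": 4,
    "Below Normal": 6,
    "Normal": 8,
    "Above Normal": 10,
    "High": 13,
    "Realtime": 24,
}

# Relative thread priority levels, expressed as offsets from the class base.
THREAD_OFFSET = {
    "lowest": -2,
    "below_normal": -1,
    "normal": 0,
    "above_normal": 1,
    "highest": 2,
}


def base_priority(priority_class: str, thread_level: str = "normal") -> int:
    """Return the 0-31 base priority for a class/thread-level pair."""
    realtime = priority_class == "Realtime"
    # The 'idle' and 'time_critical' thread levels are pinned to fixed values
    # rather than being offsets from the class base.
    if thread_level == "idle":
        return 16 if realtime else 1
    if thread_level == "time_critical":
        return 31 if realtime else 15
    return CLASS_BASE[priority_class] + THREAD_OFFSET[thread_level]


print(base_priority("Normal"))                  # default for most software
print(base_priority("Normal", "below_normal"))  # one step below the default
print(base_priority("Below Normal"))            # Task Manager's 'Below Normal'
```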

Of the normal benchmarks we run, this issue flared up mainly with the rendering tasks like CineBench, Corona, POV-Ray, but also happened with yCruncher and Keyshot (a visualization tool). In speaking to others, it appears that sometimes Chrome has a similar issue. The only way to fix these programs was to go into task manager and either (a) change the thread priority to Normal or higher, or (b) change the thread affinity to only P-cores. Software such as Process Lasso can be used to make sure that every time these programs are loaded, the priority is bumped up to normal.
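Option (b) can also be scripted rather than clicked through each time. The sketch below builds the hexadecimal affinity mask that the Windows `start /affinity` command accepts. It assumes the logical processors are numbered with the P-core threads first (0 to 15 on a 12900K with Hyper-Threading enabled) and the E-cores after them; that ordering is an assumption worth checking in Task Manager on your own system. The function names and the example path are invented for illustration.

```python
def p_core_affinity_mask(p_cores: int = 8, threads_per_p_core: int = 2) -> int:
    """Bitmask with one bit set per P-core logical processor.

    Assumes P-core threads occupy the lowest-numbered logical processors,
    so the mask is simply that many low bits set.
    """
    logical = p_cores * threads_per_p_core
    return (1 << logical) - 1


def start_command(exe: str, mask: int) -> str:
    """Build a cmd.exe line that launches `exe` restricted to `mask`.

    `start` expects the mask in hexadecimal; the empty "" is the window title.
    """
    return f'start "" /affinity {mask:X} "{exe}"'


mask = p_core_affinity_mask()  # 8 P-cores x 2 threads on a 12900K
print(hex(mask))
print(start_command(r"C:\path\to\renderer.exe", mask))  # hypothetical path
```

This only pins the program to the P-cores at launch. It does not touch priority, so software that lowers its own priority will still run at that lower priority, just on the faster cores.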

474 Comments


Netmsm - Sunday, November 7, 2021 - link

I believe, we're not talking about ISO-efficiency or manufacturing or engineering details as facts! These are facts but in the appropriate discussion. Here, we have results. These results are produced by all those technological efforts. In fact, those theoretical improvements are getting concluded in these pragmatical information. Therefore, we should NOT wink at performance per watt in RESULTS - not ISO-related matters.

So, the fact, my friend, is Intel new architecture does tend to suck 70-80 percent more power and give 50-60 percent more heat. Just by overclocking 100MHz 12900k jumps from ~80-85 to 100 degrees centigrade while consuming ~300 watts.

Once in past, AMD tried to get ahead of Nvidia by 6990 in performance because they coveted the most powerful graphic card title. AMD made the hottest and the noisiest graphic card in the history and now Intel is mimicking :))

One can argue that it is natural when you cannot stop or catch a rival so try to do some chicaneries. As it is very clear that Anandtech deliberately does not tend to put even the nominal TDP of Intel 12900k in their benches. I loathe this iniquitous practice!

Wrs - Sunday, November 7, 2021 - link

@Netmsm I believe the mistake is construing performance-per-watt (PPW) of a consumer chip as indicative of PPW for a future server chip based on the same core. Consumer chips are typically optimized for performance-per-area (PPA) because consumers want snappiness and they are afraid of high purchase costs while simultaneously caring much less than datacenters about cost of electricity.

Netmsm - Monday, November 8, 2021 - link

@Wrs You cannot totally separate efficiency of consumer and enterprise chips!

As an incontrovertible fact, architecture is what primarily (not completely) determines the efficacy of a processor.

Is Intel going to kit out upcoming server CPUs in an improved architecture?

Wrs - Monday, November 8, 2021 - link

@Netmsm Architecture, process, and configuration all can heavily impact efficiency/PPW. I’m not aware of any architectural reason that Golden Cove would be much less efficient. It’s a mildly larger core, but it doesn’t have outrageous pipelining or execution imbalances. It derives from a lineage of reasonably efficient cores, and they had to be as they remained on aging 14nm. Processwise Intel 7 isn’t much less efficient than TSMC N7, either. (It could even be more efficient, but analysis hasn’t been precise enough to tell.) But clearly ADL in a 12900/12700k is set up to be inefficient yet performant at high load by virtue of high frequency/voltage scaling and thermal density. I could do almost the same on a dual CCD Ryzen, before running into AM4 socket limits. That’s obviously not how either company approaches server chips.

Netmsm - Tuesday, November 9, 2021 - link

When you cannot infer or appraise or guess we should drop it for now and wait for real tests of upcoming server chips to come.

regards ^_^

GamingRiggz - Tuesday, March 15, 2022 - link

Thankfully you are no engineer.

AbRASiON - Thursday, November 4, 2021 - link

AMD would have less of an issue If the 5000 processors weren’t originally priced gouged.

Many people held off switching teams due to that. Instead of the processor being an amazing must buy, it was just a decent purchase. So they waited.

If you’re On the back foot in this game, you should be competing hard always to get that stranglehold and mind share.

I’m glad they’re competing though and hopefully they release some very competitive and REASONABLY PRICED products in the near future.

Fataliity - Thursday, November 4, 2021 - link

Their revenue and marketshare #'s beg to disagree.

Spunjji - Friday, November 5, 2021 - link

They've been selling every CPU they can make. There are shortages of every Zen 3 based notebook out there (to the extent that some OEMs have cancelled certain models) and they're selling so many products based on the desktop chiplets that Threadripper 5000 simply isn't a thing. You ought to factor that into your assessment of how they're doing.

BillBear - Thursday, November 4, 2021 - link

Is anyone gullible enough to forget more than a decade of price gouging, low core counts and nearly nonexistent performance increases we got from Intel, vs. the high core counts, increasing performance, and lower prices we got from AMD?