Apple's M1 Pro, M1 Max SoCs Investigated: New Performance and Efficiency Heights

by Andrei Frumusanu on October 25, 2021 9:00 AM EST- Posted in

- Laptops

- Apple

- MacBook

- Apple M1 Pro

- Apple M1 Max

Section by Ryan Smith

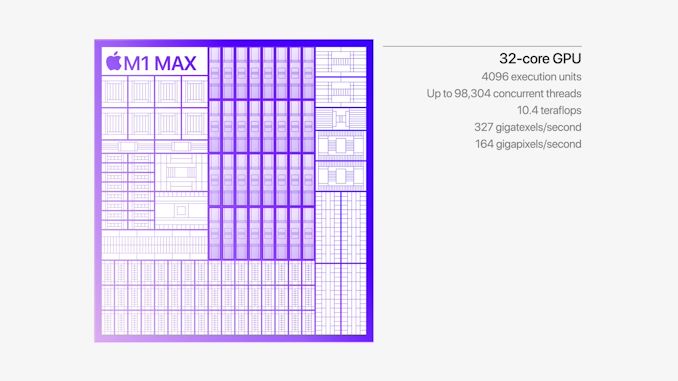

GPU Performance: 2-4x For Productivity, Mixed Gaming

Arguably the star of the show for Apple’s latest Mac SoCs is the GPU, as well as the significant resources that go into feeding it. While Apple doesn’t break down how much of their massive, 57 billion transistor budget on the M1 Max went to the GPU, it and its associated hardware were the only thing to be quadrupled versus the original M1 SoC. Last year Apple proved that it could develop competitive, high-end CPU cores for a laptop; now they are taking their same shot on the GPU side of matters.

Driving this has been one of the biggest needs for Apple – and one of the greatest friction points between Apple and former partner Intel – which is GPU performance. With tight control over their ecosystem and little fear over pushing (or pulling) developers forward, Apple has been on the cutting edge of expanding the role of GPUs within a system for nearly the past two decades. GPU-accelerated composition (Quartz Extreme), OpenCL, GPU-accelerated machine learning, and more have all been developed or first implemented by Apple. Though often rooted in efficiency gains and getting incredibly taxing tasks off of the CPU, these have also pushed up Apple’s GPU performance requirements.

This has led to Apple using Intel’s advanced Iris iGPU configurations over most of the last 10 years (often being the only OEM to make significant use of them). But even Iris was never quite enough for what Apple would like to do. For their largest 15/16-inch MacBook Pros, Apple has been able to turn to discrete GPUs to make up the difference, but the lack of space and power for a dGPU in the 13-inch MacBook Pro form factor has been a bit more constraining. Ultimately, all of this has pushed Apple to develop their own GPU architecture, not only to offer a complete SoC for lower-tier parts, but also to be able to keep the GPU integrated in their high-end parts as well.

It’s the latter that is arguably the unique aspect of Apple’s position right now. Traditional OEMs have been fine with a small(ish) CPU and then adding a discrete GPU as necessary. It’s cost and performance effective: you only need to add as big of a dGPU as the customer needs performance, and even laptop-grade dGPUs can offer very high performance. But like any other engineering decision, it’s a trade-off: discrete GPUs result in multiple display adapters, require their own VRAM, and come with a power/cooling cost.

Apple has long been a vertically integrated company, so it’s only fitting that they’ve been focused on SoC integration as well. Bringing what would have been the dGPU into their high-end laptop SoCs eliminates the drawbacks of a discrete part. And, again leveraging Apple’s ecosystem advantage, it means they can provide the infrastructure for developers to use the GPU in a heterogeneous computing fashion – able to quickly pass data back and forth with the CPU since they’re all processing blocks on the same chip, sharing the same memory. Apple has already been pushing this paradigm for years in its A-series SoC, but this is still new territory in the laptop space – no PC processor has ever shipped with such a powerful GPU integrated into the main SoC.

The trade-off for Apple, in turn, is that the M1 inherits the costs of providing such a powerful GPU. That not only includes die space for the GPU blocks themselves, but the fatter fabric needed to pass that much data around, the extra cache needed to keep the GPU immediately fed, and the extra external memory bandwidth needed to keep the GPU fed over the long run. Integrating a high-end GPU means Apple has inherited the design and production costs of a high-end GPU.

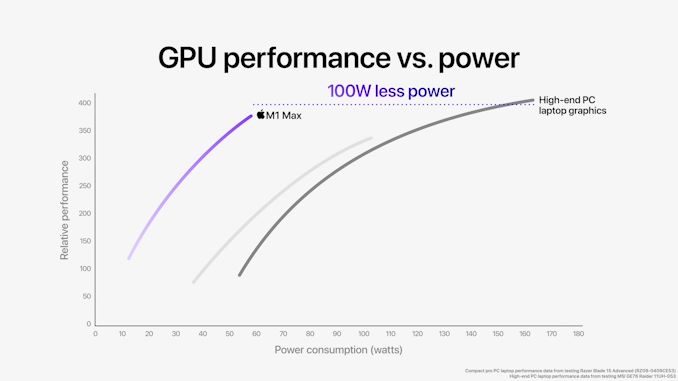

ALUs and GPU cores aside, the most interesting thing Apple has done to make this possible comes via their memory subsystem. GPUs require a lot of memory bandwidth, which is why discrete GPUs typically come with a sizable amount of dedicated VRAM using high-speed interfaces like HBM2 or GDDR6. But being power-minded and building their own SoC, Apple has instead built an incredibly large LPDDR5 memory interface; M1 Max has a 512-bit interface, four times the size of the original M1’s 128-bit interface. To be sure, it’s always been possible to scale up LPDDR in this fashion, but at least in the consumer SoC space, it’s never been done before. With such a wide interface, Apple is able to give the M1 Max 400GB/sec (technically, 409.6 GB/sec) of memory bandwidth, which is comparable to the amount of bandwidth found on NVIDIA’s fastest laptop SKUs.
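For reference, the 409.6 GB/sec figure falls straight out of the bus width and the LPDDR5-6400 transfer rate. The snippet below is only a rough sketch of that arithmetic: the helper name `peak_bandwidth_gb_s` is made up for illustration, and it assumes decimal gigabytes and a theoretical peak (sustained bandwidth in real workloads will be lower).

```python
def peak_bandwidth_gb_s(bus_width_bits: int, transfer_rate_mt_s: float) -> float:
    """Theoretical peak DRAM bandwidth in GB/s (decimal gigabytes).

    Hypothetical helper for illustration only: bytes moved per transfer
    (bus width / 8) multiplied by the number of transfers per second.
    """
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * transfer_rate_mt_s * 1e6 / 1e9


if __name__ == "__main__":
    # Bus widths and memory speeds as quoted in this article.
    for name, width_bits, rate_mt_s in [
        ("M1 Max", 512, 6400),
        ("M1 Pro", 256, 6400),
        ("M1", 128, 4266),
    ]:
        print(f"{name}: {peak_bandwidth_gb_s(width_bits, rate_mt_s):.1f} GB/s")
```

The same calculation shows why halving the bus on the M1 Pro halves its peak bandwidth: the per-pin rate is identical, so only the width term changes.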

Ultimately, this enables Apple to feed their high-end GPU with a similar amount of bandwidth as a discrete laptop GPU, but with a fraction of the power cost. GDDR6 is very fast per pin – over 2x the rate – but efficient it ain’t. So while Apple does lose some of their benefit by requiring such a large memory bus, they more than make it up by using LPDDR5. This saves them over a dozen Watts under load, not only benefitting power consumption, but keeping down the total amount of heat generated by their laptops as well.

M1 Max and M1 Pro: Select-A-Size

There is one more knock-on effect for Apple in using integrated GPUs throughout their laptop SoC lineup: they needed some way to match the scalability afforded by dGPUs. As nice as it would be for every MacBook Pro to come with a 57 billion transistor M1 Max, the costs and chip yields of such a thing are impractical. The actual consumer need isn’t there either; M1 Max is designed to compete with high-end discrete GPU solutions, but most consumer (and even a lot of developer) workloads simply don’t fling around enough pixels to fully utilize M1 Max. And that’s not meant to be a subtle compliment to Apple – M1 Max is overkill for desktop work and arguably even a lot of 1080p-class gaming.

So Apple has developed not one, but two new M1 SoCs, allowing Apple to have a second, mid-tier graphics option below M1 Max. Dubbed M1 Pro, this chip has half of M1 Max’s GPU clusters, half of its system level cache, and half of its memory bandwidth. In every other respect it’s the same. M1 Pro is a much smaller chip – Andrei estimates it’s around 245mm² in size – which makes it cheaper to manufacture for Apple. So for lower-end 14- and 16-inch MacBook Pros that don’t need high-end graphics performance, Apple is able to offer a smaller slice of their big integrated GPU still paired with all of the other hardware that makes the latest M1 SoCs as a whole so powerful.

| Apple Silicon GPU Specifications | M1 Max | M1 Pro | M1 |
|---|---|---|---|
| ALUs | 4096 (32 Cores) | 2048 (16 Cores) | 1024 (8 Cores) |
| Texture Units | 256 | 128 | 64 |
| ROPs | 128 | 64 | 32 |
| Peak Clock | 1296MHz | 1296MHz | 1278MHz |
| Throughput (FP32) | 10.6 TFLOPS | 5.3 TFLOPS | 2.6 TFLOPS |
| Memory Clock | LPDDR5-6400 | LPDDR5-6400 | LPDDR4X-4266 |
| Memory Bus Width | 512-bit (IMC) | 256-bit (IMC) | 128-bit (IMC) |

Taking a quick look at the GPU specifications across the M1 family, Apple has essentially doubled (and then doubled again) their integrated GPU design. Whereas the original M1 had 8 GPU cores, M1 Pro gets 16, and M1 Max gets 32. Every aspect of these GPUs has been scaled up accordingly – there are 2x/4x more texture units, 2x/4x more ROPs, 2x/4x the memory bus width, etc. All the while the GPU clockspeed remains virtually unchanged at about 1.3GHz. So the GPU performance expectations for M1 Pro and M1 Max are very straightforward: ideally, Apple should be able to get 2x or 4x the GPU performance of the original M1.
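The FP32 throughput figures in the table can be sanity-checked from the ALU counts and peak clocks. This is a back-of-the-envelope sketch rather than anything Apple has published: the helper name `fp32_tflops` is hypothetical, and it assumes the usual convention that each ALU retires one fused multiply-add (counted as 2 FLOPs) per clock.

```python
def fp32_tflops(alus: int, clock_mhz: float, flops_per_alu_per_clock: int = 2) -> float:
    """Theoretical peak FP32 throughput in TFLOPS.

    Hypothetical helper for illustration only. The default of 2 FLOPs
    per ALU per clock is an assumption (one fused multiply-add per cycle).
    """
    return alus * flops_per_alu_per_clock * clock_mhz * 1e6 / 1e12


if __name__ == "__main__":
    # ALU counts and peak clocks as listed in the specification table.
    specs = {
        "M1 Max": (4096, 1296),
        "M1 Pro": (2048, 1296),
        "M1": (1024, 1278),
    }
    baseline = fp32_tflops(*specs["M1"])
    for name, (alus, clock_mhz) in specs.items():
        tflops = fp32_tflops(alus, clock_mhz)
        print(f"{name}: {tflops:.1f} TFLOPS ({tflops / baseline:.2f}x M1)")
```

Because the clocks barely move between chips, the ratios land almost exactly on 2x and 4x, which is the ideal scaling the benchmarks are measured against.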

Otherwise, not reflected in the specifications or in Apple’s own commentary, Apple will need to have scaled up their fabric as well. Connecting 32 cores means passing around a massive amount of data, and the original M1’s fabric certainly wouldn’t have been up to the task. Still, whatever Apple had to do has been accomplished (and concealed) very neatly. From the outside the M1 Pro/Max GPUs behave just like M1, so even with those fabric changes, this is very clearly a virtually identical GPU architecture.

Synthetic Performance

Finally diving into GPU performance itself, let’s start with our synthetic benchmarks.

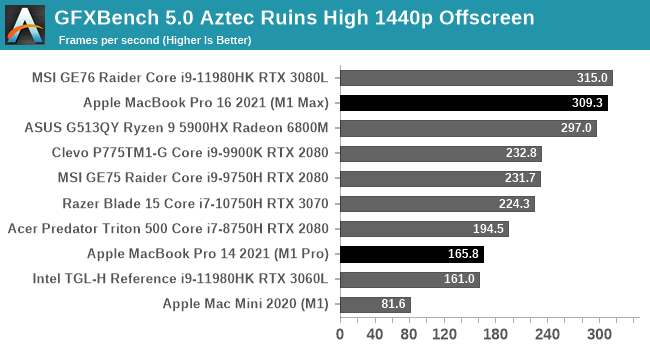

In an effort to try to get as much comparable data as possible, I’ve started with GFXBench 5.0 Aztec Ruins. This is one of our standard laptop benchmarks, so we can directly compare the M1 Max and M1 Pro to high-end PC laptops we’ve recently tested. As for Aztec Ruins itself, this is a benchmark that can scale from phones to high-end laptops; it’s natively available for multiple platforms and it has almost no CPU overhead, so the sky is the limit on the GPU front.

Aztec makes for a very good initial showing for Apple’s new SoCs. M1 Max falls just short of topping the chart here, coming in a few FPS behind MSI’s GE76, a GeForce RTX 3080 Laptop-equipped notebook. As we’ll see, this is likely to be something of a best-case scenario for Apple since Aztec scales so purely with GPU performance (and has a very good Metal implementation). But it goes to show where Apple can be when everything is just right.

We also see the scalability of the M1 family in action here. The M1 -> M1 Pro -> M1 Max performance progression is almost exactly 2x at each step.

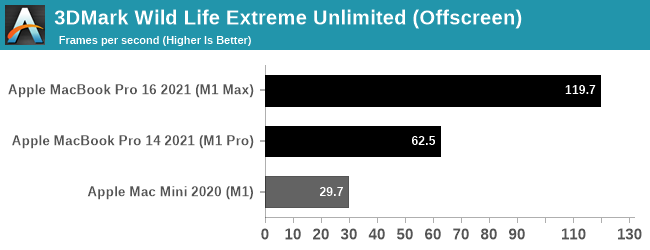

Since macOS can also run iOS applications, I’ve also tossed in the 3DMark Wild Life Extreme benchmark. This is another cross-platform benchmark that’s available on mobile and desktop alike, with the Extreme version particularly suited for measuring PCs and Macs. This is run in Unlimited mode, which draws off-screen in order to ensure the GPU is fully weighed down.

Since 3DMark Wild Life Extreme is not one of our standard benchmarks, we don’t have comparable PC data to draw from. But from the M1 Macs we can once again see that GPU performance is scaling almost perfectly among the SoCs. The M1 Pro doubles performance over the M1, and the M1 Max doubles it again.

Gaming Performance

Switching gears, even though macOS isn’t an especially popular gaming platform, there are plenty of games to be had on the platform, especially as tools like MoltenVK have made it easier for developers to get a Metal API render backend up and running. With that said, the vast majority of major macOS cross-platform games are still x86 only, so a lot of games are still reliant on Rosetta. Ideally products like the new MacBook Pros will push developers to develop Arm binaries as well, but that will be a bigger ask.

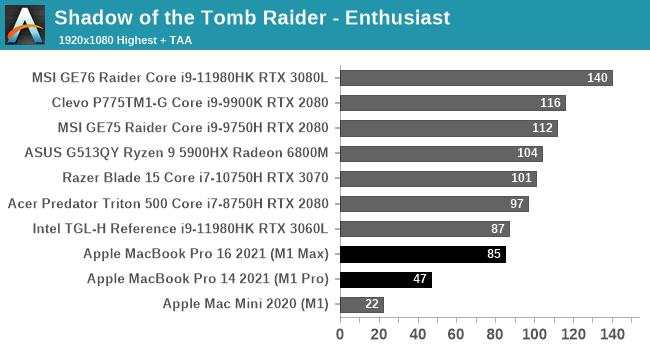

We’ll start with Shadow of the Tomb Raider, which is another one of our standard laptop benchmarks. This gives us a lot of high-end laptop configurations to compare against.

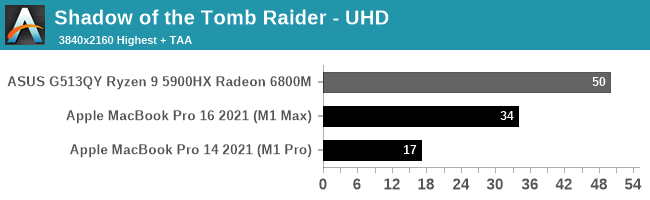

Unfortunately, Apple’s strong GPU performance under our synthetic benchmarks doesn’t extend to our first game. The M1 Macs bring up the tail-end of the 1080p performance chart, and they’re still well behind the Radeon 6800M at 4K.

Digging deeper, there are a couple of factors in play here. First and foremost, the M1 Max in particular is CPU limited at 1080p; the x86-to-Arm translation via Rosetta is not free, and even though Apple’s CPU cores are quite powerful, they’re hitting CPU limitations here. We have to go to 4K just to help the M1 Max fully stretch its legs. Even then the 16-inch MacBook Pro is well off the 6800M, though we’re definitely GPU-bound at this point, as reported by the game itself and demonstrated by the 2x performance scaling from the M1 Pro to the M1 Max.

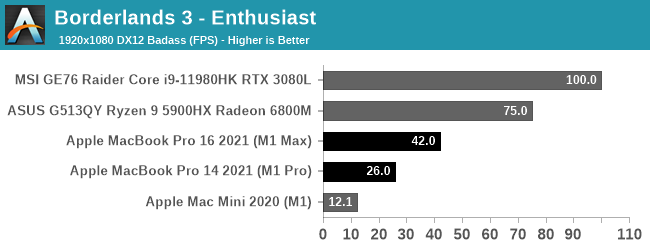

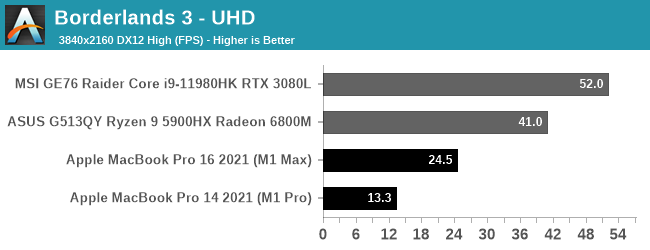

Our second game is Borderlands 3. This is another macOS port that is still x86-only, and part of our newer laptop benchmarking suite.

Borderlands 3 ends up being even worse for the M1 chips than Shadow of the Tomb Raider. The game seems to be GPU-bound at 4K, so it’s not a case of an obvious CPU bottleneck. And truthfully, I don’t know enough about the porting work that went into the Mac version to say whether it’s even a good port to begin with. So I’m hesitant to lay this all on the GPU, especially when the M1 Max trails the RTX 3080 by over 50%. Still, if you’re expecting to get your Claptrap fix on an Apple laptop, a 2021 MacBook Pro may not be the best choice.

Productivity Performance

Last, but not least, let’s take a look at some GPU-centric productivity workloads. These are not part of our standard benchmark suite, so we don’t have comparable data on hand. But the two benchmarks we’re using are both standardized benchmarks, so the data is portable (to an extent).

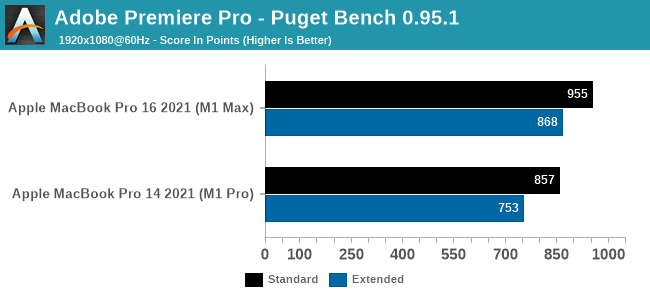

We’ll start with Puget Systems’ PugetBench for Premiere Pro, which is these days the de facto Premiere Pro benchmark. This test involves multiple playback and video export tests, as well as tests that apply heavily GPU-accelerated and heavily CPU-accelerated effects. So it’s more of an all-around system test than a pure GPU test, though that’s fitting for Premiere Pro given its enormous system requirements.

On a quick note here, this benchmark seems to be sensitive to both the resolution and refresh rate of the desktop – higher refresh rates in particular seem to boost performance. This means that the 2021 MacBook Pros’ 120Hz ProMotion displays get an unexpected advantage here. So to try to make things more apples-to-apples, all of our testing is with a 1920x1080 desktop at 60Hz. (For reference, a MBP16 scores 1170 when using its native display.)

What we find is that both Macs perform well in this benchmark – a score near 1000 would match a high-end, RTX 3080-equipped desktop – and from what I’ve seen from third party data, this is well, well ahead of the 2019 Intel CPU + AMD GPU 16-inch MacBook Pro.

As for how much of a role the GPU alone plays, what we see is that the M1 Max adds about 100 points on both the standard and extended scores. The faster GPU helps with GPU-accelerated effects, and should help with some of the playback and encoding workload. But there are other parts that fall to the CPU, so the GPU alone doesn’t carry the benchmark.

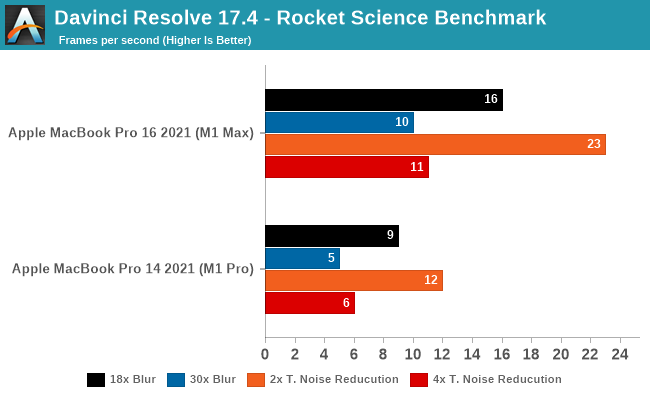

Our other productivity benchmark is DaVinci Resolve, the multi-faceted video editor, color grading, and VFX video package. Resolve comes up frequently in Apple’s promotional materials; not only is it popular with professional Mac users, but color grading and other effects from the editor are both GPU-accelerated and very resource intensive. So it’s exactly the kind of professional workload that benefits from a high-end GPU.

As Resolve doesn’t have a standard test – and Puget Systems’ popular test is not available for the Mac – we’re using a community-developed benchmark. AndreeOnline’s Rocket Science benchmark uses a variety of high-resolution rocket clips, processing them with either a series of increasingly complex blur or temporal noise reduction filters. For our testing we’re using the test’s 4K ProRes video file as an input, though the specific video file has a minimal impact relative to the high cost of the filters.

All of these results are well below real-time performance, but it’s to be expected from the complex nature of the filters. Still, the M1 Max comes closer than I was expecting to matching the clip’s original framerate of 25fps; an 18-step blur operation still moves at 16fps, and a 2-step noise reduction is 23fps. This is a fully GPU-bottlenecked scenario, so ramping those up to even larger filter sets has the expected impact on GPU performance.

Meanwhile, this is another case of the M1 Max’s GPU performance scaling very closely to 2x that of the M1 Pro’s. With the exception of 18-step blur, the M1 Max is 80% faster or better. All of which underscores that when a workload is going to be throwing around billions of pixels like Resolve, if it’s GPU-accelerated it can certainly benefit from the M1 Max’s more powerful GPU.

Overall, it’s clear that Apple’s ongoing experience with GPUs has paid off with the development of their A-series chips, and now their M1 family of SoCs. Apple has been able to scale up the small and efficient M1 into a far more powerful configuration; Apple built SoCs with 2x/4x the GPU hardware of the original M1, and that’s almost exactly what they’re getting out of the M1 Pro and M1 Max, respectively. Put succinctly, the new M1 SoCs prove that Apple can build the kind of big and powerful GPUs that they need for their high-end machines. AMD and NVIDIA need not apply.

With that said, the GPU performance of the new chips relative to the best in the world of Windows is all over the place. GFXBench looks really good, as does the MacBooks’ performance in productivity workloads. For the true professionals out there – the people using cameras that cost as much as a MacBook Pro and software packages that are only slightly cheaper – the M1 Pro and M1 Max should prove very welcome. There is a massive amount of pixel pushing power available in these SoCs, so long as you have the workload required to put it to good use.

However, gaming is a poorer experience, as the Macs aren’t catching up with the top chips in either of our games. Given the use of x86 binary translation and macOS’s status as a traditional second-class citizen for gaming, these aren’t apples-to-apples comparisons. But with the loss of Boot Camp, it’s something to keep in mind. If you’re the type of person who likes to play intensive games on your MacBook Pro, the new M1 regime may not be for you – at least not at this time.

493 Comments


Ppietra - Thursday, October 28, 2021

Gosh, no! not 2.35m people. You are so obsessed with a GPU having everything on silicon for itself that you fail to see how much more cache resources the SoC has when compared with other processors. Even if there was 1GB of cache you would still be complaining because the CPU can use it. Get some common sense.

richardnpaul - Friday, October 29, 2021

You're wrong. I've shown that your fringe is larger than some country's populations and you've dismissed it and pivoted back to another talking point, a point that is a misrepresentation of what I was saying.

I was wondering what the effect was on performance of the CPU and GPU when both are being used and both are using the shared cache simultaneously given that we know that in isolation with just their own cache it improves efficiency. I'm not and haven't been saying it's an actual issue, it's something that could be tested, and also, we have no clue as to whether it's a real world problem or not.

The article was the one that was talking about the GPU and it having access to all the 512bit memory interface, I was challenging that saying that actually the CPU is going to use some of that bandwidth, but the benefit of the design is that when the GPU needs more and the CPU isn't using it it has access to it and vice-versa.

And if you knew anything about common sense you wouldn't say to get some of it. You're rude and dismissive of anyone else who doesn't fit into your world view, you might want to do something about fixing that about yourself; but probably you won't.

Ppietra - Friday, October 29, 2021

No, you haven’t shown anything, because for whatever reason you continue to ignore how big the cache is when compared with anything else out there, and how big the L2 cache is, also when compared with anything else out there - something that they don’t share. Thirdly, if you even tried to pay attention to what was said, you would see that the M1 Max has double the system cache size, and yet not much different CPU performance.

You also continue to ignore that in a game (which is the thing you are obsessing about), CPU and GPU work together. Not having to send instructions to an external GPU, and CPU and GPU being able to work on the same data stored in cache, gives a big performance improvement, it removes bottlenecks. So you obsessing because the CPU can use system cache during a game makes no sense, because the sharing can actually give a boost in game performance.

Fringe cases would never be equivalent to every gamer.

richardnpaul - Friday, October 29, 2021

"continue to ignore how big the cache is when compared with anything else out there"Like the previously mentioned RX 6800 which has 256MB? I've not mentioned the RX6800 (infinity) cache at all?

The L2 cache is large, but then it doesn't have an L3 cache. This is a balancing act that chip architects engage with all the time. It seems that zen3 and the M1 Max graphs are very similar for latency with full random being a little higher but most everything else looking close enough that I'm not going to stick my neck out and declare either a winner.

"and CPU and GPU being able to work on the same data stored in cache, gives a big performance improvement, it removes bottlenecks"

This is not represented in the benchmarking, which might be because there needs to be some specific optimisation done, or it could be due to something else. I expect the situation to improve though, probable with more focus on the M1 Pro which will carry over to the Max.

Ppietra - Friday, October 29, 2021

You are not going to see something in benchmark that is inherent to how the system works, how it manages memory, there is no off switch. You need to have the knowledge of how things work.

"The L2 cache is large, but then it doesn't have an L3 cache."????????????????

System cache behaves as if it was a L3 cache for the CPU. How can you say that zen 3 and M1 are similar when the M1 Max has 3.5 times the cache size of a laptop Ryzen??? Just the L2 cache is larger than all the cache available in a laptop Ryzen.

"RX 6800 which has 256MB?" A RX6800 isn’t a laptop chip. [" laptop processors " - - it’s there in one of the first comments]

richardnpaul - Saturday, October 30, 2021

This is where you need to look at the latency graphs for M1 Pro/Max and then go and find the Zen 3 article and compare the graphs for yourself. And I haven't been comparing the M1 Max to a laptop Ryzen, I have repeatedly compared it to a single zen3 core complex where they are much closer in terms of total cache. Compare the 5nm M1 Max to the 7nm Zen3 all you like, with its much higher transistor count. You're not talking about the same thing as I was all along.

I have repeatedly compared whatever is the closest comparison, regardless of where its used to get a helpful idea of what benefits it could bring. That Apple have managed to do this in a laptop's power budget is, and I'll quote myself here "a technological marvel". The M1 Pro/Max are combined GPUs and CPUs, that means you can compare them to standalone GPUs and to CPUs. You're the one who can't seem to understand that they both need to stand on their own merits.

Ppietra - Saturday, October 30, 2021

Really!??? You want to compare a laptop processor with Desktop chips that can consume 3-4 times more than the all laptop, and you think that is close? no common sense whatsoever!

But guess what even then a M1 Max has more cache available than a consumer desktop Ryzen!

The latency graphs are for the CPU (which, by the way you can actually see differences because of the size of the level 1 and 2 caches even with desktop Ryzen), they don’t tell you anything when you want to compare the response latency between CPU and GPU, nor about the performance boost from processing the CPU and GPU being able to process the same data in cache without having to access RAM.

Who said you cannot compare with dedicated GPUs?

richardnpaul - Sunday, October 31, 2021

I'm comparing architectures, not products, that's why it seems to you like this is an "unfair" comparison. I also bear in mind what node the architecture is at, as that makes quite a marked difference due to transistor budget constraints.

Yes M1 Max has more cache, and where you're not using the GPU (a bit difficult as you'll be running an OS which has a GUI, but let's say that that is basically negligible) it should have a reasonable impact on usages which are heavy on memory bandwidth. In fact you can see that in the benchmarks, there are a number of which heavily reward the M1 Max over anything else, not that many in total but certain use cases will see great uplifts, just the same as Milan-X and the equivalent chiplets in Ryzen CPUs which we'll get to see in the next few days will have benefits in certain use cases.

What I was saying way back was, what's the contention there, when running a game, how much benefit is the GPU getting and if any how much is the CPU losing when contention starts to happen on the SLC. Caches usually work on some kind of LRU basis, so if two separate things are trying to use the same cache (which can have benefits where they are both using the cache for the same data) both suffer as their older cache data is evicted by the other processor. That should be measurable. Workloads that share the same data, if its small enough to fit into the 48MB on the Max, should see huge benefits, and yes, one application that has been highlighted has taken advantage of this. But we are yet to see others take this up, AMD, having tried this before will tell you that if you can't get broad software support that it's a dead duck, however, Apple have often made long term bets and stuck with them over a number of years, which could make the difference.

Apple have approached this in two different ways. They have created a monster APU, AMD's effort was... safe, I think they thought that they could iterate over time to large better designs, however, no-one wanted to put that much time and effort into a bet that AMD would deliver in the future when Intel wasn't making similar noises.

They're on a cutting edge node, with a cutting edge design, and there's no other choice for Apple users, sure you can get the original M1 or M1 Pro, but there's no Intel to get in the way and the only downside of the other chips are that they will be slower due to having fewer resources but it's all much the same design.

OreoCookie - Wednesday, October 27, 2021

No, the 24 MB = 2 x 12 MB are the shared L2 caches amongst the performance core clusters, the two efficiency cores share another 4 MB (so the M1 Pro and M1 Max have close to Zen 3 desktop-level L2 caches if you ignore the system level cache). These caches are not shared between CPUs and GPUs at all. Only the system-level cache of yet *another* 48 MB is shared amongst all logic that has access to main memory. Given that the total memory bandwidth is larger than what CPU and GPU need in a worst-case scenario, I fail to see how this is somehow an edge case.

It seems the memory bandwidth so large that it can accommodate all CPU cores running a memory-intensive workload at full tilt *and* the GPU running a memory-intensive workload with room to spare. Even if you could saturate the memory bandwidth by also using the NPU (ML accelerator) and/or the hardware en/decoder, I think you are really reaching. This would be far beyond the capabilities of any comparable machine. Even much more powerful machines would struggle with such a workload.

richardnpaul - Thursday, October 28, 2021

Yes sorry, I do know that, the 24 in 24/48MB was a reference to the M1 Pro which has half the shared buffer. That shared buffer, I'd need to go back and look at the access times (and compare it to Zen3 desktop) because it's almost on the other side of the chip from the cores.

I do see that they tested a game at 4K, and I know that some games lean more heavily on the onboard RAM on dGPUs and not all games have specific high resolution 4K textures and so use more RAM than others. And it is mentioned on the second page that they didn't see anything that pushed the GPU over using 90GB/s of bandwidth and I don't know if that they were measuring during that testing run (I would expect that they were but you know what they say about assumptions :D).

I think that you're right and that the architecture team probably went overboard on the bandwidth anticipating certain edge case scenarios where the system has multiple tasks loading multiple parts of the CPU and we'll see some rebalancing in future designs. I would like to see a game run with or without mods that does stress the GPU memory subsystem (games aren't usually hammering the CPU bandwidth so more should be available to the GPU, which may very well never be able to saturate it by design, but the cache may be saturated). This will also tell us something about longevity of the SoC too.

I don't think that I'm reaching, more that I see systems lasting for 7+ years, and when newer generations of hardware move on unusual usage when some hardware is new suddenly becomes common place because newer hardware is a evolving target over time and sometimes software does actually utilise it. (Sometimes CPU bugs rob you of performance and make your hardware feel slow, other times it's just that software is a bit more demanding now than it was years before when you got it)