The Apple A15 SoC Performance Review: Faster & More Efficient

by Andrei Frumusanu on October 4, 2021 9:30 AM EST

Posted in: Mobile, Apple, Smartphones, Apple A15

A few weeks ago, Apple announced its newest iPhone 13 series devices, a set of phones powered by the new Apple A15 SoC. Today, in advance of the full device review which we’ll cover in the near future, we’re taking a closer look at the new generation chipset, looking at what exactly Apple has changed in the new silicon, and whether it lives up to the hype.

This year’s announcement of the A15 was a bit odder on Apple’s PR side of things, notably because the company generally avoided making any generational comparisons between the new design and Apple’s own A14. Particularly notable was the fact that Apple preferred to describe the SoC in the context of the competition; while that’s not unusual on the Mac side of things, it stood out more than usual for this year’s iPhone announcement.

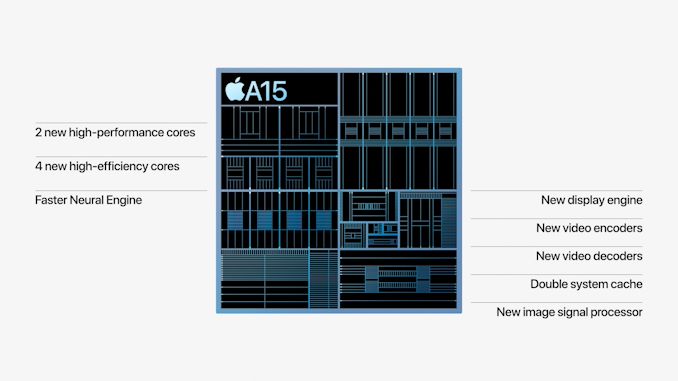

The few concrete factoids about the A15 were that Apple is using new designs for their CPUs, a faster Neural Engine, a new 4- or 5-core GPU depending on the iPhone variant, and a whole new display pipeline and media hardware block for video encoding and decoding, alongside new ISP improvements for camera quality advancements.

On the CPU side of things, the claimed improvements were very vague: Apple quoted the CPU as being 50% faster than the competition. The GPU performance metrics were presented in the same manner, with the 4-core GPU A15 described as 30% faster than the competition, and the 5-core variant as 50% faster. We’ve put the SoC through its initial paces, and in today’s article we’ll be focusing on the exact performance and efficiency metrics of the new chip.

Frequency Boosts; 3.24GHz Performance & 2.0GHz Efficiency Cores

Starting off with the CPU side of things, the new A15 is said to feature two new CPU microarchitectures, both for the performance cores as well as the efficiency cores. The first few reports about the performance of the new cores were focused around the frequencies, which we can now confirm in our measurements:

Maximum Frequency vs Loaded Threads (Per-Core Maximum MHz)

| Apple A15 | 1 | 2 | 3 | 4 |
|---|---|---|---|---|
| Performance 1 | 3240 | 3180 | | |
| Performance 2 | | 3180 | | |
| Efficiency 1 | 2016 | 2016 | 2016 | 2016 |
| Efficiency 2 | | 2016 | 2016 | 2016 |
| Efficiency 3 | | | 2016 | 2016 |
| Efficiency 4 | | | | 2016 |

Maximum Frequency vs Loaded Threads (Per-Core Maximum MHz)

| Apple A14 | 1 | 2 | 3 | 4 |
|---|---|---|---|---|
| Performance 1 | 2998 | 2890 | | |
| Performance 2 | | 2890 | | |
| Efficiency 1 | 1823 | 1823 | 1823 | 1823 |
| Efficiency 2 | | 1823 | 1823 | 1823 |
| Efficiency 3 | | | 1823 | 1823 |
| Efficiency 4 | | | | 1823 |

Compared to the A14, the new A15 increases the peak single-core frequency of the two-core performance cluster by 8%, now reaching up to 3240MHz compared to the 2998MHz of the previous generation. When both performance cores are active, their operating frequency actually goes up by 10%, with both now running at an aggressive 3180MHz compared to the previous generation’s 2890MHz.

In general, Apple’s frequency increases here are quite aggressive, given that it’s quite hard to push this performance aspect of a design, especially when we’re not expecting major performance gains from the new process node. The A15 should be made on an N5P node variant from TSMC, although neither company really discloses the exact details of the design. TSMC claims a +5% frequency increase over N5, so for Apple to have gone beyond this would indicate an increase in power consumption, something to keep in mind when we dive deeper into the power characteristics of the CPUs.

The E-cores of the A15 are now able to clock up to 2016MHz, a 10.6% increase over the A14’s cores. The frequency here is independent of the performance cores, in that the number of active threads in one cluster doesn’t affect the other cluster, or vice-versa. Apple has made some more interesting changes to the little cores this generation, which we’ll come to in a bit.
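As a quick check on the generational clock gains, the percentages can be recomputed directly from the two frequency tables. This is a minimal sketch: the dictionary keys and the function name are illustrative, and subtracting TSMC's claimed +5% for N5P from each uplift is a deliberately naive comparison, not a power model.

```python
# Peak clocks in MHz, taken from the A14 and A15 frequency tables.
A14_MHZ = {"P-core, 1 thread": 2998, "P-core, 2 threads": 2890, "E-core": 1823}
A15_MHZ = {"P-core, 1 thread": 3240, "P-core, 2 threads": 3180, "E-core": 2016}

# TSMC's claimed frequency gain of N5P over N5, as quoted in the text.
N5P_CLAIMED_UPLIFT_PCT = 5.0


def uplift_percent(old_mhz, new_mhz):
    """Relative frequency increase from old to new, in percent."""
    return (new_mhz - old_mhz) / old_mhz * 100.0


uplifts = {name: uplift_percent(A14_MHZ[name], A15_MHZ[name]) for name in A14_MHZ}

for name, pct in uplifts.items():
    # Naive view of how much of the gain exceeds the node's claimed uplift.
    beyond_node = pct - N5P_CLAIMED_UPLIFT_PCT
    print(f"{name}: {A14_MHZ[name]} -> {A15_MHZ[name]} MHz "
          f"(+{pct:.1f}%, {beyond_node:+.1f} points vs. the N5P claim)")
```

All three uplifts land above the 5% the process node is claimed to provide, which is the basis for expecting higher power draw at peak clocks.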

Giant Caches: Performance CPU L2 to 12MB, SLC to Massive 32MB

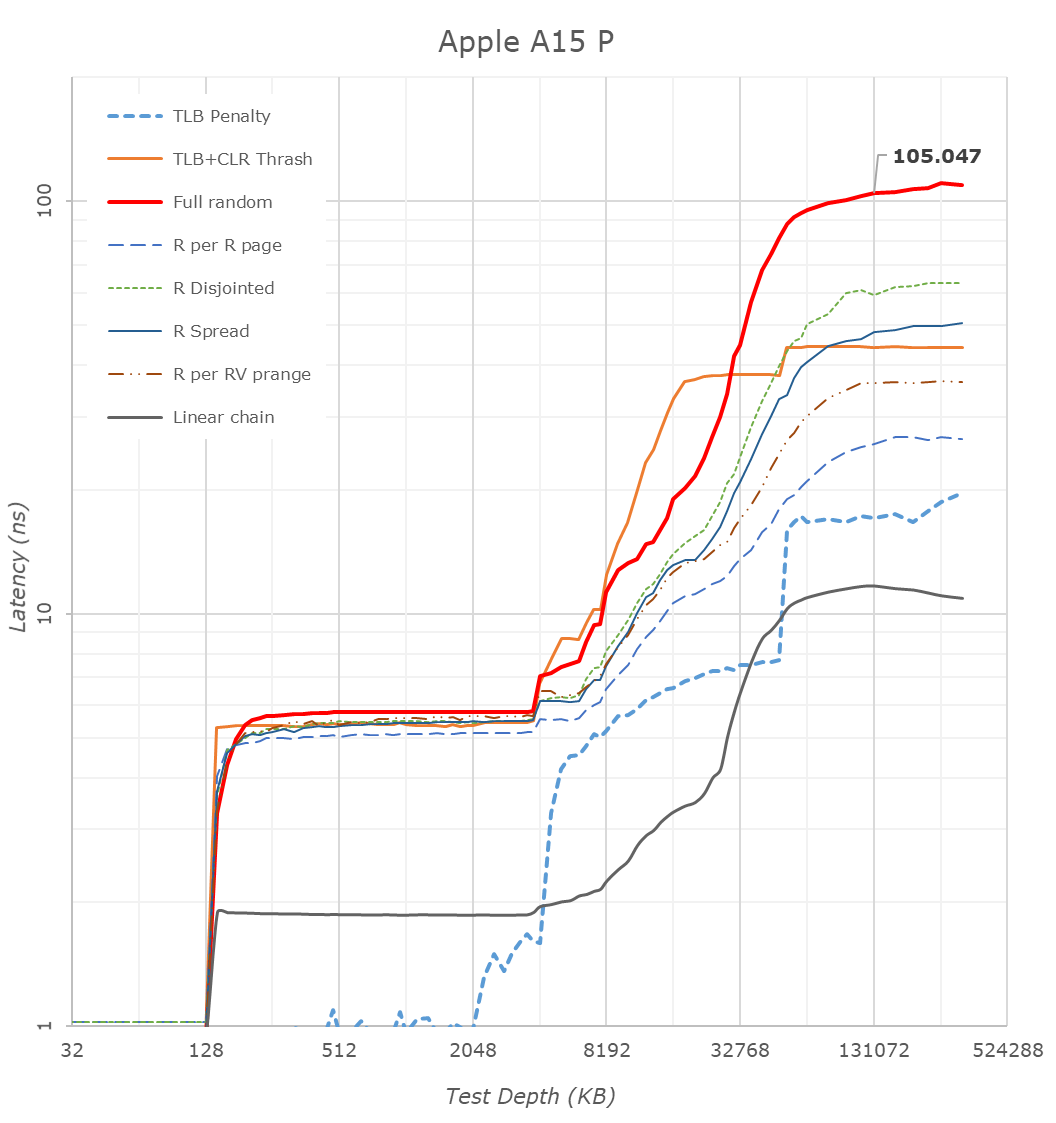

One more straightforward technical detail Apple revealed during its launch was that the A15 now features double the system cache compared to the A14. Two years ago we detailed the A13’s new SLC, which had grown from 8MB in the A12 to 16MB, a size that was kept constant in the A14 generation. Apple’s claim of having doubled this would consequently mean it’s now 32MB in the A15.

Looking at our latency tests on the new A15, we can indeed confirm that the SLC has doubled to 32MB, further pushing out the memory depth at which accesses reach DRAM. Apple’s SLC is likely to be a key factor in the power efficiency of the chip, being able to keep memory accesses on the same silicon rather than going out to slower and less power-efficient DRAM. We’ve seen these types of last-level caches being employed by more SoC vendors, but at 32MB, the new A15 dwarfs the competition’s implementations, such as the 3MB SLC on the Snapdragon 888 or the estimated 6-8MB SLC on the Exynos 2100.
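The article does not describe how its latency tests are implemented. A common way to probe cache depth is pointer chasing: each load depends on the previous one, and the working-set size is swept until latency steps up at each cache boundary. The sketch below only illustrates that access pattern; all names are hypothetical, and in Python the timing is dominated by interpreter overhead, so the numbers it prints are not comparable to real cache latencies (a real test would be written in C or assembly).

```python
import random
import time


def build_chase_cycle(n, seed=0):
    """Return a list nxt where following i -> nxt[i] visits all n slots
    exactly once before returning to the start (Sattolo's algorithm)."""
    rng = random.Random(seed)
    nxt = list(range(n))
    for i in range(n - 1, 0, -1):
        j = rng.randrange(i)  # j < i guarantees a single cycle of length n
        nxt[i], nxt[j] = nxt[j], nxt[i]
    return nxt


def chase(nxt, steps):
    """Follow the chain for `steps` dependent loads; return the final index."""
    idx = 0
    for _ in range(steps):
        idx = nxt[idx]
    return idx


def ns_per_access(n, steps=50_000):
    """Average wall-clock time per dependent access for an n-slot chain."""
    nxt = build_chase_cycle(n)
    start = time.perf_counter_ns()
    chase(nxt, steps)
    return (time.perf_counter_ns() - start) / steps


# Sweep a small and a larger working set (sizes chosen arbitrarily).
for slots in (1 << 10, 1 << 16):
    print(f"{slots} slots: {ns_per_access(slots):.1f} ns per access")
```

The random single-cycle ordering matters because it defeats hardware prefetchers that would otherwise hide the latency of a sequential walk.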

What Apple didn’t divulge are the changes to the L2 cache of the performance cores, which has now grown by 50% from 8MB to 12MB. This is actually the same L2 size as on the Apple M1, only this time around it’s serving only two performance cores rather than four. The access latency appears to have risen from 16 cycles on the A14 to 18 cycles on the A15.
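To put the two extra cycles in absolute terms, a latency in cycles can be converted to nanoseconds by dividing by the clock. The sketch below assumes, as a simplification the article does not state, that each latency is taken at that chip's peak single-core frequency from the tables above; the function name is illustrative.

```python
def cycles_to_ns(cycles, freq_mhz):
    """Convert a latency in core cycles to nanoseconds at a given clock."""
    return cycles / freq_mhz * 1000.0


# L2 access latency in cycles (from the text) at peak single-core clocks.
a14_l2_ns = cycles_to_ns(16, 2998)
a15_l2_ns = cycles_to_ns(18, 3240)

print(f"A14 L2: 16 cycles @ 2998 MHz = {a14_l2_ns:.2f} ns")
print(f"A15 L2: 18 cycles @ 3240 MHz = {a15_l2_ns:.2f} ns")
```

Under that assumption the higher clock absorbs most of the added cycles, so the absolute L2 latency rises far less than the 16-to-18 cycle count alone suggests.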

A 12MB L2 is again humongous, nearly double the combined L3+L2 (4+1+3x0.5 = 6.5MB) of other designs such as the Snapdragon 888. It very much appears Apple has invested a lot of SRAM into this year’s SoC generation.
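The cache-size comparisons in this section reduce to a few ratios, which are easy to recompute from the figures quoted in the text. The variable names below are illustrative, and the Snapdragon 888 total uses exactly the 4 + 1 + 3 x 0.5 breakdown given above.

```python
# Snapdragon 888 combined L3 + L2 in MB, using the breakdown from the text.
sd888_l3_plus_l2_mb = 4 + 1 + 3 * 0.5

# Apple figures in MB, as quoted in the text.
a14_l2_mb, a15_l2_mb = 8, 12
a15_slc_mb, sd888_slc_mb = 32, 3

l2_growth_pct = (a15_l2_mb - a14_l2_mb) / a14_l2_mb * 100
l2_vs_sd888 = a15_l2_mb / sd888_l3_plus_l2_mb
slc_vs_sd888 = a15_slc_mb / sd888_slc_mb

print(f"A15 L2 growth over A14: +{l2_growth_pct:.0f}%")
print(f"A15 L2 vs Snapdragon 888 L3+L2: {l2_vs_sd888:.2f}x")
print(f"A15 SLC vs Snapdragon 888 SLC: {slc_vs_sd888:.1f}x")
```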

The efficiency cores this year don’t seem to have changed their cache sizes, remaining at 64KB L1Ds and a 4MB shared L2. However, we see that Apple has increased the L2 TLB to 2048 entries, now covering up to 32MB, likely to facilitate better SLC access latencies. Interestingly, Apple this year allows the efficiency cores to have faster DRAM access, with latencies now at around 130ns versus the +215ns on the A14, again something to keep in mind in the next performance section of the article.
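The 32MB coverage figure follows from the TLB entry count multiplied by the page size. The sketch below assumes 16KB pages, which is the page size used on Apple's 64-bit iOS devices but is not stated explicitly in the text; the function name is illustrative.

```python
KIB_PER_MIB = 1024

# Assumption: 16 KiB pages (not stated in the text).
PAGE_SIZE_KIB = 16


def tlb_reach_mib(entries, page_size_kib):
    """Memory covered by a TLB when every entry maps one page, in MiB."""
    return entries * page_size_kib / KIB_PER_MIB


a15_ecore_l2_tlb_reach = tlb_reach_mib(2048, PAGE_SIZE_KIB)
print(f"2048 entries x {PAGE_SIZE_KIB} KiB pages = {a15_ecore_l2_tlb_reach:.0f} MiB")
```

With that page size the TLB reach lines up exactly with the 32MB SLC, which fits the suggestion that the change is aimed at SLC access latency.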

CPU Microarchitecture Changes: A Slow(er) Year?

This year’s CPU microarchitectures were a bit of a wildcard. Earlier this year, Arm had announced the new Armv9 ISA, predominantly defined by the new SVE2 SIMD instruction set, as well as the company’s new Cortex series CPU IP which employs the new architecture. Back in 2013, Apple was famously the first on the market with an Armv8 CPU, the first 64-bit capable mobile design. Given that context, I had generally expected this year’s generation to introduce v9 as well, but that doesn’t seem to be the case for the A15.

Microarchitecturally, the new performance cores on the A15 don’t seem to differ much from last year’s designs. I haven’t invested the time yet to look at every nook and cranny of the design, but at least the back-end of the processor is identical in throughput and latencies compared to the A14 performance cores.

The efficiency cores have had more changes: alongside some of the memory subsystem TLB changes, the new E-core now gains an extra integer ALU, bringing the total to 4, up from the previous 3. For some time now the core could no longer be called “little” by any means, and it seems to have grown even more this year, again something we’ll showcase in the performance section.

A possible reason for Apple’s more moderate microarchitectural changes this year might be a perfect storm of a few factors: Apple notably lost the lead architect on the big performance cores, as well as parts of the design teams, to Nuvia back in 2019 (Nuvia was itself acquired by Qualcomm earlier this year). The shift towards Armv9 might also imply some more work done on the design, and the pandemic situation might also have contributed to some non-ideal execution. We’ll have to examine next year’s A16 to really determine whether Apple’s design cadence has slowed down, whether this was merely a slippage, or whether it is simply a lull before a much larger change in the next microarchitecture.

Of course, the tone here paints a rather conservative picture of the A15’s CPU improvements, which, when looking at performance and efficiency, are anything but.

204 Comments

techconc - Tuesday, October 5, 2021

Agreed. Google did some early pioneering work with computational photography. However, unlike you, I don’t think most Android users understand just how far Apple has pushed in these areas, especially with regard to real time previews that require more processing power than is available on Android devices. This year’s “cinema mode” is just another example of that.

Apple focuses on features and then designs silicon around that. Most others see what’s available in silicon and then decide which features they can add.

Nicon0s - Saturday, October 16, 2021

>I don’t think most Android users understand just how far Apple has pushed in these areas, especially with regard to real time previews that require more processing power than is available on Android devices.

I don't think you understand what you are talking about. Real time preview was implemented on Pixel 4 with the old Snapdragon 855. You are just trying to make it seem a much bigger deal than it is.

What Apple has pushed for is to match camera software features implemented by Google and other Android manufacturers.

techconc - Monday, October 18, 2021

Yeah, YEARS after iPhones have had this feature because Android phones have been anemic by comparison in terms of processing capabilities. The same with Apple adding this feature for video via Cinema mode. The point being, you're attempting to make it sound as if Android has completely led and pioneered computational photography and that's not true. Google has led in some areas, Apple has led in others. If you think computational photography is an area where Android devices currently lead, then you don't really know what you're talking about.

Nicon0s - Tuesday, October 19, 2021

"Yeah, YEARS after iPhones have had this feature because Android phones have been anemic by comparison in terms of processing capabilities."

That's only what you think. That live preview is mostly dependent on the ISP anyway, which is the one doing the processing.

"The same with Apple adding this feature for video via Cinema mode."

A boring, pointless feature most won't use.

"The point being, you're attempting to make it sound as if Android has completely led and pioneered computational photography and that's not true."

It is true. The advancements in terms of computational photography that we get with modern smartphones today were led by Android manufacturers, Apple only followed. I still remember how apple fanboys all over the internet claimed that Google faked the iphone photo when they introduced Night Sight with Pixel 3. Night Sight was better than seemed possible, changing the paradigm when taking photos in low light.

You want to see another slew of new photo features, take a look at the Pixel 6 announcement. While apple introduced what? Fake video blur? LoL

"If you think computational photography is an area where Android devices currently lead, then you don't really know what you're talking about."

Actually I'm the only one that knows what he's talking about.

Nicon0s - Saturday, October 16, 2021

>A key difference is that the SE 2020 does computational photography/videography in real time, which necessitates a decently powerful processor to execute those tasks? The Pixel 4a doesn’t have Live HDR in preview/during recording when recording videos (only in stills), nor does it have real-time Portrait Mode/bokeh control simultaneously with Live HDR nor something like Portrait Lighting control before taking a pic?

What's the most important is the results.

Also I'm pretty sure the 4a can approximate the HDR results in real time in the viewfinder, which is not really a big deal. I've seen it on other mid-range Androids as well.

The idea is you can have very decent computational photography even on slower phones in terms of CPU and GPU while Apple does intentionally cripple the capabilities of some of their phones, like lack of night mode on the SE, heck even on the iphone X, night mode should be possible, no problem.

>The 4a is great for the price and despite using a much slower processor, it has a pretty good camera. But this also makes it have disadvantages—and this is shown across the Pixel lineup, including the 5.

Honestly I don't see any disadvantages because of the performance vs pretty much any phone around its price range, so including the SE.

techconc - Monday, October 18, 2021

>What's the most important is the results.

Yeah, and seeing live previews helps with a photographer's composition and actually achieve those results. Without proper live previews, better results are more a matter of luck than skill.

Nicon0s - Tuesday, October 19, 2021

"Yeah, and seeing live previews helps with a photographer's composition and actually achieve those results. Without proper live previews, better results are more a matter of luck than skill."

Nonsense, you don't really understand photography. Like I've said, what matters is the result. If I point my phone at the same subject and don't get an "approximated HDR result" in the live preview, that doesn't mean I'm going to take a worse photo or that I generally take worse photos.

Blark64 - Monday, October 11, 2021

>The road for computational photography was paved by Android smartphones not Apple. Computational photography on the Pixel 4a with the very old SD 730 is better than on an iphone SE 2020 for example.

Your historic perspective on computational photography is, well, shortsighted. Computational photography as a discipline is decades old (emerging from the fields of computer vision and digital imaging), and I was using computational photography apps on my iPhone 4 in 2010.

Nicon0s - Saturday, October 16, 2021

We are talking about modern phones and modern solutions, not the start of computational photography. Apple's camera software evolved as a reaction to the excellent camera features implemented in Android phones. It's not your iphone 4 that made computational photography popular and desirable, it's Android manufacturers.

Nicon0s - Tuesday, October 5, 2021

>I believe Apple can mint money by selling their SOCs to Android smartphone manufacturers.

I would really like to see that but more for the cost perspective.

Things to consider: it doesn't have a modem so additional cost.

Need for hardware support as it's a new platform, support for developing the motherboard.

Need for support for software optimisations/ camera optimisations etc.

Need for support for drivers, when OEMs buy an SOC they buy it with driver support for a certain amount of years and this influences the final price.

All in all an A15 would probably cost an Android OEM a few times more than a Qualcomm SOC. So the real question is: would it be worth it?