The AMD Ryzen 7 5700G, Ryzen 5 5600G, and Ryzen 3 5300G Review

by Dr. Ian Cutress on August 4, 2021 1:45 PM EST

CPU Tests: Encoding

One of the interesting elements on modern processors is encoding performance. This covers two main areas: encryption/decryption for secure data transfer, and video transcoding from one video format to another.

In the encrypt/decrypt scenario, how data is transferred and by what mechanism is pertinent to on-the-fly encryption of sensitive data, a process that more and more modern devices are leaning on for software security.

Video transcoding as a tool to adjust the quality, file size, and resolution of a video file has boomed in recent years, whether to provide the optimum video for a device before consumption, or for game streamers who want to upload the output from their video camera in real time. As we move into live 3D video, this task will only get more strenuous, and it turns out that the performance of certain algorithms is a function of the input/output of the content.

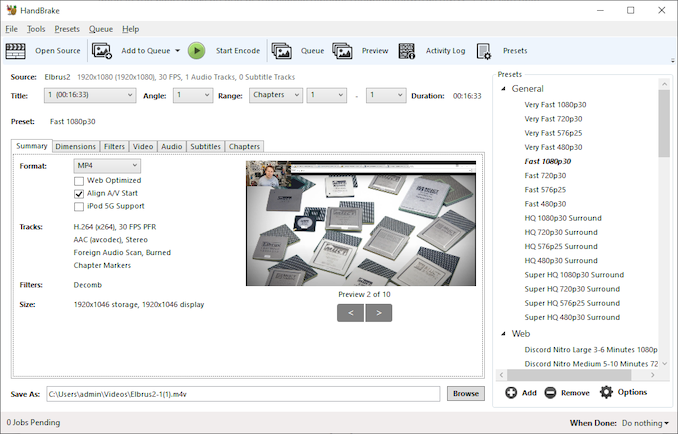

HandBrake 1.32: Link

Video transcoding (both encode and decode) is a hot topic in performance metrics as more and more content is being created. The first consideration is the standard in which the video is encoded, which can be lossless or lossy, trade performance for file size, trade quality for file size, or do all of the above; some also spend more effort at encode time to help accelerate decoding. Alongside Google's favorite codecs, VP9 and AV1, there are others that are prominent: H264, the older codec, is practically everywhere and is designed to be optimized for 1080p video, and HEVC (or H.265) aims to provide the same quality as H264 at a lower file size (or better quality for the same size). HEVC is important as 4K is streamed over the air, meaning fewer bits need to be transferred for the same quality content. Other codecs designed for specific use cases are coming to market all the time.

HandBrake is a favored tool for transcoding, with the later versions using copious amounts of newer APIs to take advantage of co-processors, like GPUs. It is available on Windows via a graphical interface or through the command line. The latter makes our testing easier, as a redirection operator captures the console output.

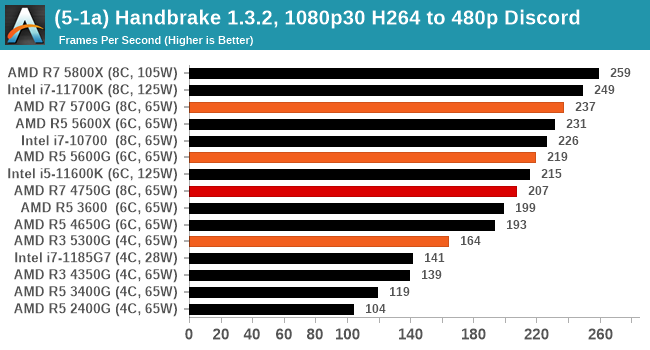

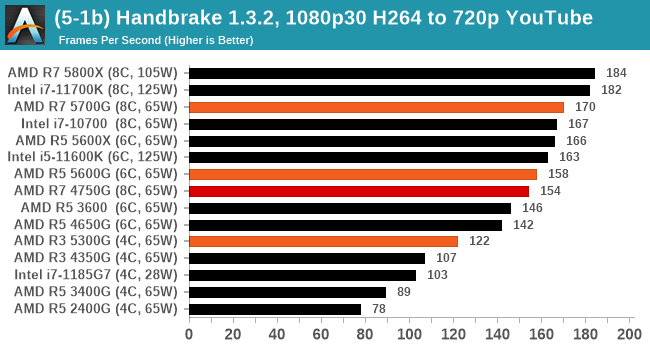

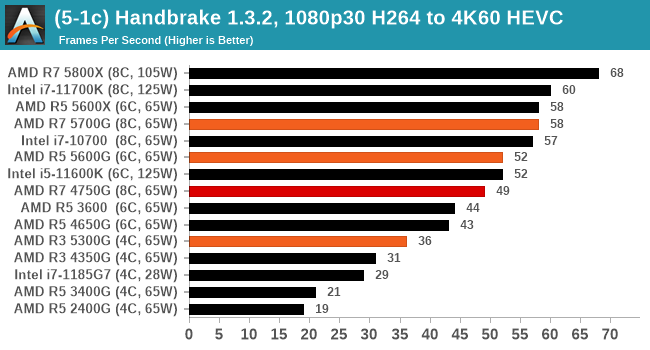

We take the compiled version of this 16-minute YouTube video about Russian CPUs at 1080p30 h264 and convert into three different files: (1) 480p30 ‘Discord’, (2) 720p30 ‘YouTube’, and (3) 4K60 HEVC.
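As a rough illustration of the command-line approach, the sketch below builds a HandBrakeCLI invocation, sends the console output to a text file, and pulls an average frame rate out of that log. It is not the review's actual script: the output dimensions, encoder choices, and file names are hypothetical, and the log line matched by the regular expression is an assumption about HandBrake's end-of-job message that should be checked against the build in use.

```python
import re
import subprocess
from pathlib import Path

# Assumed wording of HandBrake's end-of-job log line; verify on your build.
AVG_FPS = re.compile(r"average encoding speed for job is ([0-9.]+) fps")


def build_command(src, dst, width, height, fps, encoder):
    """Return a HandBrakeCLI argument list for one transcode target."""
    return [
        "HandBrakeCLI",
        "-i", str(src),      # input file
        "-o", str(dst),      # output file
        "-e", encoder,       # video encoder, e.g. x264 or x265
        "-w", str(width),    # output width in pixels
        "-l", str(height),   # output height in pixels
        "-r", str(fps),      # output frame rate
    ]


def average_fps(log_text):
    """Return the last reported average encode speed, or None if absent."""
    matches = AVG_FPS.findall(log_text)
    return float(matches[-1]) if matches else None


def run_and_log(cmd, log_path):
    """Run one transcode with console output redirected to a text file."""
    with open(log_path, "w") as log:
        subprocess.run(cmd, stdout=log, stderr=subprocess.STDOUT, check=True)
    return average_fps(Path(log_path).read_text())


# Hypothetical settings standing in for the three outputs described above.
TARGETS = [
    ("out_480p30.mp4", 854, 480, 30, "x264"),
    ("out_720p30.mp4", 1280, 720, 30, "x264"),
    ("out_4k60_hevc.mp4", 3840, 2160, 60, "x265"),
]
```

Calling `run_and_log(build_command("source.mp4", *TARGETS[0]), "run1.log")` would then return the frames per second for one run, provided HandBrakeCLI is on the path and the log format matches the assumption.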

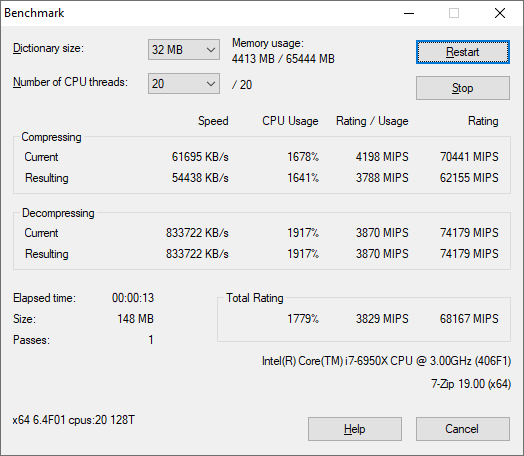

7-Zip 1900: Link

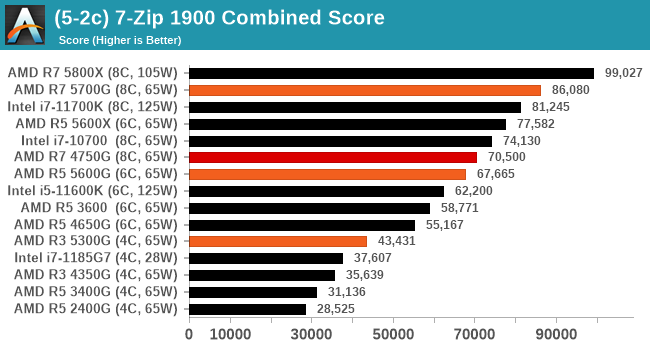

The first compression benchmark tool we use is the open-source 7-zip, which typically offers good scaling across multiple cores. 7-zip is the compression tool most cited by readers as one they would rather see benchmarks on, and the program includes a built-in benchmark tool for both compression and decompression.

The tool can either be run from inside the software or through the command line. We take the latter route as it is easier to automate, obtain results, and put through our process. The command line flags available offer an option for repeated runs, and the output provides the average automatically through the console. We direct this output into a text file and regex the required values for compression, decompression, and a combined score.
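The regex step can be sketched as follows. This assumes the benchmark was run with 7-Zip's `b` command and that the redirected text file ends with `Avr:` and `Tot:` summary rows laid out as in the sample; the numbers in the sample are made up for illustration, and the function and key names are hypothetical rather than taken from our scripts.

```python
import re

# Illustrative tail of a redirected benchmark log (made-up numbers).
SAMPLE = """\
----------------------------------  | ------------------------------
Avr:             693   5756  39895  |              790   5662  44742
Tot:             742   5709  42319
"""

# Avr row: usage, R/U and rating for compression, then for decompression.
AVR = re.compile(
    r"^Avr:\s+(\d+)\s+(\d+)\s+(\d+)\s+\|\s+(\d+)\s+(\d+)\s+(\d+)", re.M
)
# Tot row: combined usage, R/U and rating.
TOT = re.compile(r"^Tot:\s+(\d+)\s+(\d+)\s+(\d+)", re.M)


def parse_7zip_benchmark(text):
    """Extract compression, decompression and combined ratings (MIPS)."""
    avr = AVR.search(text)
    tot = TOT.search(text)
    if avr is None or tot is None:
        raise ValueError("benchmark summary rows not found")
    return {
        "compress_mips": int(avr.group(3)),
        "decompress_mips": int(avr.group(6)),
        "combined_mips": int(tot.group(3)),
    }


scores = parse_7zip_benchmark(SAMPLE)
```

Raising an error when the summary rows are missing makes an interrupted or truncated run obvious, rather than silently recording a zero.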

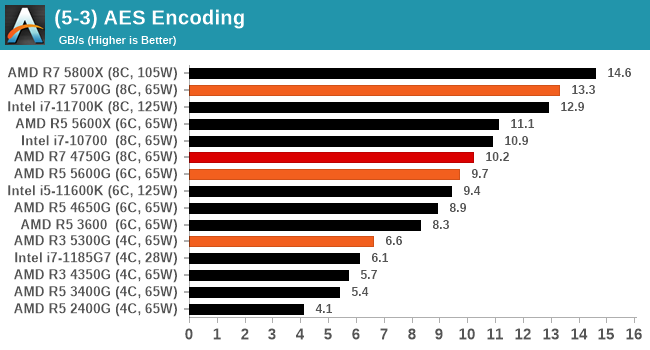

AES Encoding

Algorithms using AES coding have spread far and wide as a ubiquitous tool for encryption. Again, this is another CPU limited test, and modern CPUs have special AES pathways to accelerate their performance. We often see scaling in both frequency and cores with this benchmark. We use the latest version of TrueCrypt and run its benchmark mode over 1GB of in-DRAM data. Results shown are the GB/s average of encryption and decryption.

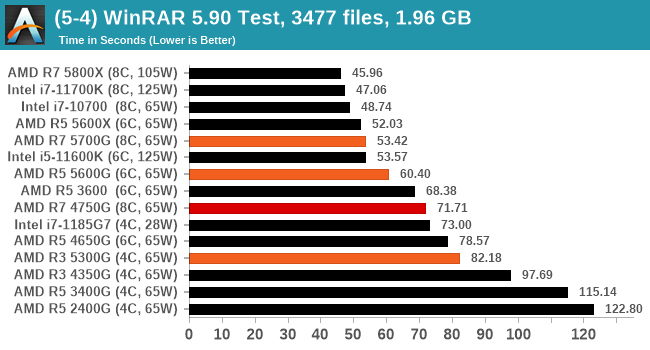

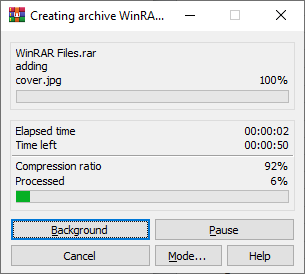

WinRAR 5.90: Link

For the 2020 test suite, we move to the latest version of WinRAR in our compression test. WinRAR in some quarters is more user friendly than 7-Zip, hence its inclusion. Rather than use a benchmark mode as we did with 7-Zip, here we take a set of files representative of a generic stack:

- 33 video files, each 30 seconds, in 1.37 GB,

- 2834 smaller website files in 370 folders in 150 MB,

- 100 Beat Saber music tracks and input files, for 451 MB

This is a mixture of compressible and incompressible formats. The results shown are the time taken to compress the file set. Due to DRAM caching, we run the test repeatedly for 20 minutes and take the average of the last five runs, when the benchmark is in a steady state.

For automation, we use AHK’s internal timing tools from initiating the workload until the window closes signifying the end. This means the results are contained within AHK, with an average of the last 5 results being easy enough to calculate.
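The averaging itself is simple arithmetic. The sketch below shows it in Python rather than AHK, purely for illustration; the function name and the example timings are hypothetical.

```python
from statistics import mean


def steady_state_mean(run_times, last_n=5):
    """Average the final `last_n` run times, ignoring earlier warm-up runs."""
    if len(run_times) < last_n:
        raise ValueError("not enough runs to reach a steady state")
    return mean(run_times[-last_n:])


# Made-up timings in seconds: the first runs are slower until DRAM caching settles.
example_times = [40.0, 36.0, 35.0, 35.0, 35.0, 35.0, 35.0]
result = steady_state_mean(example_times)
```

Discarding the early runs keeps the first, uncached passes from skewing the reported time.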

135 Comments

GeoffreyA - Friday, August 6, 2021 - link

Okay, that makes sense.

19434949 - Friday, August 6, 2021 - link

Do you know if 5600G or 5700G can output 4k 120fps movie/video playback?

GeoffreyA - Saturday, August 7, 2021 - link

Tested this now on a 2200G. Taking the Elysium trailer, I encoded a 10-second clip in H.264, H.265, and AV1 using FFmpeg. The original frame rate was 23.976, so using the -r switch, got it up to 120. Also, scaled the video from 3840x1606 to 3840x2160, and kept the audio (DTS-MA, 8 ch). On MPC-HC, they all ran smoothly. Rough CPU/GPU usage:

H.264: 25% | 20%

H.265: 6% | 21%

AV1: 60% | 20%

So if Raven Ridge can hold up at 4K/120, Cezanne should have no problem. Note that the video was downscaled during playback, owing to the screen not being 4K. Not sure if that made it easier. 8K, choppy. And VLC, lower GPU usage but got stuck on the AV1 clip.

GeoffreyA - Sunday, August 8, 2021 - link

Something else I found. 10-bit H.264 seems to be going through software/CPU decoding, whereas 8-bit is running through the hardware, dropping CPU usage down to 6/8%. H.265 hasn't got the same problem. It could be the driver, the hardware, the player, or my computer.

mode_13h - Monday, August 9, 2021 - link

> 10-bit H.264 seems to be going through software/CPU decoding...

> It could be the driver, the hardware, the player

It's quite likely the driver or a GPU hardware limitation. There's some nonzero chance it's the player, but I'd bet the player tries to use acceleration and then only falls back on software when that fails.

GeoffreyA - Tuesday, August 10, 2021 - link

Yes, quite likely.

mode_13h - Monday, August 9, 2021 - link

> the video was downscaled during playback, owing to the screen not being 4K.

> Not sure if that made it easier.

Not usually. I only have detailed knowledge of H.264, where hierarchical encoding is mostly aimed at adapting to different bitrates. That would never be enabled by default, because not only is it more work for the encoder, but it's also poorly supported by decoders and generates slightly less efficient bitstreams.

GeoffreyA - Tuesday, August 10, 2021 - link

"hierarchical encoding"

That could be x264's b-pyramid, which, I think, is enabled most of the time. Apparently, allows B-frames to be used as references, saving bits. The stricter setting limits it to the Bluray spec.

GeoffreyA - Tuesday, August 10, 2021 - link

By the way, looking forward to x266 coming out. AV1 is excellent, but VVC appears to be slightly ahead of it.

mode_13h - Wednesday, August 11, 2021 - link

> That could be x264's b-pyramid

No, I meant specifically Scalable Video Coding, which is what I thought, but I didn't want to cite the wrong term.

https://en.wikipedia.org/wiki/Advanced_Video_Codin...

The only way the decoder can opt to do less work is by discarding some layers of a SVC-encoded H.264 stream. Under any other circumstance, the decoder can't take any shortcuts without introducing a cascade of errors in all of the frames which reference the one being decoded.

> The stricter setting limits it to the Bluray spec.

I think blu-ray mainly limits which profiles (which are a collection of compression techniques) and levels (i.e. bitrates & resolutions) can be used, so that the bitstream can be decoded by all players and can be streamed off the disc fast enough.

I once tried authoring valid blu-ray video discs, but the consumer-grade tools were too limiting and the free tools were a mess to figure out and use. In the end, I found that simply copying MPEG-2 .ts files onto a BD-R would play in every player I tested. I was mainly interested in using it for a few videos shot on a phone, plus a few things I recorded from broadcast TV.