The AMD Ryzen 7 5700G, Ryzen 5 5600G, and Ryzen 3 5300G Review

by Dr. Ian Cutress on August 4, 2021 1:45 PM EST

CPU Tests: Synthetic

Most of the people in our industry have a love/hate relationship when it comes to synthetic tests. On the one hand, they’re often good for quick summaries of performance and are easy to use; on the other, most of the time the tests aren’t related to any real software. Synthetic tests are often very good at drilling down into a specific set of instructions and extracting the maximum performance from them. Due to requests from a number of our readers, we have the following synthetic tests.

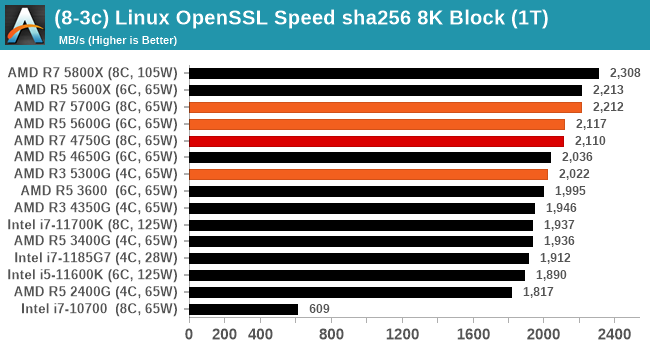

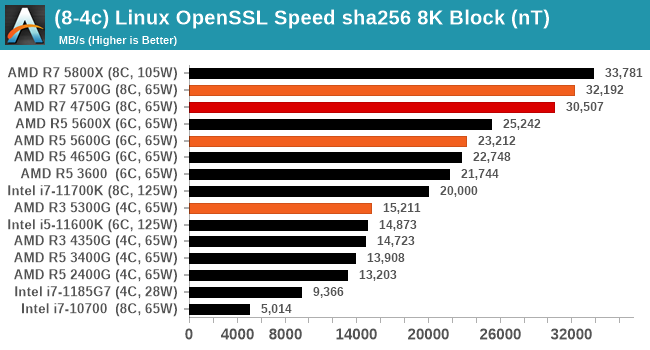

Linux OpenSSL Speed: SHA256

One of our readers reached out in early 2020 and said he was interested in looking at OpenSSL hashing rates in Linux. Luckily, OpenSSL on Linux has a command called ‘speed’ that lets the user determine how fast the system is for any given hashing algorithm, as well as for signing and verifying messages.

OpenSSL offers a lot of algorithms to choose from, and based on a quick Twitter poll, we narrowed it down to the following:

- rsa2048 sign and rsa2048 verify

- sha256 at 8K block size

- md5 at 8K block size

We run each of these tests in both single-threaded and multithreaded mode. All the graphs are in our benchmark database, Bench, and we use the sha256 results in published reviews.
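
At its core, a hashing speed test hashes fixed-size blocks as fast as possible for a set time and reports bytes per second. The Python sketch below mimics the sha256-at-8K-block case with the standard `hashlib` module. It is our own illustration under stated assumptions (one-second runs, random input, threads rather than processes for the multithreaded mode, hypothetical function names), not a replacement for `openssl speed`, and its absolute numbers will generally be somewhat lower than OpenSSL's because of per-call interpreter overhead.

```python
import hashlib
import os
import time
from concurrent.futures import ThreadPoolExecutor

BLOCK_SIZE = 8 * 1024  # 8 KiB, the block size used for the sha256 test


def hash_for(block: bytes, duration_s: float) -> int:
    """Hash `block` with SHA-256 repeatedly for ~duration_s seconds; return bytes hashed."""
    hashed = 0
    deadline = time.perf_counter() + duration_s
    while time.perf_counter() < deadline:
        hashlib.sha256(block).digest()
        hashed += len(block)
    return hashed


def sha256_throughput(threads: int = 1, duration_s: float = 1.0) -> float:
    """Aggregate SHA-256 throughput in MB/s (10**6 bytes/s) across `threads` workers."""
    block = os.urandom(BLOCK_SIZE)  # one shared 8 KiB input buffer
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=threads) as pool:
        # Each worker hashes independently. CPython's hashlib typically releases
        # the GIL for buffers this large, so threads can run on separate cores.
        totals = list(pool.map(lambda _: hash_for(block, duration_s), range(threads)))
    elapsed = time.perf_counter() - start
    return sum(totals) / elapsed / 1e6


if __name__ == "__main__":
    print(f"sha256 8K, 1T: {sha256_throughput(1):,.0f} MB/s")
    print(f"sha256 8K, nT: {sha256_throughput(os.cpu_count() or 1):,.0f} MB/s")
```

On a multi-core chip the nT figure should come out above the 1T figure, though how well it scales depends on the CPU and the Python build.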

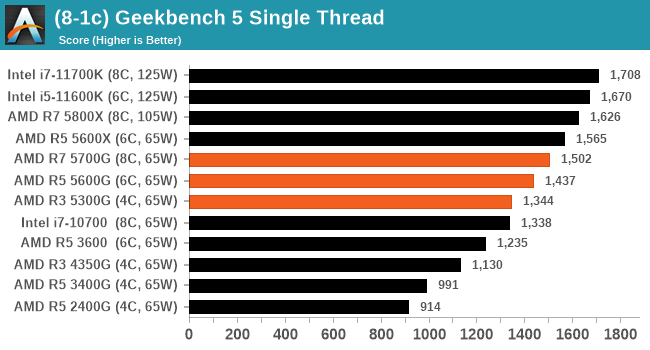

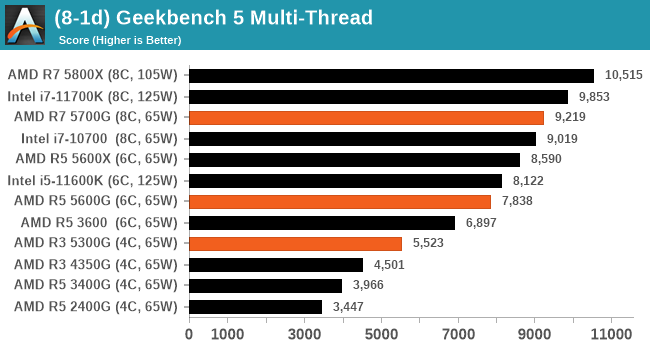

GeekBench 5

As a common tool for cross-platform testing between mobile, PC, and Mac, GeekBench is the ultimate exercise in synthetic testing, running a range of algorithms in search of peak throughput. Tests include encryption, compression, fast Fourier transforms, memory operations, n-body physics, matrix operations, histogram manipulation, and HTML parsing.

I’m including this test due to popular demand, although the results do come across as overly synthetic. Many users put a lot of weight behind the test because it is compiled for different platforms (albeit with different compilers).

We have both GB5 and GB4 results in our benchmark database. GB5 was added to our test suite after we had already tested ~25 CPUs, so its results are a little sporadic by comparison. These gaps will be filled in as we retest those CPUs.

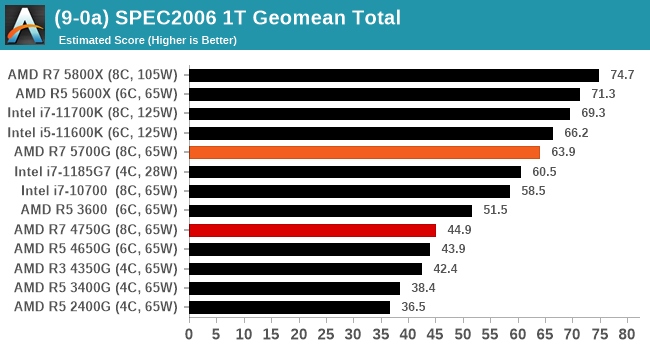

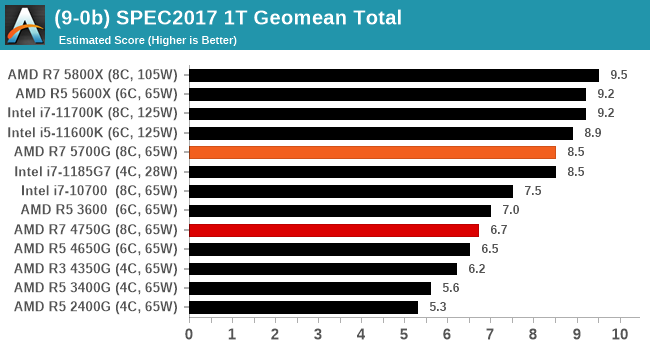

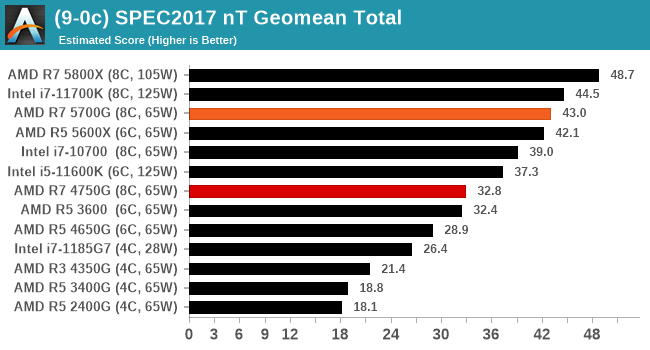

CPU Tests: SPEC

SPEC2017 and SPEC2006 are a series of standardized tests used to compare overall performance across different systems, architectures, microarchitectures, and setups. The code has to be compiled, and the results can then be submitted to an online database for comparison. The suites cover a range of integer and floating-point workloads and can be heavily optimized for each CPU, so it is important to check how the benchmarks are compiled and run.

We run the tests in a harness built through Windows Subsystem for Linux, developed by our own Andrei Frumusanu. WSL has some odd quirks, such as one test not running due to WSL's fixed stack size, but it is good enough for like-for-like testing. SPEC2006 is deprecated in favor of 2017, but remains an interesting comparison point in our data. Because our scores aren’t official submissions, SPEC guidelines require us to declare them as internal estimates on our part.

For compilers, we use LLVM for both the C/C++ and Fortran tests, with the Flang compiler handling Fortran. The rationale for using LLVM over GCC is better cross-platform comparisons with platforms that only have LLVM support, as well as future articles where we’ll investigate this aspect more. We’re not considering closed-source compilers such as MSVC or ICC.

- Compiler: clang version 10.0.0

- Flags: -Ofast -fomit-frame-pointer -march=x86-64 -mtune=core-avx2 -mfma -mavx -mavx2

Our compiler flags are straightforward, with basic -Ofast and relevant ISA switches to allow for AVX2 instructions. We decided to build our SPEC binaries for AVX2, which makes Haswell the oldest generation we can go back to before the testing falls over. We also don’t build AVX-512 binaries, primarily because getting the best performance out of AVX-512 requires the intrinsics to be written by a proper expert, as with our AVX-512 benchmark. All the major vendors (AMD, Intel, and Arm) support the way in which we are testing SPEC.
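
Because the binaries are built with -mavx, -mavx2, and -mfma, they only run on CPUs that expose those extensions; on Intel's side, Haswell is the first generation with both AVX2 and FMA3. On Linux, the kernel lists these extensions as `avx`, `avx2`, and `fma` on the `flags` line of `/proc/cpuinfo`. The Python sketch below checks for them before running such binaries. It is our own illustration (the function names are hypothetical), it assumes x86 Linux, and it checks only the three flags named in the compiler switches.

```python
REQUIRED_FLAGS = {"avx", "avx2", "fma"}  # matches -mavx, -mavx2, -mfma


def cpu_flags(cpuinfo_text: str) -> set:
    """Return the ISA flags from the first 'flags' line of /proc/cpuinfo text."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        # Exact key match, so lines such as 'vmx flags' are ignored.
        if sep and key.strip() == "flags":
            return set(value.split())
    return set()


def missing_flags(cpuinfo_text: str) -> set:
    """Flags the AVX2 build needs that this CPU does not report."""
    return REQUIRED_FLAGS - cpu_flags(cpuinfo_text)


if __name__ == "__main__":
    try:
        with open("/proc/cpuinfo") as f:
            missing = missing_flags(f.read())
    except OSError:
        print("/proc/cpuinfo not available (not Linux?)")
    else:
        if missing:
            print("AVX2 binaries will not run; missing:", ", ".join(sorted(missing)))
        else:
            print("CPU reports avx, avx2 and fma")
```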

To note, the SPEC licence requires that any benchmark results from SPEC be labelled ‘estimated’ until they are verified on the SPEC website as a meaningful representation of the expected performance. Verification is most often done by big companies and OEMs to showcase performance to customers, but it is quite over the top for what we do as reviewers.

For each of the SPEC targets we run (SPEC2006 1T, SPEC2017 1T, and SPEC2017 nT), rather than publish all the separate test data in our reviews, we condense the results into a few interesting data points. The full per-test values are in our benchmark database.
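
SPEC's own overall metrics condense per-test results in a standard way: each test's run time becomes a ratio against a reference machine (reference time divided by measured time), and the suite score is the geometric mean of those ratios. The Python sketch below shows that calculation. The function names are our own and hypothetical, it is not SPEC's tooling, and the ratio values in the usage lines are made up for illustration.

```python
import math


def spec_ratio(reference_s: float, measured_s: float) -> float:
    """SPEC-style ratio: how many times faster than the reference machine."""
    return reference_s / measured_s


def geometric_mean(ratios) -> float:
    """Geometric mean, computed via logs to avoid overflow on long products."""
    ratios = list(ratios)
    if not ratios or any(r <= 0 for r in ratios):
        raise ValueError("need at least one positive ratio")
    return math.exp(sum(math.log(r) for r in ratios) / len(ratios))


if __name__ == "__main__":
    # Hypothetical per-test timings, for illustration only: ratios 4.0 and 8.0.
    ratios = [spec_ratio(1000.0, 250.0), spec_ratio(1000.0, 125.0)]
    print(f"{geometric_mean(ratios):.2f}")  # prints 5.66
```

With a geometric mean, doubling the ratio on any one of n tests multiplies the overall score by the same factor, 2^(1/n), no matter which test improved.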

We’re still running the tests for the Ryzen 5 5600G and Ryzen 3 5300G, but the Ryzen 7 5700G scores strongly.

135 Comments

mode_13h - Monday, August 9, 2021

> RAMBUS was supposed to unlock the true power of the Pentium 4

I think Intel underestimated what the DDR consortium was capable of doing. Perhaps they were right, as the DDR makers were eventually forced to license some RAMBUS patents, as I recall.

> the Willamette I used for a decade had plain SDRAM, not even DDR.

Northwood was the best. Sadly, I bought a Prescott because I wanted hyperthreading and hoped the 2x L2 cache would compensate for the longer pipeline. But it turns out you could even get hyperthreading and an 800 MHz FSB in a couple of Northwoods. I also thought SSE3 might be useful, but never got around to doing anything with it.

BTW, I also used DDR400 in my P4.

GeoffreyA - Tuesday, August 10, 2021

For me, both the Prescott and A64 were available, but I went with the latter because I always wanted an Athlon. Originally, I was looking at the XP 3200+ and dreamt of coupling that with an nForce2 motherboard. As for Northwood, masterpiece of a CPU. P4 would have put up a respectable defence against the A64 had they continued with it. My aunt had a 2.4 GHz Northwood back then, and my school friend a 2.66 GHz one. His struggled at first, but once he got more RAM and a GeForce FX 5700, it really flew. Still remember running through Delta Labs in Doom 3 at 60 fps!

mode_13h - Wednesday, August 11, 2021

Prescott was rumored to have 64-bit support, though it wasn't enabled. I think that explains some of the additional pipeline depth.

When Core 2 first launched, I was skeptical that IPC could increase so much that much lower-clocked CPUs would really outperform their predecessors. It took me a little while to fully accept it. I was hopeful the final 65 nm iteration of Pentium 4 would finally let the Netburst architecture stretch its legs, but even that couldn't overcome its inefficiencies and other deficits.

GeoffreyA - Friday, August 13, 2021

Quite likely. Come to think of it, didn't the Pentium Ds have x64? And they were Prescotts.

Indeed, 65 nm might have taken Northwood further. Would've made an interesting processor which we'll never see. As for their 31-stage brethren, the 65 nm Cedar Mills dropped power a fair bit.

GeoffreyA - Friday, August 13, 2021

> 65 nm might have taken Northwood further

Well, we didn't even get to see a 90 nm one.

coolrock2008 - Wednesday, August 4, 2021

In the Ryzen 5 APUs table, there is a typo: the 5600G is listed as an 8-core part, whereas it's listed as a 6-core part in the previous table.

Wereweeb - Wednesday, August 4, 2021

I know how hard it is to actually publish something that is both excellently researched and out while the matter is still relevant. Thank you for your coverage.

Plus, it's AnandTech; the important parts here are the data and analysis, not how well a tired writer proofreads their own text.

Fulljack - Friday, August 6, 2021

I disagree. Any researcher would say that proofreading is as important as the analysis itself. It's how you serve the data and the analysis to a broader audience, after all.

dsplover - Wednesday, August 4, 2021

Three times the IPC of my beloved i7 4790k’s. I’ll try one, maybe a few, as I don’t need the fastest.

Cooler and fast enough is fine for my 1U builds.

Thanks AMD. Tiger Lake never appeared, you win.

dsplover - Wednesday, August 4, 2021

I meant 30% more IPC…