Update: PCI Express 6.0 Draft 0.71 Released, Final Release by End of Year

by Ryan Smith on July 2, 2021 7:00 AM EST. Posted in CPUs, Interconnect, PCIe, PCI-SIG, PCIe 6.0

Update 07/02: Albeit a couple of days later than expected, the PCI-SIG announced this morning that draft 0.71 of the PCI Express 6.0 specification has been released for member review. Following a minimum 30-day review process, the group will be able to publish the draft 0.9 version of the specification, keeping it on schedule to release the final version of the spec this year.

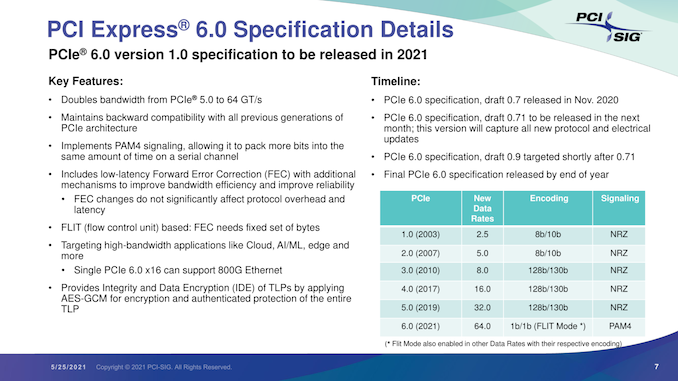

Originally Published 05/25

As part of their yearly developer conference, the PCI Special Interest Group (PCI-SIG) also held their annual press briefing today, offering an update on the state of the organization and its standards. The star of the show, of course, was PCI Express 6.0, the upcoming update to the bus standard that will once again double its data transfer rate. PCI-SIG has been working on PCIe 6.0 for a couple of years now, and in a brief update, confirmed that the group remains on track to release the final version of the specification by the end of this year.
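Each PCIe generation has roughly doubled the per-lane signaling rate. The sketch below tabulates the per-lane rates and estimates per-direction bandwidth for a given link width. It assumes 8b/10b encoding for PCIe 1.x/2.x and 128b/130b for 3.0 through 5.0; for 6.0, which moves to PAM4 signaling and FLIT-based framing, it simply uses the raw rate and ignores FLIT and FEC overhead, so the 6.0 figure should be read as an upper bound. The name `link_bandwidth_gbytes` is illustrative only.

```python
# Per-lane signaling rate (GT/s) and line-encoding efficiency per generation.
# 1.x/2.x: 8b/10b. 3.0-5.0: 128b/130b. 6.0 (PAM4, FLIT mode) is modeled
# with no encoding overhead, i.e. FLIT/FEC overhead is ignored (assumption).
PCIE_GENERATIONS = {
    "1.0": (2.5, 8 / 10),
    "2.0": (5.0, 8 / 10),
    "3.0": (8.0, 128 / 130),
    "4.0": (16.0, 128 / 130),
    "5.0": (32.0, 128 / 130),
    "6.0": (64.0, 1.0),
}


def link_bandwidth_gbytes(gen: str, lanes: int = 16) -> float:
    """Approximate per-direction bandwidth in GB/s for a PCIe link."""
    rate_gt, efficiency = PCIE_GENERATIONS[gen]
    # Each transfer moves one bit per lane; divide by 8 to get bytes.
    return rate_gt * efficiency * lanes / 8


if __name__ == "__main__":
    for gen, (rate, _) in PCIE_GENERATIONS.items():
        print(f"PCIe {gen}: {rate:>4} GT/s per lane, "
              f"x16 ~ {link_bandwidth_gbytes(gen):6.2f} GB/s per direction")
```

Under these assumptions an x16 link goes from about 63 GB/s per direction at PCIe 5.0 to at most 128 GB/s at PCIe 6.0; real-world throughput is lower once packet overhead is counted.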

The most recent draft version of the specification, 0.7, was released back in November. Since then, PCI-SIG has been collecting feedback from its members, and is gearing up to release another draft update next month. That draft will incorporate all of the new protocol and electrical updates that have been approved for the spec since 0.7.

In a bit of a departure from the group’s usual workflow, however, this upcoming draft will be 0.71, meaning that PCIe 6.0 will remain at draft 0.7x status for a little while longer. In practice, the group is holding for another round of review and testing before clearing the spec to move on to the next major draft. The group’s rules call for a 30-day review period for the 0.71 draft, after which it will be able to release the final draft, 0.9, of the specification.

Ultimately, all of this is to say that PCIe 6.0 remains on track for its previously scheduled 2021 release. After draft 0.9 lands, there will be a further two-month review for any final issues (primarily legal), and, assuming the standard clears that check, PCI-SIG will be able to issue the final, 1.0 version of the PCIe 6.0 specification.
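As a rough sanity check on the timeline, the following sketch counts forward from the July 2 release of draft 0.71 noted in the update at the top of this article. It assumes each review runs exactly its minimum length and that the next version is published the day the review ends, which real schedules rarely manage; the `add_months` helper is a hypothetical convenience, not anything from PCI-SIG.

```python
import calendar
from datetime import date, timedelta


def add_months(d: date, months: int) -> date:
    """Hypothetical helper: add calendar months, clamping to the month's last day."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


draft_071 = date(2021, 7, 2)                 # draft 0.71 released for member review
draft_09 = draft_071 + timedelta(days=30)    # minimum 30-day review period
final_10 = add_months(draft_09, 2)           # two-month review, assuming no slips

print(f"Earliest draft 0.9: {draft_09}")
print(f"Earliest final 1.0: {final_10}")
```

Under those best-case assumptions, draft 0.9 could land around August 1 and the final 1.0 spec around October 1, which leaves a few months of slack before the end-of-year target.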

In the interim, the 0.9 specification is likely to be the most interesting from a technical perspective. Once the updated electrical and protocol specs are approved, the group will be able to give some clearer guidance on the signal integrity requirements for PCIe 6.0. All told, we’re not expecting much difference from 5.0 (in other words, only a slot or two on most consumer motherboards), but as each successive generation ratchets up the signaling rate, the signal integrity requirements have tightened.

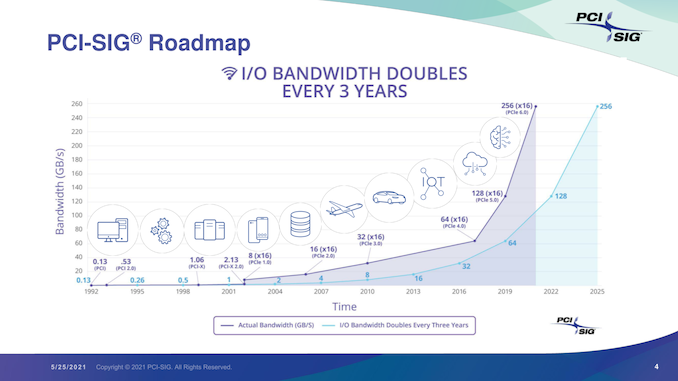

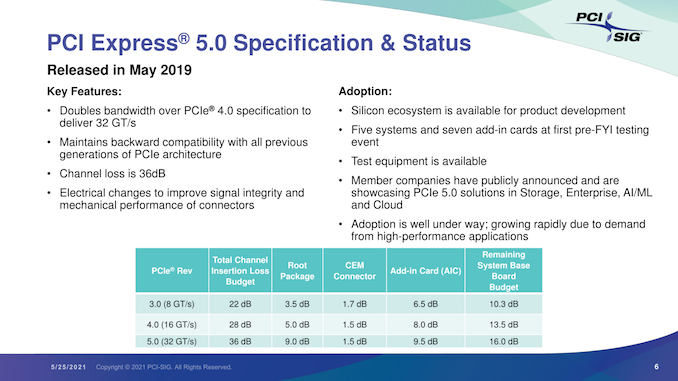

Overall, the unabashedly nerdy standards group is excited about the 6.0 standard, comparing it in significance to the big jump from PCIe 2.0 to PCIe 3.0. Besides proving that they’re once again able to double the bandwidth of the ubiquitous bus, it will mean that they’ve been able to keep to their goal of a three-year cadence. Meanwhile, as the PCIe 6.0 specification reaches completion, we should finally begin seeing the first PCIe 5.0 devices show up in the enterprise market.

103 Comments


p1esk - Thursday, May 27, 2021

Yes, I know Intel is adding support for 5.0. But I'm not sure why. There are no PCIe 5.0 products on the horizon: no NICs, no SSDs, no video cards, nothing. PCIe 4.0 will remain fast enough for the next two years.

So, in three years, if I am Nvidia and CPUs have added support for PCIe 6.0, why would I upgrade my products to 5.0 instead of 6.0? Low-end cards don't need more than 4.0, so there's no need to upgrade those. High-end cards cost so much and consume so much power that any extra cost/power from 6.0 is insignificant. And there's a clear benefit from the increased bandwidth - otherwise why would Nvidia have developed their proprietary NVLink?

Sure, Nvidia could upgrade their cards to 5.0 next year if they wanted to, but they just upgraded to 4.0 last year, so chances of that are slim.

mode_13h - Thursday, May 27, 2021

> I know Intel is adding support for 5.0. But I'm not sure why.

I think it could be just for the CPU -> chipset link. That would give them PCIe 5.0 bragging rights and let them shrink the width of that link back down to its historical x4 width. Moreover, the chipset is soldered down, potentially right next to the CPU, making that link the cheapest and simplest thing to connect via PCIe 5.

It'd also mean they could then drop the CPU back to x16 lanes, since the chipset would have plenty of bandwidth for all the NVMe cards people want to use, and even a respectable amount of bandwidth for a second GPU.

What flies in the face of that scenario is that the socket is also ballooning up to more than 1700 contacts. So, that casts doubt on the idea they'll be cutting back on any CPU-direct connectivity.
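The width argument above is simple arithmetic: because each generation doubles the per-lane rate, halving the lane count while moving up one generation keeps bandwidth constant. This sketch assumes the CPU-chipset link behaves like an ordinary PCIe link with 128b/130b encoding (Intel's DMI is PCIe-based, but treat that mapping as an assumption here) and ignores protocol overhead; `link_gbytes` is an illustrative name.

```python
# PCIe 3.0, 4.0 and 5.0 all use 128b/130b line encoding.
ENCODING = 128 / 130
RATE_GT_PER_LANE = {"3.0": 8.0, "4.0": 16.0, "5.0": 32.0}


def link_gbytes(gen: str, lanes: int) -> float:
    """Approximate per-direction PCIe link bandwidth in GB/s (no protocol overhead)."""
    return RATE_GT_PER_LANE[gen] * ENCODING * lanes / 8


x4_gen3 = link_gbytes("3.0", 4)   # the historical x4 width, at PCIe 3.0 speed
x8_gen4 = link_gbytes("4.0", 8)   # a wider link at PCIe 4.0 speed
x4_gen5 = link_gbytes("5.0", 4)   # back to x4, at PCIe 5.0 speed

print(f"x4 PCIe 3.0: {x4_gen3:.2f} GB/s")
print(f"x8 PCIe 4.0: {x8_gen4:.2f} GB/s")
print(f"x4 PCIe 5.0: {x4_gen5:.2f} GB/s")
```

An x4 PCIe 5.0 link matches an x8 PCIe 4.0 link (about 15.75 GB/s per direction) and is four times an x4 PCIe 3.0 link, which is why a narrower chipset link would not cost any bandwidth.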

> in three years, ... and CPUs added support for PCIe 6.0

Why would they? If 5.0 adoption is low and 5.0 burns more power and increases board and peripheral costs, why would consumer CPUs ever go to 6?

> High end cards cost so much and consume so much power that any extra cost/power from 6.0 is insignificant.

Not sure about that. Cost is cost. And with Intel in the GPU race and if mining cools down, we could see GPU prices come back to Earth.

> there's a clear benefit from the increased bandwidth - otherwise why would Nvidia have developed their proprietary NVLink?

That's not in most of their consumer cards, which is presumably what we're talking about.

Anyway, the RTX 3090 already has about 56 GB/s of NVLink bandwidth, compared to the 64 GB/s of a PCIe 5.0 x16 link. However, that NVLink bandwidth is exclusively for GPU <-> GPU communication. Nvidia has already announced a generation of NVLink beyond that.
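The 64 GB/s figure for PCIe 5.0 x16 is the raw rate; 128b/130b encoding trims it slightly. This sketch takes the comment's 56 GB/s NVLink figure at face value and assumes it is per direction, like the PCIe number; it ignores packet-level overhead on both links.

```python
PCIE5_RATE_GT = 32.0            # PCIe 5.0 per-lane signaling rate
ENCODING = 128 / 130            # 128b/130b line encoding
LANES = 16
NVLINK_3090_GBYTES = 56.0       # the comment's figure, assumed per direction

raw_gbytes = PCIE5_RATE_GT * LANES / 8
effective_gbytes = raw_gbytes * ENCODING
ratio = NVLINK_3090_GBYTES / effective_gbytes

print(f"PCIe 5.0 x16: {raw_gbytes:.0f} GB/s raw, {effective_gbytes:.1f} GB/s after encoding")
print(f"NVLink as a share of PCIe 5.0 x16: {ratio:.0%}")
```

On those assumptions the RTX 3090's NVLink comes to roughly 89% of a PCIe 5.0 x16 link's encoded bandwidth.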

I also don't see them ditching NVLink, because it scales better.

Yojimbo - Thursday, May 27, 2021

CXL is why. And PCIe 4.0 is going to be short-lived. Both AMD and Intel will be changing over to PCIe 5.0 quickly. It remains to be seen how fast PCIe 6.0 is taken up, especially in the consumer space.

Not sure why you think the chances are slim that NVIDIA will upgrade their cards to PCIe 5.0. The data center cards will certainly upgrade to 6.0 because 1) NVLink is built off PCIe and 2) they want to use CXL. As far as the gaming cards go, what difference does it really make?

If you buy an Alder Lake or later motherboard and CPU, it will be PCIe 5.0. Are you just going to hold off buying one because you think 6.0 will be out afterward? Why not just hold off for 7.0?

You seem to be trying very hard to make some sort of V with PCIe 4.0 and 6.0 at the tips and 5.0 at the trough. But it's not like that. People will buy PCIe 5.0 stuff because that's what will be available. And as far as consumers go, they're going to need 6 even less than they will need 5. It seems SSDs will be able to take advantage of 5 when it comes out, but I wonder if they'll be able to take advantage of 6. Maybe if heterogeneous computing makes it to the PC, we'll see the benefit of these higher bandwidths.

mode_13h - Saturday, May 29, 2021

> CXL is why. And PCIe 4.0 is going to be short-lived.
> Both AMD and Intel will be changing over to PCIe 5.0 quickly.

You're failing to draw any distinction between their consumer and server products. Consumer boards won't have CXL support, for one thing. There's no reason for it.

> If you buy an Alder Lake or later motherboard and CPU, it will be PCIe 5.0.

How do you know? Just because it said "PCIe 5" on a leaked roadmap? Nobody has yet shown me any evidence there will be actual PCIe 5 slots on an Alder Lake motherboard.

> It seems SSDs will be able to take advantage of 5

Just because there are server SSDs that burn around 25 W and can do it? That's not something you could fit in an M.2 slot. Not just for power and thermal reasons: all the chips wouldn't even fit on an M.2 board.

Again, consumer SSDs can barely peak above PCIe 4.0 speeds. Samsung just transitioned its mighty Pro line to TLC. Consumer SSDs are too limited by GB/$ to hit the kinds of speeds that $15k server SSDs can do, even in an AIC form factor.

mode_13h - Saturday, May 29, 2021

> Again, consumer SSDs

I meant to say "most PCIe 4 consumer SSDs".

schujj07 - Friday, May 28, 2021

For consumer-level products, sure, there won't be much available for PCIe 5.0. That won't be the case in the enterprise market. There are already switches that have 400GbE ports, and PCIe 5.0 NICs are on the way. Nvidia has already announced their ConnectX-7 cards with PCIe 5.0 x16/x32 links.

schujj07 - Friday, May 28, 2021

In the consumer space, PCIe 5.0 is only important for SSDs. We have finally gotten to the point that PCIe 4.0 SSDs can in theory saturate an x4 link. For GPUs, we aren't anywhere close to being limited by bus speed.

For the data center, having PCIe 5.0 is VERY important for networking equipment. More and more hyperscalers are moving to faster and faster Ethernet. We were stuck on PCIe 3.0 for far too long in the data center, and that stagnated network speeds and hyperconverged storage solutions. With the move to PCIe 4.0, we were able to get dual-port 100GbE or single-port 200GbE with enough bus bandwidth to run each port at full speed. There were dual-port PCIe 3.0 100GbE cards, but you didn't get any benefit from the second port, as you were bus-limited. Now with PCIe 5.0 you can get dual-port 200GbE or single-port 400GbE.

This helps massively when you are running something like VMware vSAN for your storage. The idea is to make storage less of a bottleneck in the data center, and faster Ethernet in conjunction with faster SSDs is making that possible. This ever-increasing network bandwidth is also making things very hard on Fibre Channel, as that is limited to 32Gb, whereas iSCSI can do 200Gb right now.
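The port-count reasoning in this comment can be checked with a small sketch. It compares aggregate Ethernet line rate against the approximate per-direction bandwidth of an x16 slot, assuming 128b/130b encoding and ignoring TLP header and flow-control overhead, so real margins are somewhat tighter than shown. `slot_gbits` and `nic_fits` are hypothetical helper names.

```python
ENCODING = 128 / 130                      # PCIe 3.0-5.0 line encoding
RATE_GT = {"3.0": 8.0, "4.0": 16.0, "5.0": 32.0}


def slot_gbits(gen: str, lanes: int = 16) -> float:
    """Approximate usable per-direction bandwidth of a PCIe slot in Gb/s."""
    return RATE_GT[gen] * ENCODING * lanes


def nic_fits(gen: str, ports: int, port_gbits: float, lanes: int = 16) -> bool:
    """True if all ports at full line rate fit within the slot's bandwidth."""
    return ports * port_gbits <= slot_gbits(gen, lanes)


for gen, ports, speed in [("3.0", 2, 100), ("4.0", 2, 100), ("4.0", 1, 200),
                          ("5.0", 2, 200), ("5.0", 1, 400)]:
    verdict = "fits" if nic_fits(gen, ports, speed) else "bus-limited"
    print(f"PCIe {gen} x16, {ports} x {speed}GbE: {verdict}")
```

A PCIe 3.0 x16 slot tops out around 126 Gb/s per direction, so a second 100GbE port cannot run at full speed; PCIe 4.0 x16 (about 252 Gb/s) and PCIe 5.0 x16 (about 504 Gb/s) leave room for the configurations described above.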

mode_13h - Saturday, May 29, 2021

> In the consumer space PCIe 5.0 is only important for SSDs. We have finally gotten to the point that PCIe 4.0 SSDs can in theory saturate an x4 link. For GPUs we aren't anywhere close to being limited on the bus speed.

This is a laughable argument. In 2012, the first PCIe 3.0 GPUs could probably saturate an x16 link, but that doesn't mean we needed PCIe 4.0.

Even a PCIe 4.0 consumer SSD being able to touch the limit of an x4 link, in peak speeds, doesn't justify transitioning to 5.0.

mode_13h - Wednesday, May 26, 2021

> Starting the graph back at PCI in 1992 glosses over that PCI was too slow to begin with, which is why there was VESA local bus

Huh? 486DX2 machines had VLB, because EISA wasn't fast enough! Once Pentiums came out, they pretty much immediately adopted the new PCI bus. And it was *plenty* fast, at the time!

It was only ~5 years later that PCI got stuck between 66 MHz and 64-bit, with neither seeming to gain mainstream acceptance. That's when Intel decided to push AGP, as the way forward. That lasted until 2004 or so, when PCIe hit the mainstream.
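For context on the PCI numbers being argued over here: a parallel bus's peak bandwidth is its clock rate times its width. Conventional PCI ran 32 bits wide at 33 MHz, and the 66 MHz and 64-bit extensions were two separate ways to double that. The sketch below assumes one transfer per clock and ignores arbitration overhead, as well as the fact that every device on a PCI bus shares this bandwidth; `parallel_bus_mbytes` is an illustrative name.

```python
def parallel_bus_mbytes(clock_mhz: float, width_bits: int) -> float:
    """Peak bandwidth of a shared parallel bus in MB/s, assuming one transfer per clock."""
    return clock_mhz * width_bits / 8


PCI_VARIANTS = {
    "PCI 32-bit/33 MHz": (33.33, 32),
    "PCI 32-bit/66 MHz": (66.66, 32),
    "PCI 64-bit/33 MHz": (33.33, 64),
    "PCI 64-bit/66 MHz": (66.66, 64),
}

for name, (clock, width) in PCI_VARIANTS.items():
    print(f"{name}: ~{parallel_bus_mbytes(clock, width):.0f} MB/s peak")
```

Standard PCI's roughly 133 MB/s was shared by everything on the bus, which is part of why a dedicated graphics port like AGP looked attractive once neither doubling option went mainstream.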

29a - Friday, July 2, 2021

VLB did not replace PCI.