Intel 11th Generation Core Tiger Lake-H Performance Review: Fast and Power Hungry

by Brett Howse & Andrei Frumusanu on May 17, 2021 9:00 AM EST

Posted in: CPUs, Intel, 10nm, Willow Cove, SuperFin, 11th Gen, Tiger Lake-H

CPU Tests: Microbenchmarks

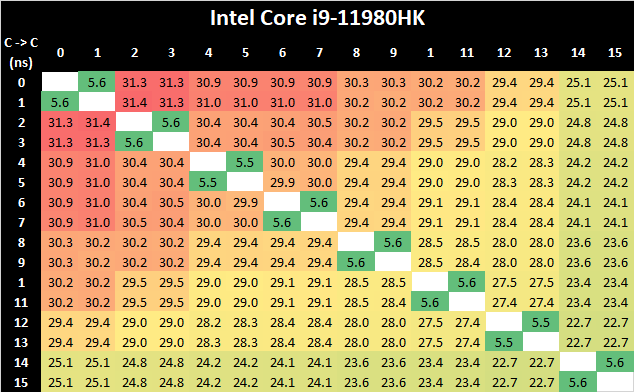

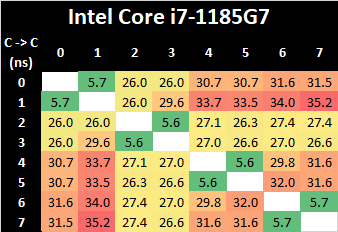

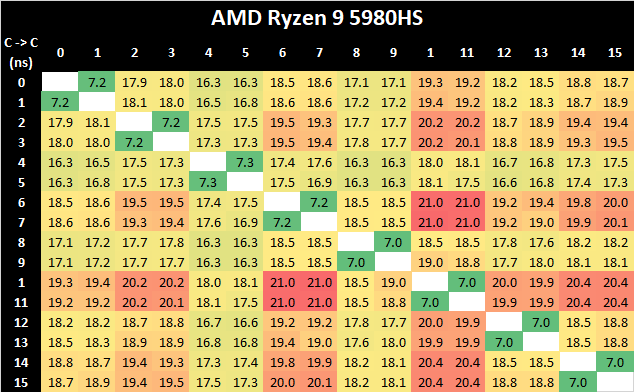

Core-to-Core Latency

As the core counts of modern CPUs grow, the time it takes one core to reach another is no longer a constant. Even before the advent of heterogeneous SoC designs, processors built on large rings or meshes could have different latencies to access the nearest core compared to the furthest core. This holds especially true in multi-socket server environments.

But modern CPUs, even desktop and consumer CPUs, can have variable access latency to get to another core. For example, in the first generation Threadripper CPUs, we had four chips on the package, each with 8 threads, and each with a different core-to-core latency depending on whether the access was on-die or off-die. This gets more complex with products like Lakefield, which has two different communication buses depending on which core is talking to which.

If you are a regular reader of AnandTech’s CPU reviews, you will recognize our Core-to-Core latency test. It’s a great way to show exactly how groups of cores are laid out on the silicon. This is a custom in-house test built by Andrei. We know there are competing tests out there, but we feel ours most accurately reflects how quickly an access between two cores can happen.

For the core-to-core tests on the Tiger Lake-H 11980HK, it’s best to compare the results 1:1 against a 4-core Tiger Lake design such as the i7-1185G7.

What’s very interesting in these results is that although the new 8-core design doubles the core count, and therefore has a larger ring bus with more ring stops and cache slices, its core-to-core latencies are actually lower than those of the 4-core Tiger Lake chip in both the best-case and worst-case results.
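As a minimal sketch of how best-case and worst-case figures can be read off a core-to-core latency matrix, the Python snippet below takes the minimum, maximum, and mean over all distinct core pairs. The `summarize` helper is hypothetical, and the matrix values are made-up placeholders for an imaginary 4-core ring, not measurements from either Tiger Lake chip.

```python
def summarize(matrix):
    """Return (best, worst, mean) latency over all distinct core pairs."""
    n = len(matrix)
    # Skip the diagonal: a core talking to itself is not a core-to-core access.
    pairs = [matrix[i][j] for i in range(n) for j in range(n) if i != j]
    return min(pairs), max(pairs), sum(pairs) / len(pairs)


# Placeholder latencies in ns for a hypothetical 4-core ring. NOT measured data.
example = [
    [0, 20, 24, 28],
    [20, 0, 20, 24],
    [24, 20, 0, 20],
    [28, 24, 20, 0],
]

best, worst, mean = summarize(example)
print(f"best {best} ns, worst {worst} ns, mean {mean:.1f} ns")
```

Comparing two chips then comes down to comparing these summary figures, which is how the 8-core part can be said to beat the 4-core part at both ends of the range.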

This is a bit perplexing. Generally, the only things that could account for such a difference are higher CPU frequencies, or a faster clock or lower cycle latency for the L3 and the ring bus. Given that TGL-H comes 8 months after TGL-U, it is plausible that the newer chip has a more mature implementation and that Intel was able to optimise access latencies.
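To make the two explanations concrete, the Python sketch below converts between cycle counts and nanoseconds. The function names and the numbers are illustrative assumptions, not figures from the review. It only shows that the same cycle count at a higher clock gives a lower latency in ns, and that a lower ns figure at an unchanged clock implies fewer cycles.

```python
def cycles_to_ns(cycles, clock_ghz):
    """Latency in ns for a given cycle count at a given clock (GHz = cycles per ns)."""
    return cycles / clock_ghz


def ns_to_cycles(latency_ns, clock_ghz):
    """Cycle count implied by a latency in ns at a given clock."""
    return latency_ns * clock_ghz


# Hypothetical example: an access costing 100 cycles.
slow = cycles_to_ns(100, 4.0)  # 100 cycles at 4.0 GHz
fast = cycles_to_ns(100, 5.0)  # the same 100 cycles at 5.0 GHz
print(slow, fast)  # the higher clock yields the lower ns figure
```

A measured improvement in ns that is larger than the clock ratio alone would explain points to the second possibility, a reduction in the number of cycles.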

Due to AMD’s recent shift to an 8-core core complex, Intel no longer has an advantage in core-to-core latencies this generation, and AMD’s more hierarchical cache structure and interconnect fabric are able to showcase better performance.

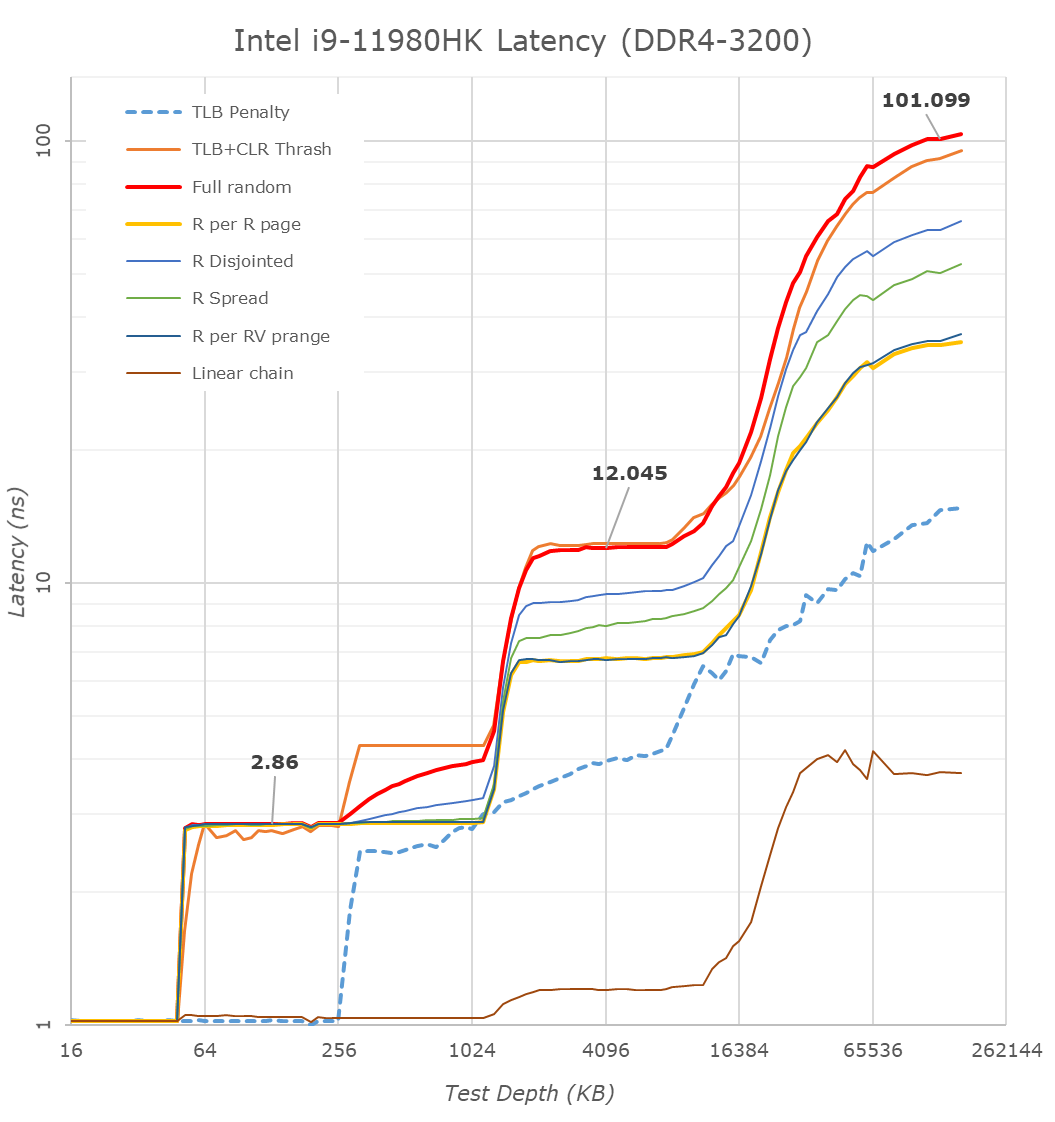

Cache & DRAM Latency

This is another in-house test built by Andrei, which showcases the access latency at all the points in the cache hierarchy for a single core. We start at 2 KiB, and probe the latency all the way through to 256 MB, which for most CPUs sits inside the DRAM (before you start saying 64-core TR has 256 MB of L3, each core can only reach 16 MB of it, so at 20 MB you are in DRAM).
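The article does not describe how the in-house test is implemented. A common approach for this kind of measurement is pointer chasing: the buffer is filled with a random chain in which each element holds the index of the next, so every load depends on the previous one and the prefetchers cannot guess ahead. The Python sketch below only illustrates the setup, under these assumptions: power-of-two test sizes from 2 KiB to 256 MiB, and a chain built with Sattolo's algorithm so that it forms one single cycle. All function names are hypothetical, and the actual timing would need a compiled language, since interpreter overhead would swamp the latencies in Python.

```python
import random


def test_sizes(start=2 * 1024, stop=256 * 1024 * 1024):
    """Power-of-two working-set sizes in bytes, from start to stop inclusive."""
    sizes = []
    size = start
    while size <= stop:
        sizes.append(size)
        size *= 2
    return sizes


def single_cycle_chain(n, seed=0):
    """Sattolo's algorithm: a random permutation of 0..n-1 that is one cycle."""
    rng = random.Random(seed)
    nxt = list(range(n))
    for i in range(n - 1, 0, -1):
        j = rng.randrange(i)  # 0 <= j < i, so an element never maps to itself
        nxt[i], nxt[j] = nxt[j], nxt[i]
    return nxt


def chase(nxt, steps, start=0):
    """Follow the chain for a number of dependent steps and return the final index."""
    idx = start
    for _ in range(steps):
        idx = nxt[idx]
    return idx


chain = single_cycle_chain(64)
print(len(test_sizes()), chase(chain, 64))
```

Because the chain is a single cycle, a walk of `n` steps touches every element exactly once before returning to the start, so the whole working set is exercised at each test size.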

Part of this test helps us understand the range of latencies for accessing a given level of cache, but also the transition between the cache levels gives insight into how different parts of the cache microarchitecture work, such as TLBs. As CPU microarchitects look at interesting and novel ways to design caches upon caches inside caches, this basic test proves to be very valuable.

What’s of particular note for TGL-H is that the new higher-end chip does not support LPDDR4, instead relying exclusively on DDR4-3200, as in this reference laptop configuration. This favours the chip in terms of memory latency, which now measures 101ns versus 108ns on the reference TGL-U platform we tested last year, but it comes at a cost in memory bandwidth, which now reaches a theoretical peak of only 51.2GB/s instead of 68.2GB/s, even with double the core count.
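The theoretical peaks quoted above follow from transfer rate multiplied by bus width. The Python sketch below reproduces the arithmetic under two assumptions that are not spelled out in the text: both platforms use a 128-bit total memory interface, and the TGL-U reference platform ran LPDDR4X at 4266 MT/s. The helper name is hypothetical.

```python
def peak_bandwidth_gbs(transfer_rate_mts, bus_width_bits):
    """Theoretical peak bandwidth in GB/s (decimal) for a memory interface."""
    bytes_per_transfer = bus_width_bits / 8
    # MT/s times bytes per transfer gives MB/s; divide by 1000 for GB/s.
    return transfer_rate_mts * bytes_per_transfer / 1000


# Assumed configurations (128-bit total bus width in both cases).
ddr4_3200 = peak_bandwidth_gbs(3200, 128)     # TGL-H with DDR4-3200
lpddr4x_4266 = peak_bandwidth_gbs(4266, 128)  # TGL-U with LPDDR4X-4266 (assumed)

print(f"DDR4-3200:    {ddr4_3200:.1f} GB/s")
print(f"LPDDR4X-4266: {lpddr4x_4266:.1f} GB/s")
```

Under these assumptions the DDR4 figure comes out at 51.2 GB/s and the LPDDR4X figure at roughly 68 GB/s, in line with the numbers in the text.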

What’s in favour of the TGL-H system is the increased L3 cache, up from 12MB to 24MB. This is still 3MB per core slice as on TGL-U, so it uses the newer L3 design that was introduced with TGL-U. Nevertheless, we do see some differences in the L3 behaviour: the TGL-H system has slightly higher access latencies at the same test depth than the TGL-U system, even accounting for the fact that the TGL-H CPUs are clocked slightly higher and have better L1 and L2 latencies. This is an interesting contradiction in the context of the improved core-to-core latency results we just saw, which suggests that for the latter Intel did make some changes to the fabric. Furthermore, we see flatter access latencies across the L3 depth, which isn’t quite how the TGL-U system behaved, meaning Intel has definitely made some changes to how the L3 is accessed.

229 Comments

back2future - Tuesday, May 18, 2021 - link

it's almost one could skip PCIe4 if early 2022 PCIe5 is stable on power management and performance expectations on mainboards?

mode_13h - Thursday, May 20, 2021 - link

> it's almost one could skip PCIe4 if early 2022 PCIe5 is stable ... on mainboards?

Uh, I'm still eager to see exactly how Intel is going to use PCIe 5, in Alder Lake. I suspect it'll be used only for the DMI link to the chipset, in fact.

Since graphics cards and M.2 SSDs aren't even close to maxing PCIe 4, I struggle to see why they would bother with the added cost and potential issues of supporting 5.

heickelrrx - Monday, May 17, 2021 - link

you can put 4x link on Video card and get 8x speed on Gen 3

mean they can put more stuff, with less link, not faster stuff

mode_13h - Tuesday, May 18, 2021 - link

> you can put 4x link on Video card and get 8x speed on Gen 3

In terms of power-efficiency, I'd bet the wider, slower link is better.

> mean they can put more stuff, with less link, not faster stuff

It's a laptop. So, prolly not gonna run out of PCIe lanes.

gagegfg - Monday, May 17, 2021 - link

"and if anticipated, great gaming performance"...

Inside this notebook case he had a hard time controlling the temperature, if you add a 100W GPU, where is the rest for this cpu?

mmm .... it's going to be interesting.

Matthias B V - Monday, May 17, 2021 - link

Most OEMs still prefer Intel as it has capacity that AMD can't offer and even more it has better features and integration such as AV1 coding, USB / TB 4.0, Intel WIFI etc.

Also Intels provides better support for OEMs in design and issues.

Gigaplex - Monday, May 17, 2021 - link

AMD systems can provide TB support, there's no technical limitation preventing it. Intel WiFi chips are standalone cards, which also work fine in AMD systems (my AMD board has Intel WiFi). There's no reason to use an Intel CPU for either of those features.

Retycint - Monday, May 17, 2021 - link

The fact that not a single AMD laptop has thunderbolt, points to an issue with cost of implementation/PCI lanes limitations etc. which apparently doesn't exist on Intel CPUs, given how many Intel laptops come with TB as default. This is a fact, and talking about what's possible theoretically doesn't change the facts that AMD systems lack TB

CityBlue - Monday, May 17, 2021 - link

> The fact that not a single AMD laptop has thunderbolt, points to an issue with cost of implementation/PCI lanes limitations etc.

Perhaps. Or it's simply a reflection of the fact that there is only niche demand for TB.

It's on Intel based laptops because it's supported by the chipset so pretty much a no-brainer (or alternatively, Intel mandates it is included, in order to try and make it more relevant?)

However the vast majority of laptop consumers don't need, want or care about TB, so the extra cost to include it in AMD laptops doesn't appear justified. I'm sure a vendor could include TB on an AMD laptop if they ever thought they'd get a reasonable return on the extra cost.

And maybe now that Intel have been kicked in to touch by Apple, Intel might lose interest in TB in future.

TB has its fans, but it also has the distinct whiff of being the next FireWire.

RobJoy - Tuesday, May 18, 2021 - link

The fact that TB still exists, baffles me.

We all should move on.