AMD's dual core Opteron & Athlon 64 X2 - Server/Desktop Performance Preview

by Anand Lal Shimpi, Jason Clark & Ross Whitehead on April 21, 2005 9:25 AM EST - Posted in CPUs

The Lineup - Opteron x75

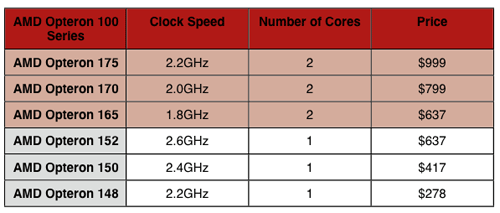

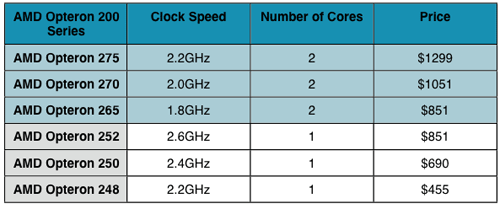

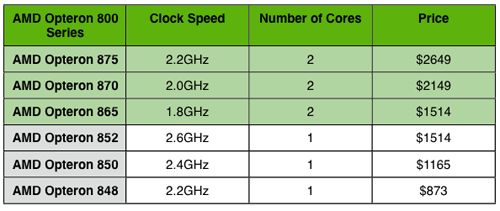

Prior to the dual core frenzy, multiprocessor servers and workstations were referred to by the number of processors they had: a two-processor workstation was called a 2-way workstation, and a four-processor server was called a 4-way server.

Both AMD and Intel sell their server/workstation CPUs not only according to performance characteristics (clock speed, cache size, FSB frequency), but also according to the types of systems for which they are designed. For example, the Opteron 252 and Opteron 852 both run at 2.6GHz, but the 252 is for use in up to 2-way configurations, while the 852 is certified for use in 4- and 8-way configurations. The two chips are identical; the 852 has simply been run through additional validation and costs a lot more. As you may remember, the first digit of the Opteron's model number denotes the configurations for which the CPU is validated: the 100 series is uniprocessor only, the 200 series works in up to 2-way configurations and the 800 series is certified for 4-way and larger configurations.

AMD's dual core server/workstation CPUs will still carry the Opteron brand, but they will feature higher model numbers. And while single core Opteron model numbers increased by 2 for each step up in clock speed, dual core Opteron model numbers will increase in steps of 5. With each "processor" being dual core, AMD will start referring to its Opterons by the number of sockets for which they are designed: the Opteron 100 series will be designed for 1-socket systems, the Opteron 200 series for systems with up to 2 sockets and the Opteron 800 series for systems with 4 or more sockets.
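To make the numbering scheme concrete, here is a small Python sketch that decodes an Opteron model number into its socket class, core count and clock speed. It is an illustration, not anything AMD publishes: the function `decode` and the `OpteronModel` type are hypothetical names, and it assumes each speed grade is a 200MHz step, anchored at the figures in this article (single core x52 = 2.6GHz, dual core x75 = 2.2GHz). It only covers the single core x40-x52 and dual core x65/x70/x75 parts discussed here.

```python
from dataclasses import dataclass

# First digit of the model number -> socket class, as described above.
MAX_SOCKETS = {1: "1", 2: "up to 2", 8: "4 or more"}

# Assumption: one speed grade = 200MHz.
GRADE_MHZ = 200


@dataclass
class OpteronModel:
    model: int
    sockets: str
    cores: int
    clock_mhz: int


def decode(model: int) -> OpteronModel:
    """Hypothetical decoder for the Opteron model numbers in this article."""
    series, grade = divmod(model, 100)
    if series not in MAX_SOCKETS:
        raise ValueError(f"unknown series: {series}xx")
    if grade in (65, 70, 75):
        # Dual core: model number rises by 5 per grade; x75 = 2.2GHz.
        cores = 2
        clock = 2200 - GRADE_MHZ * (75 - grade) // 5
    elif 40 <= grade <= 52 and grade % 2 == 0:
        # Single core: model number rises by 2 per grade; x52 = 2.6GHz.
        cores = 1
        clock = 2600 - GRADE_MHZ * (52 - grade) // 2
    else:
        raise ValueError(f"model {model} is outside this sketch's scope")
    return OpteronModel(model, MAX_SOCKETS[series], cores, clock)


if __name__ == "__main__":
    for m in (148, 175, 265, 852):
        print(decode(m))
```

Under these assumptions, `decode(148)` and `decode(175)` both come out at 2200MHz, differing only in core count, which is exactly the single core vs. dual core pairing used in the price comparison below. Integer MHz is used instead of GHz floats to keep the arithmetic exact.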

There are three new members of the Opteron family - all dual core CPUs: the Opteron x65, Opteron x70 and Opteron x75.

- The fastest dual core runs at 2.2GHz, two speed grades lower than the fastest single core CPU - not too shabby at all.

- The slowest dual core CPU is priced at the same level as the fastest single core CPU; in this case, $637.

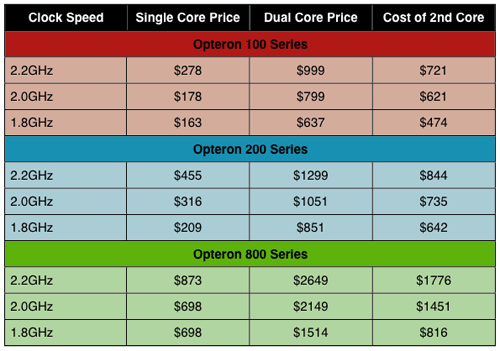

- Unlike Intel's, AMD's second core comes at a much higher price. Take a look at the 148 vs. the 175: both run at 2.2GHz, but the dual core chip is over 3.5x the price of the single core CPU.

While AMD will undoubtedly hate the comparison below, it's an interesting one nonetheless. How much are you paying for that second core on these new dual core Opterons? To find out, let's compare prices on a clock for clock basis:

144 Comments


saratoga - Thursday, April 21, 2005

" The three main languages used with .NET are: C# (similar to C++), VB.NET (somewhat similar to VB), and J# (fairly close to JAVA). Whatever language in which you write your code, it is compiled into an intermediate language - CIL (Common Intermediate Language). It is then managed and executed by the CLR (Common Language Runtime)."

Waaah?

C# is not similar to C++; it's not even like it. It's derived from MS's experience with Java, and it's intended to replace J#, Java and J++. Finally, the language that is similar to C++ is Managed C++, which is generally listed as the other main .NET language.

http://lab.msdn.microsoft.com/express/

Shintai - Thursday, April 21, 2005

#80, #81: Tell me how much faster a single core 4000+ is than a 3800+ and you'll see it's less than 5% on average, mostly about 2%. Your X2 at 2.2GHz with 1MB cache will perform the same as, or at most 1-1.5% faster than, a single core 2.2GHz chip with 1MB cache in games. So being 10% slower is kinda bad for a CPU with a higher rating. Memory won't help you that much, except in a fantasy world.

And let's take a game as an example.

Halo:

127.7 FPS with a 2.4GHz, 512KB cache single core.

119.4 FPS with a 2.2GHz, 1MB cache dual core.

And we all know they basically have the same PR rating due to the cache difference.

And the cheap single core here beats the more expensive, more power-hungry and hotter dual core CPU by 7%.

So instead of paying $300 for a CPU, you now pay $500+ and get worse gaming speeds, more heat and more power usage... for what, waiting 2 years on game developers? Or some 64-bit rescue magic that makes SMP possible for anything? It's even worse for Intel with their crappy Prescotts, 3.2GHz vs. 3.8GHz. At least AMD is close to its top CPU, but still a bit away.

Real gamers will use single cores for the next 1-2 years.

Shintai - Thursday, April 21, 2005

Forgot to add something about physics engines etc. For that alone you could add a simple dedicated chip on, say, a GFX card for $5-10 more that would do it 10x faster than any dual core CPU we will see the rest of the year, just as GFX cards are tens of times faster than our CPUs for their purpose. It's not like the good old days when we used the CPU to render 3D in games. CPUs are getting more and more irrelevant in games. Just look at how the Pentium M, a CPU that performs worse at everything else, can own everything in gaming, though it lacks all the goodies the P4 and AMD64 have.

It makes one wonder what they actually design CPUs for, since 95% is gaming and 5% is workstations/servers, and corporate PCs could work perfectly well with a K5-K6/P2-P3.

Then we could also stay with a 100W or 200W PSU rather than a 400W, 500W or 700W one.

cHodAXUK - Thursday, April 21, 2005

Make that 'performed to 91% of the fastest gaming CPU around'.

cHodAXUK - Thursday, April 21, 2005

#79 Are you smoking something? A dual core 4400+ running with slow server memory and timings, plus no NCQ drive, performed within 91% of the fastest gaming chip around. Now the real X2 4400+ will get at least a 15% performance boost from faster memory timings and unregistered memory, and that is before we even think about overclocking at all.

Shintai - Thursday, April 21, 2005

So what the tests show is like the 64-bit tests. Dual cores are completely useless for the next 2+ years unless you use your PC as a workstation for CAD, Photoshop and heavy encoding of movies.

And WinXP 64-bit will be a toy/useless for the next 1-2 years as well, unless you use it for servers.

Hype, hype, hype...

In 2 years, when these current Intel and AMD cores are outdated and we have Pentium V/VI or M2/3 and K8/K9, then we can benefit from it. But look back in the mirror: those early AMD64 and low-speed Pentium 4 chips with 64-bit won't really be used for 64-bit, because by the time we finally get a stable driver set and Windows-on-Windows environment, we will all be using Longhorn and next-gen CPUs.

Dual cores will be slower than single cores in games for a LONG LONG time. And they will be MORE expensive, and utterly useless except for bragging rights. Ask all those people using dual Xeons and dual Opterons today how much gaming benefit they get. Oh yeah, but hey, let's all make some lunatic assumption that I'm downloading with P2P at 100Mbit so one CPU is 100% loaded while I'm encoding a movie in the meantime. Yes, then you can play your game on CPU #2. But how often is that likely to happen anyway? And all that multitasking will just cripple your hard drive anyway and kill the sweet heaven there.

It's a wake-up call before you become the fools.

Games for 64-bit and dual cores haven't even started development yet, so they will have their 1-3 years of development time before we see them on the market. And if it's 1 year, it's usually a crap game ;)

calinb - Thursday, April 21, 2005

"Armed with the DivX 5.2.1 and the AutoGK front end for Gordian Knot..."

AutoGK and Gordian Knot are front ends for several common apps, but AutoGK doesn't use Gordian Knot at all. AutoGK and Gordian Knot are completely independent programs. len0x, the developer of AutoGK, is also a contributor to Gordian Knot development. That's the connection.

calinb, DivX Forums Moderator

cHodAXUK - Thursday, April 21, 2005

Hmm, it does seem that dual core with Hyper-Threading can be a real help and yet sometimes a real hindrance. Some benchmarks show it giving stellar performance and some show it slowing the CPU right down by swamping it. Some very hit-and-miss results for Intel's top dual core part there make me wonder if it is really worth the extra money for something that can be so unreliable in certain situations.

Zebo - Thursday, April 21, 2005

Even if I can't spell them.

Zebo - Thursday, April 21, 2005

Fish bits: Words mean things; sorry you don't like them.

"Crippled" is accurate. The X2 is crippled in this test since it's not really an X2.

It's a "misrepresentation" of its true abilities.

I wasn't bagging on Anand, just highlighting what was said in the article and then speculating on the potential performance of the real X2, all things considered.

Sorry I didn't attend the "PC walk on my tiptoes" class. I say what I mean and use accurate words.