AMD 3rd Gen EPYC Milan Review: A Peak vs Per Core Performance Balance

by Dr. Ian Cutress & Andrei Frumusanu on March 15, 2021 11:00 AM EST

Disclaimer June 25th: The benchmark figures in this review have been superseded by our second follow-up Milan review article, where we observe improved performance figures on a production platform compared to AMD’s reference system in this piece.

SPEC - Single-Threaded Performance

Single-thread performance usually isn’t the most important metric for server CPUs running scale-out workloads, but there are use-cases, such as EDA tools, which are pretty much bound by single-thread performance.

Power envelopes usually don’t matter here; the performance factors that come into play are simply the boost clocks of the CPUs, the IPC improvements, and the memory latency of the cores. We’re also testing here in NPS1 mode: if you have single-thread-bound workloads, you should prefer to run the systems in a single NUMA node mode.

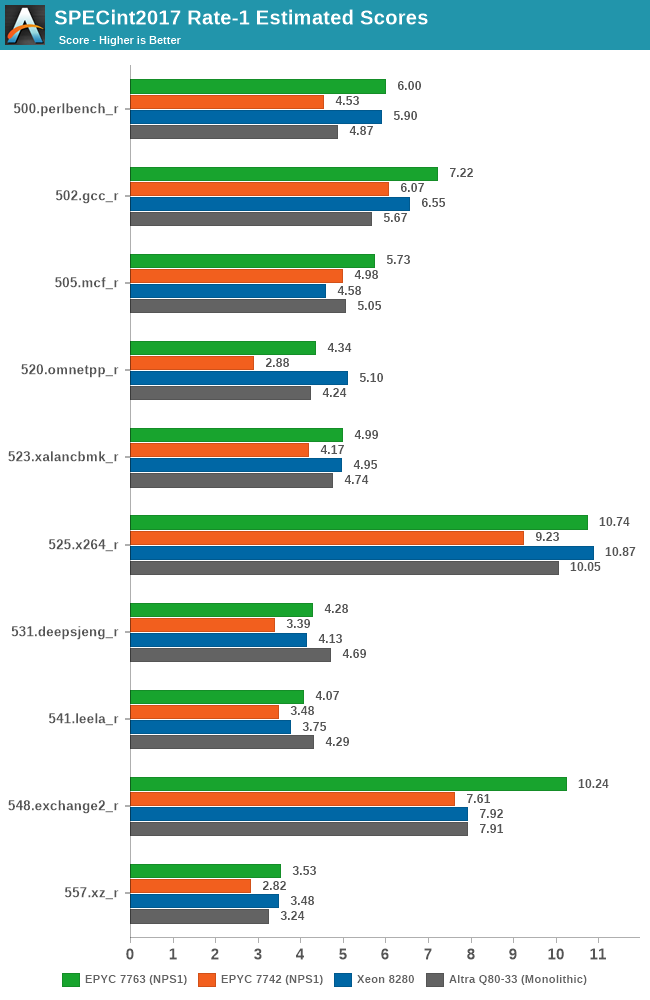

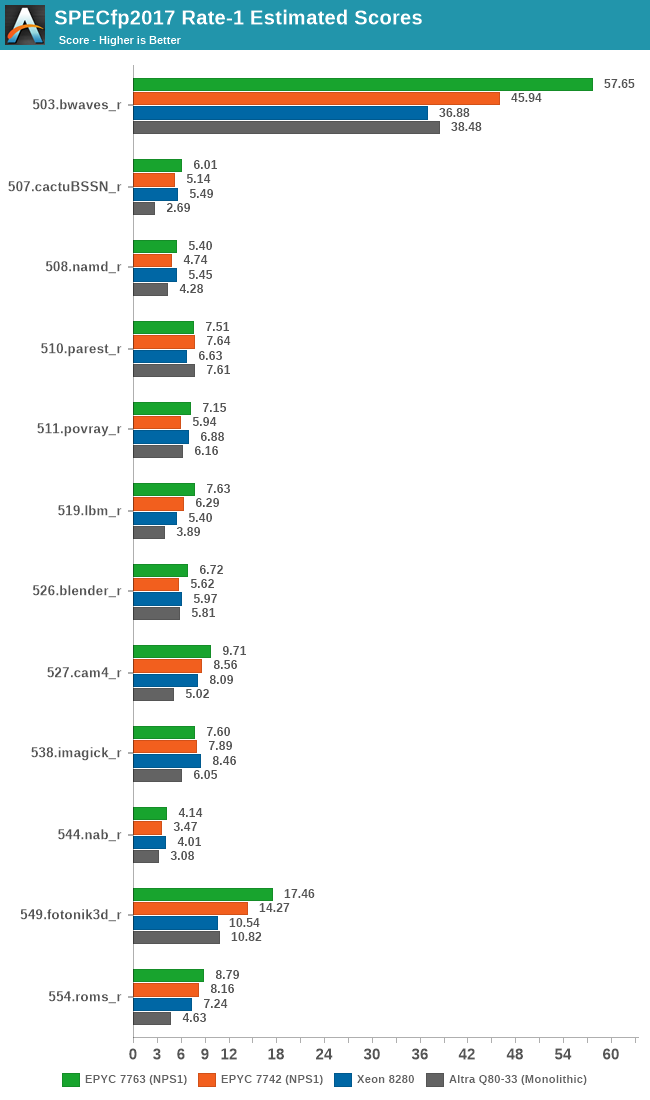

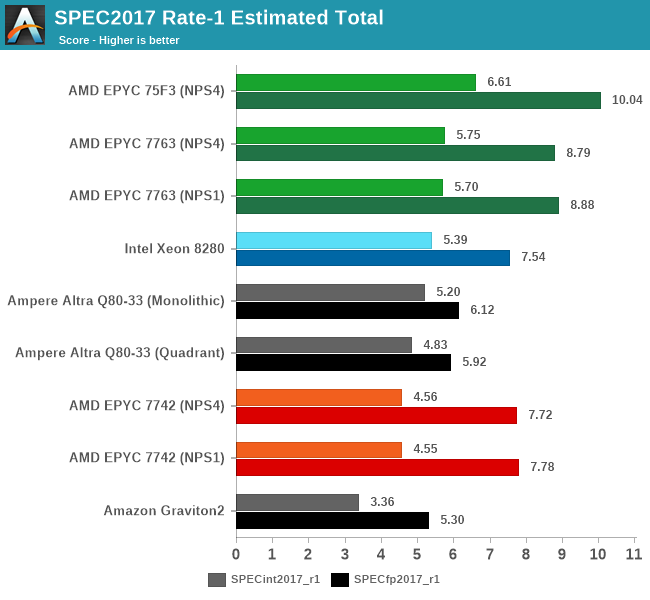

Generationally, the new Zen3-based 7763 improves performance quite significantly over the 7742, even though I noted that both parts boosted almost equally to around 3400MHz in single-threaded scenarios. The uplifts here come to a geomean of +25%, with individual increases ranging from +15% to +50% and a median of +22%.
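To make the geomean and median figures concrete, here is a minimal sketch of how such generational uplifts can be summarised from per-workload scores. The function name `uplift_summary` and the scores below are placeholders for illustration only; they are not the measured SPEC results from this review.

```python
import math
import statistics


def uplift_summary(old_scores, new_scores):
    """Return (geomean uplift, median uplift) as fractions, e.g. 0.25 for +25%."""
    # Per-workload speedup of the new part over the old part.
    ratios = [new / old for old, new in zip(old_scores, new_scores)]
    # Geometric mean: exponential of the mean of the log-ratios.
    geomean = math.exp(sum(math.log(r) for r in ratios) / len(ratios))
    return geomean - 1.0, statistics.median(ratios) - 1.0


# Placeholder scores (assumption), NOT figures from the review.
old = [4.0, 5.0, 8.0]
new = [5.0, 6.0, 12.0]
geo, med = uplift_summary(old, new)
print(f"geomean uplift: {geo:+.1%}, median uplift: {med:+.1%}")
```

The geomean is the usual choice for ratios because it weights a 2x gain and a 0.5x loss symmetrically, while the median shows what a typical workload sees when a few outliers pull the mean up.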

The Milan part also now more clearly competes against the best of the competition, even though it’s not a single-thread optimised part like the 75F3; we’ll see those scores a bit later.

In SPECfp, the Zen3-based Milan chip also does extremely well, measuring a geomean boost of +14.2% and a median of +18%.

The new 7763 takes a notable lead in single-threaded performance amongst the large core count SKUs in the market right now. More notably, the 75F3 further increases this lead through the higher 4GHz boost clock this frequency optimised part enables.

120 Comments


mkbosmans - Tuesday, March 23, 2021 - link

Even if you have a nice two-tiered approach implemented in your software, let's say MPI for the distributed memory parallelization on top of OpenMP for the shared memory parallelization, it often turns out to be faster to limit the shared memory threads to a single socket or NUMA domain. So in the case of a 2P EPYC configured as NPS4 you would have 8 MPI ranks per compute node.

But of course there's plenty of software that has parallelization implemented using MPI only, so you would need a separate process for each core. This is often because of legacy reasons, with software that was originally targeting only a couple of cores. But with the MPI 3.0 shared memory extension, this can even today be a valid approach to great performing hybrid (shared/distributed mem) code.
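As a rough illustration of the rank count mentioned in the comment above, the layout follows from one MPI rank per NUMA domain. This is only a sketch: the helper name `hybrid_layout` is hypothetical, and the 64 cores per socket is an assumption (the comment does not name a SKU).

```python
def hybrid_layout(sockets, nps, cores_per_socket):
    """Return (MPI ranks per node, OpenMP threads per rank).

    One MPI rank is placed per NUMA domain, and each rank's OpenMP
    threads stay within that domain.
    """
    ranks_per_node = sockets * nps  # NPS = NUMA nodes per socket
    total_cores = sockets * cores_per_socket
    threads_per_rank = total_cores // ranks_per_node
    return ranks_per_node, threads_per_rank


# 2P system in NPS4 mode; 64 cores per socket is an assumption.
ranks, threads = hybrid_layout(sockets=2, nps=4, cores_per_socket=64)
print(ranks, threads)  # 8 16
```

With those assumed numbers, each rank would run 16 OpenMP threads (for example by setting `OMP_NUM_THREADS=16`); the MPI-only case corresponds to one rank per core instead.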

mode_13h - Tuesday, March 23, 2021 - link

Nice explanation. Thanks for following up!

Andrei Frumusanu - Saturday, March 20, 2021 - link

This is vastly incorrect and misleading.

The fact that I'm using a cache line spawned on a third main thread which does nothing with it is irrelevant to the real-world comparison because from the hardware perspective the CPU doesn't know which thread owns it - in the test the hardware just sees two cores using that cache line, the third main thread becomes completely irrelevant in the discussion.

The thing that is guaranteed with the main starter thread allocating the synchronisation cache line is that it remains static across the measurements. One doesn't actually have control over where this cache line ends up within the coherent domain of the whole CPU; it's going to end up in a specific L3 cache slice dependent on the CPU's address hash positioning. The method here simply maintains that positioning to be always the same.

There is no such thing as core-core latency because cores do not snoop each other directly, they go over the coherency domain which is the L3 or the interconnect. It's always core-to-cacheline-to-core, as anything else doesn't even exist from the hardware perspective.

mkbosmans - Saturday, March 20, 2021 - link

The original thread may have nothing to do with it, but the NUMA domain where the cache line was originally allocated certainly does. How would you otherwise explain the difference between the first quadrant for socket 1 to socket 1 communication and the fourth quadrant for socket 2 to socket 2 communication?

Your explanation about address hashing to determine the L3 cache slice maybe makes sense when talking about fixing the initial thread within an L3 domain, but not why you want that L3 domain fixed to the first one in the system, regardless of the placement of the two threads doing the ping-ponging.

And about core-core latency, you are of course right, that is sloppy wording on my part. What I meant to convey is that roundtrip latency between core-cacheline-core and back is more relevant (at least for HPC applications) when the cacheline is local to one of the cores and not remote, possibly even on another socket than the two threads.

Andrei Frumusanu - Saturday, March 20, 2021 - link

I don't get your point - don't look at the intra-remote socket figures then if that doesn't interest you - these systems are still able to work in a single NUMA node across both sockets, so it's still pretty valid in terms of how things work.

I'm not fixing it to a given L3 in the system (except for that socket), binding a thread doesn't tell the hardware to somehow stick that cacheline there forever, software has zero say in that. As you see in the results it's able to move around between the different L3's and CCXs. Intel moves (or mirrors it) it around between sockets and NUMA domains, so your premise there also isn't correct in that case, AMD currently can't because probably they don't have a way to decide most recent ownership between two remote CCXs.

People may want to just look at the local socket numbers if they prioritise that, the test method here merely just exposes further more complicated scenarios which I find interesting as they showcase fundamental cache coherency differences between the platforms.

mkbosmans - Tuesday, March 23, 2021 - link

For a quick overview of how cores are related to each other (with an allocation local to one of the cores), I like this way of visualizing it more:

http://bosmans.ch/share/naples-core-latency.png

Here you can for example clearly see how the four dies of the two sockets are connected pairwise.

The plots from the article are interesting in that they show the vast difference between the cc protocols of AMD and Intel. And the numbers from the Naples plot I've linked can be mostly gotten from the more elaborate plots from the article, although it is not entirely clear to me how to exactly extend the data to form my style of plots. That's why I prefer to measure the data I'm interested in directly and plot that.

imaskar - Monday, March 29, 2021 - link

Looking at the shares sinking, this pricing was a miss...

mode_13h - Tuesday, March 30, 2021 - link

Prices are a lot easier to lower than to raise. And as long as they can sell all their production allocation, the price won't have been too high.

Zone98 - Friday, April 23, 2021 - link

Great work! However I'm not getting why in the c2c matrix cores 62 and 74 wouldn't have a ~90ns latency as in the NW socket. Could you clarify how the test works?

node55 - Tuesday, April 27, 2021 - link

Why are the CPUs not consistent?

Why do you switch between 7713 and 7763 on Milan and 7662 and 7742 on Rome?

Why do you not have results for all the server CPUs? This confuses the comparison of e.g. 7662 vs 7713 (my current buying decision).