Intel Rocket Lake (14nm) Review: Core i9-11900K, Core i7-11700K, and Core i5-11600K

by Dr. Ian Cutress on March 30, 2021 10:03 AM EST

Posted in: CPUs, Intel, LGA1200, 11th Gen, Rocket Lake, Z590, B560, Core i9-11900K

CPU Tests: Encoding

One of the interesting elements on modern processors is encoding performance. This covers two main areas: encryption/decryption for secure data transfer, and video transcoding from one video format to another.

In the encrypt/decrypt scenario, how data is transferred and by what mechanism is pertinent to on-the-fly encryption of sensitive data, a practice that more modern devices are leaning on for software security.

Video transcoding as a tool to adjust the quality, file size and resolution of a video file has boomed in recent years, whether to provide the optimum video for devices before consumption, or for game streamers who want to upload the output from their video camera in real-time. As we move into live 3D video, this task will only get more strenuous, and it turns out that the performance of certain algorithms is a function of the input/output of the content.

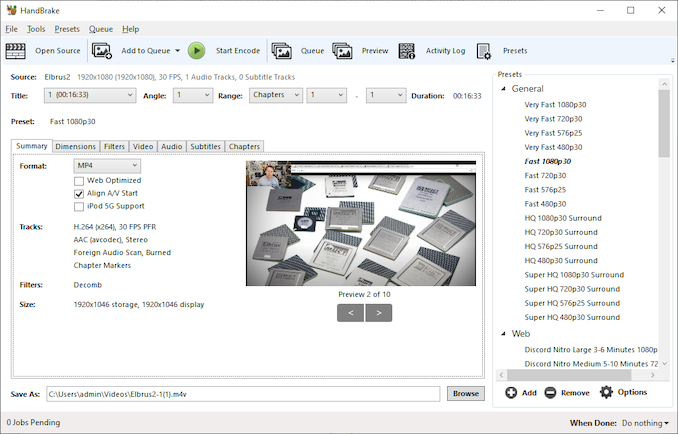

HandBrake 1.32

Video transcoding (both encode and decode) is a hot topic in performance metrics as more and more content is being created. The first consideration is the standard in which the video is encoded, which can be lossless or lossy, trade performance for file size, trade quality for file size, or do all of the above, and some increase encoding rates to help accelerate decoding rates. Alongside Google's favorite codecs, VP9 and AV1, others are prominent: H.264, the older codec, is practically everywhere and is designed to be optimized for 1080p video, while HEVC (or H.265) aims to provide the same quality as H.264 at a lower file size (or better quality for the same size). HEVC is important as 4K is streamed over the air, meaning fewer bits need to be transferred for the same quality content. Other codecs designed for specific use cases are coming to market all the time.

HandBrake is a favored tool for transcoding, with the later versions using copious amounts of newer APIs to take advantage of co-processors, like GPUs. It is available on Windows via an interface or can be accessed through the command line; the latter makes our testing easier, since a redirection operator captures the console output.
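As a rough illustration of the command-line route described above, the sketch below builds a transcode command and sends its console output to a log file, which is what a shell redirection operator would do. This is not our actual test script: the executable name `HandBrakeCLI`, the file names, and the preset name are assumptions made for the example.

```python
import subprocess


def build_handbrake_cmd(src, dst, preset):
    """Build an argument list for a command-line transcode.

    Assumption: the executable is on PATH as 'HandBrakeCLI'. The preset
    name is whatever the caller supplies; none is implied by the review.
    """
    return ["HandBrakeCLI", "-i", str(src), "-o", str(dst), "--preset", preset]


def run_and_log(cmd, log_path):
    """Run cmd with stdout and stderr written to log_path.

    Equivalent in spirit to `cmd > log.txt 2>&1` in a shell.
    Returns the process exit code.
    """
    with open(log_path, "w", encoding="utf-8") as log:
        result = subprocess.run(cmd, stdout=log, stderr=subprocess.STDOUT)
    return result.returncode


if __name__ == "__main__":
    # Hypothetical file and preset names, for illustration only.
    cmd = build_handbrake_cmd("source_1080p30.mp4", "out_480p30.mp4", "ExamplePreset")
    print(" ".join(cmd))
```

Keeping the command builder separate from the runner makes it easy to log exactly what was executed alongside the captured output.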

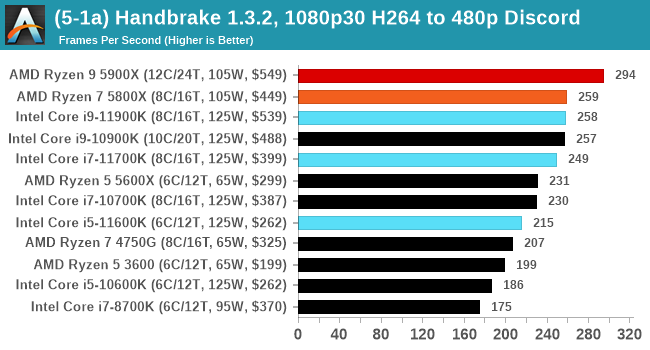

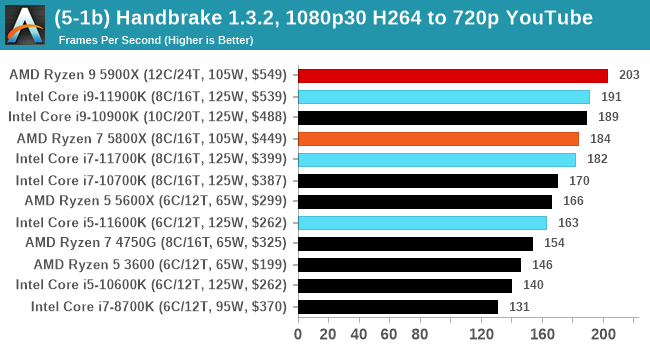

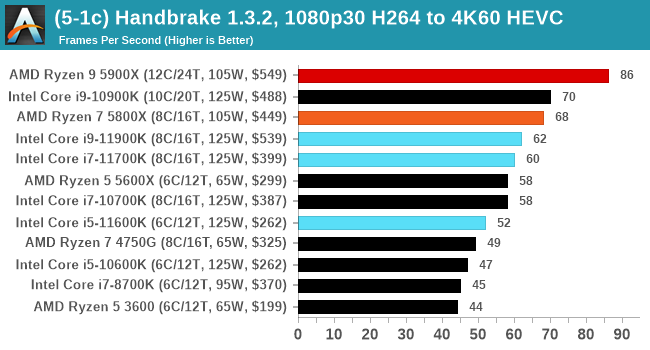

We take the compiled version of this 16-minute YouTube video about Russian CPUs at 1080p30 h264 and convert into three different files: (1) 480p30 ‘Discord’, (2) 720p30 ‘YouTube’, and (3) 4K60 HEVC.

Up to the final 4K60 HEVC, in CPU-only mode, the Intel CPU puts up some good gen-on-gen numbers.

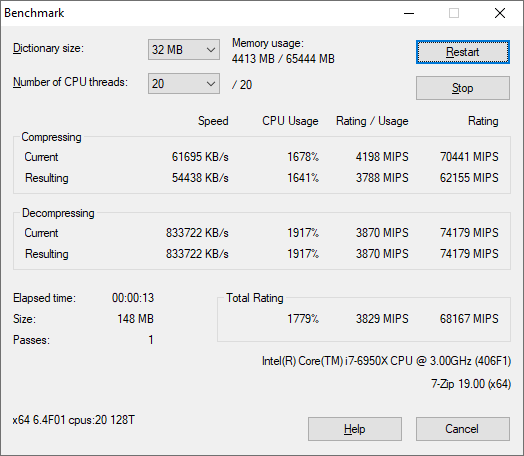

7-Zip 1900

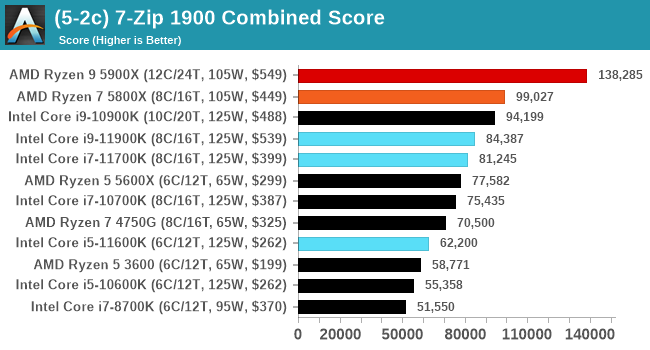

The first compression benchmark tool we use is the open-source 7-zip, which typically offers good scaling across multiple cores. 7-zip is the compression tool most cited by readers as one they would rather see benchmarks on, and the program includes a built-in benchmark tool for both compression and decompression.

The tool can either be run from inside the software or through the command line. We take the latter route as it is easier to automate, obtain results, and put through our process. The command line flags available offer an option for repeated runs, and the output provides the average automatically through the console. We direct this output into a text file and regex the required values for compression, decompression, and a combined score.
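The regex step can be sketched roughly as follows. The sample text is an assumption modelled on the `Avr:` and `Tot:` summary lines that the built-in benchmark (`7z b`) prints, and the numbers in it are made up for illustration; the exact layout may differ between 7-Zip versions, so treat the patterns as a starting point rather than our production script.

```python
import re

# Illustrative sample only: the layout is an assumption modelled on the
# summary lines of the 7-Zip benchmark, and the numbers are invented.
SAMPLE = """\
Avr:             713   3630  25883  |              782   3625  28354
Tot:             748   3628  27118
"""

# Avr line: usage, R/U, rating for compression | usage, R/U, rating for decompression.
AVR_RE = re.compile(
    r"^Avr:\s+\d+\s+\d+\s+(\d+)\s+\|\s+\d+\s+\d+\s+(\d+)\s*$", re.MULTILINE
)
# Tot line: usage, R/U, combined rating.
TOT_RE = re.compile(r"^Tot:\s+\d+\s+\d+\s+(\d+)\s*$", re.MULTILINE)


def parse_7zip_summary(text):
    """Extract compression, decompression and combined ratings from text."""
    avr = AVR_RE.search(text)
    tot = TOT_RE.search(text)
    if avr is None or tot is None:
        raise ValueError("benchmark summary lines not found")
    return {
        "compress": int(avr.group(1)),
        "decompress": int(avr.group(2)),
        "combined": int(tot.group(1)),
    }


if __name__ == "__main__":
    print(parse_7zip_summary(SAMPLE))
```

Raising an error when the summary lines are missing is deliberate: a silent zero would be easy to mistake for a real result in an automated run.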

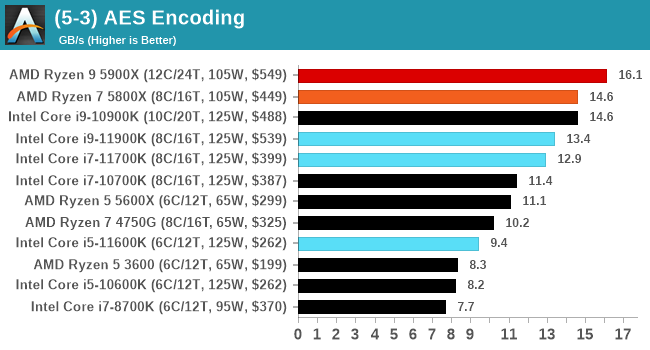

AES Encoding

Algorithms using AES coding have spread far and wide as a ubiquitous tool for encryption. Again, this is another CPU limited test, and modern CPUs have special AES pathways to accelerate their performance. We often see scaling in both frequency and cores with this benchmark. We use the latest version of TrueCrypt and run its benchmark mode over 1GB of in-DRAM data. Results shown are the GB/s average of encryption and decryption.

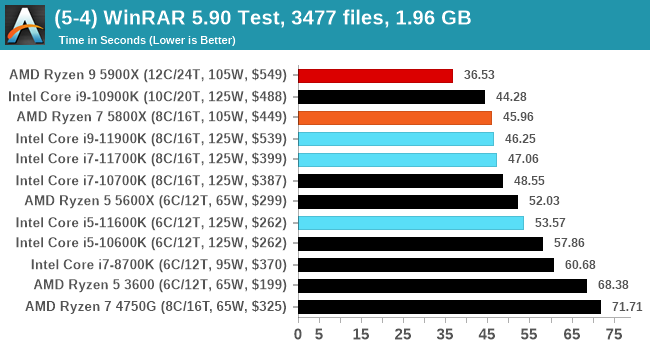

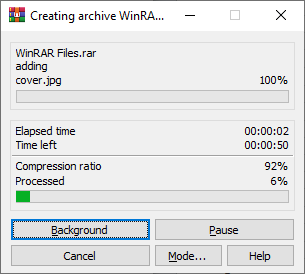

WinRAR 5.90

For the 2020 test suite, we move to the latest version of WinRAR in our compression test. WinRAR in some quarters is more user friendly than 7-Zip, hence its inclusion. Rather than use a benchmark mode as we did with 7-Zip, here we take a set of files representative of a generic stack:

- 33 video files, each 30 seconds, in 1.37 GB,

- 2834 smaller website files in 370 folders in 150 MB,

- 100 Beat Saber music tracks and input files, for 451 MB

This is a mixture of compressible and incompressible formats. The results shown are the time taken to compress the files. Due to DRAM caching, we run the test repeatedly for 20 minutes and take the average of the last five runs, when the benchmark is in a steady state.

For automation, we use AHK’s internal timing tools from initiating the workload until the window closes signifying the end. This means the results are contained within AHK, with an average of the last 5 results being easy enough to calculate.
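The averaging itself is simple; a minimal Python sketch of the idea (our automation uses AHK, so this is an equivalent illustration rather than the script we run) might look like the following. The function name and the sample timings are hypothetical.

```python
from statistics import mean


def steady_state_mean(run_times, keep=5):
    """Average the last `keep` run times, discarding earlier warm-up runs.

    Early runs are skewed while files are still being pulled into the
    DRAM cache, so only the tail of the series is used.
    """
    if len(run_times) < keep:
        raise ValueError(f"need at least {keep} runs, got {len(run_times)}")
    return mean(run_times[-keep:])


if __name__ == "__main__":
    # Hypothetical timings in seconds: the first runs are slower.
    times = [41.0, 36.5, 35.5, 35.0, 35.5, 34.5, 34.5]
    print(steady_state_mean(times))
```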

CPU Tests: Synthetic

Most of the people in our industry have a love/hate relationship when it comes to synthetic tests. On the one hand, they’re often good for quick summaries of performance and are easy to use, but most of the time the tests aren’t related to any real software. Synthetic tests are often very good at burrowing down to a specific set of instructions and maximizing the performance out of those. Due to requests from a number of our readers, we have the following synthetic tests.

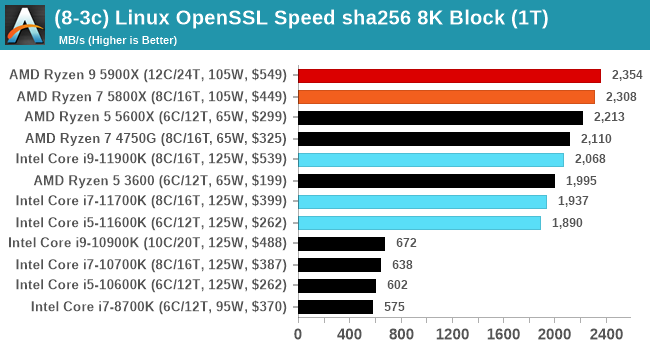

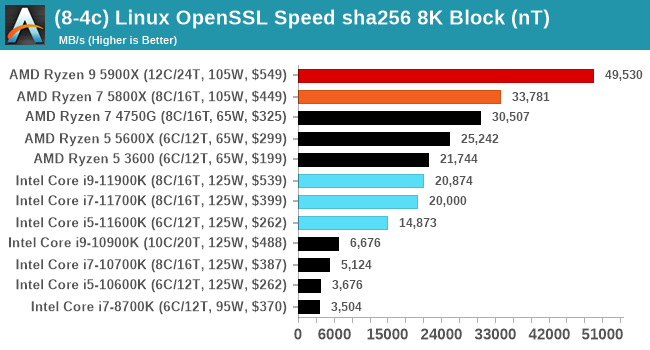

Linux OpenSSL Speed: SHA256

One of our readers reached out in early 2020 and stated that he was interested in looking at OpenSSL hashing rates in Linux. Luckily OpenSSL in Linux has a function called ‘speed’ that allows the user to determine how fast the system is for any given hashing algorithm, as well as signing and verifying messages.

OpenSSL offers a lot of algorithms to choose from, and based on a quick Twitter poll, we narrowed it down to the following:

- rsa2048 sign and rsa2048 verify

- sha256 at 8K block size

- md5 at 8K block size

For each of these tests, we run them in single thread and multithreaded mode. All the graphs are in our benchmark database, Bench, and we use the sha256 results in published reviews.
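To give a feel for what a hashing-rate figure at a fixed block size means, here is a small sketch that measures single-threaded throughput with Python's `hashlib`. It is not the OpenSSL `speed` tool and its numbers are not comparable to our graphs; the function name, the default one-second duration, and the zero-filled buffer are choices made for this example.

```python
import hashlib
import time


def hash_throughput(algorithm="sha256", block_size=8192, duration=1.0):
    """Return bytes hashed per second when digesting one block repeatedly.

    Single-threaded only; a multithreaded figure would need one worker
    per hardware thread, which this sketch does not attempt.
    """
    block = bytes(block_size)  # zero-filled buffer of the chosen size
    count = 0
    start = time.perf_counter()
    deadline = start + duration
    while time.perf_counter() < deadline:
        hashlib.new(algorithm, block).digest()
        count += 1
    elapsed = time.perf_counter() - start
    return count * block_size / elapsed


if __name__ == "__main__":
    rate = hash_throughput("sha256", 8192, 1.0)
    print(f"sha256 @ 8K blocks: {rate / 1e6:.1f} MB/s")
```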

Intel comes back into the game in our OpenSSL sha256 test as the AVX512 helps accelerate SHA instructions. It still isn't enough to overcome the dedicated sha256 units inside AMD.

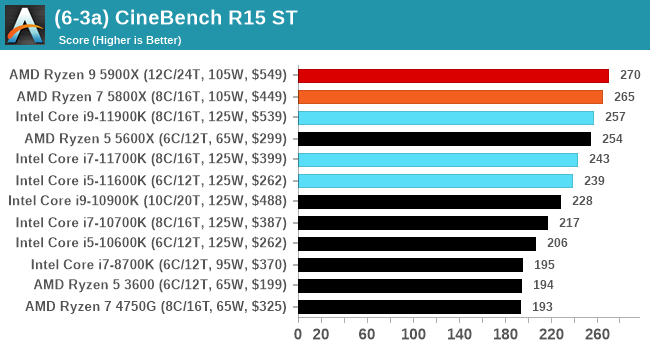

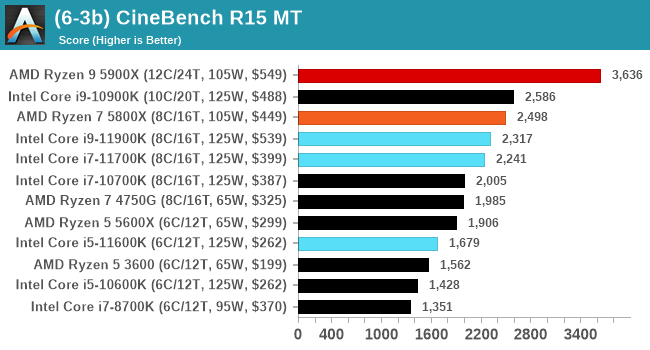

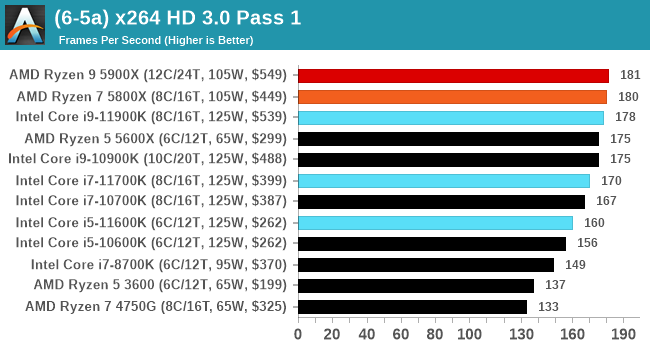

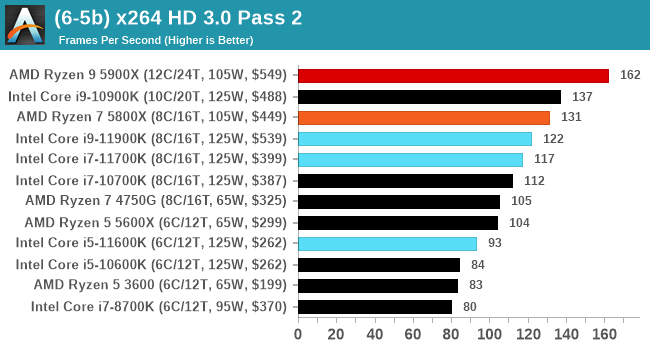

CPU Tests: Legacy and Web

In order to gather data to compare with older benchmarks, we are still keeping a number of tests under our ‘legacy’ section. This includes all the former major versions of CineBench (R15, R11.5, R10) as well as x264 HD 3.0 and the first very naïve version of 3DPM v2.1. We won’t be transferring the data over from the old testing into Bench, otherwise it would be populated with 200 CPUs with only one data point, so it will fill up as we test more CPUs like the others.

The other section here is our web tests.

Web Tests: Kraken, Octane, and Speedometer

Benchmarking using web tools is always a bit difficult. Browsers change almost daily, and the way the web is used changes even quicker. While there is some scope for advanced computational based benchmarks, most users care about responsiveness, which requires a strong back-end to work quickly to provide on the front-end. The benchmarks we chose for our web tests are essentially industry standards – at least once upon a time.

It should be noted that for each test, the browser is closed and re-opened anew with a fresh cache. We use a fixed Chromium version for our tests with the update capabilities removed to ensure consistency.

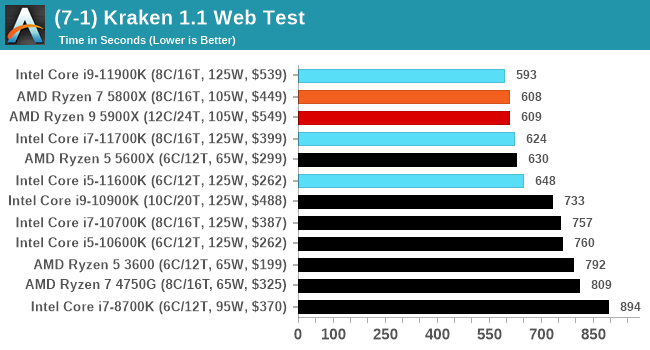

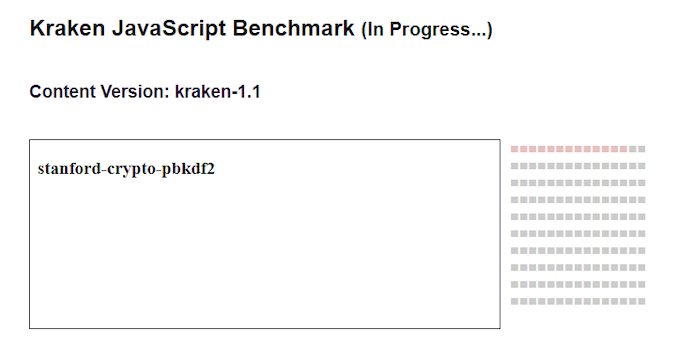

Mozilla Kraken 1.1

Kraken is a 2010 benchmark from Mozilla and does a series of JavaScript tests. These tests are a little more involved than previous tests, looking at artificial intelligence, audio manipulation, image manipulation, json parsing, and cryptographic functions. The benchmark starts with an initial download of data for the audio and imaging, and then runs through 10 times giving a timed result.

We loop through the 10-run test four times (so that’s a total of 40 runs), and average the four end-results. The result is given as time to complete the test, and we’re reaching a slow asymptotic limit with regard to the highest IPC processors.

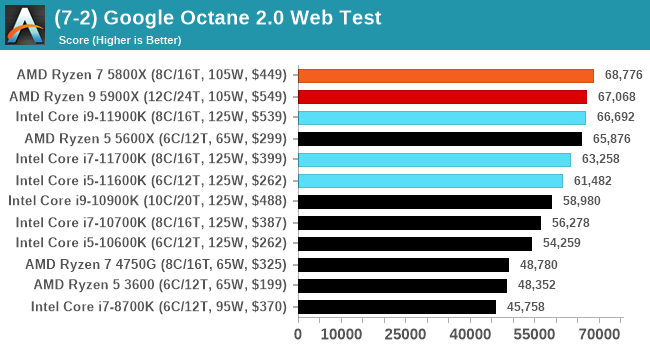

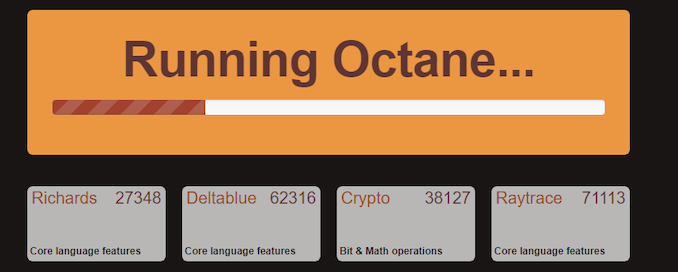

Google Octane 2.0

Our second test is also JavaScript based, but uses a lot more variation of newer JS techniques, such as object-oriented programming, kernel simulation, object creation/destruction, garbage collection, array manipulations, compiler latency and code execution.

Octane was developed after the discontinuation of other tests, with the goal of being more web-like than previous tests. It has been a popular benchmark, making it an obvious target for optimizations in the JavaScript engines. Ultimately it was retired in early 2017 due to this, although it is still widely used as a tool to determine general CPU performance in a number of web tasks.

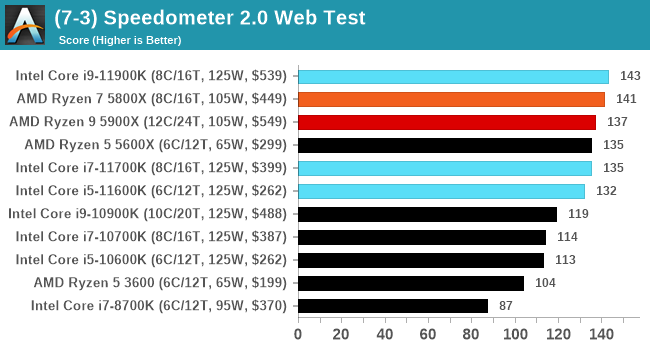

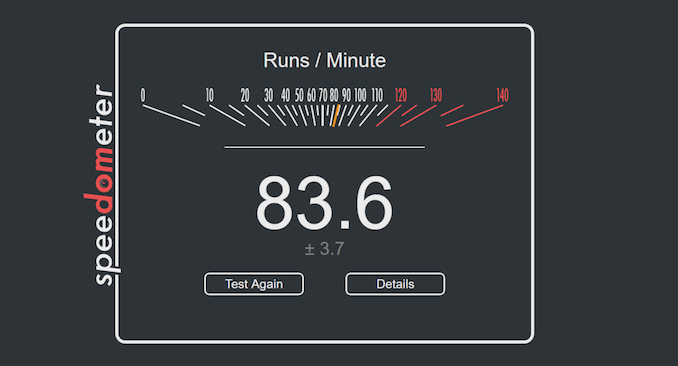

Speedometer 2: JavaScript Frameworks

Our newest web test is Speedometer 2, which is a test over a series of JavaScript frameworks to do three simple things: build a list, enable each item in the list, and remove the list. All the frameworks implement the same visual cues, but obviously apply them from different coding angles.

Our test goes through the list of frameworks, and produces a final score indicative of ‘rpm’, one of the benchmark's internal metrics.

We repeat the benchmark for a dozen loops, taking the average of the last five.

Legacy Tests

279 Comments


blppt - Tuesday, March 30, 2021

I disagree. I had a 9590 (which shipped WITH a small AIO cooler!) and the thing was shaky at best for stability, easily topping 90C at stock settings.

Not the mobo's fault either, I had the top end ASUS CHVF-Z 990FX, which was such a mature chipset it practically had grey hairs.

TheinsanegamerN - Wednesday, March 31, 2021

the 9000 series all had stability issues. Backing off 1 clock bin or tinkering with voltage would usually fix them.

Bulldozer didn't have the thermal density issues modern CPUs have. If you had the cooling, it would work. Bulldozer's issue was the sheer amount of heat being generated would overwhelm many CPU coolers of the time, which were built around the more traditional ~100w power draw of intel I7s and the ~125-140 of phenoms. The 200+ that bulldozer was pulling was new territory.

Oxford Guy - Wednesday, March 31, 2021

Certain motherboard makers played loose with the VRMs. AsRock in particular was known for its 9000-series-certified boards frying. MSI was also bad. Only a few boards were suited to the 9000 series and any enthusiast would have skipped the 9000 series in favor of one of the lower-leakage chips, which could be overclocked to the same 4.7 GHz. 5 GHz with Piledriver was not stable, requiring too much voltage. ASUS tried to hide that by under-reporting the voltage used in its flagship board. 4.4 GHz was optimal, 4.5 was okay, and 4.7 was as far as one wanted to go for frequent use. That's with the lower-leakage 'E' parts.

"The Stilt" said AMD would have sent the 9000 series to the crusher had it not come up with an after-the-fact lower standard for leakage. So, Hruska gets his take spectacularly wrong in his Rocket Lake article. The 9000 series was not aimed at 'the enthusiast faithful'. Those people knew better than to buy a 9000 series chip, even though there were a few astroturfers trying to get people to buy them, like one guy who claimed his was running at 5.1 GHz 24/7.

It was aimed at people who could be tricked by the 5 GHz number. It was the most cynical cash grab possible. Not only did AMD offer only 4 FPU cores (important for gaming), it offered a CPU that was priced into the stratosphere while having un-fixable single-core performance.

Piledriver's fatal flaw was its abysmal single-thread performance, not its power consumption. It could have been okay enough with the lower-leakage standard (and a more strict socket standard as Zen 1 had). But, reportedly, the 32nm SOI wasn't very good for some time (Bulldozer and the first generation of Piledriver), so AMD let the AM3+ spec be pretty loose (although not as loose as FM).

Overclocking Piledriver even to 5 GHz wasn't enough to give it decent single-thread performance.

I do have to agree that the 9590 was the single worst consumer CPU product ever released. It even edges out the Pentium III that wasn't stable — since that one was actually pulled from the market. Not only was the 9590 100% cynical exploitation of consumer ignorance, it was really bad technologically. Figures that Hruska would praise it.

(If, though, one lived in Iceland with a solar array backed by an iron-nickel battery complex, the 9590 would have been okay for playing Deserts of Kharak, provided one didn't buy it at its original price.)

blppt - Thursday, April 1, 2021

"Those people knew better than to buy a 9000 series chip, even though there were a few astroturfers trying to get people to buy them, like one guy who claimed his was running at 5.1 GHz 24/7."

What is especially sad here is that even IF he managed to pump the 250-300W into that 9590 to run at 5.1 (all cores), it was probably still slower than a 4790K at stock speeds.

Oxford Guy - Saturday, April 3, 2021

In single core, certainly. However, 2011 is stamped onto the spreaders of Piledriver and it hit the market in 2012. The 4790K hit the market in Q2 2014.

In 2014, the only FX to consider was the 8320E. Not only was it cheap (at least at MicroCenter), it could run in any AM3+ board without killing it, and could be overclocked better than a 9000 series with anything below nitrogen, due to its much superior leakage.

The 8320E was the only FX worth anyone’s time. Paired with a UD3P board it could do 4.4 GHz readily and could manage 4.7 with a fast fan angled at the VRM sink. Total cost was very low for the CPU and board from MicroCenter, which is why I recommended that setup to the tightest budget people. But, the bad single core was a problem for frametime consistency.

AMD should have been publicly tarred and feathered by the tech press for the 9590. All the light mockery wasn’t enough.

Spunjji - Friday, April 9, 2021

Broadly agreed, but I'd note that the 6300 was also reasonable if you were on a painfully low budget. I suggested it to a friend (his alternative was a Sandy Bridge i3) and it lasted him until a year back as his main gaming system. It's now moved on to another friend, who still uses it for games. Those chips have aged surprisingly well, all things considered, though it is probably holding his RX 470 back a little bit.

Oxford Guy - Wednesday, March 31, 2021

• The 9590 posted the highest results in the game Deserts of Kharak, in a dual 980 Ti setup at only 1080 or 1440. And, SLI setups showed competitive 4K scores for many games back then.

• The overclocker 'The Stilt' said the 9000 series is not the chip to judge the design by because it has the worst leakage characteristics and would have been sent to the crusher had AMD not decided to create a lower standard after the fact. Instead, the chips that should be used to represent Piledriver are the 'E' series. They have the lowest leakage and can manage the same 4.7 GHz the 9590 uses with much more reasonable (although still non-competitive) demands. The 9000 series was really AMD's gift to Intel, by making the bad ancient Piledriver design look much worse.

• AMD was a small cash-strapped company, thanks to Intel's monopoly abuses. When AMD was leading the x86 industry Intel kept it from getting the profit. So, Piledriver, although very bad in a number of ways, will never be as bad as Rocket Lake. The 9000 series is the only exception, though, since it was a purely cynical cash grab by AMD, using '5 GHz' to sucker people.

blppt - Thursday, April 1, 2021

"The 9590 posted the highest results in the game Deserts of Kharak, in a dual 980 Ti setup at only 1080 or 1440. And, SLI setups showed competitive 4K scores for many games back then."

As I stated, in the (exceedingly rare) case where a game or app can saturate all 8 cores, when the 9590 was in its prime, it could be competitive.

That almost never happened, especially in games. About the only 2 I can think of offhand that could do that in the 9590's prime was GTA5 and Company of Heroes 2. And even then, you were using 150+ more watts to get the same or slightly better performance than Intel's high-end quad cores. Along with the required AIO water cooling and required high-end mobo with a beastly VRM setup. As far as I know, only 3 pricey mobos were approved for the 9590, my CHVF-Z, one Gigabyte board, and an ASRock.

9590 was one of the worst cpus ever. Probably the single worst (special edition) cpu. I had one for years.

This rocket lake, while disappointing, hot, and power consuming, is consistently competitive in every game versus its direct competitors. The 9590 cannot come close to saying that.

Oxford Guy - Saturday, April 3, 2021

I cite Deserts of Kharak because it’s the only game I’ve seen put the FX ahead of Intel at below 4K.

Not only would the game need to be able to leverage 8 integer cores without needing more than 4 FPU cores, it would have to be able to saturate a narrow deep pipeline and not rely heavily on single thread IPC. It should also scale with clock and not need the best RAM and L3 performance. RTS is probably the best genre for the Piledriver design.

Gondalf - Tuesday, March 30, 2021

AMD FX-9590 had not AVX-512. Very high performance has a cost.

Try to imagine Zen 3 with AVX-512, it could not be a champion in low power consumption at all.

If you do not like high power draw, simply disable AVX-512.