Intel Rocket Lake (14nm) Review: Core i9-11900K, Core i7-11700K, and Core i5-11600K

by Dr. Ian Cutress on March 30, 2021 10:03 AM EST

Posted in: CPUs, Intel, LGA1200, 11th Gen, Rocket Lake, Z590, B560, Core i9-11900K

Power Consumption: AVX-512 Caution

I won’t rehash the full ongoing issue with how companies report power vs TDP in this review. We’ve covered it a number of times before, but in a quick sentence: Intel uses one published value for sustained performance and an unpublished ‘recommended’ value for turbo performance, the latter of which is routinely ignored by motherboard manufacturers. Most high-end consumer motherboards also ignore the sustained value, often 125 W, and allow the CPU to consume as much as it needs, with the real limits being the power consumption at full turbo, the thermals, or the power delivery.

One of the dimensions of this we don’t often talk about is that the power consumption of a processor always depends on the actual instructions running through the core. A core can be ‘100%’ active while sitting around waiting for data from memory or doing simple addition; however, a core has multiple ways to run instructions in parallel, with the most complex instructions consuming the most power. This became noticeable in the desktop consumer space when Intel introduced vector extensions, AVX, to its processor design. The subsequent introduction of AVX2 and AVX-512 means that running these instructions draws the most power.

AVX-512 comes with its own discussion, because even going into an ‘AVX-512’ mode causes additional issues. Intel’s introduction of AVX-512 on its server processors showed that in order to remain stable, the core had to reduce its frequency and increase its voltage, while also pausing to enter the special AVX-512 power mode. This made AVX-512 suitable only for heavily optimized high-performance server code. But now Intel has enabled AVX-512 across its product line, from notebook to enterprise, with the aim of running AI code faster and enabling new use cases. We’re also a couple of generations on from then, and AVX-512 doesn’t take quite the same hit as it did, but it still requires a lot of power.

For our power benchmarks, we’ve taken several tests that represent a real-world compute workload, a strong AVX2 workload, and a strong AVX-512 workload.

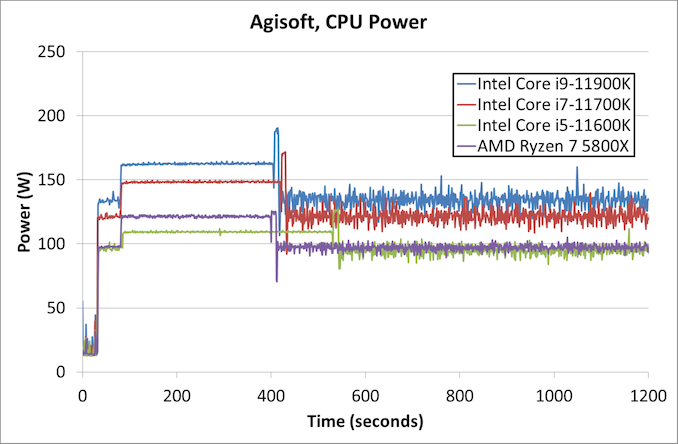

Starting with the Agisoft power consumption, we’ve truncated it to the first 1200 seconds as after that the graph looks messy. Here we see the following power ratings in the first stage and second stage:

- Intel Core i9-11900K (1912 sec): 164 W dropping to 135 W

- Intel Core i7-11700K (1989 sec): 149 W dropping to 121 W

- Intel Core i5-11600K (2292 sec): 109 W dropping to 96 W

- AMD Ryzen 7 5800X (1890 sec): 121 W dropping to 96 W

So in the heavy second section of the benchmark, the AMD processor is joint-lowest on power, matching the Core i5-11600K at 96 W, and is the quickest to finish. In the more lightly threaded first section, AMD is still saving around 25% of the power compared to the big Core i9.
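The percentages behind that comparison can be checked with a short calculation. This is a minimal sketch using only the figures listed above; the helper names are hypothetical, and the energy bounds rest on an assumption that is not in the review, namely that package power stays between the two quoted stage values for the whole run (the graph itself is described as messy after 1200 seconds, so treat those bounds as rough).

```python
# Figures quoted in the list above: (runtime in s, first-stage W, second-stage W).
AGISOFT = {
    "Core i9-11900K": (1912, 164, 135),
    "Core i7-11700K": (1989, 149, 121),
    "Core i5-11600K": (2292, 109, 96),
    "Ryzen 7 5800X": (1890, 121, 96),
}


def power_saving_pct(baseline_w, other_w):
    """Percentage by which other_w is lower than baseline_w."""
    return 100.0 * (baseline_w - other_w) / baseline_w


def energy_bounds_kj(runtime_s, high_w, low_w):
    """Rough energy bounds in kJ.

    ASSUMPTION (not from the review): power stays between low_w and high_w
    for the entire runtime, so energy lies between the two products.
    """
    return runtime_s * low_w / 1000.0, runtime_s * high_w / 1000.0


if __name__ == "__main__":
    _, i9_first, i9_second = AGISOFT["Core i9-11900K"]
    _, amd_first, amd_second = AGISOFT["Ryzen 7 5800X"]
    print(f"First stage saving vs Core i9:  {power_saving_pct(i9_first, amd_first):.1f}%")
    print(f"Second stage saving vs Core i9: {power_saving_pct(i9_second, amd_second):.1f}%")
    for name, (runtime_s, high_w, low_w) in AGISOFT.items():
        low_kj, high_kj = energy_bounds_kj(runtime_s, high_w, low_w)
        print(f"{name}: roughly {low_kj:.0f} to {high_kj:.0f} kJ")
```

Because runtime and power both feed into energy, a chip that is both faster and lower power, as the Ryzen 7 is against the Core i9 here, comes out ahead on both bounds.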

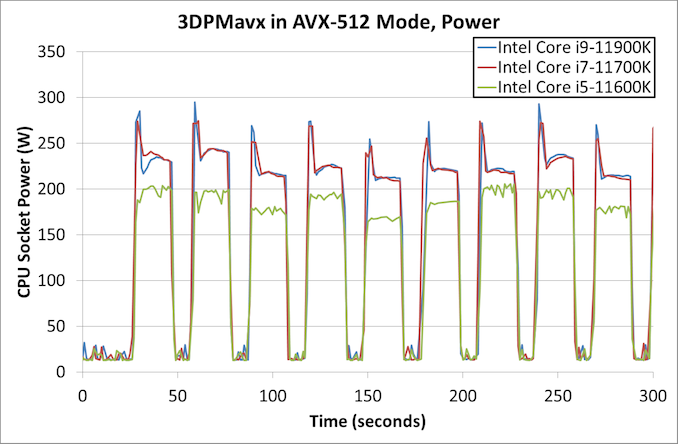

One of the big takeaways from our initial Core i7-11700K review was the power consumption under AVX-512 modes, as well as the high temperatures. Even with the latest microcode updates, both our Core i9 and Core i7 parts draw lots of power.

The Core i9-11900K in our test peaks at 296 W, showing temperatures of 104°C, before coming back down to ~230 W and dropping to 4.5 GHz. The Core i7-11700K is still showing 278 W in our ASUS board, with temperatures of 103°C, and after the initial spike we see 4.4 GHz at the same ~230 W.

The Core i5-11600K, with fewer cores, gets a respite here. Our peak power numbers are around 206 W, with the workload showing no initial spike and staying around 4.6 GHz. Peak temperatures were at the 82°C mark, which is very manageable. During AVX2, the i5-11600K was only at 150 W.
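To put those AVX-512 figures in proportion, here is a small sketch of the relative changes. It uses only the wattages quoted above and treats the ~230 W values as exact for the sake of the arithmetic; the dictionary layout and function name are illustrative.

```python
# Package power figures (W) quoted in the text for the AVX-512 test.
# The "settled" values are given as approximately 230 W in the review.
AVX512_POWER = {
    "Core i9-11900K": {"peak_w": 296, "settled_w": 230},
    "Core i7-11700K": {"peak_w": 278, "settled_w": 230},
}

# Core i5-11600K: quoted AVX-512 peak and quoted AVX2 figure.
I5_AVX512_PEAK_W = 206
I5_AVX2_W = 150


def pct_change(old, new):
    """Signed percentage change going from old to new."""
    return 100.0 * (new - old) / old


if __name__ == "__main__":
    for name, power in AVX512_POWER.items():
        drop = pct_change(power["peak_w"], power["settled_w"])
        print(f"{name}: {drop:.1f}% from peak to settled power")
    uplift = pct_change(I5_AVX2_W, I5_AVX512_PEAK_W)
    print(f"Core i5-11600K: {uplift:+.1f}% going from AVX2 to AVX-512")
```

On these numbers, the two eight-core parts shed roughly a fifth of their peak power after the initial spike, while the six-core part draws a little over a third more under AVX-512 than under AVX2.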

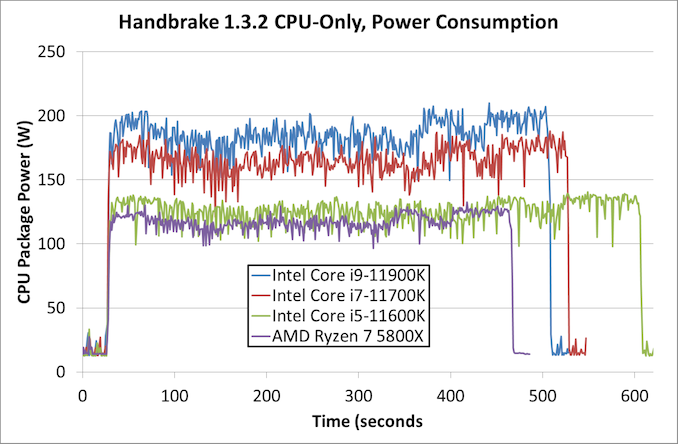

Moving to another real-world workload, here’s what the power consumption looks like over time for Handbrake 1.3.2 converting an H.264 1080p60 file into a HEVC 4K60 file.

This is showing the full test, and we can see that the higher performance Intel processors do get the job done quicker. However, the AMD Ryzen 7 processor is still the lowest power of them all, and finishes the quickest. By our estimates, the AMD processor is twice as efficient as the Core i9 in this test.

Thermal Hotspots

Rocket Lake seems to peak at 104°C, and here’s where we get into a discussion about thermal hotspots.

There are a number of ways to report CPU temperature. We can either take the instantaneous value of a single spot of the silicon while it’s going through a high current density event, like compute, or we can consider the CPU as a whole with all of its thermal sensors. While the overall CPU might accept operating temperatures of 105°C, individual elements of the core might actually reach 125°C instantaneously. So what is the correct value, and what is safe?

The cooler we’re using on this test is arguably the best air cooling on the market: a 1.8 kilogram full copper ThermalRight Ultra Extreme, paired with a 170 CFM high static pressure fan from Silverstone. This cooler has been used for Intel’s 10-core and 18-core high-end desktop variants over the years, even the ones with AVX-512, and has not skipped a beat. Because we’re seeing 104°C here, are we failing in some way?

Another issue we’re coming across with new processor technology is the ability to effectively cool a processor. I’m not talking about cooling the processor as a whole, but more about those hot spots of intense current density. We are going to get to a point where we can’t remove the thermal energy fast enough, or with this design, we might be there already.

I will point out an interesting fact down this line of thinking though, which might go unnoticed by the rest of the press: Intel has reduced the total vertical height of the new Rocket Lake processors.

The z-height, or total vertical height, of the previous Comet Lake generation was 4.48-4.54 mm. This number was taken from a range of 7 CPUs I had to hand. However, this Rocket Lake processor is over 0.1 mm shorter, at 4.36 mm. The smaller height of the package plus heatspreader could be a small indicator of the required thermal performance, especially if the gap (filled with solder) between the die and the heatspreader is smaller. If it aids cooling and doesn’t disturb how coolers fit, then great; however, at some point in the future we might have to consider different, better, or more efficient ways to remove these thermal hotspots.

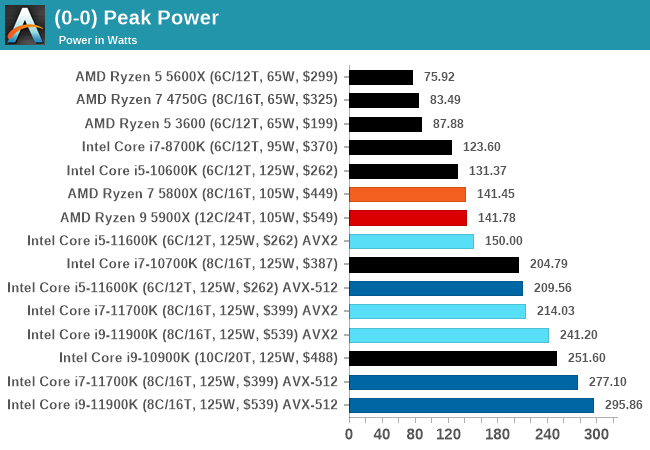

Peak Power Comparison

For completeness, here is our peak power consumption graph.

Platform Stability: Not Complete

It is worth noting that in our testing we had some issues with platform stability with our Core i9 processor. Personally, across two boards and several BIOS revisions, I would experience BSODs in high memory use cases. Gavin, our motherboard editor, was seeing lockups during game tests with his Core i9 on one motherboard, but it worked perfectly with a second. We’ve heard of other press seeing lockups, with one person going through three motherboards to find stability. Conversations with an OEM revealed they had a number of instability issues running at default settings with their Core i9 processors.

The exact nature of these issues is unknown. One of my systems refused to POST with 4x32 GB of memory, and would only do so with 2x32 GB. Some of our peers that we’ve spoken to have had zero problems with any of their systems. For us, our Core i7 and Core i5 were absolutely fine. I have a second Core i9 processor here which is going through stability tests as this review goes live, and it seems to be working so far, which might indicate that it is a silicon/BIOS issue, not a memory issue.

Edit: As I was writing this, the second Core i9 crashed and restarted to desktop.

We spoke to Intel about the problem, and they acknowledged our information, stating:

We are aware of these reports and actively trying to reproduce these issues for further debugging.

Some motherboard vendors are only today putting out updated BIOSes for Intel’s new turbo technology, indicating that (as with most launches) there’s a variety of capability out there. Seeing some of the comments from other press in their reviews today, we’re sure this isn’t an isolated incident; however we do expect this issue to be solved.

279 Comments


macakr - Tuesday, March 30, 2021 - link

really? that bad? I can get that on a 15w Ryzen 4700u!

Slash3 - Tuesday, March 30, 2021 - link

The 4700u mobile APU has a much stronger iGPU core, similar to that of Rocket Lake.

Alistair - Wednesday, March 31, 2021 - link

Yeah it is that bad. Generally if you keep the resolution at 900p or 720p (or 50 percent scaling of 1080p, which is ~768p) the performance is ok. But it falls off dramatically at 1080p. No linear scaling here. Basically it is MUCH worse than laptop parts. I have DDR3600C16 so was expecting better. Oh well.

Runeterra was barely playable at 1440p, just a basic card game, but the FPS shoots up dramatically at 1080p or lower, so that's fine. Would be nice to play Hearthstone and Runeterra with integrated graphics one day...

Tom Sunday - Thursday, April 8, 2021 - link

I am getting on the years and like to finally replacing my 13-year old Dell XPS 730x. Its time after being forced to replacing (3-times) PSU's, Motherboards, AIOs, GPU's and RAM. The new Intel i5 11600K holds interest. Will the 'integrated graphics' be good enough for just browsing the Net and watching old western or war movies on utube and with not doing any gaming? How good is the IGPU in this regard? Once I have more money I can hopefully buy a used discrete GPU 'over the table' next year at the local computer show? Will probably have my new system cobbled together by the local stripcenter PC shop and by one of the Bangladesh boys. So it will be good to sound somewhat intelligent discussing the hardware and not being pushed into what is cheap and in stock that day. Thoughts?

Spunjji - Friday, April 9, 2021 - link

The iGPU on Rocket Lake will be fine for those purposes. However, so would the iGPU on the cheaper Comet Lake processors out there - they may be a better (cheap) option if you're going to buy now and upgrade later.

Another option would be to go for a system based around the AMD Ryzen 5 3600 and re-use an existing GPU, which would also give you the option to upgrade the CPU again to something like a 5800X or even 5900X later. Personally, I'd go with that approach.

0ldman79 - Friday, April 16, 2021 - link

The integrated GPU is fine for movies and web.

I've got a Skylake laptop with GTX 960M, it uses the iGPU until I fire up a game.

The h264 and h265 playback are accelerated through the iGPU, barely draws any power at all for video playback. The screen draws all the power. It'll play back 1080P 60 h264 or h265 all day long at under 2W. There are no issues using it for the web or anything else using integrated, it'll even play some games at lower settings, roughly 1/4 of a 750 Ti (960M) in gaming, though the newer chips will be slightly better.

Alexvrb - Tuesday, March 30, 2021 - link

Vega 11 is actually a bit slower than the latest 8 CU Vega found in Renoir/Cezanne. Not enough to catch up to Iris Xe, I don't think... but impressive given the smaller GPU and same power (or better). That's still GCN, too. If they release an APU with a ~10 CU RDNA2 GPU, it should give them a substantial boost... as long as bandwidth doesn't cripple it. Next gen memory should help, but they might also integrate a chunk of Infinity Cache. It has proven effective on larger RDNA2 siblings, giving them good performance with a relatively narrow memory bus.

Oxford Guy - Wednesday, March 31, 2021 - link

Good ole iGPU distraction.

How about the most important stuff? How about having it appear on the first page?

• performance per watt

• performance per decibel

Apples-to-apples comparison, which means the same CPU cooler in the same case for Intel and AMD.

That is important, not this obsession over a pointless sort-of GPU.

Jezwinni - Saturday, April 3, 2021 - link

I agree the iGPU is a distraction, but disagree on what declare the important things.

Personally the performance for the price is the important thing.

Any extra power draw isn't going to blow up my PSU, make my electricity bills unmanageable, or save the world.

Why you consider the performance per watt most important?

0ldman79 - Friday, April 16, 2021 - link

Performance per watt on iGPU only matters in mobile devices, even then it's barely measurable.

The iGPU is only going to pull 10W max, normally they peak around half that.