Understanding the Cell Microprocessor

by Anand Lal Shimpi on March 17, 2005 12:05 AM EST - Posted in CPUs

High Level Overview of Cell

Cell is just as much of a multi-core processor as the upcoming multi-core CPUs from AMD and Intel, the only difference being that Cell's architecture doesn't have an entirely homogeneous set of cores.

Cell's Execution Cores

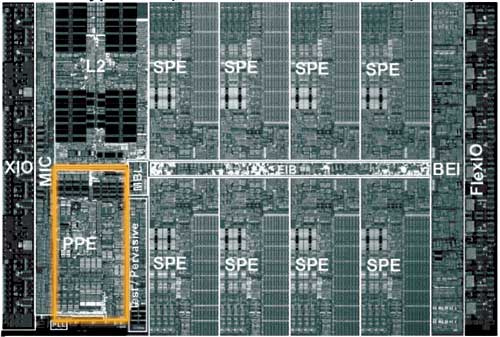

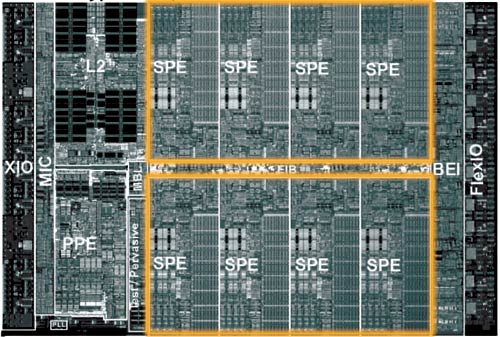

The Cell architecture debuted in a configuration of 9 independent cores: one PowerPC Processing Element (PPE) and eight Synergistic Processing Elements (SPEs). The PPE and SPEs are obviously different, but all eight SPEs are identical to one another.

The PPE is IBM's major contribution to the Cell project; it also appears to be very similar to the core being used in the next Xbox console.

The PPE has a 64KB L1 cache and a 512KB L2 cache and supports SMT, similar to Intel's Hyper-Threading. The PPE is a strictly in-order core, something the desktop x86 market hasn't seen since the death of the original Pentium (the Pentium Pro brought out-of-order execution to the x86 market), so the move to an in-order core is an interesting one. The PPE is also only a 2-issue core, meaning that, at best, it can execute two instructions simultaneously. For comparison, the Athlon 64 is a 3-issue core, so immediately, you get the sense that the PPE is a much simpler core than anything that we have on the desktop. IBM's VMX instruction set (aka Altivec) is also supported by the PPE. Much like the rest of the Cell processor, the PPE is designed to run at very high clock speeds.

There's not much that's impressive about the PPE, other than that it's a small, fast, efficient core. Put up against a Pentium 4 or an Athlon 64, the PPE would undoubtedly lose, but the PPE's architecture is one answer to a shift in the performance paradigm. Performance in business/office applications requires a very powerful, very fast general purpose microprocessor, but performance in a game console, for example, does not. The original Xbox used a modified Intel Celeron processor running at 733MHz, while the fastest desktops of the time had 2.0GHz Pentium 4s and 1.60GHz Athlon XPs. Given that the first implementation of Cell is supposed to be Sony's Playstation 3, the simplicity of the PPE is not surprising. Should Cell ever make its way into a PC, the PPE would definitely have to be beefed up, or at least paired with multiple other PPEs.

The majority of the Cell's die is composed of the eight Synergistic Processing Elements (SPEs). If you consider the PPE to be a general purpose microprocessor, think of the SPEs as general purpose processors with a slightly more specific focus.

The SPEs have no branch predictor, meaning that they rely solely on software branch prediction. There are ways that the compiler can avoid branches, and the SPE architecture lends itself very well to things like loop unrolling. Any elementary programmer is familiar with a loop, where one or more lines of code are repeated until a certain condition is met. The checking of that condition (e.g. i < 100) often results in a branch, so one way of removing that branch is simply to unroll the loop. If you have a statement in a loop that is supposed to execute 100 times, you could either keep it in the loop and execute it that way, or you could remove the loop and simply copy the statement 100 times. The end result is the same - the only difference is that in one case, you have a branch condition, while the other case results in more lines of code to execute.
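To make the idea concrete, here is a minimal sketch in plain C, not SPE-specific code. The function names `sum_rolled` and `sum_unrolled4` are made up for illustration, and the unroll factor of 4 is an arbitrary choice. In practice, a compiler would normally perform this transformation itself.

```c
#include <assert.h>
#include <stddef.h>

/* Rolled loop: the condition (i < n) is checked once per element,
   so there is one potential branch per element. */
long sum_rolled(const int *a, size_t n) {
    assert(a != NULL || n == 0);
    long sum = 0;
    for (size_t i = 0; i < n; i++) {
        sum += a[i];
    }
    return sum;
}

/* Unrolled by 4 (illustrative factor): the loop condition is checked
   once per four elements, at the cost of more lines of code. */
long sum_unrolled4(const int *a, size_t n) {
    assert(a != NULL || n == 0);
    long sum = 0;
    size_t i = 0;
    for (; i + 4 <= n; i += 4) {
        sum += a[i];
        sum += a[i + 1];
        sum += a[i + 2];
        sum += a[i + 3];
    }
    /* Cleanup loop for the 0 to 3 elements left over. */
    for (; i < n; i++) {
        sum += a[i];
    }
    return sum;
}
```

Both functions return the same result for any input; the unrolled version simply evaluates the loop condition roughly a quarter as often.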

The problem with loop unrolling is that you need a large number of registers to unroll some loops, which is one reason that each SPE has 128 registers. Originally, the SPEs were supposed to use the VMX (Altivec) ISA, but because of a need for more than 32 architectural registers, the SPEs implemented a new ISA with support for 128 registers.
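The link between unrolling and register count can be sketched as follows. This is an illustrative C example, not code from the Cell toolchain: the function name `sum_unrolled4_acc` is hypothetical, and whether each accumulator actually lives in its own register is up to the compiler. The point is only that every independent value kept alive across the unrolled body wants a register of its own.

```c
#include <assert.h>
#include <stddef.h>

/* Unrolled by 4 with four independent accumulators. Each of s0..s3
   (plus the index and the array pointer) is live for the whole loop,
   so a deeper unroll needs proportionally more registers. */
long sum_unrolled4_acc(const int *a, size_t n) {
    assert(a != NULL || n == 0);
    long s0 = 0, s1 = 0, s2 = 0, s3 = 0;
    size_t i = 0;
    for (; i + 4 <= n; i += 4) {
        s0 += a[i];
        s1 += a[i + 1];
        s2 += a[i + 2];
        s3 += a[i + 3];
    }
    /* Fold leftover elements into the first accumulator. */
    for (; i < n; i++) {
        s0 += a[i];
    }
    return s0 + s1 + s2 + s3;
}
```

Because the four additions in the loop body do not depend on one another, a 2-issue in-order core has independent work to pair up, which is one reason unrolling and a large register file go together.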

Each SPE is only capable of issuing two instructions per clock, meaning that at best, each SPE can execute two instructions at the same time. The issue width of a microprocessor can determine a big part of how large the microprocessor will be; for example, the Itanium 2 is a 6-issue core, so being a 2-issue core makes each SPE significantly smaller than most general purpose microprocessors.

In the end, what we see with the SPEs is that they sacrifice some of the normal tricks to improve ILP in favor of being able to cram more SPEs onto a single die, effectively sacrificing some ILP for greater TLP. Given the direction that the industry is headed, a move to a very TLP centric design makes a lot of sense, but at the same time, it will be quite dependent on developers adhering to very specific development models.
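The trade-off can be put in rough numbers using only the issue widths quoted above. The helper below is a hypothetical illustration, and the figures it produces are best-case upper bounds on instructions issued per clock, not measured performance.

```c
#include <assert.h>

/* Best-case instructions issued per clock across a set of identical
   cores. This is an upper bound that assumes every core is kept busy
   on every cycle. */
unsigned peak_issue_per_clock(unsigned cores, unsigned issue_width) {
    assert(cores > 0 && issue_width > 0);
    return cores * issue_width;
}
```

With eight 2-issue SPEs, `peak_issue_per_clock(8, 2)` gives 16, and adding the 2-issue PPE brings the chip to 18, compared with 6 for a single 6-issue core such as the Itanium 2. Reaching anything close to that bound depends on software supplying enough independent threads, which is exactly the dependence on development models described above.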

Clearly, the architects of Cell saw the SPEs as being used to run a highly parallelizable workload. As Derek Wilson mentioned in his article about AGEIA's PhysX PPU:

"One of the properties of graphics that made the feature a good fit for a specialized processor inside a PC is the fact that the task is infinitely parallelizable. Hundreds of thousands, and even millions of pixels, need to be processed every frame. The more detailed a rendering needs to be, the more parallel the task becomes. The same is true with physics. As with the visual world, the physical world is continuous rather than discrete. The more processing power we have, the more things we can simulate at once, and the more realistically we can approximate the real world."

With NVIDIA supplying some form of a GPU for Playstation 3, Cell's array of SPEs has one definite purpose in a gaming console - physics and AI processing. Many have argued that the array of SPEs seems capable of taking over the pixel processing workload of a GPU, but for a high performance console, that's not much of an option. The SPE array could offer better CPU-based 3D rendering, but it would be a tough sell (no pun intended) for this array of SPEs to be the end of dedicated GPU hardware.

70 Comments

WishIKnewComputers - Thursday, March 17, 2005 - link

Well, I don't really see the Cell 'breaking' in any way. Between being in the PS3, IBM servers/supercomputers, and Sony and Toshiba electronics, the chip will be all over the place.

As for it showing up in PCs... no, it won't happen anytime soon, but I really don't think it's intended to at this point. Workstations and Playstations are its main concern, and smartly so. The Cell in its first generation isn't cut out for superior general tasking, obviously, but when those things start pumping out (and they will... the PS2 has sold what, 80 million units?), there will likely be different and more advanced versions. And if some of those are changed for enhanced general purposing somehow or another, then they could have a shot at entering the PC world. As for taking on Intel, though... I don't think IBM is even considering that. If I had to guess, if they wanted to be in a PC, they would have OS X adapted to Cell and IBM would have these things in Apples.

But no matter which way they go, is it me or does IBM seem light-years ahead of Intel? After looking at Intel's future plans, it seems that they are trying to move towards what IBM is doing now. So is the Cell a processor just ahead of its time, or has Intel just gotten behind?

AnnihilatorX - Thursday, March 17, 2005 - link

This article is seriously a kill for a child like me. I appreciate it though. Well done Anandtech

ravedave - Thursday, March 17, 2005 - link

I can't wait to see what developers think of the Cell & the SDKs for it. I have a feeling that's what will kill the Cell or make it successful.

microbrew - Thursday, March 17, 2005 - link

"System on a Chip (SoC)"

What will make or break the Cell is the tools available, especially the operating system and libraries.

I would like to see what they're doing in terms of marketing the chip to consumer electronics, telecom, military and other embedded applications. I could see the Cell as a viable alternative to the usual mixtures of PowerPCs, ARMs and DSPs.

I also agree with Final Words; I don't see the Cell breaking into the consumer PC market any time soon either.

Locut0s - Thursday, March 17, 2005 - link

#17 Yeah that was a bit too harsh I agree.

Eug - Thursday, March 17, 2005 - link

I'm just wondering how well a dual-core PPE-based 4+ GHz chip would do in general purpose (desktop) code.

And I also wonder how cool/hot such a chip would be. The Xbox 2's CPU is probably a 3-core PPE, but it runs at 3 GHz, and we don't have power specs for it anyway.

Filibuster - Thursday, March 17, 2005 - link

#11 (well, everyone should if they haven't before) read the Arstechnica article on PS2 vs PC - static applications vs dynamic media. Cell is taking it to the next level.

http://arstechnica.com/articles/paedia/cpu/ps2vspc...

Very nice article Anand!

Googer - Thursday, March 17, 2005 - link

Besides a release date, is there any news or knowledge of a Linux Kit for Playstation 3 like there was for PS2? Does anyone KNOW OF either?

Illissius - Thursday, March 17, 2005 - link

Damn. Awesome article. If I hadn't known the site and author beforehand, I would've guessed Ars and Hannibal. Seems he isn't the only one with a talent for these kinds of articles ;)

You should do more of them.

scrotemaninov - Thursday, March 17, 2005 - link

#22: This is just a guess so don't rely on this. The POWER5 has 2-way SMT. Each cycle it fetches 8 instructions from the L1I cache. All instructions fetched per cycle are for the same thread so it alternates (round robin). It also has capabilities for setting the thread priority so that you effectively run with 1 thread and it just fetches 8 instructions per cycle for the one running thread.

I would expect the PPE to be similar to this, fetching 2 instructions for the same thread each cycle. The POWER5 has load balancing stuff in there too - if one thread keeps missing in L2 then the other thread gets more instructions decoded in order to keep the CPU functional unit utilisation up. I've no idea whether this kind of stuff has made it over into the PPE. I'd be a little surprised if it has, especially seeing as this is in-order anyway, so it's not like you're going to be aiming for high utilisation rates.