64 Cores of Rendering Madness: The AMD Threadripper Pro 3995WX Review

by Dr. Ian Cutress on February 9, 2021 9:00 AM EST. Posted in: CPUs, AMD, Lenovo, ThinkStation, Threadripper Pro, WRX80, 3995WX

CPU Tests: Microbenchmarks

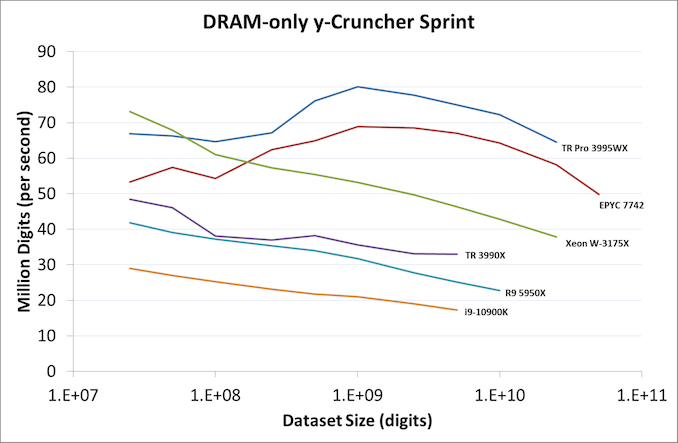

A y-Cruncher Sprint

The y-cruncher website hosts a large amount of benchmark data showing how different CPUs perform when calculating pi to a given number of digits. Alongside the pi world records, there are results for a few CPUs that show how the hardware scales: the time to compute 25 million digits, then 50 million, 100 million, 250 million, and all the way up to 10 billion, to showcase how the performance scales with digits (assuming everything is in memory). This range of results, from 25 million to 250 billion, is something I’ve dubbed a ‘sprint’.

I have written some code to perform a sprint on every CPU we test. It detects the DRAM, works out the biggest value that can be calculated with that amount of memory, and works upward from 25 million digits. For tests that go up to ~25 billion digits, it adds only an extra 15 minutes to the suite on an 8-core Ryzen CPU. With this test, we can see the effect of increasing memory requirements on the workload, and the scaling factor for a workload such as this.
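The size selection described above can be sketched roughly as follows. This is not the actual in-house code: the `sprint_sizes` name, the `ASSUMED_BYTES_PER_DIGIT` figure, and the idea of passing the installed memory in as a parameter are all assumptions made for illustration. Only the 25 / 50 / 100 / 250 million pattern comes from the text.

```python
from itertools import cycle

# Hypothetical figure: bytes of RAM needed per decimal digit of pi.
# It is NOT taken from the review or from y-cruncher; replace it with a
# measured value before relying on the output.
ASSUMED_BYTES_PER_DIGIT = 5


def sprint_sizes(dram_bytes, start_digits=25_000_000,
                 bytes_per_digit=ASSUMED_BYTES_PER_DIGIT):
    """Return the digit counts (25M, 50M, 100M, 250M, ...) that fit in RAM."""
    max_digits = dram_bytes // bytes_per_digit
    sizes = []
    digits = start_digits
    # Steps of x2, x2, x2.5 repeat to give 25 -> 50 -> 100 -> 250 -> 500 ...
    for numerator, denominator in cycle(((2, 1), (2, 1), (5, 2))):
        if digits > max_digits:
            break
        sizes.append(digits)
        digits = digits * numerator // denominator
    return sizes


if __name__ == "__main__":
    # Example: a system with 64 GiB of memory installed.
    for size in sprint_sizes(64 * 2**30):
        print(f"{size:,} digits")
```

On Linux, the installed memory could be read with `os.sysconf("SC_PAGE_SIZE") * os.sysconf("SC_PHYS_PAGES")`; other platforms need a different query, which is why the sketch takes the value as an argument.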

Longer lines indicate more memory installed in the system at the time

For this sprint, we’ve converted each result into how many million digits are calculated per second at each of the dataset sizes. The more cores a system has, the better the compute, and Intel gets a bonus here as well because the software can use AVX-512. But as the dataset gets larger, there is more shuffling of values back and forth between memory and cache, so keeping bandwidth high and latency low to all cores is crucial in this test, especially as the dataset grows.
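The conversion itself is simple arithmetic. As a minimal sketch, with a hypothetical function name and made-up timings that are not measurements from this review:

```python
def million_digits_per_second(digits, seconds):
    """Convert one sprint result into millions of digits per second."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return digits / seconds / 1e6


if __name__ == "__main__":
    # Hypothetical timings for illustration only, not data from the review.
    example_results = {
        1_000_000_000: 12.5,   # digits: seconds
        10_000_000_000: 160.0,
    }
    for digits, seconds in example_results.items():
        rate = million_digits_per_second(digits, seconds)
        print(f"{digits:,} digits: {rate:.1f} million digits/s")
```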

The 8-channel 64-core TR Pro 3995WX here does very well, peaking at around 80 million digits per second, and it is still very fast at the end of the test. It sits above the EPYC 7742 here because it has a higher TDP and frequency. Both are well above the Threadripper 3990X, which only has quad-channel memory, and that is the reason its performance drops as the dataset grows.

The W-3175X from Intel has the AVX-512 advantage, which is why its 28 cores can compete with the 64 cores from AMD. However, the six-channel memory bandwidth, and probably the mesh, quickly become a bottleneck as each core needs to feed those AVX-512 units. This is the sort of situation where in-package HBM is likely to make a big difference. But at the smaller dataset sizes, at least, the W-3175X can feed enough data across the mesh to the AVX-512 units for peak throughput.
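To put rough numbers on the channel counts discussed above, the sketch below computes theoretical peak DRAM bandwidth and divides it by core count. The channel and core counts follow the text; the memory speeds (DDR4-3200 for the AMD parts, DDR4-2666 for the W-3175X) are assumptions, not figures stated in this section, and sustained bandwidth will be lower than these peaks.

```python
BYTES_PER_TRANSFER = 8  # a 64-bit DDR4 channel moves 8 bytes per transfer


def peak_bandwidth_gbs(channels, mega_transfers_per_s):
    """Theoretical peak DRAM bandwidth in GB/s (10^9 bytes per second)."""
    return channels * mega_transfers_per_s * 1e6 * BYTES_PER_TRANSFER / 1e9


# (name, cores, memory channels, ASSUMED memory speed in MT/s)
SYSTEMS = [
    ("TR Pro 3995WX", 64, 8, 3200),
    ("TR 3990X", 64, 4, 3200),
    ("W-3175X", 28, 6, 2666),
]

if __name__ == "__main__":
    for name, cores, channels, speed in SYSTEMS:
        total = peak_bandwidth_gbs(channels, speed)
        print(f"{name}: {total:.1f} GB/s peak, {total / cores:.2f} GB/s per core")
```

Under these assumptions the 3990X has half the per-core bandwidth of the 3995WX. The W-3175X has more per core than either, but each of its cores also has wider AVX-512 units to keep fed.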

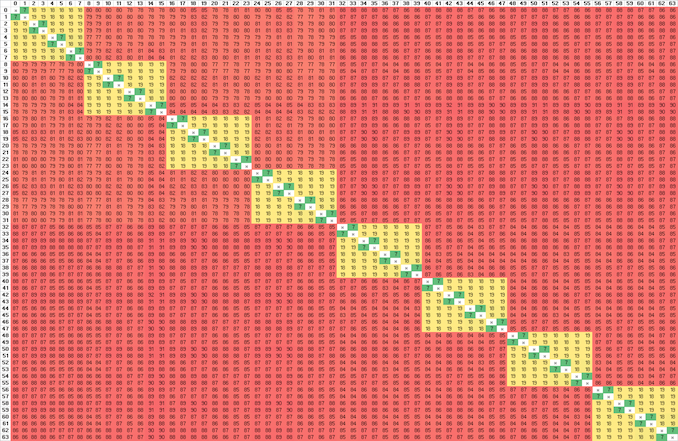

Core-to-Core Latency

As the core count of modern CPUs grows, we are reaching a point where the time to access one core from another is no longer a constant. Even before the advent of heterogeneous SoC designs, processors built on large rings or meshes could have different latencies to access the nearest core compared to the furthest core. This holds true especially in multi-socket server environments.

But modern CPUs, even desktop and consumer CPUs, can have variable access latency to get to another core. For example, in the first generation Threadripper CPUs, we had four chips on the package, each with 8 threads, and each with a different core-to-core latency depending on whether the access was on-die or off-die. This gets more complex with products like Lakefield, which has two different communication buses depending on which core is talking to which.

If you are a regular reader of AnandTech’s CPU reviews, you will recognize our Core-to-Core latency test. It’s a great way to show exactly how groups of cores are laid out on the silicon. This is a custom in-house test built by Andrei, and we know there are competing tests out there, but we feel ours is the most accurate measure of how quickly an access between two cores can happen.

Due to a test limitation, we’re only probing the first 64 threads of the system, but the picture when scaled out to 128 threads would be identical. This generation of Threadripper Pro is built on Zen 2, similar to the Threadripper 3990X and the EPYC 7742, and so we only have quad-core CCXes in play here. A thread speaking to itself has a latency of around 7 nanoseconds, a hop inside a quad-core CCX takes around 18-19 nanoseconds, and accessing any other core varies from 77-89 nanoseconds. Even accessing the other CCX on the same chiplet has that same latency, as the communication is designed to ping out to the central IO die first. If Threadripper Pro gets boosted to Zen 3 for the next generation, this will be a big uplift, as we’ve already seen with Zen 3. But TR Pro with Zen 3 might only be launched when Zen 4 comes out, and we’ll be talking about that difference when that happens.
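The three latency tiers above are what make the core layout visible in the results. As a rough sketch, the snippet below builds a synthetic latency matrix from the midpoints of the quoted ranges and then recovers the CCX groups from it with a simple threshold. It is not the in-house test, which measures real hardware. The function names, the 40 ns threshold, and the assumption that cores are numbered consecutively within each CCX are all illustrative.

```python
CORES_PER_CCX = 4  # Zen 2 uses quad-core CCXes, as described in the text


def expected_latency_ns(core_a, core_b):
    """Representative latency: midpoints of the ranges quoted in the review."""
    if core_a == core_b:
        return 7.0
    # Assumption: cores are numbered consecutively within each CCX.
    if core_a // CORES_PER_CCX == core_b // CORES_PER_CCX:
        return 18.5  # midpoint of 18-19 ns
    return 83.0      # midpoint of 77-89 ns


def synthetic_matrix(n_cores):
    """Build an n x n matrix of expected core-to-core latencies."""
    return [[expected_latency_ns(a, b) for b in range(n_cores)]
            for a in range(n_cores)]


def group_by_latency(matrix, threshold_ns=40.0):
    """Group cores whose latency to a group's first member is below the threshold."""
    groups = []
    for core, row in enumerate(matrix):
        for group in groups:
            if row[group[0]] < threshold_ns:
                group.append(core)
                break
        else:
            groups.append([core])
    return groups


if __name__ == "__main__":
    for group in group_by_latency(synthetic_matrix(16)):
        print(group)
```

Any threshold between the in-CCX tier and the cross-CCX tier would separate the groups here. Real measurements are noisier, so the cut-off would need to be chosen from the data.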

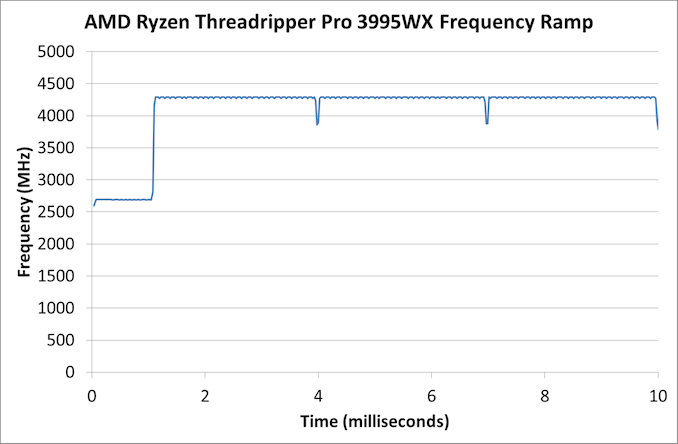

Frequency Ramping

Both AMD and Intel over the past few years have introduced features to their processors that shorten the time it takes a CPU to move from idle into a high-powered state. This means that users can get peak performance quicker, but the biggest knock-on effect is on battery life in mobile devices: if a system can turbo up quickly and turbo down quickly, it stays in the lowest and most efficient power state for as long as possible.

Intel’s technology is called SpeedShift, although SpeedShift was not enabled until Skylake.

One of the issues though with this technology is that sometimes the adjustments in frequency can be so fast, software cannot detect them. If the frequency is changing on the order of microseconds, but your software is only probing frequency in milliseconds (or seconds), then quick changes will be missed. Not only that, as an observer probing the frequency, you could be affecting the actual turbo performance. When the CPU is changing frequency, it essentially has to pause all compute while it aligns the frequency rate of the whole core.

We wrote an extensive analysis piece on this, called ‘Reaching for Turbo: Aligning Perception with AMD’s Frequency Metrics’, due to an issue where users were not observing the peak turbo speeds for AMD’s processors.

We got around the issue by making the frequency probing the workload causing the turbo. The software is able to detect frequency adjustments on a microsecond scale, so we can see how well a system can get to those boost frequencies. Our Frequency Ramp tool has already been in use in a number of reviews.
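The general idea of letting the probe be the workload can be sketched as below: run a fixed amount of work over and over, time each pass, and treat faster passes as evidence of a higher clock. This is not the Frequency Ramp tool used in the review. The function names and the 5% tolerance are assumptions, and Python's interpreter overhead makes this far coarser than a microsecond-scale native tool. Start it from an idle system for the ramp to be visible at all.

```python
import time


def busy_work(iterations=2_000):
    """A fixed amount of integer work; its duration shrinks as the clock rises."""
    total = 0
    for i in range(iterations):
        total += i * i
    return total


def sample_ramp(duration_s=0.05):
    """Run busy_work repeatedly, recording (elapsed_ns, pass_duration_ns)."""
    samples = []
    start = time.perf_counter_ns()
    deadline = start + int(duration_s * 1e9)
    while True:
        t0 = time.perf_counter_ns()
        busy_work()
        t1 = time.perf_counter_ns()
        samples.append((t1 - start, t1 - t0))
        if t1 >= deadline:
            return samples


def ramp_time_ns(samples, tolerance=0.05):
    """Elapsed time of the first pass within `tolerance` of the fastest pass."""
    if not samples:
        raise ValueError("no samples recorded")
    fastest = min(duration for _, duration in samples)
    for elapsed, duration in samples:
        if duration <= fastest * (1 + tolerance):
            return elapsed


if __name__ == "__main__":
    time.sleep(1.0)  # let the core drop back towards idle first
    ramp = ramp_time_ns(sample_ramp())
    print(f"Estimated ramp time: {ramp / 1e6:.3f} ms")
```

Because the timing loop is itself the load, there is no separate observer to miss fast transitions or perturb the turbo behavior, which is the point made in the paragraph above.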

The frequency ramp here is around one millisecond, indicative of AMD implementing its CPPC2 management design.

118 Comments

avb122 - Tuesday, February 9, 2021

Those cases do not matter unless you are checking that the result is the same as a golden reference. Otherwise the image it creates is just as if the object it was rendering moved 10 micrometers. To our brain it doesn't matter.

Being off by one bit with FP32 for geometry is about the same magnitude as modeling light as a particle instead of a wave. For color intensity, one bit of FP32 is less than one photon in real world cases.

But, CPUs and GPUs all get the same answer when doing the same FP32 arithmetic. The programmer can choose to do something else like use lossy texture compression or goofy rounding modes.

avb122 - Tuesday, February 9, 2021

It's not because of the hardware. AMD and NVIDIA's GPUs have IEEE compliant FPUs. So, they get the same answer as the CPU when using the same algorithm.

With CUDA, the same C or C++ code doing computations can run on the CPU and GPU and get the same answer.

The REAL reasons to not use a GPU are that the non-compute parts (threading, memory management, synchronization, etc.) are different on the GPU and not all GPUs support CUDA. Those are very good reasons. But it is not about the hardware. It is about the software ecosystem.

Also GPUs do not have a tiny amount of cache. They have more total cache than a CPU. The ratio of "threads" to cache is lower. That requires changing the size of the block that each "thread" operates on. Ultimately, GPUs have so much more internal and external bandwidth than a CPU that only in extreme cases, where everything fits in the CPU's L1 caches but not in the GPU's register file, can a CPU have more bandwidth.

Ian's statement about wanting 36 bits so that it can do 12-bit color is way off. I only know CUDA and NVIDIA's OpenGL. For those, each color channel is represented by a non-SIMD register. Each color channel is then either an FP16 or FP32 value (before neural networks GPUs were not faster at FP16, it was just for memory capacity and bandwidth). Both cover 12-bit color space. Remember, games have had HDR for almost two decades.

Dug - Tuesday, February 9, 2021

It's software.

But sometimes you don't want perfect. It can work in your benefit depending on what end results you view and interpret.

Smell This - Tuesday, February 9, 2021

Page 4

Cinebench R20

Paragraph below the first image

**Results for Cinebench R20 are not comparable to R15 or older, because both the scene being used is different, but also the updates in the code bath. **

I do like my code clean ...

alpha754293 - Tuesday, February 9, 2021

It's a pity that the processor and as a platform, you can buy a used dual EPYC 7702 server and still reap the multithreaded performance of 128-cores/256-threads moreso than you would be able to get out of this processor.

I'd wished that this review actually included the results of a dual EPYC 7702/7742 system for the purposes of comparing the two, as I think that the dual EPYC 7702/7742 would still outperform this Threadripper Pro 3995WX.

Duncan Macdonald - Tuesday, February 9, 2021

Given the benchmarks and the prices, the main reason for using the Threadripper Pro rather than the plain Threadripper is likely to be the higher memory capacity (2TB vs 256GB).

Even a small overclock on a standard Threadripper would allow it to be faster than a non-overclocked Threadripper Pro for any application that fits into 256GB.

twtech - Tuesday, February 9, 2021

There are a couple other pretty significant differences that matter perf-wise in some scenarios - the Pro has 8-channel memory support, and more PCIE lanes.

Significant differences not directly tied to performance include registered ECC support, and management tools for corporate security, which actually matters quite a bit with everyone working remotely.

WaltC - Tuesday, February 9, 2021

On the whole, a nice review...;)

Yes, it's fairly obvious that one CPU core does not equal one GPU core, as comparatively, the latter is wide and shallow and handles fewer instructions, IPC, etc. GPU cores are designed for a specific, narrow use case, whereas CPU cores are much deeper (in several ways) and designed for a much wider use case. It's nice that companies are designing programming languages to utilize GPUs as untapped computing resources, but the bottom line is that GPUs are designed primarily to accelerate 3d graphics and CPUs are designed for heavy, multi-use, multithreaded computation with a much deeper pipeline, etc. While it might make sense to use both GPUs and CPUs together in a more general computing case once the specific-case programming goals for each kind of processing hardware are reached, it makes no sense to use GPUs in place of CPUs or CPUs in place of GPUs. AMD has recently made no secret it is diverging its GPU line to provide more 3d-acceleration circuitry and less compute circuitry for gaming, and another branch that will include more CU circuitry and less gaming-use 3d-acceleration circuitry. 'bout time.

The software rendering of Crysis is a great example--an old, relatively slow 3d GPU accelerator with a CPU can bust the chops of even 3995WX CPUs *if* the 3995WX is tasked to rendering Crysis sans a 3d accelerator. When the Crysis-engine talks about how many cores and so on it will support, it's talking about using a 3d accelerator *with* a general-purpose CPU. That's what the engine is designed to do, actually. Take the CPU out and the engine won't run at all--trying to use the CPU as the API renderer and it's a crawl that no one wants...;) Most of all, using the CPU to "render" Crysis in software has no comparison to a CPU rendering a ray-traced scene, for instance. Whereas the CPU is rendering to a software D3d API in Crysis, ray-tracing is done by far more complex programming that will not be found in the Crysis engine (of course.)

I was surprised to read that Ian didn't think that 8-channel memory would add much of anything to performance beyond 4-channel support....;) Eh? It's the same principle as expecting 4-channel to outperform 2 channel, everything else being equal. Of course, it makes a difference--if it didn't there would be no sense in having 3995WX support 8 channels. No point at all...;)

Oxford Guy - Tuesday, February 9, 2021

Yes, the same principle of expecting a dual core to outperform a single core, which is why single-core CPUs are still dominant.

(Or, we could recognize that diminishing returns only begin to matter at a certain point.)

tyger11 - Tuesday, February 9, 2021

Definitely waiting for the Zen 3 version of the 3955X. I'm fine with 16 cores.