How We Test PCIe 4.0 Storage: The AnandTech 2021 SSD Benchmark Suite

by Billy Tallis on February 1, 2021 1:15 PM EST

Advanced Synthetic Tests

Our benchmark suite includes a variety of tests that are less about replicating any real-world IO patterns, and more about exposing the inner workings of a drive with narrowly-focused tests. Many of these tests will show exaggerated differences between drives, and for the most part that should not be taken as a sign that one drive will be drastically faster for real-world usage. These tests are about satisfying curiosity, and are not good measures of overall drive performance.

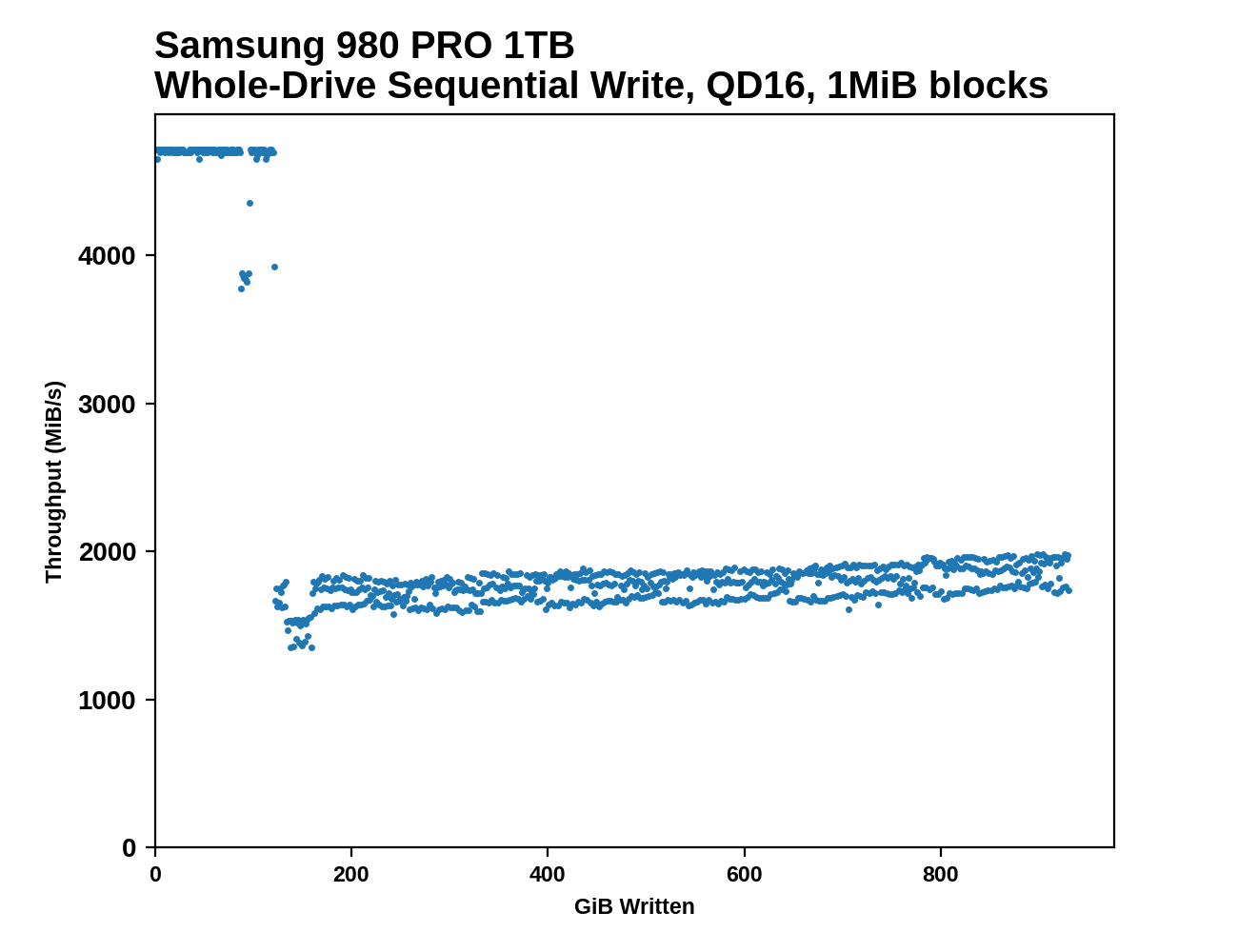

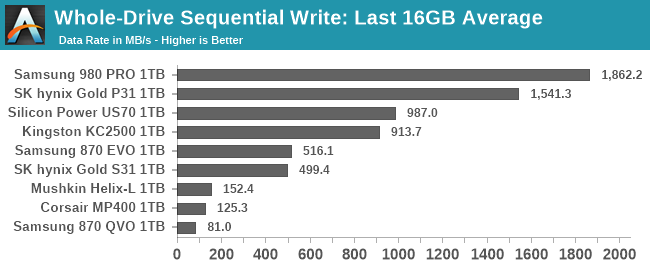

Sequential Drive Fill

The main purpose of the sequential drive fill test is to estimate the size of a drive's SLC write cache. This test is also one of the most likely to trigger thermal throttling, because it is the longest-running sustained IO test in our suite. This test performs two passes of writing to the drive. The first is conducted after erasing the drive and giving it a few minutes to cool down and finish any background work. This first pass of sequential writes shows us the best-case SLC cache capacity, since any variable-sized cache will be at its largest when starting on an empty drive. The second pass is conducted after giving the drive some idle time and performing some read performance tests. By the time the second write pass begins, the drive should have finished any background work and we should observe the worst-case SLC cache capacity for drives that have a variable-sized cache.

As the second sequential write pass continues, the SLC cache will eventually be filled and even drives that don't use SLC caching will usually show some performance drop. This is pushing the drive well beyond the limits of any real-world consumer workload, so aside from any SLC cache at the beginning, performance during the second pass is irrelevant. However, since this second pass is overwriting data that was also written sequentially, the drive's garbage collection during this process is quite straightforward. Overwriting the drive with random writes instead of sequential writes would be more likely to fill the drive's spare area and induce more severe performance drops.
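As a rough illustration of how a cache size could be read off a fill-test log (this is not the script used for these tests), the sketch below assumes throughput has been sampled at a fixed time interval and looks for the first sustained drop below the initial speed. The function names, the `drop_ratio` and `confirm` thresholds, and the sample numbers in the usage note are all hypothetical choices made for the example.

```python
from statistics import median


def estimate_slc_cache_gb(mbps, interval_s=1.0, drop_ratio=0.7, confirm=3):
    """Estimate GB written before the first sustained throughput drop.

    mbps:       write throughput samples in MB/s, one per fixed time interval
    interval_s: length of each sampling interval in seconds (assumed constant)
    drop_ratio: a sample is "slow" when below this fraction of the baseline
    confirm:    consecutive slow samples required, so brief dips are ignored
    Returns None when no sustained drop is found.
    """
    if len(mbps) < confirm:
        return None
    # Treat the median of the first few samples as the in-cache speed.
    threshold = median(mbps[:confirm]) * drop_ratio
    run = 0
    for i, rate in enumerate(mbps):
        run = run + 1 if rate < threshold else 0
        if run == confirm:
            first_slow = i - confirm + 1
            # Data written before the drop approximates the cache size.
            return sum(mbps[:first_slow]) * interval_s / 1000.0
    return None


def average_mbps_last_gb(mbps, interval_s=1.0, last_gb=16.0):
    """Average throughput over roughly the final `last_gb` written."""
    written_mb = 0.0
    elapsed_s = 0.0
    for rate in reversed(mbps):
        if written_mb >= last_gb * 1000.0:
            break
        written_mb += rate * interval_s
        elapsed_s += interval_s
    return written_mb / elapsed_s if elapsed_s else 0.0
```

With made-up samples of ten seconds at 2000 MB/s followed by forty seconds at 500 MB/s, `estimate_slc_cache_gb` reports a 20 GB cache and `average_mbps_last_gb` reports 500 MB/s for the final 16 GB. Real logs are noisier, and thermal throttling can produce a drop that looks similar to a full cache, so the thresholds would need tuning per drive.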

[Graphs: Pass 1, Pass 2]

[Charts: Average Throughput for last 16 GB, Overall Average Throughput]

After both passes of sequential writes are complete, the last 20% of the drive is TRIMed and the drive is given plenty of idle time. This prepares the drive for the battery of tests that are conducted on an 80%-full drive—full enough that SLC cache size is significantly reduced, but still leaving some empty space to avoid testing the absolute worst-case scenario of performance on a completely full drive.
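The byte range covered by that final TRIM is simple arithmetic. The sketch below is only an illustration: the function name and the 4096-byte alignment are assumptions, and the article does not say which tool issues the TRIM. On Linux, one option would be `blkdiscard` from util-linux, which accepts `--offset` and `--length` in bytes.

```python
def last_percent_range(capacity_bytes, percent=20, granularity=4096):
    """Return (offset, length) in bytes covering the final `percent` of a drive.

    Integer arithmetic avoids floating-point rounding on large capacities.
    `granularity` is an assumed alignment for the start of the range.
    """
    start = capacity_bytes * (100 - percent) // 100
    # Round the start up so the range never reaches into the part of the
    # drive that is meant to stay full.
    offset = -(-start // granularity) * granularity
    return offset, capacity_bytes - offset
```

For a drive of exactly 1,000,000,000,000 bytes this gives an offset of 800,000,000,000 and a length of 200,000,000,000.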

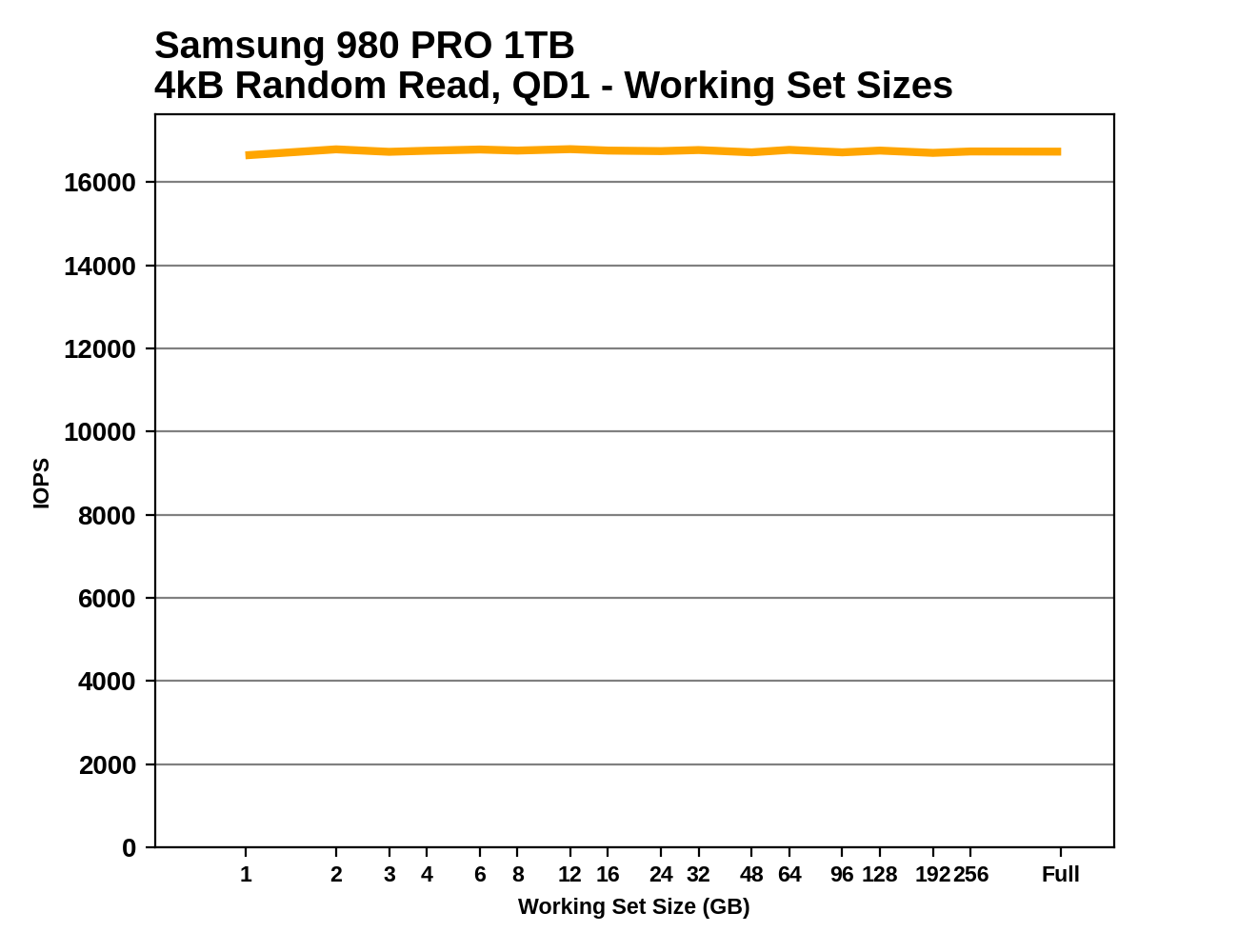

Working Set Size

This test performs random 4kB reads at queue depth 1 while varying the working set size: the size of the dataset that the random reads are coming from. When the working set size is small, the access pattern has a high degree of spatial locality, and DRAMless drives should have no trouble caching the limited amount of NAND mapping information needed to handle the reads. As the working set size increases, drives with little or no RAM are likely to show reduced performance from an increasing number of FTL cache misses. Often there is a sharp drop in performance that suggests the size of any on-controller SRAM or HMB cache in use. Drives with some DRAM but not the full 1GB per 1TB ratio may be able to handle very large working set sizes with good performance, but typically still show reduced performance when random reads span the entire drive.
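The 1GB per 1TB ratio, and the working set size at which a smaller cache might start to miss, can be approximated with some quick arithmetic. This sketch assumes a flat mapping table with one 4-byte entry per 4kB of logical space, which is the usual basis for that ratio; real controllers may use different entry sizes, compression, or coarser mapping, so the results are estimates only. The function names are made up for this example.

```python
def full_map_bytes(capacity_bytes, page_bytes=4096, entry_bytes=4):
    """Size of a flat logical-to-physical map covering the whole drive."""
    return capacity_bytes // page_bytes * entry_bytes


def covered_working_set_bytes(cache_bytes, page_bytes=4096, entry_bytes=4):
    """Largest working set whose mapping entries fit in a cache of this size."""
    return cache_bytes // entry_bytes * page_bytes


TIB = 2**40
GIB = 2**30
MIB = 2**20

# A 1 TiB drive needs about 1 GiB of mapping data under these assumptions.
map_size = full_map_bytes(1 * TIB)

# A 64 MiB cache (an example size, not a figure from this article)
# would cover roughly a 64 GiB working set.
covered = covered_working_set_bytes(64 * MIB)
```

Under these assumptions, a sharp drop in the working set size graph near some size S suggests a mapping cache of about S/1024.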

This test also provides an opportunity to verify that the TRIM command is working properly: when attempting to read data from a portion of the drive that is empty (or has been trimmed), the drive should return a bunch of zeros as soon as it has looked up the relevant LBAs in the FTL and determined that there isn't actually any real flash memory currently allocated to those addresses. So in addition to running the working set size test on a full drive, we also run it when the drive is 32GB full and 80% full, expecting to see substantially increased performance when many or most of the reads should be handled without actually touching the NAND flash memory. These extra test runs aren't included in the graphs we publish, but we're keeping an eye out for drives that don't behave as expected.
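A content check along these lines could confirm that trimmed regions read back as zeros. This is a minimal sketch and not the tooling used for these tests: the function name is hypothetical, and it only inspects the data returned. It says nothing about read latency, which would need unbuffered direct IO against the raw device to measure properly.

```python
def zero_block_fraction(path, offsets, block_bytes=4096):
    """Return the fraction of sampled blocks that read back as all zeros.

    path:    file or block device to read from
    offsets: byte offsets of the blocks to sample
    """
    zero = bytes(block_bytes)  # reference block of zero bytes
    hits = 0
    with open(path, "rb", buffering=0) as f:
        for off in offsets:
            f.seek(off)
            if f.read(block_bytes) == zero:
                hits += 1
    return hits / len(offsets) if offsets else 0.0
```

Sampling offsets inside a trimmed region should give a fraction close to 1.0 on a drive that behaves as described above, while offsets inside the filled region should give a fraction close to 0.0.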

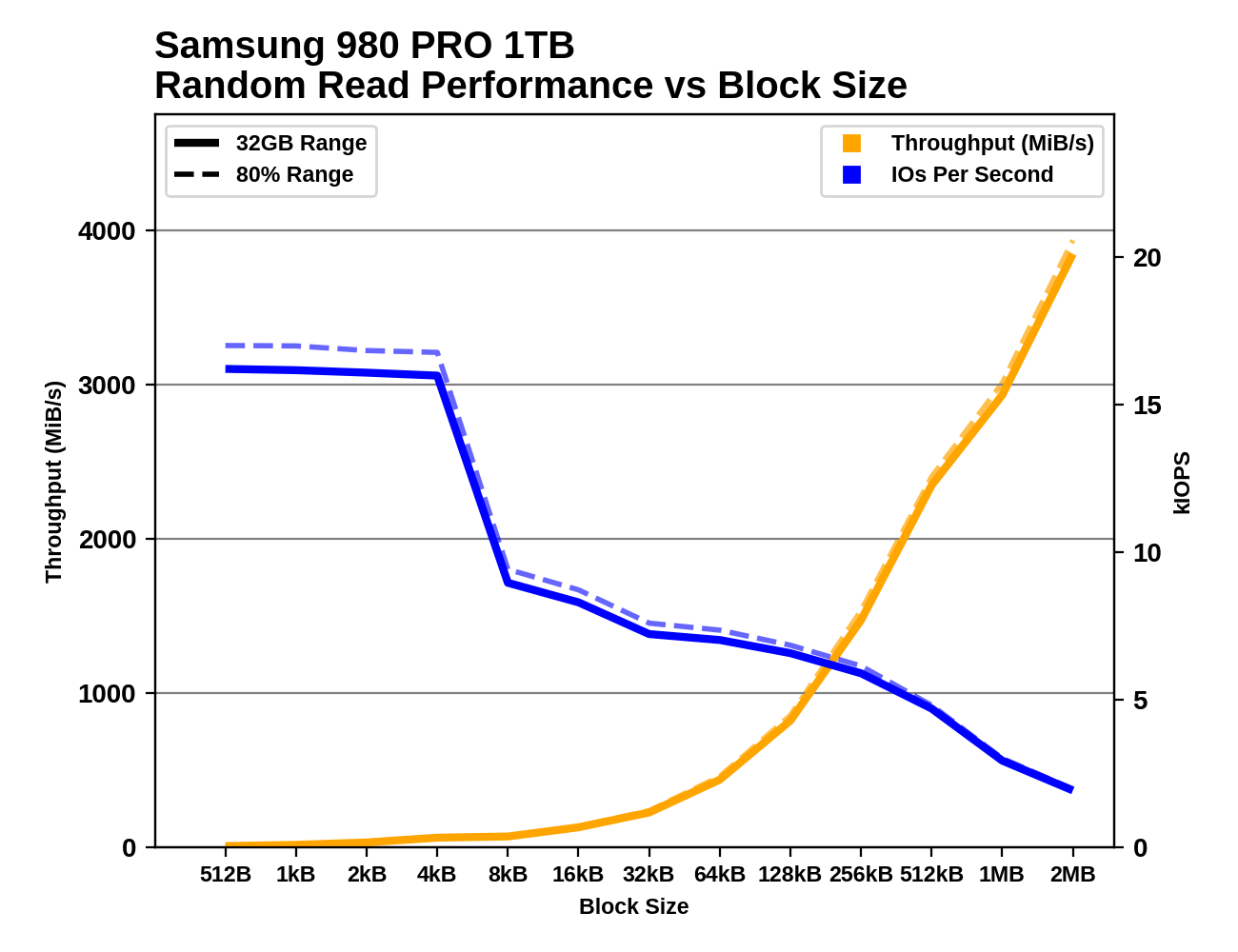

Performance vs Block Size

Industry standard practice is to measure random IO performance using 4kB operations and sequential IO performance using 128kB operations. But SSDs permit IOs as small as 512 bytes, and real-world workloads include a wide variety of actual IO block sizes. Our trace-based tests subject drives to IOs of various sizes, but are ill-suited for analyzing how specific block sizes perform.

These tests perform 1GB of IO at each block size, at a queue depth of 1 and with the usual idle time after each step. Like our other synthetic tests, they're performed both with the drive 32GB full and 80% full, to capture any differences due to things like SLC caching. Regular readers may recognize these tests as based on ones we use as part of our enterprise SSD test suite. The principle is the same, but the configuration here has been adjusted to match the rest of our synthetic tests, and we're now testing block sizes up to 2MB. As with some of the other tests, the fact that we're testing under Linux means that IOs larger than 128kB get split up by the OS and issued to the drive as a batch. For example, IO with a 1MB block size ends up looking to the drive like eight operations of 128kB issued at the same time.
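The splitting can be pictured with a simplified model. The sketch below only chops a request at a fixed 128kB limit, as described above. The function name is made up, and the real kernel block layer takes other limits and alignment constraints into account.

```python
def split_io(offset, length, max_bytes=128 * 1024):
    """Split one request into (offset, length) pieces of at most max_bytes."""
    pieces = []
    end = offset + length
    while offset < end:
        size = min(max_bytes, end - offset)
        pieces.append((offset, size))
        offset += size
    return pieces


# A single 1MB request becomes eight 128kB pieces.
pieces = split_io(0, 1024 * 1024)
```

Because all eight pieces are issued together, a nominal queue depth of 1 at a 1MB block size can look more like a queue depth of 8 from the drive's point of view.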

[Graphs: Random Read, Random Write, Sequential Read, Sequential Write]

There are several interesting phenomena to keep an eye out for. With block sizes smaller than 4kB, we generally see performance that is roughly the same IOPS as with a 4kB block size. This is a consequence of the fact that virtually all flash-based SSDs manage the NAND flash memory in 4kB chunks, even when configured to expose a 512-byte LBA size. Some drives exhibit pathologically low performance with sub-4kB block sizes, especially for writes, where a read-modify-write cycle may be necessary for the drive to preserve the data in the rest of the 4kB block.
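Two small calculations make these effects concrete. The sketch assumes the 4kB internal granularity described above; the function names and the IOPS figure are hypothetical and chosen only for illustration.

```python
def needs_read_modify_write(offset, length, unit=4096):
    """True if a write covers only part of at least one `unit`-sized chunk."""
    return offset % unit != 0 or (offset + length) % unit != 0


def iops_to_mbps(iops, block_bytes):
    """Convert an IOPS figure at a given block size to MB/s."""
    return iops * block_bytes / 1e6


# The same IOPS at 512 bytes moves one eighth of the data of 4kB IO.
small = iops_to_mbps(10_000, 512)
large = iops_to_mbps(10_000, 4096)
```

A 512-byte write at offset 0 touches only part of a 4kB chunk, so `needs_read_modify_write(0, 512)` is true, while an aligned 4kB write returns false. Whether a drive actually performs a read-modify-write in the partial case depends on its firmware and write buffering.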

Sequential IO with small to medium block sizes can also reveal some surprises, such as drives that seem to assume any 4kB access will be a random access and choose not to read and cache the rest of the (typically ~16kB) NAND page. Quite a few drives also show little improvement in sequential throughput with the medium block sizes, but show significant throughput scaling once the block size is well past 128kB. This is part of why we changed our burst sequential IO tests to use 1MB block sizes instead of 128kB.

70 Comments

nobozos - Tuesday, February 2, 2021 - link

One thing that bothers me about benchmarks in general is that they often don't show the statistics normalized against the cost of the thing being measured. For example, I'd like to see iops/$, or GBs/$, or ???/$ in all your tables and charts. I think you've sometimes done this in the past, but it should become a regular feature of every review.

kepstin - Tuesday, February 2, 2021 - link

Prices are so volatile in the market (and sometimes even regional) that a static number here doesn't make sense imo. The periodic roundups of recommended drives do take price and performance into account.

KarlKastor - Tuesday, February 2, 2021 - link

@Billy

Thank you for the detailed test and the explanation of each procedure.

There is one thing that I am missing in this test. How does a drive perform in heavy and light, if it is 80 or 90% full?

Is it closer to a fresh drive or closer to full drive?

Maybe you can run a drive in that precondition. Not as a general test, but just once to show how a drive behaves.

Oxford Guy - Tuesday, February 2, 2021 - link

Great article. I particularly agree with the use of 80% full because that's a lot more realistic than empty drive testing. In fact, I would skip empty drive testing and stick with 60% and 80% full tests.

• Having three Samsung drives out of nine shown seems like an ad for Samsung, even if that wasn't the intention. That Samsung is a popular brand is not a good reason. OCZ used to be popular and the company's bad practices caught up with it.

• Please test the Inland brand drives. People can find Samsung drive tests all over the Internet. I'm not saying don't test them, of course. I am asking that you provide significantly more added value to your SSD reviews by reviewing drives almost no one else reviews. For instance, I recently purchased the 2 TB Inland Performance Plus drive, which uses the Phison E18 controller. It should provide very good performance but reviews would help.

Another issue with brands like Inland is firmware updates. Sandforce, the most infamously poor-quality SSD controller outfit, finally (they claimed) fixed a serious bug in their second-generation controller years ago and OCZ released yet another firmware update. Yet, other brands were sold using the controller and the OCZ tool wouldn't recognize them so they could be patched. Sandforce, of course, never bothered to provide a utility for patching these other brands' drives.

This issue isn't so severe if the consumer just happened to have purchased a Sandforce drive from a vendor that sometimes makes the effort to create patches, like Intel. But, it's really inexcusable to have such a caveat emptor attitude that one doesn't make a strong effort to warn consumers about any risks involved in buying drives from less dominant brands. Phison, for instance, has reportedly been working on improving the firmware for the E18. Will Inland ever receive a patch? I haven't looked much into it but when I did a few cursory searches about Inland and firmware patches over the years it seemed that it was the typical "off brand" situation, where the drives are stuck forever with their initial firmware.

That's not such a severe problem if the firmware is decent to begin with (unlike OCZ, which, despite dozens of updates never fixed the Vertex 2 drive at all) — but it's something Anandtech should be and should have been raising awareness about. Your site covered OCZ's bait and switch tactics (when it switched 32-bit NAND in the Vertex 2 for 64-bit NAND, causing the drives to brick randomly — especially when put to sleep), which was great.

But, unless I missed it I haven't seen any articles about the drawbacks of purchasing SSDs from smaller brands. And, why not put some pressure on the industry to stop enabling companies like Sandforce to not provide utilities to patch their drives (and utilities to un-brick them when they go into 'panic mode'). It was completely inexcusable — the industry silence around that. Sandforce made it much more important to brick the drive when there was a software glitch, no matter how minor, apparently to 'protect its IP'. Shouldn't the consumer's data be considered the priority? Well, they came out with a not-at-all-conflict-of-interest partnership with DriveSavers. That's right — you get the joy of a drive that will brick at any moment and then you can spend thousands to 'protect the vaunted Sandforce IP' and pad its pockets and DriveSavers'.

The tech press is supposed to protect us from caveat emptor. So, please... start reviewing smaller brands, start providing a bigger picture than the latest from Samsung, and put more pressure on industry players (like Inland) to do the right things, like keeping their drives' firmware current.

Oxford Guy - Tuesday, February 2, 2021 - link

Speaking of bad practices, let's take a look at Samsung.

1. The company breaks industry convention and intentionally confuses consumers by labeling QLC drives "MLC", and TLC drives as well. That's an example of fraud which is, unfortunately, legal.

There should have been an article from every tech site condemning this. I don't recall seeing even one. You know, it's not too late, either!

2. The company posted fantasy power consumption figures for drives like the 830 and the tech press and companies like Newegg dutifully posted those specs. Samsung sold a lot of drives based on word of mouth — about how amazingly efficient its drives were, based on those nonsensical power usage claims.

3. The company released its planar TLC drives in such an under-engineered (half-baked) state that they had to be kludged into frequently rewriting stored data to keep their performance somewhat acceptable. The steady state performance of the 128 GB 840 drive earned particular, fully justified, scorn from HardOCP.

Kristian Vättö - Tuesday, February 2, 2021 - link

All SSDs with Phison controllers are the same - designed and assembled by Phison. Sure, there are some FW differences as every customer can request customisations, but at a high-level an SSD with a Phison controller is a Phison SSD. None of the small brands produce their own SSDs, they simply work with Phison and other similar ODMs who offer turnkey solutions. Anyone can start their own brand if they have enough capital to meet the MOQ requirements.

It was different 10 years ago when there were numerous incumbent controller and SSD vendors shipping new designs every 6-12 months. At that time, it was never sure what to expect and at AT we were more or less a validation partner even. Nowadays there are a few large factories pumping out stuff with different labels.

Oxford Guy - Tuesday, February 2, 2021 - link

The Sandforce 2200 controller was used by a bunch of different companies but to my knowledge it’s not possible to patch that bug if one owns one of the smaller brands’ drives. It’s unlikely enough to get OCZ’ utility to recognize its own drives, let alone another vendor’s.

So, even if the controller is the same and even if the other hardware is standard, is there a standard utility that can be used with any drive made by any brand? Sandforce never seemed to bother to offer anything like that and there were a lot of different brands using its controllers.

Also, even when a controller is standard the firmware may not be, as in the case of Intel’s Sandforce drives as far as I know.

Oxford Guy - Tuesday, February 2, 2021 - link

So my question remains: are all the Inland drives able to be firmware-updated and secure erased?

Or, are such ‘small brand’ drives locked out of those things?

rahvin - Tuesday, February 2, 2021 - link

Why would they offer a tool when they can charge the OEM to produce a branded tool for those drives only?

There's little incentive for an ODM to provide anything they aren't paid for and their customers aren't the retail buyers, it's the OEM's.

Billy Tallis - Tuesday, February 2, 2021 - link

Samsung's over-represented in this article mainly because they're one of the few companies still sampling new SATA drives for review, and I didn't want to have the SATA market segments represented by old 64-layer drives that you can no longer purchase.

As for the Inland drives: I don't have any easy way to get samples of a large number of their drives. I strongly prefer not wasting time re-testing the same drive with a different brand's sticker. I do plan to soon have full results for E12+TLC, E12S+QLC, E16+TLC, E16+QLC drives in Bench, and I'll be getting an E18 sample soon. They won't all be from the same brand, but the results will be generally representative of the equivalents from other Phison-based brands.

I also wish the smaller SSD brands did a better job of making firmware updates available. That is definitely a valid reason for preferring some brands over others. It's a little hard to evaluate vendors on the timeliness of their firmware update releases at product launch, and I've never made it a priority to systematically compare vendors on this post-launch.

Part of why it's been a low priority has been because it seems like firmware updates are generally not as important these days. When a controller is first launched there are often a few updates to optimize performance, but those usually don't have a big impact on the overall standings of a drive. Firmware updates to fix critical bugs seem to be thankfully less common. And for users who really do care about making sure they've got the absolute latest firmware on their Phison drives, you can usually find a way to apply the update using a different vendor's tool—not ideal by any means, but it works.