How We Test PCIe 4.0 Storage: The AnandTech 2021 SSD Benchmark Suite

by Billy Tallis on February 1, 2021 1:15 PM EST

Advanced Synthetic Tests

Our benchmark suite includes a variety of tests that are less about replicating real-world IO patterns and more about exposing the inner workings of a drive with narrowly focused tests. Many of these tests will show exaggerated differences between drives, and for the most part that should not be taken as a sign that one drive will be drastically faster in real-world usage. These tests are about satisfying curiosity, and are not good measures of overall drive performance.

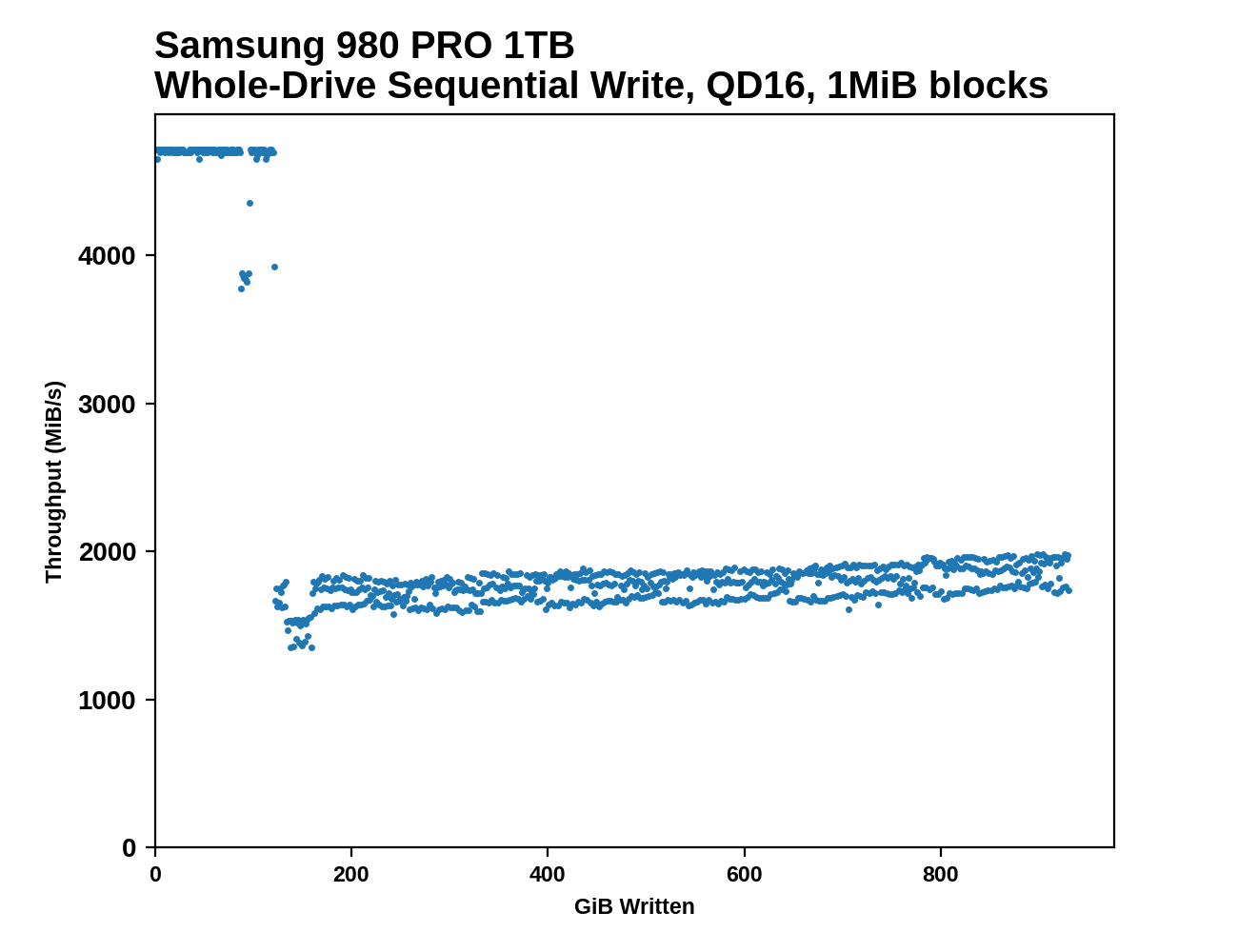

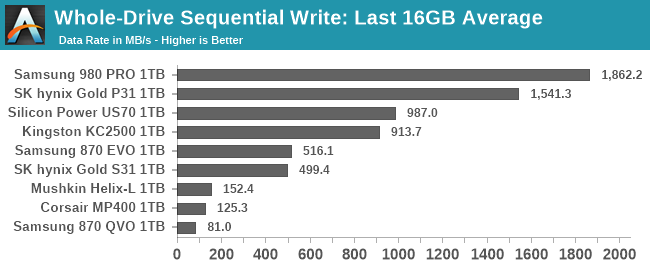

Sequential Drive Fill

The main purpose of the sequential drive fill test is to estimate the size of a drive's SLC write cache. This test is also one of the most likely to trigger thermal throttling, because it is the longest-running sustained IO test in our suite. This test performs two passes of writing to the drive. The first is conducted after erasing the drive and giving it a few minutes to cool down and finish any background work. This first pass of sequential writes shows us the best-case SLC cache capacity, since any variable-size cache will be at its largest when starting on an empty drive. The second pass is conducted after giving the drive some idle time and performing some read performance tests. By the time the second write pass begins, the drive should have finished any background work and we should observe the worst-case SLC cache capacity for drives that have a variable-size cache.

As the second sequential write pass continues, the SLC cache will eventually be filled and even drives that don't use SLC caching will usually show some performance drop. This is pushing the drive well beyond the limits of any real-world consumer workload, so aside from any SLC cache at the beginning, performance during the second pass is irrelevant. However, since this second pass is overwriting data that was also written sequentially, the drive's garbage collection during this process is quite straightforward. Overwriting the drive with random writes instead of sequential writes would be more likely to fill the drive's spare area and induce more severe performance drops.
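To make the idea of reading SLC cache size off a drive fill trace concrete, here is a minimal Python sketch. It is not the tooling used for this review: the function name, the 50% drop threshold, and the sample numbers are all assumptions chosen for illustration. It treats the first few samples as the in-cache speed and reports how much data was written before throughput first fell well below that level.

```python
from statistics import median

def estimate_slc_cache_gb(throughput_mbps, gb_per_sample=1.0, drop_ratio=0.5):
    """Estimate SLC cache size from a sequential-write throughput trace.

    throughput_mbps: average throughput for each consecutive chunk written.
    gb_per_sample:   how many GB each sample represents (assumed constant).
    drop_ratio:      hypothetical threshold; a sample below
                     drop_ratio * baseline counts as "cache exhausted".
    Returns the GB written before the first drop, or None if no drop is seen.
    """
    if not throughput_mbps:
        return None
    # Use the median of the first few samples as the in-cache baseline.
    baseline = median(throughput_mbps[:3])
    for i, sample in enumerate(throughput_mbps):
        if sample < baseline * drop_ratio:
            return i * gb_per_sample
    return None

# Made-up trace: four fast samples, then a drop to post-cache speed.
trace = [3300, 3250, 3280, 3300, 1200, 1150, 1180]
print(estimate_slc_cache_gb(trace))  # 4.0
```

A single threshold like this is crude. Drives with multi-stage caching or thermal throttling produce more than one step in the trace, so a real analysis would need to look at the whole curve rather than only the first drop.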

[Interactive charts: Pass 1 | Pass 2]

[Interactive charts: Average Throughput for last 16 GB | Overall Average Throughput]

After both passes of sequential writes are complete, the last 20% of the drive is TRIMed and the drive is given plenty of idle time. This prepares the drive for the battery of tests that are conducted on an 80%-full drive—full enough that SLC cache size is significantly reduced, but still leaving some empty space to avoid testing the absolute worst-case scenario of performance on a completely full drive.
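The byte range covered by "the last 20% of the drive" is simple arithmetic, sketched below in Python. The function name and the 4096-byte alignment are assumptions, not details from the test scripts. The start offset is rounded up so the trimmed range never reaches into the 80% that is meant to stay full.

```python
def last_portion_range(capacity_bytes, keep_percent=80, align=4096):
    """Return (offset, length) in bytes for the tail of the drive to trim.

    keep_percent: share of the drive that stays filled (80 in the text).
    align:        assumed alignment for the start of the trimmed range.
    """
    # Integer arithmetic avoids floating-point rounding on large capacities.
    start = capacity_bytes * keep_percent // 100
    # Round the start up to the next aligned boundary.
    start = -(-start // align) * align
    return start, capacity_bytes - start

# Toy capacity of 1000 aligned blocks, so the numbers are easy to check.
offset, length = last_portion_range(1000 * 4096)
print(offset, length)  # 3276800 819200
```

An offset and length like these are the kind of values a discard tool takes; for example, util-linux `blkdiscard` accepts `--offset` and `--length`. Whether that is the tool used here is not stated in the article.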

Working Set Size

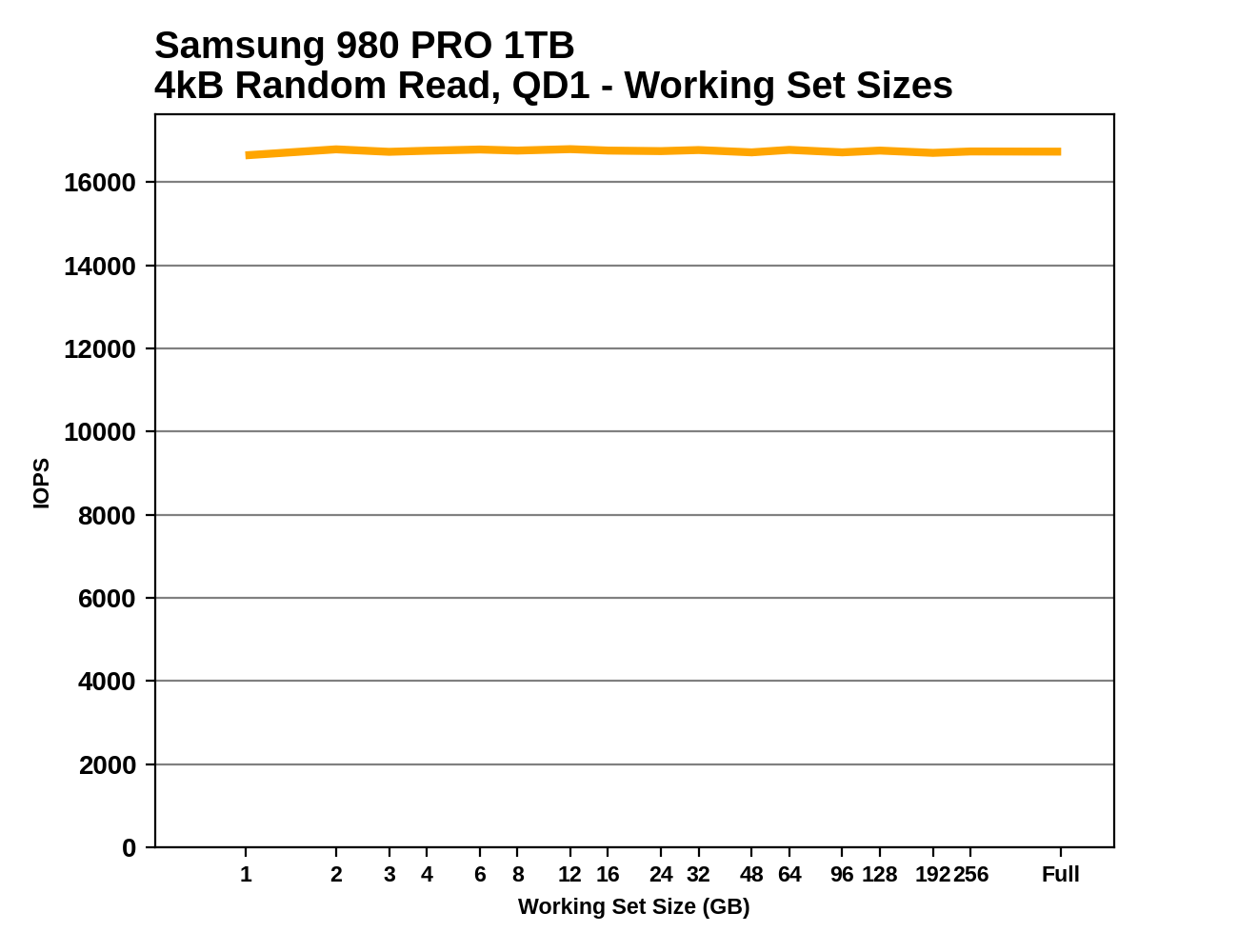

This test performs random 4kB reads at queue depth 1 while varying the working set size: the size of the dataset that the random reads are coming from. When the working set size is small, the access pattern has a high degree of spatial locality, and DRAMless drives should have no trouble caching the limited amount of NAND mapping information needed to handle the reads. As the working set size increases, drives with little or no RAM are likely to show reduced performance from an increasing number of FTL cache misses. Often there is a sharp drop in performance that suggests the size of any on-controller SRAM or HMB cache in use. Drives with some DRAM but not the full 1GB per 1TB ratio may be able to handle very large working set sizes with good performance, but typically still show reduced performance when random reads span the entire drive.
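As a rough illustration of what "varying the working set size" means for the access pattern, the following Python sketch generates 4kB-aligned random read offsets confined to the first N bytes of a drive. It only produces offsets and does not issue any IO. The function name, the seed parameter, and the example sizes are hypothetical.

```python
import random

BLOCK = 4096  # 4kB reads, as described in the text

def random_read_offsets(working_set_bytes, count, seed=0):
    """Return `count` block-aligned offsets drawn uniformly from the
    first `working_set_bytes` of the drive."""
    rng = random.Random(seed)
    blocks = working_set_bytes // BLOCK
    return [rng.randrange(blocks) * BLOCK for _ in range(count)]

# Hypothetical sweep: the same number of reads over growing working sets.
for size_gib in (1, 8, 64):
    offsets = random_read_offsets(size_gib * 2**30, count=5)
    print(size_gib, [o // BLOCK for o in offsets])
```

With a small working set, the same few mapping table entries are looked up over and over. As the working set grows, the lookups spread across more of the mapping table, which is where drives with limited DRAM, SRAM, or HMB start missing in their FTL cache.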


This test also provides an opportunity to verify that the TRIM command is working properly: when attempting to read data from a portion of the drive that is empty (or has been trimmed), the drive should return a bunch of zeros as soon as it has looked up the relevant LBAs in the FTL and determined that there isn't actually any real flash memory currently allocated to those addresses. So in addition to running the working set size test on a full drive, we also run it when the drive is 32GB full and 80% full, expecting to see substantially increased performance when many or most of the reads should be handled without actually touching the NAND flash memory. These extra test runs aren't included in the graphs we publish, but we're keeping an eye out for drives that don't behave as expected.

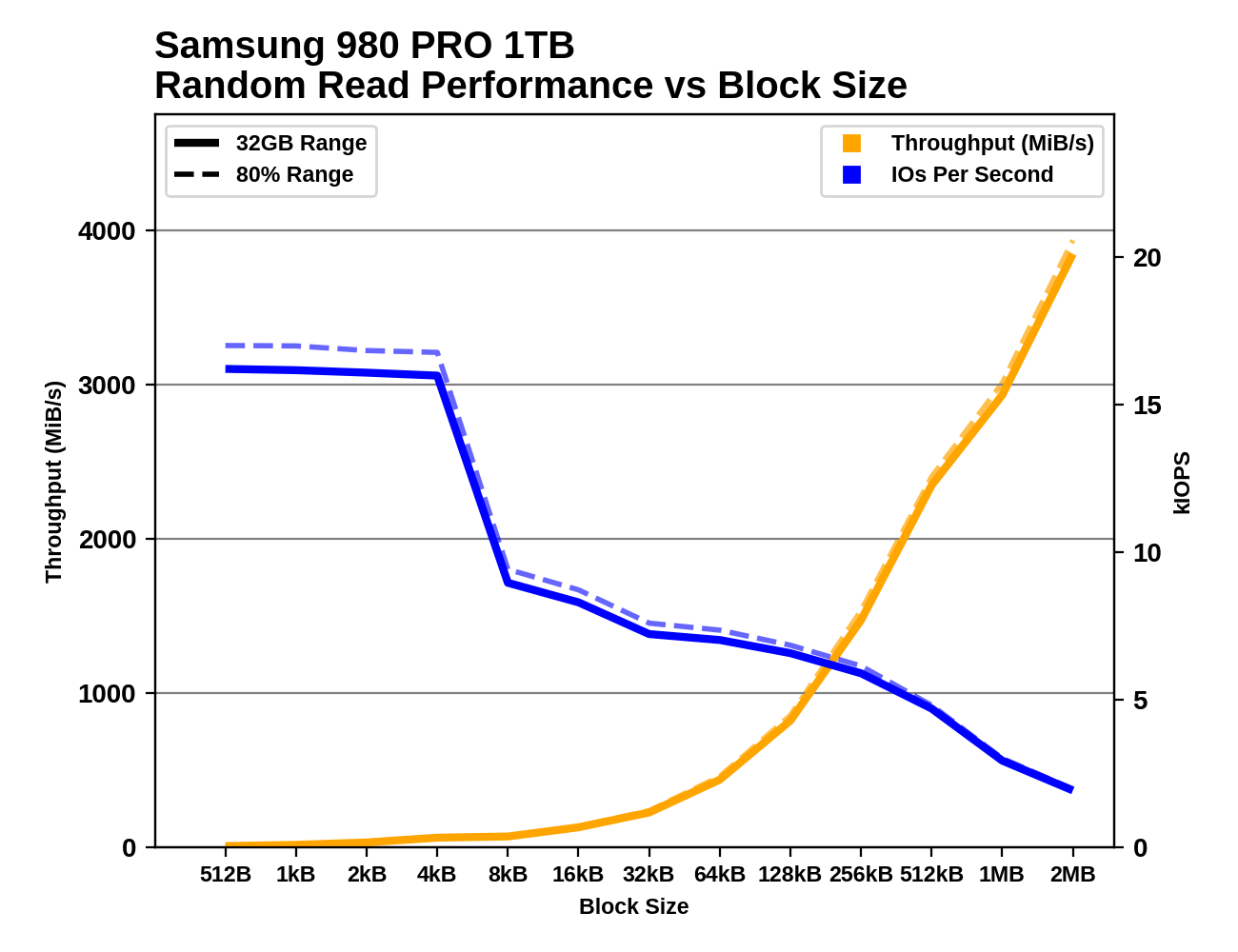

Performance vs Block Size

Industry-standard practice is to measure random IO performance using 4kB operations and sequential IO performance using 128kB operations. But SSDs permit IOs as small as 512 bytes, and real-world workloads include a wide variety of actual IO block sizes. Our trace-based tests subject drives to IOs of various sizes, but are ill-suited for analyzing how specific block sizes perform.

These tests perform 1GB of IO at each block size, at a queue depth of 1 and with the usual idle time after each step. Like our other synthetic tests, they're performed both with the drive 32GB full and 80% full, to capture any differences due to things like SLC caching. Regular readers may recognize these tests as based on ones we use as part of our enterprise SSD test suite. The principle is the same, but the configuration here has been adjusted to match the rest of our synthetic tests, and we're now testing block sizes up to 2MB. As with some of the other tests, the fact that we're testing under Linux means that IOs larger than 128kB get split up by the OS and issued to the drive as a batch. For example, IO with a 1MB block size ends up looking to the drive like eight operations of 128kB issued at the same time.
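The splitting described above can be sketched in a few lines of Python. This is a simplified model rather than the kernel's actual logic: the function name is made up, and the 128kB limit is taken from the text as a fixed constant, whereas the real limit depends on the device and kernel configuration.

```python
MAX_TRANSFER = 128 * 1024  # 128kB split size described in the text

def split_io(offset, length, max_transfer=MAX_TRANSFER):
    """Split one large IO into (offset, length) pieces of at most
    max_transfer bytes each."""
    pieces = []
    end = offset + length
    while offset < end:
        size = min(max_transfer, end - offset)
        pieces.append((offset, size))
        offset += size
    return pieces

pieces = split_io(0, 1024 * 1024)  # one 1MB IO
print(len(pieces))                 # 8
print(pieces[0], pieces[-1])       # (0, 131072) (917504, 131072)
```

This is why a queue depth 1 test with a 1MB block size does not look like queue depth 1 from the drive's point of view: all eight pieces are outstanding at once.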

[Interactive charts: Random Read | Random Write | Sequential Read | Sequential Write]

There are several interesting phenomena to keep an eye out for. With block sizes smaller than 4kB, we generally see performance that is roughly the same IOPS as with a 4kB block size. This is a consequence of the fact that virtually all flash-based SSDs manage the NAND flash memory in 4kB chunks, even when configured to expose a 512-byte LBA size. Some drives exhibit pathologically low performance with sub-4kB block sizes, especially for writes, where a read-modify-write cycle may be necessary for the drive to preserve the data in the rest of the 4kB block.
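The read-modify-write condition can be expressed as a small check, shown here as a Python sketch. It assumes the 4kB mapping granularity described above; the function names are hypothetical, and real firmware may buffer or coalesce small writes in ways this model ignores.

```python
MAPPING_UNIT = 4096  # 4kB management granularity described in the text

def needs_read_modify_write(offset, length, unit=MAPPING_UNIT):
    """True if a write (length > 0) covers some mapping unit only partially,
    so the rest of that unit would have to be preserved."""
    return offset % unit != 0 or (offset + length) % unit != 0

def units_touched(offset, length, unit=MAPPING_UNIT):
    """Number of mapping units a write (length > 0) overlaps."""
    first = offset // unit
    last = (offset + length - 1) // unit
    return last - first + 1

print(needs_read_modify_write(0, 512))     # True: 512B inside one 4kB unit
print(needs_read_modify_write(0, 4096))    # False: exactly one whole unit
print(needs_read_modify_write(512, 4096))  # True: misaligned 4kB write
print(units_touched(512, 4096))            # 2
```

The same reasoning explains the flat IOPS below 4kB: a 512-byte operation still costs one full mapping unit of work, so at equal IOPS it delivers one eighth of the throughput of a 4kB operation.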

Sequential IO with small to medium block sizes can also reveal some surprises, such as drives that seem to assume any 4kB access will be a random access and choose not to read and cache the rest of the (typically ~16kB) NAND page. Quite a few drives also show little improvement in sequential throughput with the medium block sizes, but show significant throughput scaling once the block size is well past 128kB. This is part of why we changed our burst sequential IO tests to use 1MB block sizes instead of 128kB.

70 Comments


Billy Tallis - Monday, February 1, 2021

I set up the testbed with an old 5450 I had lying around, and once all the software was configured I pulled the GPU back out. (That ancient GPU interferes with suspend, which is needed to unlock drives so they can be secure erased.) The system boots without complaint with no GPU, and I use SSH and RDP to run the tests. I'm keeping the 580 in the other system for now because I want to work on getting some application or gaming tests working on that machine when I have spare time.

Billy Tallis - Monday, February 1, 2021

I should mention that I have had a bit of trouble booting the ASRock B550 Pro motherboard, because it seems to have half-assed UEFI boot support. The motherboard will forget its boot settings at the slightest provocation, and when that happens it will search for and boot the first Windows it can find. It refuses to detect a Linux bootloader on an internal drive, even if it's in the canonical EFI/Boot/bootx64.efi location on the ESP. So any time this machine decides to forget its boot settings, I have to plug in a GPU and keyboard and boot a Linux off a USB device to re-create the UEFI boot entry for grub.

DominionSeraph - Monday, February 1, 2021

This is the power of AMD.

Billy Tallis - Monday, February 1, 2021

On the other hand, I was quite surprised to discover that a quad M.2 riser works on this board. Intel would never let PCIe bifurcation be enabled on an entry-level motherboard.

Slash3 - Wednesday, February 3, 2021

Asus actually bundles a quad NVMe M.2 adapter with their Strix B550-XE, for some absolutely baffling reason. I tried asking the Asus technical marketing rep on Reddit why they did this, but he didn't understand the question. What a weird friggin decision.

https://rog.asus.com/us/motherboards/rog-strix/rog...

frbeckenbauer - Tuesday, February 2, 2021

I have had the same issue on my old ASRock intel board, on my current MSI AMD board, on my Intel laptop, and on my AMD laptop. People really like writing UEFIs that just randomly boot a windows they find for no reason

kepstin - Tuesday, February 2, 2021

I've seen a few AMD boards that have bios options to enable headless operation - basically, setting that option disables the post check for the graphics card. If you're running in native UEFI mode and your operating system doesn't require a GPU, it'll work fine.

Makaveli - Monday, February 1, 2021

This was a great article thank you Billy!

WaltC - Monday, February 1, 2021

Nice write up...;) Seems rather unsurprising that the two PCIe4 drives running in PCIe4 mode (I gather from the test hardware described) were the best overall performing in your tests. Although you described the PCIe/SATA interfaces of most of the drives--I wasn't clear on the Mushkin or the MP400. Also, a more thorough description of the drivers employed would help--open source or proprietary, etc. Linux distro tests, however, would seem to me to be of somewhat less "practical" value than Win10 tests--considering that Win10 is where the overwhelming bulk of these drives will be deployed by the consumers who buy them, I would think. Overall, it's nice to see changes here in AT's testing methodology!

evilspoons - Monday, February 1, 2021

Nice to see another substantial benchmark upgrade, keeping up with this stuff can be mind-boggling at times.

The article could use some proofreading though. Even just the first paragraph:

"on accident" -> by accident

"holy unified metric" -> wholly-unified metric

Unless religion is somehow involved in benchmarking SSDs 😉