AMD Ryzen 9 5980HS Cezanne Review: Ryzen 5000 Mobile Tested

by Dr. Ian Cutress on January 26, 2021 9:00 AM EST

CPU Tests: Microbenchmarks

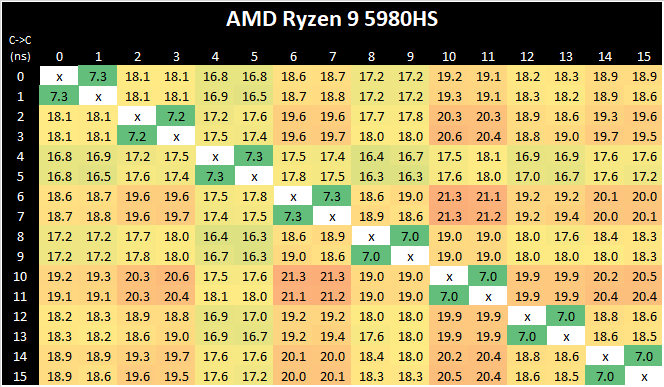

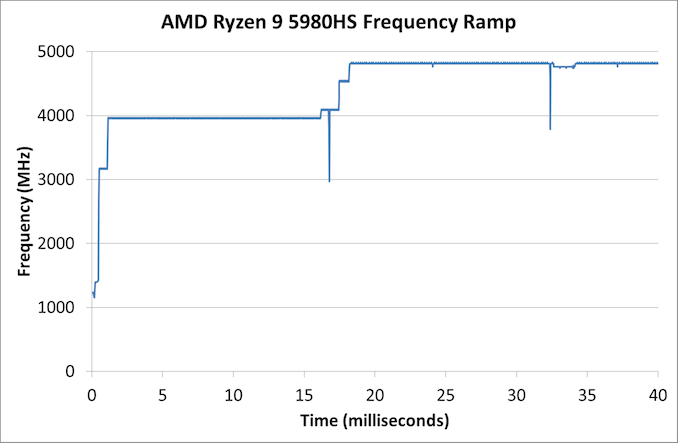

Core-to-Core Latency

As the core counts of modern CPUs grow, the time it takes one core to reach another is no longer a constant. Even before the advent of heterogeneous SoC designs, processors built on large rings or meshes could have different latencies when accessing the nearest core compared to the furthest core. This holds especially true in multi-socket server environments.

But modern CPUs, even desktop and consumer CPUs, can have variable access latency to get to another core. For example, in the first generation Threadripper CPUs, we had four chips on the package, each with 8 threads, and each with a different core-to-core latency depending on whether the access was on-die or off-die. This gets more complex with products like Lakefield, which has two different communication buses depending on which core is talking to which.

If you are a regular reader of AnandTech’s CPU reviews, you will recognize our Core-to-Core latency test. It’s a great way to show exactly how groups of cores are laid out on the silicon. This is a custom in-house test built by Andrei, and while we know there are competing tests out there, we feel ours most accurately reflects how quickly an access between two cores can happen.
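To illustrate how a core-to-core latency map exposes the grouping of cores, here is a minimal Python sketch. It is not Andrei's tool and does not measure anything: `group_cores`, the 50 ns threshold, and the 4x4 matrix are hypothetical names and invented values, chosen only to show the idea of clustering cores by pairwise latency.

```python
from itertools import combinations


def group_cores(latency_ns, threshold_ns):
    """Group cores whose pairwise latency is below threshold_ns (union-find)."""
    n = len(latency_ns)
    parent = list(range(n))

    def find(i):
        # Walk up to the root, shortening the path as we go.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    for a, b in combinations(range(n), 2):
        if latency_ns[a][b] < threshold_ns:
            parent[find(a)] = find(b)

    groups = {}
    for core in range(n):
        groups.setdefault(find(core), []).append(core)
    return sorted(groups.values())


# Invented numbers for illustration only (NOT measurements):
# cores 0-1 and cores 2-3 each sit in one cluster, and crossing clusters is slower.
example = [
    [0, 20, 80, 80],
    [20, 0, 80, 80],
    [80, 80, 0, 20],
    [80, 80, 20, 0],
]
print(group_cores(example, threshold_ns=50))  # [[0, 1], [2, 3]]
```

With a single shared cluster, as described for Cezanne below, every pair would fall under one threshold and the function would return one group containing all cores.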

AMD’s move from a dual 4-core CCX design to a single larger 8-core CCX is a key characteristic of the new Zen3 microarchitecture. Beyond aggregating the separate L3s together into a large single pool for single-threaded scenarios, the new Cezanne-based mobile SoCs also completely do away with core-to-core communications across the SoC’s Infinity Fabric, as all the cores in the system are simply housed within the one shared L3.

What’s also interesting to see here is that the new monolithic latencies aren’t quite as flat as in the previous design, with core-pair latencies varying from 16.8ns to 21.3ns. This is probably due to the much larger L3 this generation and more wire latency to cross the CCX, as well as different boost frequencies between the cores. There has been talk as to the exact nature of the L3 slices, whether they are connected in a ring or in an all-to-all scenario. AMD says it is an 'effective' all-to-all, although the exact topology isn't quite that: we have some form of mesh with links, beyond a simple ring, but not a complete all-to-all design. This will get more complex should AMD make these designs larger.

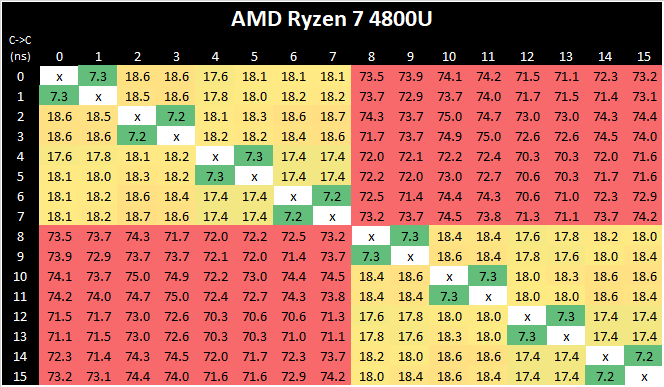

Cache-to-DRAM Latency

This is another in-house test built by Andrei, which showcases the access latency at all the points in the cache hierarchy for a single core. We start at 2 KiB, and probe the latency all the way through to 256 MB, which for most CPUs sits inside the DRAM (before you start saying 64-core TR has 256 MB of L3, it’s only 16 MB per core, so at 20 MB you are in DRAM).

Part of this test helps us understand the range of latencies for accessing a given level of cache, but also the transition between the cache levels gives insight into how different parts of the cache microarchitecture work, such as TLBs. As CPU microarchitects look at interesting and novel ways to design caches upon caches inside caches, this basic test proves to be very valuable.
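A common way to build this kind of latency sweep is pointer chasing: every load depends on the result of the previous one, and a randomized chain keeps simple prefetchers from guessing the next address. The Python sketch below shows that structure only. It is an assumption about the general technique, not a description of the in-house tool, and the names `build_chain` and `chase` are hypothetical. In Python the per-hop time is dominated by interpreter overhead, so a real tool would be written in C or assembly.

```python
import random
import time


def build_chain(n, seed=0):
    """Return a random permutation forming one cycle over n slots (Sattolo's algorithm)."""
    rng = random.Random(seed)
    nxt = list(range(n))
    for i in range(n - 1, 0, -1):
        j = rng.randrange(i)  # j < i guarantees a single cycle through all slots
        nxt[i], nxt[j] = nxt[j], nxt[i]
    return nxt


def chase(nxt, hops):
    """Follow the chain for `hops` dependent steps; return (final index, ns per hop)."""
    idx = 0
    start = time.perf_counter_ns()
    for _ in range(hops):
        idx = nxt[idx]  # each lookup depends on the previous result
    elapsed = time.perf_counter_ns() - start
    return idx, elapsed / hops


# Sweep a few working-set sizes, as a latency test would do from small to large.
for slots in (1 << 10, 1 << 14, 1 << 18):
    chain = build_chain(slots)
    _, ns_per_hop = chase(chain, hops=200_000)
    print(f"{slots:>7} slots: {ns_per_hop:.1f} ns per hop")
```

The single-cycle property matters: an ordinary shuffle can produce short loops that stay inside a small cache no matter how large the array is.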

As with the Ryzen 5000 Zen3 desktop parts, we’re seeing extremely large changes in the memory latency behaviour of the new Cezanne chip, with AMD changing almost everything about how the core works in its caches.

At the L1 and L2 regions, AMD has kept the cache sizes the same at 32KB and 512KB respectively. However, depending on the memory access pattern, the resulting latencies are very different, as the engineers are employing more aggressive adjacent cache line prefetchers as well as a brand-new cache line replacement policy.

In the L3 region from 512KB to 16 MB, the fact that we’re seeing this cache hierarchy quadrupled from the view of a single core is a major benefit for cache hit rates and will greatly benefit single-threaded performance. The actual latency in terms of clock cycles has gone up given the much larger cache structure, and AMD has also tweaked and changed the dynamic behaviour of the prefetchers in this region.

On the DRAM side of things, the most visible change is again this much more gradual latency curve, also a result of Zen3’s newer cache line replacement policy. All the systems tested here feature LPDDR4X-4266 memory, and although the new Cezanne platform has a slight advantage with the timings, it ends up with around 13ns lower latency at the same 128MB test depth into DRAM, beating the Renoir system and tying with Intel’s Tiger Lake system.

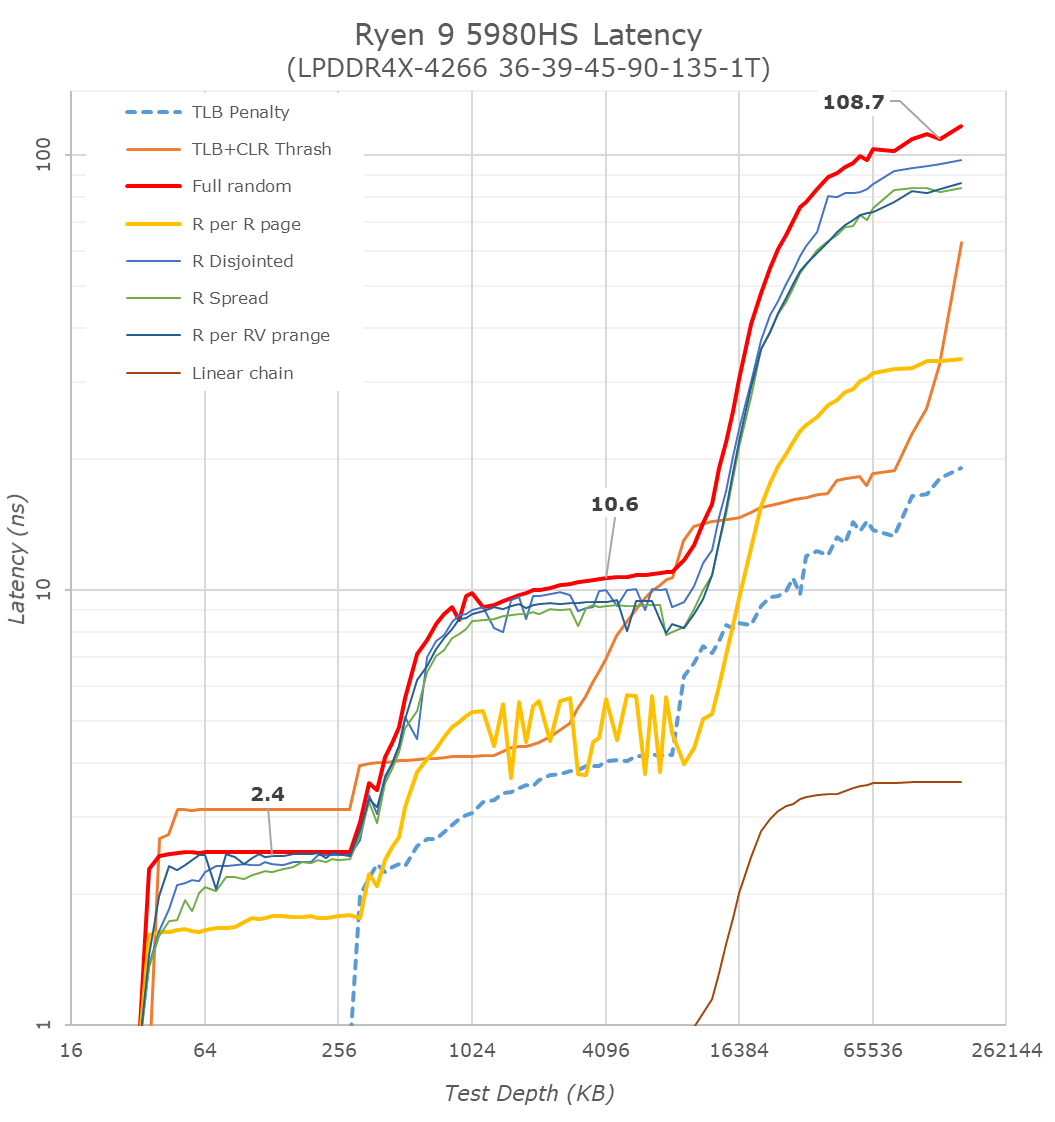

Frequency Ramping

Over the past few years, both AMD and Intel have introduced features to their processors that shorten the time it takes a CPU to move from idle into a high-powered state. This means that users can get peak performance quicker, but the biggest knock-on effect is on battery life in mobile devices: if a system can turbo up quickly and turbo down quickly, it stays in the lowest and most efficient power state for as long as possible.

Intel’s technology is called SpeedShift, although SpeedShift was not enabled until Skylake.

One of the issues though with this technology is that sometimes the adjustments in frequency can be so fast, software cannot detect them. If the frequency is changing on the order of microseconds, but your software is only probing frequency in milliseconds (or seconds), then quick changes will be missed. Not only that, as an observer probing the frequency, you could be affecting the actual turbo performance. When the CPU is changing frequency, it essentially has to pause all compute while it aligns the frequency rate of the whole core.

We wrote an extensive review analysis piece on this, called ‘Reaching for Turbo: Aligning Perception with AMD’s Frequency Metrics’, due to an issue where users were not observing the peak turbo speeds for AMD’s processors.

We got around the issue by making the frequency probing the workload causing the turbo. The software is able to detect frequency adjustments on a microsecond scale, so we can see how well a system can get to those boost frequencies. Our Frequency Ramp tool has already been in use in a number of reviews.
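The idea of making the probe itself the workload can be sketched as follows: run a fixed chunk of work back to back, timestamp every chunk, and read the clock ramp from how the chunk duration shrinks over time. This is a rough Python illustration under that assumption, not the actual Frequency Ramp tool. The names `fixed_work`, `sample_ramp`, and `relative_speed` are hypothetical, and each chunk here takes far longer than the microsecond-scale steps described above.

```python
import time


def fixed_work(iterations=20_000):
    """A fixed amount of integer work; it finishes faster as the core clock rises."""
    acc = 0
    for i in range(iterations):
        acc += i
    return acc


def sample_ramp(samples=200):
    """Run fixed_work back to back; return (start time in us, duration in us) pairs."""
    trace = []
    t0 = time.perf_counter_ns()
    for _ in range(samples):
        begin = time.perf_counter_ns()
        fixed_work()
        end = time.perf_counter_ns()
        trace.append(((begin - t0) / 1000, (end - begin) / 1000))
    return trace


def relative_speed(trace):
    """Express each chunk as speed relative to the fastest chunk seen (1.0 = fastest)."""
    fastest = min(duration for _, duration in trace)
    return [(start, fastest / duration) for start, duration in trace]


ramp = relative_speed(sample_ramp())
print(ramp[:3], ramp[-3:])
```

On a system that starts from idle, the first entries would be expected to show a lower relative speed than the later ones, although scheduler noise and interpreter overhead can easily mask this in Python.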

Our frequency ramp showcases that AMD does indeed ramp up from idle to a high speed within 2 milliseconds as per CPPC2. Mind you, it does take another frame at 60 Hz (16 ms) to go up to the full turbo of the processor.

218 Comments


Meteor2 - Thursday, February 4, 2021 - link

Great point.

ikjadoon - Tuesday, January 26, 2021 - link

It's great to see AMD kicking Intel's butt in a much larger market (i.e., laptops vastly outsell desktops): AMD really should be alongside, or simply replacing, Intel in most premium notebooks. Gaming notebooks are not my cup of tea, but glad to see for upcoming 15W Zen3 parts.

Will we see actual, high-end Zen3 notebooks? Lenovo, HP, ASUS, Dell: for shame if you keep ramming toasty Tiger Lake down customers' throats. Lenovo's done some great offerings with both AMD & Intel; that means some compromises with notebook design (just go all AMD, man; if/when Intel is on top, switch back!), but beefier cooling for Intel will also help AMD.

Still, overall, I don't see anything convincing me that x86 is really right for notebooks, either. So much waste heat...for what? The M1 has rightly rejiggered expectations: 20 hours on 150 nits should be ordinary, not miraculous. Limited to no fan spin-up and max CPU load should yield a chassis maximum of 40C (slightly warmer than body temperature). And, all the while with class-leading 1T performance.

As this is a gaming laptop, it's not too relevant to compare web benchmarks (what most laptops do), but this is peak Zen3 mobile and it still falls quite short:

Speedometer 2.0

35W Ryzen 5980HS: 102 points (-57%)

125W i9-10900K: 119 points (-49%)

35W i7-1185G7: 128 points (-46%)

105W Ryzen 5950X: 140 points (-40%)

30W Apple M1: 234 points

You can double / triple x86 wattage and still be miles behind M1. I almost feel silly buying an x86 laptop again: just kilowatts of waste heat over time. Why? Electrons that never get used, just exhausted and thrown out as soon as possible because it'll throttle even worse otherwise.

undervolted_dc - Tuesday, January 26, 2021 - link

because you here are benchmarking javascript engine in the browser

but not being enough you are comparing those in single thread so here you are comparing 1/16 of the 5950hs vs 1/4 of the m1

a 128core epyc or a 64core threadripper probably will be even worse in this single threaded benchmark ( because those are leveraging threads and are less efficient in single threaded app )

if you like wrong calculations then 1 core of the 15w version use less than 1w for what result ? ~ 100 points ? so who is wasting electrons here ?

( btw 1 core doesn't use 1/16 because there are boosts , but it's even less wrong than your comparison )

ZoZo - Tuesday, January 26, 2021 - link

128-core EPYC? Where?

His comparison is indeed misleading in terms of energy efficiency, but it's sad that no x86 is able to come even close to that single-threaded performance.

WaltC - Tuesday, January 26, 2021 - link

Doubly sad for the M1 that we are living in the multicore/multithread era...;)

ikjadoon - Tuesday, January 26, 2021 - link

The energy efficient comparisons are pretty clear: the best x86 (Zen3) has stunningly lower IPC than M1, which barely cracks 3 GHz. The only way to make up for such a gulf in IPC is faster clocks. Faster clocks require the 100+W TDPs so common in high-performance desktop CPUs. It's why Zen3 mobile clocks so much lower than Zen3 desktop (3-4 GHz instead of 4-5 GHz)

A CPU that needs 3x power to do the same work (and do it slower in most cases) must exhaust an enormous amount of heat, when considering nT or 1T benchmarks (Zen3 requires ~20W for 5 GHz boost on a *single* core). Look at those boost power consumption measurements.

Specifically in desktops (noted in my comparison about tripling TDP...), the CPU *alone* eats up an extra 60 to 90 watts during peak usage. Call it +20W average continuously, so we can do the math.

20W x 8 hours x 7 days a week = +1.1 kWh excess exhaust heat per week. x86 had two corporate giants to do better. It's been severely litigated, but that's Intel's comeuppance. If Intel can't put out high-perf, high-efficiency x86 architectures, then people will start to feel less attached to x86 as an ISA. x86 had billions and billions and billions of R&D.

I see no reason for consumers to religiously follow x86 Wintel or Wintel-clones in laptops especially, but desktops, too: where is the efficiency going to be coming from? Even if Apple *had flat 1T* for the next three years, I'd still feel more optimistic about M1-based CPUs in the long-term than x86.

Dug - Tuesday, January 26, 2021 - link

"I see no reason for consumers to religiously follow x86 Wintel or Wintel-clones in laptops especially, but desktops, too: where is the efficiency going to be coming from?"

Software, and getting work done. M1 is great and all, but just need to convince the boss that Apple or 3rd party has software available for our company....... Nope, oh well.

Other negatives-

For personal use, people aren't going to spend thousands of dollars to get new software on new platform.

They can't play games (or should I say they can't play a majority), which is probably the largest market.

They can't change anything about their software

They can't customize anything.

They can't upgrade any piece of their hardware.

They don't have options for same accessories.

So I'll go ahead and spend the extra $15 a year on energy to keep Windows.

Spunjji - Thursday, January 28, 2021 - link

"A CPU that needs 3x power to do the same work"

It doesn't. It's been demonstrated a few times now that if you scale back Zen 3 cores to similar performance levels to M1, M1's perf/watt advantage drops to about 30%. It's still better than the node advantage alone, but it's not crippling, and M1 is simply not capable of scaling up to the clock speeds required to match x86 on desktop / HPC workloads.

They're different core designs matched to different purposes (ultra-mobile first vs. server first) and show different strengths as a result.

M1 is a significant achievement - no doubt about it - but you're *massively* overstating the case in its favour.

GeoffreyA - Friday, January 29, 2021 - link

Thank you for this.

Meteor2 - Thursday, February 4, 2021 - link

"M1 is simply not capable of scaling up to the clock speeds required to match x86 on desktop / HPC workloads" ...Yet. In a couple of years x86 will be behind ARM across the board.

Fastest HPC in the world is ARM *right now*. Only the fifth fastest is x86.