The Samsung 980 PRO PCIe 4.0 SSD Review: A Spirit of Hope

by Billy Tallis on September 22, 2020 11:20 AM EST

Whole-Drive Fill

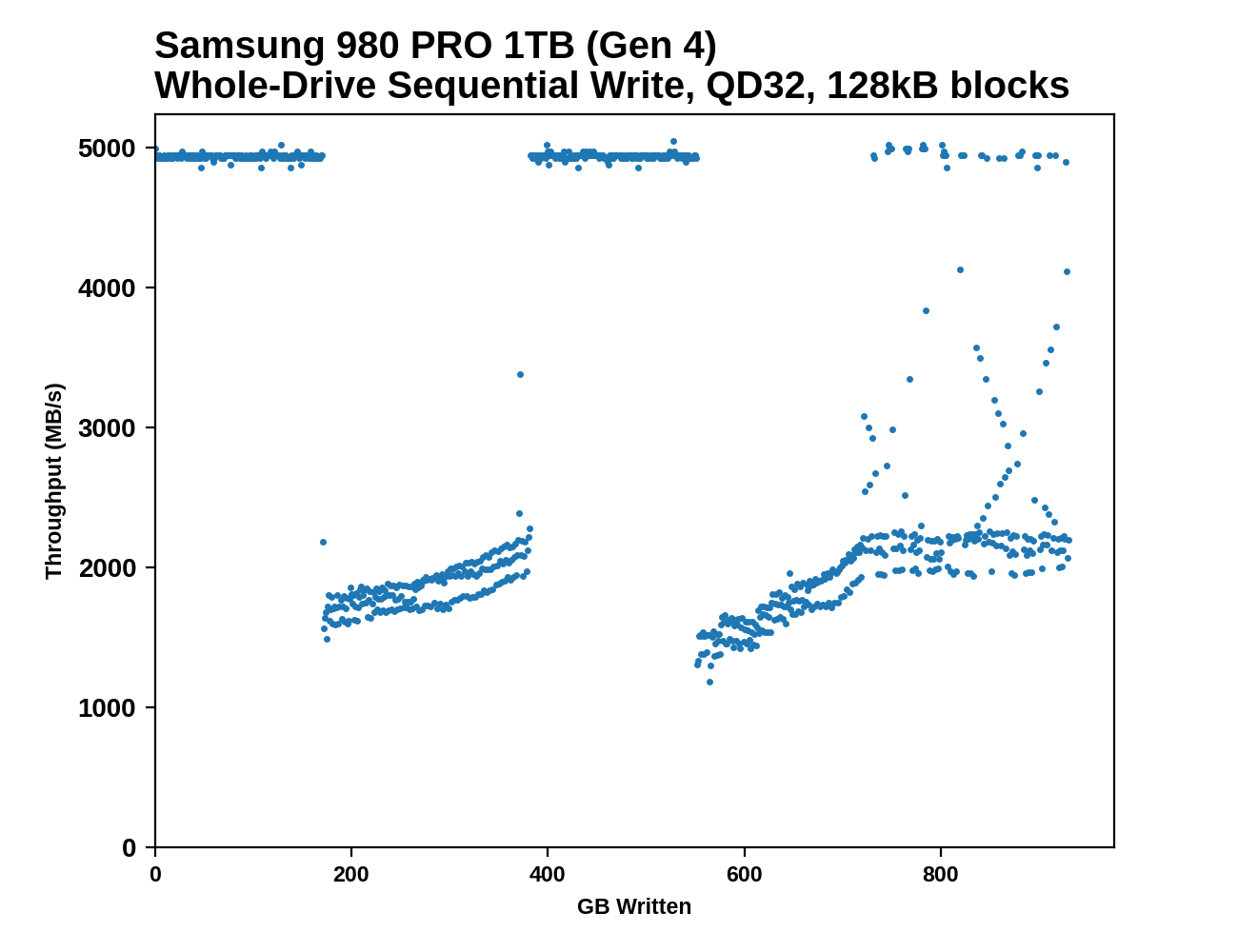

This test starts with a freshly-erased drive and fills it with 128kB sequential writes at queue depth 32, recording the write speed for each 1GB segment. This test is not representative of any ordinary client/consumer usage pattern, but it does allow us to observe transitions in the drive's behavior as it fills up. This can allow us to estimate the size of any SLC write cache, and get a sense for how much performance remains on the rare occasions where real-world usage keeps writing data after filling the cache.
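As a rough illustration of how a per-segment log from this kind of test can be turned into a cache size estimate, here is a minimal Python sketch. The function name, the 70% drop threshold, and the synthetic trace are all assumptions made for illustration; they are not the tooling or the data used for this review.

```python
from typing import List


def estimate_cache_gb(speeds_mbps: List[float], drop_ratio: float = 0.7) -> int:
    """Count the leading 1GB segments written before speed falls off.

    speeds_mbps holds one average write speed per 1GB segment, in order.
    The cache is assumed to be exhausted at the first segment whose speed
    drops below drop_ratio times the speed of the very first segment.
    """
    if not speeds_mbps:
        return 0
    baseline = speeds_mbps[0]
    for index, speed in enumerate(speeds_mbps):
        if speed < baseline * drop_ratio:
            return index  # segments 0..index-1 ran at cache speed
    return len(speeds_mbps)  # no drop seen: the cache outlasted the trace


# Synthetic trace, NOT measured data: 170 segments at 5000 MB/s followed by
# 300 segments at a placeholder post-cache speed of 2000 MB/s.
trace = [5000.0] * 170 + [2000.0] * 300
print(estimate_cache_gb(trace))  # prints 170
```

A fixed threshold against the first segment is the simplest possible detector. A real log is noisier, so smoothing over a few segments before comparing would likely be needed.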

Both tested capacities of the 980 PRO perform more or less as advertised at the start of the test: 5GB/s writing to the SLC cache on the 1TB model and 2.6GB/s writing to the cache on the 250GB model. The 1TB model only hits 3.3GB/s when in PCIe 3.0 mode. Surprisingly, the apparent size of the SLC caches is larger than advertised, and larger when testing on PCIe 4.0 than on PCIe 3.0: the 1TB model's cache (rated for 114GB) lasts about 170GB at Gen4 speeds and about 128GB at Gen3 speeds, and the 250GB model's cache (rated for 49GB) lasts for about 60GB on Gen4 and about 49GB on Gen3. If anything, the rated cache sizes seem to describe PCIe 3.0 operation better than PCIe 4.0. Under PCIe 4.0, the drive may get a chance to free up some of the SLC while it is still writing to other SLC, which would explain the increase.

An extra twist for the 1TB model is that partway through the drive fill process, performance returns to SLC speeds and stays there just as long as it did initially: another 170GB written at 5GB/s (124GB written at 3.3GB/s on Gen3). Looking back at the 970 EVO Plus and 970 EVO we can see similar behavior, but it's impressive that Samsung was able to continue this with the 980 PRO while providing much larger SLC caches. In total, over a third of the drive fill process ran at the 5GB/s SLC speed, and performance in the TLC writing phases was still good in spite of the background work to flush the SLC cache.
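The "over a third" figure follows from simple arithmetic on the burst sizes quoted above. A quick check in Python, assuming the 1TB model exposes roughly 1000GB of usable space (an assumption for the sake of the calculation, not a measured value):

```python
from typing import Sequence


def slc_fraction(burst_gb: Sequence[float], capacity_gb: float) -> float:
    """Fraction of a full-drive fill that was written at SLC cache speed."""
    return sum(burst_gb) / capacity_gb


# Two SLC-speed bursts per fill, using the approximate sizes from the text.
CAPACITY_GB = 1000  # assumed usable capacity of the 1TB model
gen4 = slc_fraction([170, 170], CAPACITY_GB)
gen3 = slc_fraction([128, 124], CAPACITY_GB)

print(f"Gen4: {gen4:.1%} of the fill at SLC speed")  # Gen4: 34.0% ...
print(f"Gen3: {gen3:.1%} of the fill at SLC speed")  # Gen3: 25.2% ...
```

On these numbers the Gen4 run clears the one-third mark, while the Gen3 run lands closer to a quarter.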

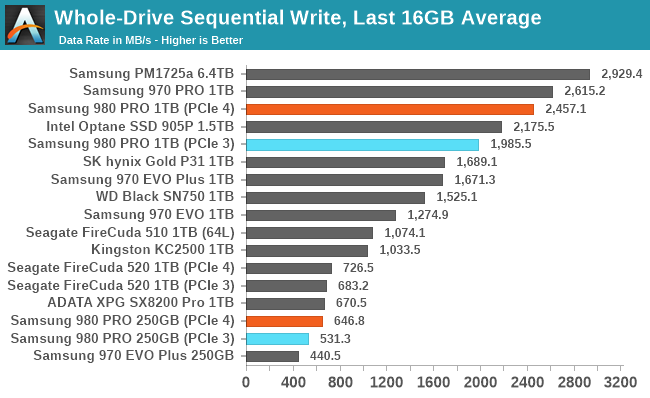

Charts: Average Throughput for last 16 GB; Overall Average Throughput

On the Gen4 testbed, the overall average throughput of filling the 1TB 980 PRO is only slightly slower than filling the MLC-based 970 PRO, and far faster than the other 1TB TLC drives. Even when limited by PCIe Gen3, the 980 PRO's throughput remains in the lead. The smaller 250GB model doesn't make good use of PCIe Gen4 bandwidth during this sequential write test, but it is a clear improvement over the same capacity of the 970 EVO Plus.

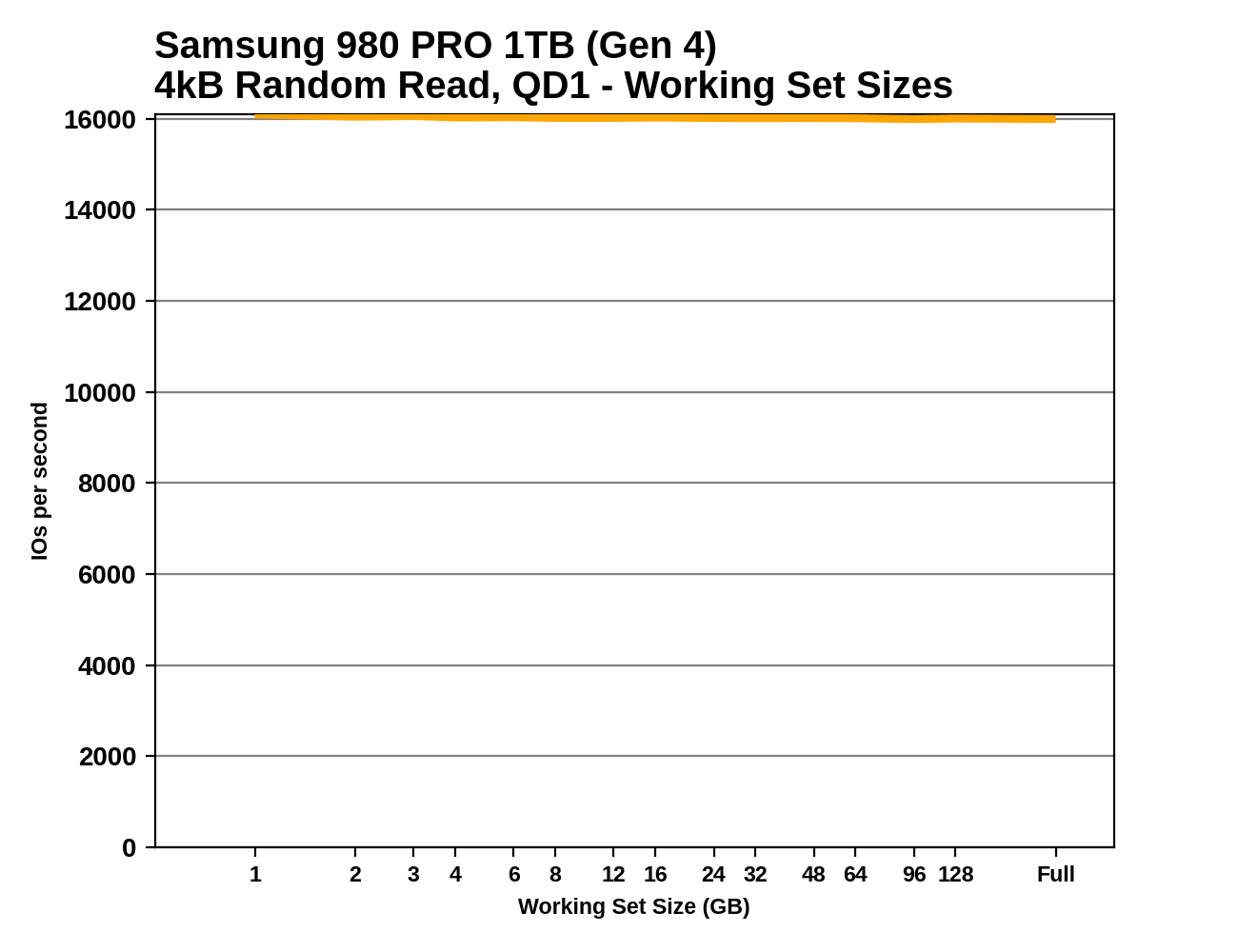

Working Set Size

Most mainstream SSDs have enough DRAM to store the entire mapping table that translates logical block addresses into physical flash memory addresses. DRAMless drives only have small buffers to cache a portion of this mapping information. Some NVMe SSDs support the Host Memory Buffer feature and can borrow a piece of the host system's DRAM for this cache rather than needing lots of on-controller memory.
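To get a feel for the sizes involved, the sketch below works through the arithmetic under a common rule-of-thumb layout: one 4-byte entry per 4KiB logical page. That layout, the function names, and the 64MiB buffer size are assumptions for illustration only; none of them is a stated specification of any drive in this review.

```python
PAGE_BYTES = 4096   # assumed mapping granularity (4KiB logical pages)
ENTRY_BYTES = 4     # assumed size of one mapping table entry


def mapping_table_bytes(capacity_bytes: int) -> int:
    """Size of a flat mapping table covering the whole drive."""
    pages = -(-capacity_bytes // PAGE_BYTES)  # ceiling division
    return pages * ENTRY_BYTES


def covered_bytes(buffer_bytes: int) -> int:
    """How much of the drive a mapping cache of buffer_bytes can describe."""
    return (buffer_bytes // ENTRY_BYTES) * PAGE_BYTES


full_table = mapping_table_bytes(10**12)  # a 1TB (decimal) drive
small_cache = covered_bytes(64 * 2**20)   # hypothetical 64MiB buffer

print(f"Full table for 1TB: {full_table / 1e6:.0f} MB")
print(f"64MiB of mappings covers: {small_cache / 2**30:.0f} GiB")
```

Under these assumptions a full table needs close to 1GB of DRAM per 1TB of flash, while a small buffer can only describe a slice of the drive at any one time.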

When accessing a logical block whose mapping is not cached, the drive needs to read the mapping from the full table stored on the flash memory before it can read the user data stored at that logical block. This adds extra latency to read operations and in the worst case may double random read latency.

We can see the effects of the size of any mapping buffer by performing random reads from different sized portions of the drive. When performing random reads from a small slice of the drive, we expect the mappings to all fit in the cache, and when performing random reads from the entire drive, we expect mostly cache misses.

When performing this test on mainstream drives with a full-sized DRAM cache, we expect performance to be generally constant regardless of the working set size, or for performance to drop only slightly as the working set size increases.
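The expected shape of the results can be sketched with a simple steady-state model. It assumes uniformly random reads, a mapping cache that holds the mappings for a fixed span of the drive, and a miss penalty equal to one extra flash read (the worst case described above). The function name and the 60µs base latency are placeholders, not measurements from this review.

```python
def expected_read_latency_us(
    working_set_gb: float,
    cached_span_gb: float,
    flash_read_us: float = 60.0,  # placeholder base read latency
) -> float:
    """Average random read latency under a simple hit/miss model.

    A hit costs one flash read. A miss costs two: one to fetch the
    mapping from flash, then one to fetch the user data.
    """
    hit_rate = min(1.0, cached_span_gb / working_set_gb)
    miss_rate = 1.0 - hit_rate
    return flash_read_us * (1.0 + miss_rate)


# Hypothetical DRAMless drive whose buffer covers 64GB worth of mappings.
for working_set in (1, 64, 128, 1000):
    latency = expected_read_latency_us(working_set, cached_span_gb=64)
    print(f"{working_set:>5} GB working set: {latency:.1f} us")

# Drive with a full-sized DRAM cache: the whole 1000GB is always covered.
print(expected_read_latency_us(1000, cached_span_gb=1000))  # prints 60.0
```

In this model latency stays flat until the working set outgrows the cached span, then climbs toward double the base latency. A drive with a full-sized DRAM cache never leaves the flat region, which matches the expectation stated above.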

Since these are all high-end drives, we don't see any of the read performance drop-off we expect from SSDs with limited or no DRAM buffers. The two drives using Silicon Motion controllers show a little bit of variation depending on the working set size, but ultimately are just as fast when performing random reads across the whole drive as they are reading from a narrow range. The read latency measured here for the 980 PRO is an improvement of about 15% over the 970 EVO Plus, but is not as fast as the MLC-based 970 PRO.

137 Comments


Billy Tallis - Thursday, September 24, 2020 - link

The reviewers guide indicated that Samsung plans to continue using the PRO/EVO/QVO branding, but they don't have any new EVO or QVO drives to announce at this time. That's part of why I expect the EVO to continue on as a more entry-level TLC tier, without switching to QLC.

Luke212 - Wednesday, September 23, 2020 - link

Terrible release. No faster after all this time. And to be outclassed by E18 Phison

jtester - Wednesday, October 14, 2020 - link

how is it not faster when on most charts it's well above the last generation? Also, why is MLC going to matter when the 1tb MLC has the same endurance as a 2tb evo plus? So is there no point in anyone having a 970 pro, regardless of their use case?

I can either have a 1tb 970 pro for $240 after various discounts and credits or I can have a 2tb 970 evo plus for $270 or a 1tb 980 pro for $190. So how to decide? I already have a 1tb 970 evo plus, but haven't built yet and want at least 2 or 3 samsung drives.

Tom Sunday - Wednesday, September 23, 2020 - link

I just recently purchased a pair of Samsung 2TB 970 EVO Plus NVMe's to basically upgrade my older PC. They are super fast at 3500 MB/s sequential read speed. It's particularly felt in the booting-up process for sure. I question the necessity of the 980 PRO PCIe 4.0 because how fast does one really needs to go. I would think that for a few seconds here and there it's not worth it to graduate up again to the 4.0 world.

Oxford Guy - Thursday, September 24, 2020 - link

"I question the necessity of the 980 PRO PCIe 4.0 because how fast does one really needs to go."

That's not the point here. The point here is that the "980 Pro" is an EVO masquerading as a Pro. As others have said, it's likely a maneuver to foist QLC junk as the "upgrade" to the EVO line.

Luckz - Thursday, September 24, 2020 - link

To validate your faster booting process claim, did you compare your "super fast 3500 MB/s sequential read speed" to a cheaper 2000 MB/s drive like an A2000, or merely to a HDD from ancient times? And if it's "a pair" and an "older PC", you're going to run out of PCIe lanes anyway, unless it's a reasonably beefy HEDT or you don't (really) need a graphics card.

im.thatoneguy - Thursday, September 24, 2020 - link

Thank you for testing last 16GB stats. As someone who uses Nvme drives for offloading TBs of video footage at a time this is actually really helpful to my workflows.

Any reason though why there are no Sabrent drives benchmarked? They seem to be the most popular and best selling on Amazon. Seems like a good test to see if they are a value or if they are just cheap.

Billy Tallis - Thursday, September 24, 2020 - link

Sabrent drives are mostly or all Phison reference designs, sometimes with a custom heatsink. So their Rocket and Rocket 4.0 are basically equivalent to the Seagate drives featured in this review.

I do have the 8TB Sabrent Rocket Q, but paused testing of that to work on this review. The 8TB review will probably be my next one finished, but those big drives take a while to test.

clieuser - Sunday, September 27, 2020 - link

How fast the 980 pro with PCIE 3.0, not with PCIE 4.0?

ballsystemlord - Tuesday, September 29, 2020 - link

Spelling error:

"and thus having optimized products to go along with it has always been the case as new generations trump the told."

Excess "t":

"and thus having optimized products to go along with it has always been the case as new generations trump the old."

I'll read the rest when the benchmarks are completed.