Intel’s Tiger Lake 11th Gen Core i7-1185G7 Review and Deep Dive: Baskin’ for the Exotic

by Dr. Ian Cutress & Andrei Frumusanu on September 17, 2020 9:35 AM EST- Posted in

- CPUs

- Intel

- 10nm

- Tiger Lake

- Xe-LP

- Willow Cove

- SuperFin

- 11th Gen

- i7-1185G7

- Tiger King

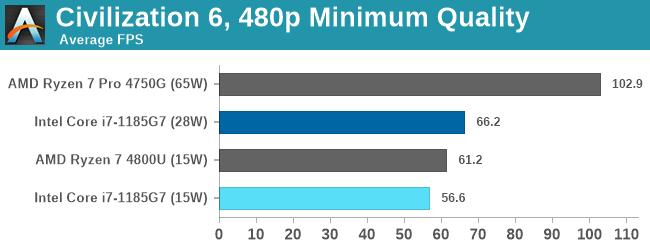

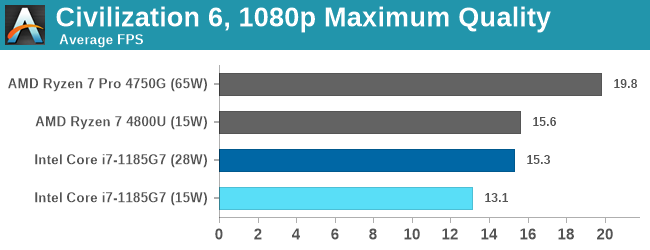

Xe-LP GPU Performance: Civilization VI

Originally penned by Sid Meier and his team, the Civilization series of turn-based strategy games is a cult classic, and many an excuse for an all-nighter trying to get Gandhi to declare war on you due to an integer underflow. Truth be told I never actually played the first version, but I have played every edition from the second to the sixth, including the fourth as voiced by the late Leonard Nimoy, and it is a game that is easy to pick up, but hard to master.

Benchmarking Civilization has always been somewhat of an oxymoron – for a turn-based strategy game, the frame rate is not necessarily the important thing, and in the right mood even something as low as 5 frames per second can be enough. With Civilization 6 however, Firaxis went hardcore on visual fidelity, trying to pull you into the game. As a result, Civilization can be taxing on graphics and CPUs as we crank up the details, especially in DirectX 12.

Civ6 is a game that enjoys lots of CPU performance, so we can see the desktop APU out front here. The eight cores of the 4800U get ahead of the 15 W version of Tiger Lake in both of our tests, although the 28 W power mode gets an 8% lead in the CPU-limited test.

253 Comments


blppt - Saturday, September 26, 2020 - link

Sure, the box sitting right next to my desk doesn't exist. Nor the 10 or so AMD cards I've bought over the past 20 years.

1 5970

2 7970s (for CFX)

1 Sapphire 290x (BF4 edition, ridiculously loud under load)

2 XFX 290 (much better cooler than the BF4 290x; mistakenly bought when I thought it would accept a flash to 290x, got the wrong builds, for CFX)

2 290x 8gb sapphire custom edition (for CFX, much, much quieter than the 290x)

1 Vega 64 watercooled (actually turned out to be useful for a Hackintosh build)

1 5700xt stock edition

Yeah, I just made this stuff up off the top of my head. I guarantee I've had more experience with AMD videocards than the average gamer. Remember the separate CFX CAP profiles? I sure do.

So please, tell me again how I'm only a Nvidia owner.

Santoval - Sunday, September 20, 2020 - link

If the top-end Big Navi is going to be 30-40% faster than the 2080 Ti then the 3080 (and later on the 3080 Ti, which will fit between the 3080 and the 3090) will be *way* beyond it in performance, in a continuation of the status quo of the last several graphics card generations. In fact it will be even worse this generation, since Big Navi needs to be 52% faster than the 2080 Ti to even match the 3070 in FP32 performance.

Sure, it might have double the memory of the 3070, but how much will that matter if it's going to be 15-20% slower than a supposed "lower grade" Nvidia card? In other words "30-40% faster than the 2080 Ti" is not enough to compete with Ampere.

By the way, we have no idea how well Big Navi and the rest of the RDNA2 cards will perform in ray-tracing, but I am not sure how that matters to most people. *If* the top-end Big Navi has 16 GB of RAM, it costs just as much as the 3070 and is slightly (up to 5-10%) slower than it in FP32 performance but handily outperforms it in ray-tracing performance then it might be an attractive buy. But I doubt any margins will be left for AMD if they sell a 16 GB card for $500.

If it is 15-20% slower and costs $100 more, no one but those who absolutely want 16 GB of graphics RAM will buy it; and if the top-end card only has 12 GB of RAM there goes the large memory incentive as well.

Spunjji - Sunday, September 20, 2020 - link

@Santoval, why are you speaking as if the 3080's performance characteristics are not already known? We have the benchmarks in now.

More importantly, why are you making the assumption that AMD need to beat Nvidia's theoretical FP32 performance when it was always obvious (and now extremely clear) that it has very little bearing on the product's actual performance in games?

The rest of your speculation is knocked out of whack by that. The likelihood of an 80CU RDNA 2 card underperforming the 3070 is nil. The likelihood of it underperforming the 3080 (which performs like twice a 5700, non-XT) is also low.

Byte - Monday, September 21, 2020 - link

Nvidia probably has a good idea how it performs with access to PS5/Xbox, they know they had to be aggressive this round with clock speeds and pricing. As we can see 3080 is almost maxed, o/c headroom like that of AMD chips, and price is reasonably decent, in line with 1080 launch prices before minepocalypse.

TimSyd - Saturday, September 19, 2020 - link

Ahh don't ya just love the fresh smell of TROLL

evernessince - Sunday, September 20, 2020 - link

The 5700XT is RDNA1 and it's 1/3rd the size of the 2080 Ti. 1/3rd the size and only 30% less performance. Now imagine a GPU twice the size of the 5700XT, thus having twice the performance. Now add in the node shrink and new architecture.

I wouldn't be surprised if the 6700XT beat the 2080 Ti, let alone AMD's bigger Navi 2 GPUs.

Cooe - Friday, December 25, 2020 - link

Hahahaha. "Only matching a 2080 Ti". How's it feel to be an idiot?

tipoo - Friday, September 18, 2020 - link

I'd again ask you why a laptop SoC would have an answer for a big GPU. That's not what this product is.

dotjaz - Friday, September 18, 2020 - link

"This Intel Tiger" doesn't need an answer for Big Navi, no laptop chip needs one at all. Big Navi is 300W+, no way it's going in a laptop.

RDNA2+ will trickle down to mobile APU eventually, but we don't know if Van Gogh can beat TGL yet, I'm betting not because it's likely a 7-15W part with weaker Quadcore Zen2.

Proper RDNA2+ APU won't be out until 2022/Zen4. By then Intel will have the next gen Xe.

Santoval - Sunday, September 20, 2020 - link

Intel's next gen Xe (in Alder Lake) is going to be a minor upgrade to the original Xe. Not a redesign, just an optimization to target higher clocks. The optimization will largely (or only) happen at the node level, since it will be fabbed with second gen SuperFin (formerly 10nm+++), which is supposed to be (assuming no further 7nm delays) Intel's last 10nm node variant.

How well that will work, and thus how well 2nd gen Xe will perform, will depend on how high Intel's 2nd gen SuperFin will clock. At best 150-200 MHz higher clocks can probably be expected.